The machine learning industry has evolved rapidly over the past decade, and frameworks like PyTorch, TensorFlow, and Keras have become central to how modern AI systems are built. For anyone aiming to enter this field, understanding these tools is not just about learning syntax or libraries, but about understanding how companies actually build, deploy, and scale intelligent systems. Employers are no longer impressed by theoretical knowledge alone; they want practical ability to work with tools that are actively used in production environments. This is where the choice of framework becomes strategically important for career growth.

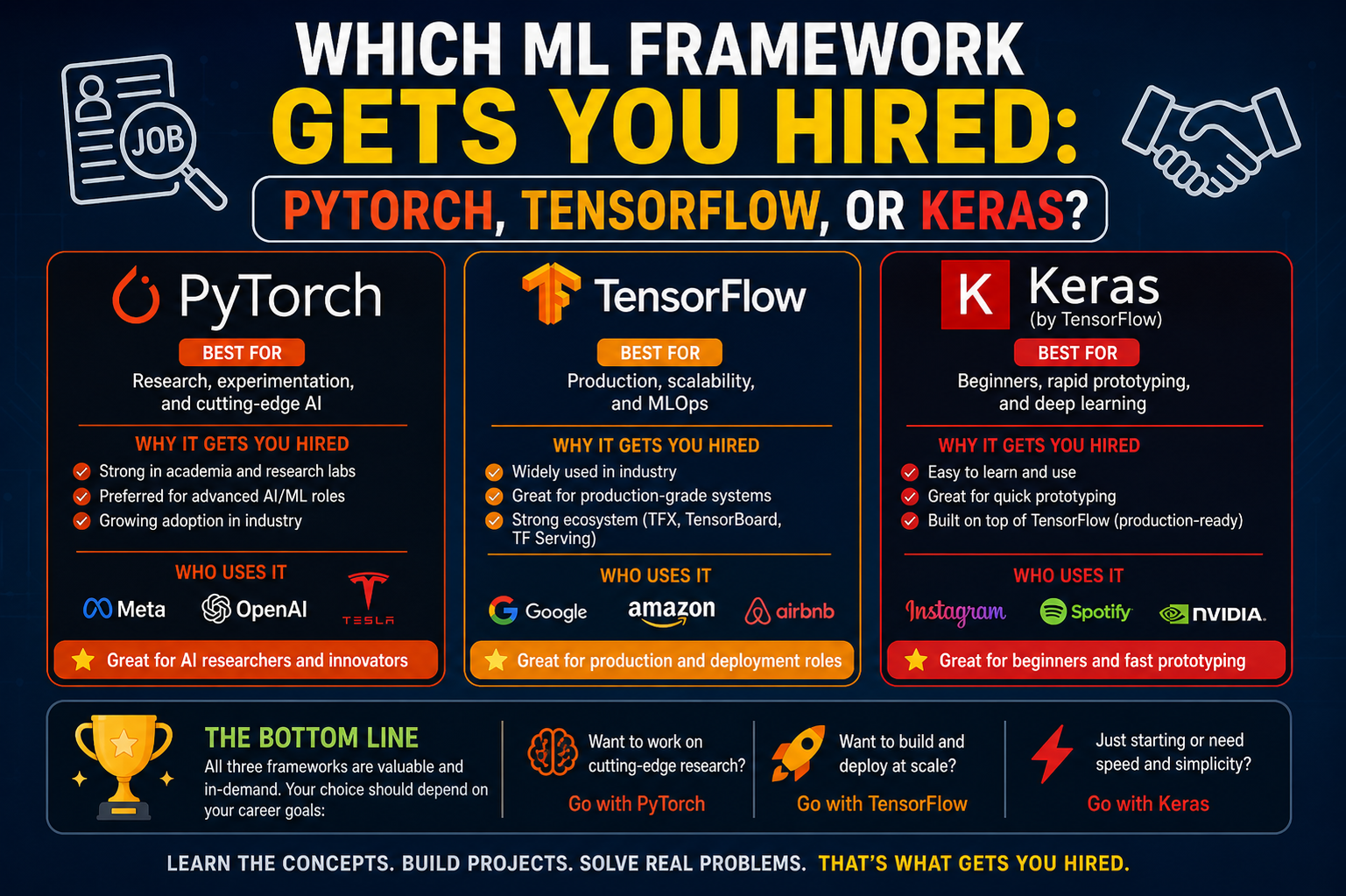

Each framework has developed its own ecosystem, community, and industry adoption pattern. PyTorch is strongly associated with research and innovation-driven teams, TensorFlow is widely used in enterprise-scale production systems, and Keras is often seen as the gateway for beginners entering deep learning. However, the boundaries between them are not rigid. Modern machine learning engineers are often expected to be adaptable across multiple frameworks, even if they specialize in one.

Why Framework Choice Matters for Employment Opportunities

When recruiters evaluate candidates in machine learning roles, they often look for signals of practical readiness. One of those signals is familiarity with frameworks used in their organization. A candidate who understands PyTorch may be preferred in a research-heavy AI lab, while another who has experience deploying TensorFlow models may be more suitable for a production engineering team.

The framework you choose also influences the kind of projects you work on while learning. PyTorch tends to encourage experimentation and academic-style exploration, while TensorFlow emphasizes structured pipelines and deployment readiness. Keras simplifies the learning curve, allowing beginners to focus on concepts rather than low-level implementation details. Because of this, your framework choice can shape not only your resume but also your thinking style as an AI practitioner.

PyTorch and Its Strong Position in Research and Innovation Roles

PyTorch has become extremely popular in the machine learning community due to its flexibility and developer-friendly design. One of its key strengths is its dynamic (define-by-run) computation graph: the graph is built as the Python code executes, so ordinary control flow such as loops and conditionals can change a network's structure from one input to the next. This makes it highly suitable for research environments where experimentation is constant and models are frequently adjusted.
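To make the idea of dynamic computation concrete, here is a toy sketch in plain Python. It is not PyTorch's implementation; the `Value` class and the `forward` function are hypothetical names invented for this illustration. It only shows the principle: the graph is recorded while the code runs, so an `if` statement can produce a different graph for each input.

```python
class Value:
    """A scalar that records the operations applied to it (toy autodiff)."""

    def __init__(self, data, parents=(), local_grads=()):
        self.data = data
        self.grad = 0.0
        self._parents = parents          # Values this one was computed from
        self._local_grads = local_grads  # d(self)/d(parent) for each parent

    def __add__(self, other):
        other = other if isinstance(other, Value) else Value(other)
        return Value(self.data + other.data, (self, other), (1.0, 1.0))

    def __mul__(self, other):
        other = other if isinstance(other, Value) else Value(other)
        return Value(self.data * other.data, (self, other),
                     (other.data, self.data))

    def backward(self):
        # Topologically order the graph recorded during the forward pass.
        order, seen = [], set()

        def visit(node):
            if id(node) not in seen:
                seen.add(id(node))
                for parent in node._parents:
                    visit(parent)
                order.append(node)

        visit(self)
        self.grad = 1.0
        # Apply the chain rule from the output back to the inputs.
        for node in reversed(order):
            for parent, local in zip(node._parents, node._local_grads):
                parent.grad += local * node.grad


def forward(x, w):
    """Ordinary Python control flow decides which graph gets built."""
    h = x * w
    if h.data > 0:       # branch chosen per input, at run time
        return h * h     # graph for this input: (x * w) ** 2
    return h * 0.1       # graph for this input: 0.1 * x * w


for x0 in (3.0, -3.0):
    x, w = Value(x0), Value(2.0)
    out = forward(x, w)
    out.backward()
    print(x0, out.data, w.grad)
```

The two inputs take different branches, and the gradient with respect to `w` follows whichever graph was actually built. Frameworks with dynamic graphs apply the same idea to tensors rather than scalars.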

In recent years, many leading AI research labs and tech companies have adopted PyTorch as their primary framework for developing new models. This includes work in natural language processing, computer vision, and generative AI systems. The ease of debugging and intuitive Pythonic structure make it especially appealing for data scientists and researchers who prioritize speed of iteration.

From a hiring perspective, PyTorch skills are often associated with cutting-edge AI roles. Companies working on advanced technologies such as large language models, recommendation systems, and reinforcement learning frequently list PyTorch as a preferred or required skill. This means that mastering PyTorch can position you well for roles that involve innovation, experimentation, and research-heavy responsibilities.

However, PyTorch alone is not always sufficient for enterprise deployment scenarios. While it has improved significantly in production capabilities, some organizations still rely on more mature deployment pipelines built around other frameworks. This is why many professionals eventually expand their skills beyond PyTorch.

TensorFlow and Its Dominance in Production-Scale Systems

TensorFlow remains one of the most widely used machine learning frameworks in the industry, particularly in large-scale production environments. It was designed with scalability in mind, making it suitable for deploying models across distributed systems, cloud platforms, and mobile devices. This production-first mindset is one of the reasons it continues to be heavily used in enterprise settings.

Many global companies use TensorFlow to power real-world applications such as search engines, recommendation systems, fraud detection, and image recognition tools. Its ecosystem includes tools like TensorFlow Serving for serving models behind an API and TensorFlow Lite (since renamed LiteRT) for mobile and edge devices. This makes TensorFlow particularly valuable for machine learning engineers focused on deployment and system integration.

From a hiring perspective, TensorFlow is often associated with roles that require strong engineering skills rather than purely research-oriented work. Employers value candidates who can build reliable pipelines, optimize performance, and maintain models in production environments. Knowledge of TensorFlow signals that a candidate is capable of handling real-world constraints such as latency, scalability, and system reliability.

While TensorFlow has historically been seen as more complex than PyTorch, TensorFlow 2.x improved usability significantly by making eager execution the default. Its adoption of Keras as the standard high-level API has also made development more accessible, reducing the learning curve for new developers entering the field.

Keras as the Entry Point into Deep Learning

Keras is widely recognized as a beginner-friendly framework that simplifies the process of building neural networks. It provides a high-level interface that abstracts much of the complexity involved in deep learning implementation. This allows learners to focus on understanding core concepts such as layers, activation functions, and optimization without being overwhelmed by low-level details.

Because Keras ships with TensorFlow as tf.keras, it acts as a bridge between simplicity and power. Since version 3, Keras can also run on JAX and PyTorch backends, so the same model code is not tied to a single framework. Beginners often start with Keras to build foundational knowledge before transitioning into more advanced TensorFlow workflows. This makes it an excellent educational tool as well as a practical framework for rapid prototyping.

In terms of employment, Keras alone is rarely the deciding factor for hiring in advanced machine learning roles. However, it plays an important role in the learning journey. Many professionals who are now working in AI engineering roles began their journey with Keras due to its simplicity. It helps build confidence and provides a smooth introduction to neural network design.

For entry-level positions, internships, or academic projects, Keras knowledge can still be beneficial. It demonstrates that a candidate understands basic deep learning concepts and can build functional models quickly. However, as career expectations increase, employers typically expect candidates to move beyond Keras into more advanced frameworks.

How Companies Actually Choose Between PyTorch and TensorFlow

In real-world hiring decisions, the choice between PyTorch and TensorFlow often depends on the nature of the company’s AI pipeline. Research-oriented teams, especially those working on new model architectures, tend to prefer PyTorch because of its flexibility. On the other hand, companies that prioritize large-scale deployment and system reliability often lean toward TensorFlow.

Some organizations even use both frameworks simultaneously. For example, a research team might develop a model in PyTorch, while the engineering team converts or adapts it into TensorFlow for deployment. This hybrid approach is becoming more common as machine learning systems become more complex.

Because of this trend, many job listings now mention both frameworks as desirable skills. Employers are increasingly looking for adaptable engineers who can work across different tools rather than being limited to a single ecosystem.

Skills That Matter More Than the Framework Itself

While knowing PyTorch, TensorFlow, or Keras is important, employers ultimately prioritize deeper skills that go beyond any single tool. Understanding machine learning fundamentals such as supervised learning, optimization, loss functions, and model evaluation is far more important than memorizing framework-specific syntax.

Data handling skills also play a major role in hiring decisions. Most machine learning projects involve significant time spent on data preprocessing, cleaning, and feature engineering. Candidates who can efficiently work with real-world datasets are often more valuable than those who only know how to build models.

Another critical area is deployment knowledge. Employers increasingly expect machine learning engineers to understand how models move from development to production. This includes knowledge of APIs, cloud platforms, containerization, and monitoring systems.

Because of this, frameworks should be seen as tools rather than goals. PyTorch, TensorFlow, and Keras are vehicles for implementing ideas, not the ideas themselves. Candidates who understand this distinction tend to perform better in interviews and on the job.

Industry Trends Shaping Framework Demand

The machine learning industry is continuously evolving, and framework popularity shifts over time. PyTorch has seen rapid growth in research and academic environments, largely due to its ease of use and strong community support. TensorFlow continues to maintain dominance in enterprise applications due to its mature deployment ecosystem.

At the same time, the rise of large-scale AI models and generative systems has increased demand for flexible experimentation frameworks, further strengthening PyTorch’s position. However, the need for scalable production systems ensures that TensorFlow remains highly relevant.

Keras, while not competing directly in enterprise adoption, continues to serve an important educational role. It lowers the barrier to entry for beginners and helps maintain a steady pipeline of new talent entering the AI field.

As the industry matures, the trend is moving toward interoperability rather than competition. Developers are increasingly expected to understand multiple frameworks and switch between them as needed.

How to Strategically Position Yourself for Machine Learning Jobs

For someone aiming to build a career in machine learning, the most effective strategy is not to focus on a single framework but to build a layered skill set. Starting with Keras can help establish foundational understanding, moving into TensorFlow can build production-oriented skills, and exploring PyTorch can develop research and experimentation capabilities.

This combination creates versatility, which is highly valued in the job market. Employers prefer candidates who can adapt to different project requirements rather than those who are limited to one tool.

Ultimately, getting hired in machine learning is less about choosing the “best” framework and more about demonstrating the ability to solve real problems. Frameworks are just instruments that help translate ideas into working systems. The real value lies in understanding data, algorithms, and how intelligent systems are built and deployed in the real world.

Bridging the Gap Between Learning and Real-World Machine Learning Jobs

Moving from learning machine learning frameworks to actually getting hired requires a shift in mindset. Many learners spend a lot of time comparing PyTorch, TensorFlow, and Keras at a surface level, but hiring decisions are rarely made on framework preference alone. Instead, companies evaluate how well a candidate can translate machine learning concepts into practical, scalable solutions. This means that understanding workflows, not just libraries, is essential for career progression.

In real-world environments, machine learning projects are rarely isolated experiments. They are part of larger systems that involve data pipelines, APIs, cloud infrastructure, monitoring tools, and continuous updates. Frameworks like PyTorch and TensorFlow are just one piece of this ecosystem. Employers expect candidates to understand how models interact with other components in production environments.

From Model Building to System Thinking

One of the biggest differences between beginners and job-ready machine learning engineers is system thinking. Beginners often focus on building models that achieve good accuracy on a dataset. However, professionals are expected to think about how those models will behave in real-world conditions, including latency, scalability, memory usage, and data drift over time.

PyTorch is often used during the experimentation phase because it allows quick modifications and flexible model design. TensorFlow, on the other hand, is often used when transitioning models into production systems. Keras sits at the entry level, helping learners understand the basics before they move into more complex environments. However, none of these frameworks alone guarantees job readiness unless combined with system-level understanding.

The Role of Data Engineering in Machine Learning Careers

Another important factor that influences hiring is data engineering knowledge. Machine learning models are only as good as the data they are trained on, and in many companies, a significant portion of the work involves preparing and managing data rather than building models.

Candidates who understand how to clean datasets, handle missing values, normalize inputs, and structure pipelines are often more valuable than those who only focus on model architecture. This is because real-world data is messy, inconsistent, and constantly changing.
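As a small illustration of two of the steps named above (handling missing values and normalizing inputs), the following sketch uses only the Python standard library. The function name `impute_and_standardize` and the sample `ages` column are hypothetical, and mean imputation followed by z-scoring is just one common choice among several.

```python
from statistics import mean, pstdev


def impute_and_standardize(column):
    """Fill missing values (None) with the column mean, then z-score the result."""
    observed = [v for v in column if v is not None]
    if not observed:
        raise ValueError("column has no observed values")

    fill = mean(observed)
    filled = [fill if v is None else v for v in column]

    mu, sigma = mean(filled), pstdev(filled)
    if sigma == 0:
        return [0.0 for _ in filled]  # constant column: nothing to scale
    return [(v - mu) / sigma for v in filled]


# Hypothetical feature column with one missing entry.
ages = [20.0, None, 40.0, 30.0]
print(impute_and_standardize(ages))
```

In a real pipeline the fill value, mean, and standard deviation would normally be computed on the training split only and then reused for validation and production data, so that no information leaks from data the model has not seen.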

TensorFlow provides the tf.data API for building input pipelines, while PyTorch's Dataset and DataLoader classes let you define custom loading logic in plain Python. However, the underlying skill is not framework-specific. It is about understanding data flow from raw input to model prediction. Employers prioritize this ability because it directly impacts model performance and reliability.

Deployment Skills as a Key Hiring Factor

In modern machine learning roles, deployment skills are just as important as model-building skills. Companies are no longer satisfied with models that only work in notebooks or research environments. They need models that can be integrated into real applications and serve predictions at scale.

TensorFlow has a strong advantage in this area due to its deployment ecosystem, which includes tools for serving models on cloud platforms, mobile devices, and embedded systems. PyTorch has also improved significantly, with options such as TorchServe and ONNX export for production deployment, but TensorFlow remains widely adopted in enterprise systems.

Understanding how to containerize models, expose them through APIs, and monitor their performance in production is increasingly expected from machine learning engineers. This is why job descriptions often mention not just frameworks but also technologies like Docker, REST APIs, and cloud platforms alongside them.
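The core of exposing a model through an API is a function that validates a request and returns a prediction. The sketch below keeps that logic independent of any web framework. The names `make_handler` and `handle`, the `"features"` field, and the use of `sum` as a stand-in model are all assumptions made for illustration.

```python
import json


def make_handler(predict):
    """Wrap a predict(features) callable as a JSON request handler.

    The returned function takes a request body (str) and returns
    (status_code, response_body), which a web framework could send back.
    """

    def handle(body):
        try:
            payload = json.loads(body)
            features = payload["features"]
            if not isinstance(features, list) or not all(
                isinstance(v, (int, float)) for v in features
            ):
                raise ValueError("features must be a list of numbers")
        except (KeyError, TypeError, ValueError) as exc:
            # json.JSONDecodeError is a subclass of ValueError.
            return 400, json.dumps({"error": str(exc)})
        return 200, json.dumps({"prediction": predict(features)})

    return handle


# Stand-in model: the "prediction" is just the sum of the features.
handle = make_handler(sum)
print(handle('{"features": [1, 2, 3]}'))
print(handle('not json'))
```

Keeping validation and prediction in a plain function like this makes the logic easy to unit test before it is wired into a REST endpoint and packaged in a container.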

Why Flexibility Matters More Than Specialization

The machine learning job market is dynamic, and companies often adjust their technology stacks based on project needs. Because of this, being flexible is more valuable than being overly specialized in a single framework.

A candidate who only knows PyTorch may struggle in a TensorFlow-based environment, and vice versa. However, someone who understands the underlying principles of machine learning can adapt to either framework with relative ease. This adaptability is what employers are really looking for.

Keras plays an important role in building this flexibility at an early stage. By simplifying complex concepts, it allows learners to focus on understanding how neural networks work rather than getting stuck in implementation details. Once these fundamentals are clear, transitioning between frameworks becomes much easier.

Research vs Industry: Two Different Hiring Expectations

Machine learning careers often split into two broad paths: research-oriented roles and industry-focused engineering roles. Each path values frameworks differently.

In research roles, especially in areas like deep learning innovation or natural language processing, PyTorch is often preferred because of its flexibility and ease of experimentation. Researchers need to test new ideas quickly, modify architectures, and iterate frequently. PyTorch supports this workflow effectively.

In contrast, industry roles focus more on reliability, scalability, and integration. TensorFlow is widely used here because of its mature deployment tools and strong support for production systems. Companies that operate large-scale services prioritize stability and performance over experimental flexibility.

Understanding this distinction helps candidates choose the right direction for their career. Instead of asking which framework is better, it becomes more useful to ask which type of work aligns with personal goals.

How Hiring Managers Evaluate Machine Learning Candidates

Hiring managers typically evaluate candidates based on a combination of technical knowledge, practical experience, and problem-solving ability. Framework knowledge is just one part of the evaluation process.

During interviews, candidates are often asked to explain how models work, how they would handle real-world datasets, and how they would deploy solutions. Even if a candidate knows multiple frameworks, they may still struggle if they cannot explain the reasoning behind their approach.

Practical experience, such as internships, personal projects, or contributions to real-world systems, carries significant weight. Employers want to see evidence that a candidate can apply machine learning concepts beyond theoretical exercises.

The Importance of Projects Over Framework Knowledge

One of the most effective ways to improve hiring prospects is through project-based learning. Instead of focusing solely on learning frameworks, building end-to-end projects demonstrates real capability.

For example, creating a project that involves data collection, preprocessing, model training, evaluation, and deployment shows a complete understanding of the machine learning lifecycle. Whether the project is built using PyTorch, TensorFlow, or Keras is less important than the ability to complete the entire workflow.

Projects also help candidates understand the limitations of frameworks. In real-world scenarios, issues like memory constraints, data imbalance, and deployment challenges often arise. These experiences are far more valuable than simply following tutorials.

Evolving Trends in Machine Learning Framework Usage

The machine learning landscape continues to evolve rapidly, and framework usage trends shift accordingly. PyTorch has seen increasing adoption due to its simplicity and strong community support. TensorFlow remains dominant in enterprise environments due to its long-standing ecosystem and deployment capabilities.

At the same time, hybrid approaches are becoming more common. Developers often use PyTorch for experimentation and TensorFlow for production deployment. This combination allows teams to leverage the strengths of both frameworks.

Keras continues to play a supporting role in education and rapid prototyping. While it is not typically the final choice for large-scale systems, it remains a valuable tool for learning and early-stage development.

Building a Long-Term Career Strategy in Machine Learning

A successful machine learning career is built on continuous learning rather than dependence on a single tool or framework. Technologies evolve, but core principles such as data understanding, model evaluation, and system design remain constant.

Candidates who focus on building strong foundations in mathematics, statistics, and programming are better equipped to adapt to new frameworks as they emerge. PyTorch, TensorFlow, and Keras are just tools that implement these principles in different ways.

Instead of aiming to master only one framework, a more effective strategy is to become comfortable switching between tools as needed. This adaptability ensures long-term career growth in a field that is constantly changing.

Ultimately, getting hired in machine learning is about demonstrating the ability to solve problems, not just the ability to use a specific library. Frameworks help implement solutions, but the real value lies in understanding what needs to be solved and why.

Translating Machine Learning Skills into Real Hiring Advantage

At this stage of a machine learning journey, the focus naturally shifts from learning tools to building employable expertise. PyTorch, TensorFlow, and Keras are no longer just frameworks to compare; they become instruments that reflect how you approach real problems. Employers rarely hire someone because they prefer one framework over another. Instead, they look for consistency in problem-solving, clarity in thinking, and the ability to take a model from idea to deployment.

What often separates shortlisted candidates from others is not the complexity of their projects, but how well they understand the complete workflow. A simple model built with proper data handling, evaluation, and deployment understanding is far more valuable than an advanced architecture that exists only in a notebook. This is why framework knowledge must always be paired with practical execution.

Understanding Production Reality Beyond Tutorials

A major gap between learning and industry expectations comes from how machine learning is taught versus how it is used. Tutorials often present clean datasets, perfect preprocessing steps, and models that train without issues. Real-world environments are completely different.

In production systems, data is incomplete, noisy, and constantly changing. Models must be retrained, monitored, and updated regularly. Frameworks like TensorFlow provide structured pipelines to manage this complexity, while PyTorch offers flexibility to adjust models quickly when data patterns shift. Keras helps in rapid experimentation, but it is rarely the final deployment tool in serious production environments.

Understanding this difference is critical because employers want engineers who can handle uncertainty. They are not just hiring someone who can build a model; they are hiring someone who can maintain it in a changing environment.

Why Adaptability Is the Most Important Hiring Skill

One of the most underrated skills in machine learning careers is adaptability. Technology stacks change frequently, and companies evolve their infrastructure over time. A team that used TensorFlow a few years ago might gradually adopt PyTorch for research or switch to hybrid systems depending on project needs.

Because of this, employers prioritize candidates who can learn quickly rather than those who are tied to a single framework. If a candidate understands core machine learning principles deeply, switching from PyTorch to TensorFlow or vice versa becomes a matter of syntax rather than a complete learning barrier.

Keras plays a subtle but important role here by introducing learners to structured model building in a simplified way. Once this foundation is strong, transitioning into more complex frameworks becomes significantly easier.

The Hidden Importance of Debugging and Model Interpretation

Another area that strongly influences hiring decisions is debugging capability. Machine learning systems rarely work perfectly on the first attempt. Models may overfit, underperform, or behave unpredictably when exposed to new data.

PyTorch is often preferred during debugging-heavy workflows because of its dynamic computation graph: models run as ordinary Python code, so you can set breakpoints or print intermediate tensors to inspect model behavior. TensorFlow, while initially more rigid because of its static graphs, runs eagerly by default since version 2 and offers TensorBoard for visualization. Keras simplifies this process but does not always provide deep control over internal operations.

Employers value candidates who can explain why a model is failing and how to fix it. This requires understanding beyond the framework level. It involves knowledge of loss functions, optimization behavior, feature distributions, and evaluation metrics.
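One framework-independent way to start explaining why a model is failing is to compare training and validation loss over time. The heuristic below is a rough sketch, not a standard algorithm. The function name `diagnose`, the `patience` default, and the three labels are assumptions chosen for illustration.

```python
def diagnose(train_losses, val_losses, patience=3):
    """Classify a training run from its per-epoch loss histories.

    Returns "overfitting" when validation loss stopped improving at least
    `patience` epochs ago while training loss kept falling, "plateau" when
    both have stalled, and "improving" otherwise.
    """
    if len(train_losses) != len(val_losses) or not val_losses:
        raise ValueError("need equal-length, non-empty loss histories")

    # Epoch with the lowest validation loss (first one if there are ties).
    best_epoch = min(range(len(val_losses)), key=val_losses.__getitem__)
    epochs_since_best = len(val_losses) - 1 - best_epoch
    train_still_falling = train_losses[-1] < train_losses[best_epoch]

    if epochs_since_best >= patience and train_still_falling:
        return "overfitting"
    if epochs_since_best >= patience:
        return "plateau"
    return "improving"


# Hypothetical loss curves: training keeps improving, validation gets worse.
train = [1.0, 0.8, 0.6, 0.4, 0.3, 0.2]
val = [1.0, 0.9, 0.85, 0.9, 0.95, 1.0]
print(diagnose(train, val))
```

A label like this is only a starting point. The next step is usually to look at the data and metrics behind it, for example whether the validation set has a different feature distribution than the training set.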

Machine Learning as a Communication Skill

An often overlooked aspect of getting hired in machine learning is communication. Even the most technically strong model is useless if it cannot be explained clearly to stakeholders. Engineers are expected to communicate results to product managers, data teams, and sometimes non-technical decision-makers.

Frameworks do not directly teach communication, but they influence how clearly you can express ideas. For example, understanding TensorFlow’s structured pipeline can help explain how data flows through a system. PyTorch’s flexible design can help illustrate experimentation logic. Keras helps beginners articulate model structure in simple terms.

Being able to describe what your model does, why it performs a certain way, and what limitations it has is often a deciding factor in hiring decisions.

The Role of Real Projects in Building Job Readiness

Projects remain one of the strongest indicators of employability. However, not all projects are equally valuable. A project that simply replicates a tutorial adds limited value. On the other hand, a project that solves a real problem, even if simple, demonstrates deeper understanding.

For example, building a system that predicts outcomes based on real-world datasets, handles missing data, and includes a basic deployment pipeline shows practical awareness. The framework used becomes secondary to the structure of the solution.

Employers often evaluate projects to understand how candidates think, not just what tools they use. A well-documented project using PyTorch or TensorFlow is more impressive than advanced models without clear structure or explanation.

Framework Ecosystems and Industry Integration

Modern machine learning does not exist in isolation. Frameworks are part of larger ecosystems that include cloud services, data pipelines, and deployment tools. TensorFlow has a strong ecosystem for production integration, while PyTorch has rapidly expanded its support for deployment and scalability.

Companies often choose frameworks based on compatibility with their existing infrastructure. This is why job listings sometimes mention multiple frameworks instead of one. They are not looking for exclusivity; they are looking for compatibility.

Keras, while simpler, plays an important role in prototyping within these ecosystems. It allows teams to quickly test ideas before moving them into more complex frameworks for scaling.

Long-Term Career Growth in Machine Learning

A sustainable career in machine learning is built on continuous learning and adaptation. Frameworks will continue to evolve, new tools will emerge, and industry preferences will shift over time. What remains constant is the need for strong fundamentals.

Understanding linear algebra, probability, optimization, and data structures provides a foundation that supports long-term growth. Frameworks like PyTorch, TensorFlow, and Keras are simply ways to apply these concepts efficiently.

Professionals who focus only on tools may struggle when technologies change. However, those who focus on underlying principles can transition across frameworks and roles without difficulty.

Getting Hired in Machine Learning

Ultimately, getting hired in machine learning is not about choosing the “best” framework. It is about demonstrating the ability to solve real problems using the tools available. PyTorch, TensorFlow, and Keras each serve different purposes, but none of them guarantee employment on their own.

Employers look for candidates who understand data, think logically, communicate clearly, and adapt quickly. Framework knowledge is important, but it is only one part of a much larger skill set.

The strongest candidates are those who see frameworks as tools rather than identities. Once this mindset is developed, the focus naturally shifts from asking which framework gets you hired to how effectively you can use any framework to build meaningful solutions.

Moving Beyond Frameworks into Professional Machine Learning Engineering

As machine learning careers progress, the focus gradually shifts away from individual frameworks and moves toward building complete, reliable systems. At this stage, PyTorch, TensorFlow, and Keras are no longer the center of attention. Instead, they become interchangeable tools used to implement ideas within larger engineering workflows. What truly matters is the ability to design systems that work under real-world constraints such as scale, speed, and reliability.

Employers hiring for experienced machine learning roles expect candidates to think beyond model accuracy. They want engineers who understand how models interact with data pipelines, how predictions are served in real time, and how systems are maintained after deployment. Framework knowledge is assumed, but system-level thinking is what differentiates strong candidates.

Understanding End-to-End Machine Learning Systems

A production machine learning system typically involves several components working together. Data is collected from various sources, cleaned, transformed, and stored. Models are trained using frameworks like PyTorch or TensorFlow, evaluated using validation strategies, and then deployed into applications that serve predictions to users.

In this entire workflow, the framework is only one part of the pipeline. PyTorch might be used to experiment with a new model architecture, TensorFlow might be used to convert that model into a scalable service, and Keras might have been used earlier in the learning phase to understand the basics. However, none of these frameworks operate in isolation.

Employers value candidates who understand how these components connect. A well-designed system is more important than a highly complex model that cannot be deployed effectively.

The Importance of Scalability in Machine Learning Careers

Scalability is one of the most important factors in production machine learning systems. A model that works well on a small dataset is not necessarily useful in a system that handles millions of requests per day. This is where frameworks like TensorFlow have traditionally had an advantage due to their strong support for distributed computing (for example, the tf.distribute strategies) and production deployment tools.

However, PyTorch has rapidly improved in this area, with features such as DistributedDataParallel for multi-GPU and multi-node training, making it increasingly viable for large-scale systems as well. The gap between research and production frameworks is narrowing, and companies are now more flexible in their technology choices.

Despite this, scalability is not just about frameworks. It involves understanding infrastructure, load balancing, caching strategies, and efficient model design. Employers expect machine learning engineers to understand these concepts regardless of which framework they use.

Model Optimization and Performance Engineering

Another critical area in advanced machine learning roles is optimization. It is not enough for a model to be accurate; it must also be efficient. This includes reducing inference time, minimizing memory usage, and improving training speed.
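Reducing inference time starts with measuring it. The sketch below times any `predict` callable and reports median and tail latency. The names `percentile` and `measure_latency`, the warm-up count, and the stand-in model are assumptions for illustration; a real benchmark would also control for hardware, batch size, and concurrent load.

```python
import math
import time


def percentile(sorted_values, q):
    """Nearest-rank percentile of an already sorted list (q is an int, 1..100)."""
    rank = max(1, math.ceil(q * len(sorted_values) / 100))
    return sorted_values[rank - 1]


def measure_latency(predict, inputs, warmup=3):
    """Time predict(x) for each input and return p50/p95 in milliseconds."""
    if not inputs:
        raise ValueError("need at least one input to time")

    for x in inputs[:warmup]:
        predict(x)  # warm-up calls are not timed

    timings = []
    for x in inputs:
        start = time.perf_counter()
        predict(x)
        timings.append((time.perf_counter() - start) * 1000.0)

    timings.sort()
    return {"p50_ms": percentile(timings, 50), "p95_ms": percentile(timings, 95)}


# Stand-in "model": a sum of squares over the feature vector.
def fake_model(features):
    return sum(v * v for v in features)


batch = [[float(i)] * 1000 for i in range(50)]
print(measure_latency(fake_model, batch))
```

Reporting a tail percentile alongside the median matters because users experience the slow requests, not the average one.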

Frameworks provide tools for optimization, but the underlying responsibility lies with the engineer. PyTorch allows fine-grained control over model components, making it easier to experiment with optimization techniques. TensorFlow offers built-in tools for performance tuning and deployment optimization, such as the TensorFlow Model Optimization Toolkit for quantization and pruning. Keras simplifies model building but may abstract away some low-level optimization control.

Understanding how to optimize models for real-world environments is a key skill that directly impacts hiring decisions. Employers want engineers who can balance accuracy with efficiency.

The Shift from Framework Knowledge to Engineering Thinking

As candidates move from entry-level to mid-level and senior roles, the emphasis shifts from “which framework do you know” to “how do you solve complex system problems.” At this point, frameworks are simply implementation details.

Engineering thinking involves understanding trade-offs, designing scalable architectures, and anticipating system failures. For example, choosing between PyTorch and TensorFlow is less important than deciding how a model will handle data drift over time or how it will be retrained automatically when performance degrades.
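The retraining decision mentioned above can be sketched without reference to any framework. The class below tracks rolling accuracy on labelled production feedback and flags when it falls below a threshold. `RetrainMonitor`, its default window size, and its threshold are hypothetical choices for illustration; real systems often combine several signals, including checks on the input distribution itself.

```python
from collections import deque


class RetrainMonitor:
    """Flags retraining when rolling accuracy drops below a threshold."""

    def __init__(self, window=100, min_accuracy=0.9):
        self.window = window
        self.min_accuracy = min_accuracy
        self._hits = deque(maxlen=window)  # 1 for correct, 0 for incorrect

    def record(self, prediction, label):
        """Store whether one production prediction matched its true label."""
        self._hits.append(1 if prediction == label else 0)

    def rolling_accuracy(self):
        """Accuracy over the most recent window, or None if nothing recorded."""
        return sum(self._hits) / len(self._hits) if self._hits else None

    def should_retrain(self):
        # Wait for a full window so a few early mistakes do not trigger retraining.
        if len(self._hits) < self.window:
            return False
        return self.rolling_accuracy() < self.min_accuracy


# Hypothetical stream of (prediction, true label) pairs.
monitor = RetrainMonitor(window=4, min_accuracy=0.75)
for prediction, label in [(1, 1), (0, 0), (1, 1), (1, 0), (0, 1)]:
    monitor.record(prediction, label)
    print(monitor.rolling_accuracy(), monitor.should_retrain())
```

The same monitor works whether the model behind it was trained in PyTorch or TensorFlow, which is the point of the paragraph above: the design decision sits above the framework.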

This shift in thinking is what separates machine learning engineers from machine learning enthusiasts. Employers are not just hiring coders; they are hiring system designers.

Why Continuous Learning Matters More Than Tool Mastery

Machine learning is one of the fastest-evolving fields in technology. New models, architectures, and frameworks are introduced regularly. Because of this, long-term success depends on continuous learning rather than mastery of a single tool.

PyTorch and TensorFlow themselves are constantly evolving, adding new features and improving performance. Keras has also adapted over time: it was integrated into TensorFlow as tf.keras, and Keras 3 now runs on TensorFlow, JAX, and PyTorch backends. This constant change means that rigid specialization can become a limitation over time.

Candidates who are comfortable learning new tools quickly are far more valuable in the long run than those who are deeply attached to one framework. Adaptability becomes a core professional skill.

How Hiring Trends Are Evolving in Machine Learning

Modern hiring trends in machine learning show a clear shift toward versatility. Fewer companies are looking for candidates who know only one framework. Instead, they prefer engineers who understand multiple tools and can choose the right one for the task at hand.

Job descriptions increasingly emphasize skills like system design, data engineering, cloud computing, and model deployment alongside framework knowledge. This reflects the growing maturity of the machine learning industry.

In many cases, recruiters assume that candidates can learn frameworks quickly. What they cannot assume is whether a candidate understands how to apply machine learning in real-world systems. This is where differentiation happens.

The Role of Real-World Constraints in Hiring Decisions

In production environments, machine learning systems must operate under strict constraints. These include limited computational resources, strict latency requirements, and unpredictable user behavior.

Frameworks help manage these constraints, but they do not eliminate them. Engineers must make design decisions that balance performance, cost, and accuracy. For example, a slightly less accurate model might be preferred if it significantly reduces computation time and improves user experience.
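The accuracy-versus-latency example can be made concrete with a small sketch. The code below picks the most accurate candidate that fits a latency budget. The `Candidate` fields, the model names, and all of the numbers are invented for illustration and do not come from any real benchmark.

```python
from dataclasses import dataclass
from typing import Iterable, Optional


@dataclass(frozen=True)
class Candidate:
    name: str
    accuracy: float        # validation accuracy in [0, 1]
    p95_latency_ms: float  # measured tail latency per request


def pick_model(
    candidates: Iterable[Candidate], latency_budget_ms: float
) -> Optional[Candidate]:
    """Return the most accurate candidate within the latency budget, if any."""
    eligible = [c for c in candidates if c.p95_latency_ms <= latency_budget_ms]
    if not eligible:
        return None  # nothing fits; the budget or the models must change
    return max(eligible, key=lambda c: c.accuracy)


if __name__ == "__main__":
    # Hypothetical candidates with made-up measurements.
    models = [
        Candidate("large", accuracy=0.94, p95_latency_ms=180.0),
        Candidate("small", accuracy=0.92, p95_latency_ms=40.0),
    ]
    choice = pick_model(models, latency_budget_ms=100.0)
    print(choice.name if choice else "no model fits")  # small
```

Treating latency as a hard constraint and accuracy as the quantity to maximize is only one way to frame the decision. A team might instead weight cost, accuracy, and latency into a single score, depending on what the product actually requires.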

Employers value candidates who understand these trade-offs. This kind of thinking goes beyond framework usage and enters the domain of engineering judgment.

Building a Sustainable Machine Learning Career Path

A sustainable career in machine learning is built on a combination of technical skills, practical experience, and continuous adaptation. PyTorch, TensorFlow, and Keras will continue to evolve, but the core principles of machine learning remain stable.

Understanding how data behaves, how models learn, and how systems operate in production is far more important than memorizing framework-specific functions. Once these foundations are strong, switching between tools becomes relatively easy.

Professionals who focus on long-term understanding rather than short-term tool mastery tend to grow faster in their careers. They are able to move between roles, industries, and technologies with minimal friction.

Final Insight

Ultimately, getting hired in machine learning is not about selecting the perfect framework. It is about demonstrating that you can build meaningful, reliable, and scalable systems using whatever tools are available.

PyTorch, TensorFlow, and Keras are all valuable, but they are only part of a much larger picture. Employers are looking for individuals who understand the entire lifecycle of machine learning systems, from data collection to deployment and maintenance.

Once this broader perspective is developed, frameworks stop being a deciding factor in hiring. Instead, they become flexible tools used to bring ideas to life in a constantly evolving technical landscape.