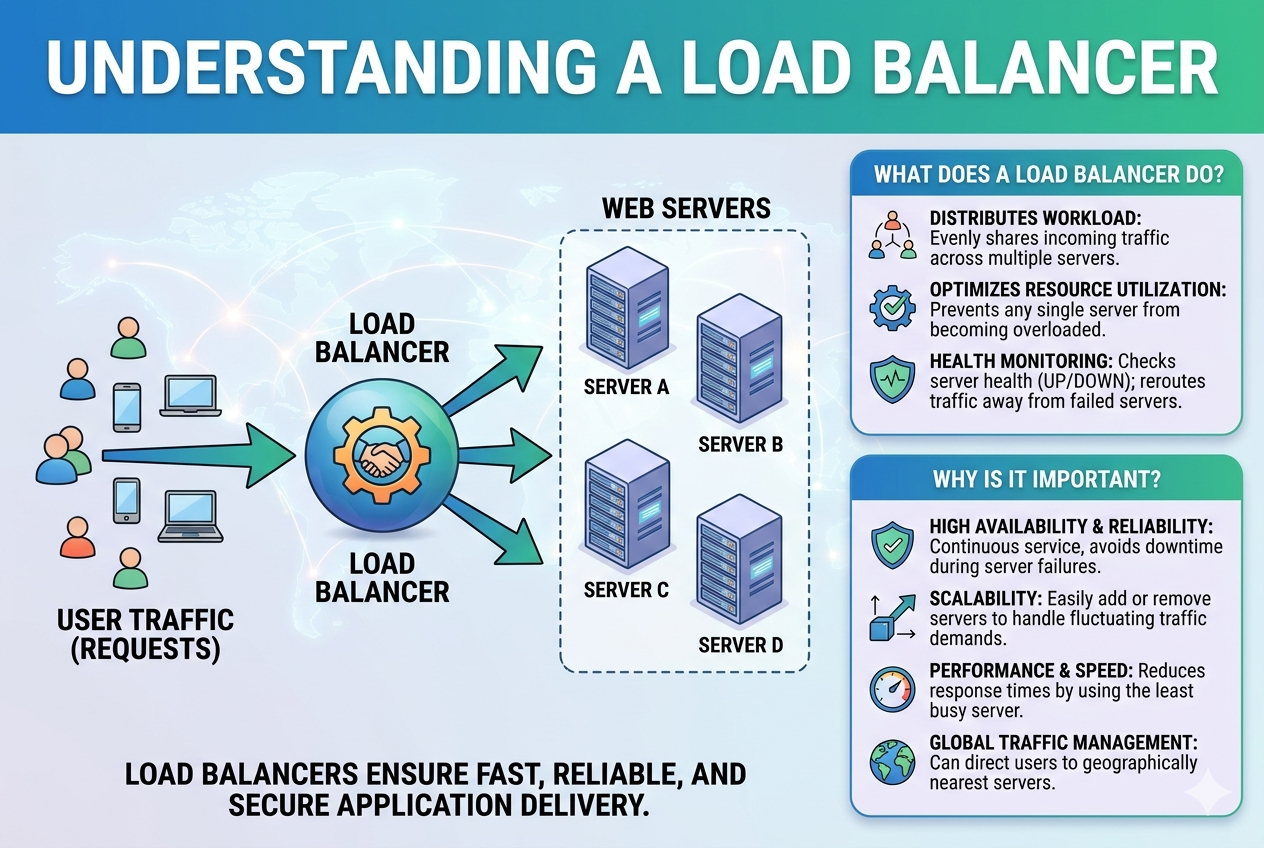

A load balancer is designed to act as an intelligent traffic distributor in a computing environment where multiple servers work together to handle user requests. Instead of allowing all incoming requests to go to a single server, it spreads the workload across several servers in a way that improves speed, reliability, and overall system efficiency. This ensures that applications remain responsive even when there is a sudden spike in traffic or when demand increases beyond normal levels. At its core, the load balancer helps maintain balance in the system so that no single server becomes a bottleneck or point of failure.

How Load Balancers Improve System Performance

Performance improvement is one of the primary reasons organizations use load balancers. When user requests are evenly distributed, each server handles a smaller portion of the total workload, allowing it to respond faster. Without this distribution, a single server may become overloaded, leading to slower response times or even complete failure. Load balancers continuously monitor the health and performance of servers and ensure that requests are directed to the most suitable one at any given moment. This dynamic adjustment plays a key role in keeping applications fast and responsive under varying levels of demand.

The Role of Load Balancing in High Availability

High availability refers to the ability of a system to remain operational even when part of it fails. Load balancers contribute directly to this by detecting server failures and automatically redirecting traffic to healthy servers. If one server goes offline due to maintenance or unexpected issues, the load balancer reroutes its requests to the remaining servers, so users are not affected. This creates a seamless experience where downtime is minimized or completely hidden from the user. As a result, businesses can provide continuous service without interruptions that could affect user trust or revenue.

Different Methods Used to Distribute Traffic

Load balancers use various algorithms to decide how traffic should be distributed. One common method is round robin, where requests are assigned to servers in a rotating order. Another method is least connections, where the load balancer sends each new request to the server with the fewest active connections at that moment. Some systems also use weighted distribution, where servers with more capacity are assigned proportionally more requests than smaller ones. More advanced techniques take server response time and current load into account to make even more efficient decisions. These methods help ensure that resources are used efficiently across the entire system.
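
The three basic strategies above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not how any particular product implements them: the `Server` class and its fields are hypothetical, and the weighted strategy uses the "smooth" weighted round-robin variant, which interleaves picks instead of sending a heavier server several requests in a row.

```python
import itertools
from dataclasses import dataclass


@dataclass
class Server:
    name: str
    weight: int = 1              # relative capacity (hypothetical field)
    active_connections: int = 0  # open connections right now (hypothetical field)


class RoundRobin:
    """Hand out servers from a fixed pool in rotating order."""

    def __init__(self, servers):
        self._cycle = itertools.cycle(servers)

    def pick(self):
        return next(self._cycle)


class LeastConnections:
    """Pick the server with the fewest active connections at this moment."""

    def __init__(self, servers):
        self.servers = servers

    def pick(self):
        # min() returns the first server among ties, so ties follow list order.
        return min(self.servers, key=lambda s: s.active_connections)


class SmoothWeightedRoundRobin:
    """Weighted rotation: over sum(weights) picks, each server is chosen
    exactly `weight` times, with its turns spread out.

    Each pick, every server's score grows by its weight; the highest score
    wins and is then reduced by the total weight.
    """

    def __init__(self, servers):
        self.servers = servers
        self.total = sum(s.weight for s in servers)
        self.current = {s.name: 0 for s in servers}

    def pick(self):
        for s in self.servers:
            self.current[s.name] += s.weight
        best = max(self.servers, key=lambda s: self.current[s.name])
        self.current[best.name] -= self.total
        return best


if __name__ == "__main__":
    wrr = SmoothWeightedRoundRobin([Server("a", weight=3), Server("b", weight=1)])
    print([wrr.pick().name for _ in range(4)])  # ['a', 'a', 'b', 'a']
```

With weights 3 and 1, server `a` receives three of every four requests, and `b`'s single turn falls in the middle of the cycle rather than at the end.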

Types of Load Balancers in Modern Systems

There are different types of load balancers based on where and how they operate. Some work at the network or transport level (often called Layer 4), distributing raw connections based on IP addresses and ports without inspecting their contents. Others function at the application level (Layer 7), making decisions based on the content of the request, such as URLs or headers. Because application-level load balancers can read the request, they can route traffic according to specific rules, which makes them suitable for complex modern applications. There are also hardware-based and software-based load balancers, with software solutions becoming more popular due to their flexibility and scalability.

Importance in Scalable System Design

Scalability is the ability of a system to handle increased load by adding more resources. Load balancers make this possible by allowing new servers to be added to the system easily. When demand grows, additional servers can be introduced, and once they are registered with the load balancer (often automatically, through service discovery or an auto-scaling group) they are included in the traffic distribution process. This prevents the system from becoming overwhelmed and allows it to grow smoothly without major redesigns. It also helps keep performance consistent even as the number of users increases significantly over time.

Enhancing Reliability Through Load Distribution

Reliability in a system means that it consistently performs its intended function without failure. Load balancers enhance reliability by reducing the dependency on a single server. Since multiple servers share the workload, the failure of one does not affect the entire system. Instead, traffic is redistributed to the other available servers as soon as the failure is detected. This redundancy ensures that services remain accessible even in the presence of hardware or software issues. Over time, this leads to a more stable and dependable infrastructure that users can trust.

Health Monitoring and Automatic Failover

One of the critical functions of a load balancer is continuous health monitoring. It regularly checks whether servers are functioning correctly by sending test requests or monitoring responses. If a server is detected to be slow or unresponsive, the load balancer temporarily removes it from the pool of active servers. This process is known as automatic failover. Once the server recovers and passes health checks again, it is brought back into service. This automated process reduces the need for manual intervention and ensures uninterrupted service delivery.
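
The remove-and-restore cycle described above can be sketched as a small state machine. This is an illustrative sketch, not a real library: `probe` is a hypothetical callable standing in for an actual check such as an HTTP request to a status endpoint, and the `fall`/`rise` thresholds are assumptions (requiring several consecutive results keeps a single slow response from flapping a server in and out of the pool).

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class Backend:
    name: str
    healthy: bool = True
    consecutive_failures: int = 0
    consecutive_successes: int = 0


class HealthChecker:
    """Marks a backend down after `fall` failed probes in a row and back up
    after `rise` successful probes in a row."""

    def __init__(self, backends: List[Backend], probe: Callable[[Backend], bool],
                 fall: int = 3, rise: int = 2):
        self.backends = backends
        self.probe = probe  # hypothetical: True if the backend answered correctly in time
        self.fall = fall
        self.rise = rise

    def run_once(self) -> None:
        """Probe every backend once and update its health state."""
        for b in self.backends:
            if self.probe(b):
                b.consecutive_successes += 1
                b.consecutive_failures = 0
                if not b.healthy and b.consecutive_successes >= self.rise:
                    b.healthy = True   # recovered: return it to the pool
            else:
                b.consecutive_failures += 1
                b.consecutive_successes = 0
                if b.healthy and b.consecutive_failures >= self.fall:
                    b.healthy = False  # failover: stop sending it traffic

    def active_pool(self) -> List[Backend]:
        """Backends that should currently receive traffic."""
        return [b for b in self.backends if b.healthy]
```

In practice `run_once` would be called on a timer, and the balancing algorithm would draw only from `active_pool()`.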

Load Balancers in Cloud-Based Environments

In modern cloud computing systems, load balancers play an even more important role. Cloud environments often involve dynamically changing resources where servers can be added or removed automatically based on demand. Load balancers work seamlessly in this environment by adapting to these changes in real time. They ensure that traffic is always directed to available and healthy resources without requiring manual configuration. This makes them essential components in cloud architecture, especially for applications that experience unpredictable or seasonal traffic patterns.

Security Benefits of Load Balancers

Beyond performance and reliability, load balancers also contribute to system security. By acting as an intermediary between users and servers, they help hide the internal structure of the system. This makes it harder for attackers to target specific servers directly. Some load balancers also include built-in security features such as protection against distributed denial-of-service attacks by filtering and managing excessive traffic. This adds an additional layer of defense, helping protect applications from malicious activity and traffic overload.

Reducing Downtime and Improving User Experience

Downtime can be costly for any digital service, both in terms of revenue and user trust. Load balancers help minimize downtime by ensuring continuous traffic flow even during failures or maintenance activities. When updates or repairs are required, servers can be taken offline one by one while others continue handling traffic. This allows maintenance to occur without affecting the user experience. As a result, users enjoy uninterrupted access to services, which improves satisfaction and engagement.

Efficient Resource Utilization Across Servers

Without load balancing, some servers may remain underused while others become overloaded. Load balancers solve this inefficiency by distributing traffic evenly across all available resources. This ensures that computing power, memory, and processing capabilities are used effectively. By optimizing resource utilization, organizations can reduce waste and improve the return on investment in infrastructure. It also helps extend the lifespan of servers by preventing excessive strain on individual machines.

Challenges in Load Balancing Systems

Despite its benefits, load balancing also comes with certain challenges. One of the main difficulties is ensuring accurate health checks so that only truly healthy servers receive traffic. Incorrect assessments can lead to inefficient routing. Another challenge is handling complex application architectures where multiple services depend on each other. In such cases, designing effective load balancing strategies requires careful planning. Additionally, maintaining consistency and session handling across multiple servers can be complex in certain applications.

Modern Trends in Load Balancing Technology

As technology evolves, load balancing systems are becoming more intelligent and automated. Modern systems can analyze traffic patterns in real time and adjust distribution strategies dynamically. Some even use predictive techniques to anticipate traffic spikes and prepare resources in advance. Integration with artificial intelligence and machine learning is also becoming more common, allowing systems to make more efficient routing decisions. These advancements are making load balancers more adaptive and capable than ever before.

A load balancer is a critical component in any modern digital infrastructure. It ensures that systems remain fast, reliable, scalable, and secure by intelligently distributing traffic across multiple servers. Without it, applications would struggle to handle high demand, suffer from frequent downtime, and deliver inconsistent performance. By managing traffic efficiently and adapting to changing conditions, load balancers play a foundational role in supporting the performance and stability of today’s online services.

Load Balancer and Fault Tolerance in Modern Systems

Fault tolerance is the ability of a system to continue functioning even when parts of it fail, and load balancers play a central role in achieving this. In a multi-server environment, failures are not uncommon due to hardware issues, software bugs, or network disruptions. A load balancer ensures that such failures do not affect the overall availability of the service. When a server stops responding or becomes unstable, the load balancer quickly detects the issue and stops sending traffic to that server. Instead, it redirects requests to other healthy servers in the pool. This automatic adjustment allows users to continue accessing applications without noticing any interruption. Over time, this creates a resilient system that can handle unexpected problems without collapsing.

Session Persistence and User Experience Consistency

In many applications, especially those involving login systems or shopping carts, maintaining session consistency is important. Session persistence, sometimes called sticky sessions, ensures that a user continues interacting with the same server during a session. This matters when session data is kept in a single server's memory: if later requests were sent to a different server, that data would be missing, leading to lost carts, unexpected logouts, or inconsistent results. Load balancers can be configured to support this behavior by tracking user sessions, for example with a cookie or the client's IP address, and directing their requests accordingly. At the same time, modern systems balance persistence with performance, ensuring that users still benefit from load distribution without sacrificing continuity. This balance is essential for delivering a smooth and predictable user experience.
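
A minimal sketch of session tracking follows, assuming each request carries a session ID (for instance from a cookie). The class name and the in-memory session table are hypothetical and purely illustrative: new sessions are assigned round robin, and a session is only moved if its server becomes unhealthy.

```python
import itertools


class StickySessionBalancer:
    """Keeps each session on the server that first served it."""

    def __init__(self, servers):
        self.servers = list(servers)
        self.healthy = set(self.servers)
        self._rr = itertools.cycle(self.servers)
        self.sessions = {}  # session_id -> server name (illustrative in-memory table)

    def _next_healthy(self):
        # Check each server at most once, in round-robin order.
        for _ in range(len(self.servers)):
            candidate = next(self._rr)
            if candidate in self.healthy:
                return candidate
        raise RuntimeError("no healthy servers")

    def route(self, session_id):
        server = self.sessions.get(session_id)
        if server is None or server not in self.healthy:
            # New session, or its server went down: assign a new server.
            server = self._next_healthy()
            self.sessions[session_id] = server
        return server

    def mark_down(self, server):
        self.healthy.discard(server)
```

When a session is reassigned after a failure, any state held only on the old server is lost, which is one reason many designs keep session data in a shared store and use stickiness only as an optimization.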

Layered Architecture and Load Balancer Positioning

Load balancers are typically placed strategically within system architecture to maximize efficiency. They often sit between the user and the backend servers, acting as a gateway that manages all incoming traffic. In more complex systems, multiple layers of load balancing may exist, such as one at the network level and another at the application level. Each layer serves a different purpose, from handling basic routing to making content-based decisions. This layered approach allows systems to handle different types of traffic more effectively and ensures that each request is processed in the most efficient way possible.

Horizontal Scaling and Load Balancer Support

Horizontal scaling refers to adding more servers to a system rather than increasing the power of a single server. Load balancers are essential for enabling this approach because they distribute traffic evenly across all available servers. When a new server is added and registered with the load balancer, it begins receiving requests without requiring major system changes. This flexibility allows organizations to scale their infrastructure quickly in response to increasing demand. Unlike vertical scaling, which is limited by the largest machine available, horizontal scaling supported by load balancing can grow much further, although it is still bounded by shared components such as databases and by coordination overhead.

Latency Reduction Through Intelligent Routing

Latency refers to the delay between a user’s request and the system’s response. Load balancers help reduce latency by directing traffic to servers that can respond fastest. Some advanced load balancing systems consider factors such as server location, current load, and network conditions before making routing decisions. By selecting the optimal server for each request, they minimize delays and improve overall responsiveness. This is especially important for global applications where users are spread across different regions and expect fast performance regardless of their location.

Geographic Load Balancing Across Regions

In large-scale systems, users may access services from different parts of the world. Geographic load balancing helps distribute traffic across servers located in multiple regions. This ensures that users are connected to the nearest or most efficient data center, reducing latency and improving speed. It also provides redundancy in case an entire region experiences issues. By spreading traffic geographically, organizations can deliver a more consistent experience globally while also protecting against regional failures or outages.
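
Choosing a region can be sketched as "lowest latency among healthy regions". This is an illustration under assumptions: the region names are hypothetical, and `latencies_ms` stands in for however a real system estimates distance (active probes, resolver location, and so on). Skipping unhealthy regions gives the regional failover mentioned above for free.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class Region:
    name: str
    healthy: bool


def pick_region(latencies_ms: Dict[str, float], regions: List[Region]) -> str:
    """Return the healthy region with the lowest measured latency for this client.

    `latencies_ms` maps region name to an estimated round-trip time from the
    client (a hypothetical input). Unhealthy regions are skipped, so a
    regional outage sends users to the next-closest region.
    """
    candidates = [r for r in regions if r.healthy and r.name in latencies_ms]
    if not candidates:
        raise RuntimeError("no healthy region available")
    return min(candidates, key=lambda r: latencies_ms[r.name]).name


if __name__ == "__main__":
    regions = [Region("eu-west", True), Region("us-east", True), Region("ap-south", True)]
    latency = {"eu-west": 20, "us-east": 90, "ap-south": 150}
    print(pick_region(latency, regions))  # eu-west
    regions[0].healthy = False
    print(pick_region(latency, regions))  # us-east
```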

Impact on Resource Optimization and Cost Efficiency

Efficient use of infrastructure resources directly impacts operational costs. Load balancers help organizations avoid over-provisioning by ensuring that all servers are used effectively. Instead of running a few overloaded servers, traffic is distributed evenly across many machines, maximizing their utilization. This means businesses can achieve better performance without unnecessary hardware investment. Additionally, cloud-based environments often charge based on usage, so balanced traffic distribution can lead to significant cost savings over time.

Security Filtering and Traffic Management

Load balancers can also act as a first line of defense by filtering incoming traffic. They can block suspicious requests, limit excessive traffic from certain sources, and prevent overload conditions caused by malicious attacks. This helps protect backend servers from being directly exposed to harmful traffic. Some systems include rate limiting features, which control how many requests a user or IP address can send within a specific time. These capabilities enhance overall system security and reduce the risk of service disruption.
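
Rate limiting is often implemented with a token bucket, which is the approach sketched here; other algorithms (fixed or sliding windows) exist. The class names are hypothetical. Each client IP gets a bucket holding up to `capacity` tokens, refilled at `rate` tokens per second, and each request spends one token. The injectable `clock` is only there to make the behavior easy to test.

```python
import time


class TokenBucket:
    """Allows bursts of up to `capacity` requests, refilled at `rate` per second."""

    def __init__(self, rate, capacity, clock=time.monotonic):
        self.rate = rate
        self.capacity = capacity
        self.clock = clock
        self.tokens = capacity
        self.last = clock()

    def allow(self):
        now = self.clock()
        # Refill in proportion to elapsed time, never above capacity.
        self.tokens = min(self.capacity, self.tokens + (now - self.last) * self.rate)
        self.last = now
        if self.tokens >= 1:
            self.tokens -= 1
            return True
        return False


class PerClientLimiter:
    """Keeps one token bucket per client IP address."""

    def __init__(self, rate, capacity, clock=time.monotonic):
        self.rate, self.capacity, self.clock = rate, capacity, clock
        self.buckets = {}

    def allow(self, client_ip):
        bucket = self.buckets.get(client_ip)
        if bucket is None:
            bucket = TokenBucket(self.rate, self.capacity, self.clock)
            self.buckets[client_ip] = bucket
        return bucket.allow()
```

A request for which `allow()` returns `False` would typically be rejected (for example with HTTP 429) before it ever reaches a backend server.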

Integration with Modern DevOps Practices

In modern software development environments, load balancers are closely integrated with DevOps practices. As applications are updated frequently, load balancers help ensure that new versions can be deployed without downtime. This is achieved through techniques such as blue-green deployments, where traffic is switched from the old environment to a fully prepared new one, or rolling updates, where servers are replaced with the new version a few at a time while the rest keep serving traffic. Traffic can also be shifted gradually, sending a small share of requests to the new version before moving everyone over. This approach allows continuous delivery of updates while maintaining system stability and user access. Load balancers make it possible to manage complex deployment workflows smoothly and safely.
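
Gradually shifting traffic can be sketched as a percentage split. This is an illustrative assumption, not a specific product's feature: each user ID is hashed into one of 100 buckets, so a given user consistently sees the same version, and raising the percentage only moves additional users from old to new. A blue-green switch corresponds to jumping straight from 0 to 100.

```python
import hashlib


def choose_version(user_id: str, new_version_percent: int) -> str:
    """Return "new" for a stable `new_version_percent` share of users, else "old"."""
    if not 0 <= new_version_percent <= 100:
        raise ValueError("percentage must be between 0 and 100")
    digest = hashlib.sha256(user_id.encode("utf-8")).digest()
    bucket = int.from_bytes(digest[:8], "big") % 100  # roughly uniform in 0..99
    return "new" if bucket < new_version_percent else "old"
```

A rollout might step the percentage through values such as 1, 10, 50, and 100 while watching error rates, dropping back to 0 if the new version misbehaves.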

Handling Traffic Spikes and Sudden Demand

One of the most important roles of a load balancer is handling sudden increases in traffic. Whether caused by marketing campaigns, viral content, or seasonal demand, traffic spikes can overwhelm unprepared systems. Load balancers distribute this surge across all available servers, preventing crashes and maintaining performance. In cloud environments, they can also work alongside auto-scaling systems that add new servers automatically when demand increases. This combination ensures that systems remain stable even under extreme load conditions.
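
The auto-scaling side of that partnership often uses a proportional rule, the same form the Kubernetes Horizontal Pod Autoscaler documents: desired = ceil(current × currentMetric / targetMetric). The sketch below applies that rule to a per-server load metric; the function name and the default minimum and maximum pool sizes are hypothetical.

```python
import math


def desired_servers(current_servers, current_load_per_server, target_load_per_server,
                    min_servers=2, max_servers=20):
    """Resize the pool so per-server load moves back toward the target,
    clamped to a configured range (the bounds here are illustrative)."""
    if current_servers <= 0 or target_load_per_server <= 0:
        raise ValueError("server count and target must be positive")
    raw = math.ceil(current_servers * current_load_per_server / target_load_per_server)
    return max(min_servers, min(max_servers, raw))


if __name__ == "__main__":
    # 4 servers running at 90% CPU against a 60% target.
    print(desired_servers(4, 90, 60))  # 6
```

Once the new servers pass their health checks, the load balancer spreads the surge across the enlarged pool.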

Monitoring, Analytics, and Performance Insights

Load balancers often provide detailed insights into traffic patterns, server performance, and system behavior. This data helps administrators understand how resources are being used and identify potential bottlenecks. By analyzing this information, organizations can make informed decisions about scaling, optimization, and infrastructure improvements. Monitoring tools associated with load balancers also help detect anomalies early, allowing proactive action before issues become serious problems.

Challenges in Advanced Load Balancing Systems

While load balancers provide many benefits, managing them in large-scale environments can be complex. Ensuring consistent performance across distributed systems requires careful configuration and ongoing monitoring. Misconfigured routing rules can lead to uneven traffic distribution or unexpected behavior. Additionally, balancing session persistence with scalability can be difficult in some applications. Despite these challenges, the advantages of load balancing far outweigh the difficulties when implemented correctly.

Future of Load Balancing Technology

The future of load balancing is moving toward greater automation and intelligence. With advancements in artificial intelligence and machine learning, systems are becoming capable of predicting traffic patterns and adjusting configurations automatically. This reduces the need for manual intervention and improves efficiency. Future load balancers may also integrate more deeply with application performance monitoring tools, creating fully adaptive systems that optimize themselves in real time based on user behavior and system conditions.

Load Balancer in Microservices Architecture

Modern applications are increasingly built using microservices architecture, where a single application is divided into multiple small, independent services. Each service handles a specific function, such as authentication, payment processing, or data retrieval. In such environments, a load balancer becomes even more critical because it manages traffic not just at the application level, but also between different services. It ensures that requests are routed to the correct service instance efficiently. Since each microservice may have multiple replicas running simultaneously, the load balancer distributes requests evenly among them, preventing overload and ensuring smooth communication between services. This improves modularity, scalability, and fault isolation within the system.

Dynamic Traffic Routing and Adaptive Decisions

Load balancers are no longer static systems that simply distribute traffic in a fixed pattern. Modern implementations make dynamic decisions based on real-time system conditions. They continuously evaluate server health, response times, CPU usage, and network latency before deciding where to send each request. This adaptive behavior ensures that traffic is always directed to the most optimal server at any given moment. If one server becomes slow due to high load or temporary issues, the load balancer automatically reduces traffic to it and shifts requests elsewhere. This dynamic routing significantly enhances system responsiveness and stability under changing workloads.
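
One way to "reduce traffic" to a slow server without cutting it off entirely is to track an exponentially weighted moving average (EWMA) of each server's response time and pick servers with probability inversely proportional to it. The sketch below uses latency only, although the text also mentions CPU usage and other signals. The class name, `alpha`, and `initial_ms` are assumptions. Because a slow server still receives some requests, the balancer keeps getting fresh samples and notices when it recovers.

```python
import random


class EwmaBalancer:
    """Latency-aware selection based on a moving average of response times."""

    def __init__(self, servers, alpha=0.3, initial_ms=100.0, rng=None):
        self.alpha = alpha  # weight of the newest sample; higher reacts faster
        self.ewma = {s: float(initial_ms) for s in servers}
        self.rng = rng or random.Random()

    def record(self, server, latency_ms):
        """Fold a new latency sample into the server's moving average."""
        old = self.ewma[server]
        self.ewma[server] = self.alpha * latency_ms + (1 - self.alpha) * old

    def pick(self):
        """Choose a server with probability proportional to 1 / average latency."""
        servers = list(self.ewma)
        # The floor avoids division by zero if an average decays toward 0.
        weights = [1.0 / max(self.ewma[s], 1e-3) for s in servers]
        return self.rng.choices(servers, weights=weights, k=1)[0]
```

A server averaging 10 ms gets roughly twenty times the traffic of one averaging 200 ms, and the split rebalances automatically as new samples arrive.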

Role in Content Delivery Optimization

Load balancers also play an important role in improving content delivery, especially for applications that serve media, images, or large data files. By distributing requests across multiple servers or caching layers, they reduce the strain on individual systems and speed up content delivery to users. Some advanced setups integrate load balancing with caching mechanisms, ensuring that frequently accessed content is delivered quickly from the nearest or least busy server. This reduces bandwidth usage and improves user experience, particularly in high-traffic environments such as streaming platforms or online marketplaces.

Health Checks and Continuous System Verification

Continuous monitoring is one of the core responsibilities of a load balancer. It regularly performs health checks on all connected servers to ensure they are functioning correctly. These checks may include simple response tests or more advanced performance evaluations. If a server fails these checks, typically a configured number of times in a row, it is marked as unhealthy and removed from the active pool of servers. This prevents users from being directed to malfunctioning systems. Once the server recovers and passes the required checks, it is reintegrated into the system. This constant verification process ensures high reliability and prevents service disruption.

Load Balancer and API Management

In modern digital ecosystems, APIs (Application Programming Interfaces) are essential for communication between services. Load balancers are often used to manage API traffic efficiently. They ensure that API requests are evenly distributed across multiple backend servers, preventing overload on any single endpoint. This is especially important for high-traffic applications where thousands or millions of API calls may occur every second. By balancing this load, systems maintain stable response times and avoid bottlenecks that could slow down application performance.

Reducing Single Points of Failure

One of the major risks in any system is the presence of a single point of failure, where one component’s malfunction can bring down the entire system. Load balancers help eliminate this risk by distributing traffic across multiple servers. If one server fails, the system continues to function using other available servers. This redundancy ensures that no single backend server can cause a complete outage. The load balancer itself must not become the new single point of failure, so it is usually deployed as a redundant pair or cluster. As a result, applications become more robust and capable of handling unexpected disruptions without affecting users.

Load Balancer Configuration and Flexibility

Load balancers offer a high level of configuration flexibility, allowing system administrators to define how traffic should be managed. They can set rules based on IP addresses, request types, user locations, or application paths. This flexibility allows organizations to customize traffic flow according to their specific needs. For example, certain servers can be dedicated to handling premium users, while others handle general traffic. This level of control helps optimize performance and ensures that resources are allocated efficiently across different user groups and services.
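
Rule-based routing like this can be sketched as an ordered list of predicates where the first match wins. Everything here is hypothetical and for illustration only: the `X-Plan` header (imagined as set by an upstream authentication layer), the pool names, and the rule set.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List


@dataclass
class Request:
    path: str
    headers: Dict[str, str] = field(default_factory=dict)


@dataclass
class Rule:
    name: str
    matches: Callable[[Request], bool]
    pool: str  # server group that should handle matching requests


def route(request: Request, rules: List[Rule], default_pool: str) -> str:
    """Return the pool of the first matching rule, else the default pool."""
    for rule in rules:
        if rule.matches(request):
            return rule.pool
    return default_pool


# Order matters: premium users are matched before path-based rules.
rules = [
    Rule("premium", lambda r: r.headers.get("X-Plan") == "premium", "premium-servers"),
    Rule("api", lambda r: r.path.startswith("/api/"), "api-servers"),
    Rule("static", lambda r: r.path.startswith("/static/"), "static-servers"),
]
```

Within the selected pool, one of the distribution algorithms described earlier would then pick the individual server.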

Scalability in Distributed Systems

In distributed systems, where applications are deployed across multiple locations or cloud environments, load balancers ensure seamless scalability. As demand grows, new servers can be added to the system without disrupting existing operations. The load balancer automatically recognizes these new resources and includes them in its traffic distribution logic. This ability to scale horizontally is essential for modern applications that experience unpredictable or rapidly growing user bases. It allows businesses to expand their infrastructure without major architectural changes.

Performance Tuning and Optimization Strategies

Load balancing is not a one-time setup but an ongoing process that requires tuning and optimization. Administrators often analyze traffic data to adjust load balancing strategies for better performance. For example, if certain servers consistently handle more traffic efficiently, weights can be adjusted to favor them. Similarly, routing algorithms may be changed based on observed patterns. This continuous optimization ensures that the system operates at peak efficiency and adapts to evolving demands over time.

Role in Disaster Recovery Planning

Disaster recovery is a critical aspect of system design, and load balancers play an important role in it. In the event of a major failure such as a data center outage, load balancers can redirect traffic to backup servers located in different regions. This ensures that services remain available even during catastrophic events. By integrating with disaster recovery strategies, load balancers help organizations maintain business continuity and minimize the impact of unexpected failures.

Impact on Global Application Performance

For applications with a global user base, load balancers help ensure consistent performance across different geographical regions. By directing users to the nearest or fastest available server, they reduce latency and improve responsiveness. This is particularly important for services such as online gaming, video streaming, and financial platforms, where even small delays can significantly affect user experience. Geographic distribution combined with intelligent routing creates a smooth and efficient global network.

Automation and Self-Healing Systems

Modern load balancing systems are increasingly automated and capable of self-healing. They can detect issues, isolate failing components, and reroute traffic without human intervention. This reduces operational overhead and improves system reliability. Automation also allows systems to respond faster than manual processes, often moving traffic away from a failing component within seconds instead of waiting for an operator to notice. Self-healing capabilities are a key feature of advanced infrastructure designs, especially in cloud-native environments.

Cost Control Through Efficient Traffic Management

Efficient load balancing also contributes to better cost management. By optimizing how resources are used, organizations can avoid unnecessary infrastructure expansion. Instead of overloading a few servers or maintaining excess capacity, load balancers ensure that existing resources are fully utilized. In cloud environments where billing is based on usage, this efficiency directly translates into cost savings. Over time, proper load balancing can significantly reduce operational expenses while maintaining high performance.

Evolution of Load Balancing Technologies

Load balancing technology has evolved significantly over the years. Early systems were simple and rule-based, while modern systems are intelligent, adaptive, and integrated with cloud ecosystems. Today’s load balancers can handle complex workloads, support hybrid environments, and integrate with container orchestration platforms. This evolution reflects the growing complexity of modern applications and the need for more advanced traffic management solutions.

Load Balancer in Cloud-Native Environments

In cloud-native systems, applications are designed to run in highly dynamic environments where resources can be created, destroyed, or modified at any time. Load balancers are essential in such setups because they automatically adjust to these changes without requiring manual intervention. When new instances of an application are launched, the load balancer detects them and begins routing traffic accordingly. Similarly, when instances are removed or replaced, it ensures that no requests are sent to unavailable resources. This flexibility allows cloud applications to remain stable even when the underlying infrastructure is constantly changing. It also enables organizations to deploy updates and scale services seamlessly without downtime.

Integration with Container Orchestration Systems

Modern applications often rely on container technologies where services are packaged into lightweight, portable units. In such environments, load balancers work closely with orchestration systems to manage traffic distribution across container clusters. Since containers can be created or terminated within seconds, the load balancer must continuously update its routing logic. It ensures that traffic is always directed to active containers while ignoring those that are no longer available. This tight integration allows applications to scale rapidly and efficiently while maintaining consistent performance.

Load Balancer and Edge Computing

Edge computing brings processing closer to the user by placing servers at the edge of the network, near data sources or end users. Load balancers play an important role in managing traffic in these distributed environments. They help decide whether a request should be processed locally at the edge or sent to a central data center. This decision is based on factors such as latency, server availability, and processing requirements. By intelligently routing traffic, load balancers reduce delays and improve real-time responsiveness, which is especially important for applications like IoT systems, autonomous devices, and live data processing.

Smart Routing Based on User Behavior

Advanced load balancing systems are capable of analyzing user behavior patterns to improve routing decisions. Instead of treating all requests equally, they can prioritize traffic based on usage trends, time of day, or application type. For example, frequently accessed services may be directed to high-performance servers, while less critical tasks are routed elsewhere. This behavioral awareness allows systems to optimize performance in a more intelligent and user-focused way. Over time, this leads to smoother experiences and better resource utilization.

Role in Hybrid Infrastructure Environments

Many organizations today use hybrid infrastructure models that combine on-premises servers with cloud-based resources. Load balancers help unify these environments by distributing traffic across both local and remote systems. They ensure that users get a consistent experience regardless of where the underlying processing is happening. This hybrid approach provides flexibility, allowing businesses to keep sensitive data on-premises while still leveraging the scalability of the cloud. Load balancers act as the bridge that connects these two environments efficiently.

Traffic Prioritization and Quality of Service

Not all network traffic is equal, and load balancers can prioritize certain types of requests over others. This concept, known as quality of service, ensures that critical operations receive the necessary resources first. For instance, real-time communication or payment processing requests may be given higher priority compared to background tasks. By managing priorities effectively, load balancers help maintain system stability even during peak load conditions. This ensures that essential services remain responsive at all times.
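
Prioritization can be sketched with a priority queue: queued requests are dispatched lowest priority number first, and a counter preserves arrival order among equal priorities. The traffic classes and their numbers below are hypothetical examples matching the paragraph above.

```python
import heapq
import itertools


class PriorityDispatcher:
    """Dispatches queued requests highest-priority (lowest number) first."""

    def __init__(self):
        self._heap = []
        self._counter = itertools.count()  # tie-breaker: FIFO within a priority

    def submit(self, request, priority):
        heapq.heappush(self._heap, (priority, next(self._counter), request))

    def next_request(self):
        if not self._heap:
            return None
        _, _, request = heapq.heappop(self._heap)
        return request


# Hypothetical traffic classes: smaller number = more important.
PRIORITY = {"payment": 0, "realtime": 1, "background": 2}
```

Strict priority like this can starve background work during sustained peaks, so real systems often reserve a minimum share of capacity for lower classes.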

Role in Real-Time Applications

Real-time applications such as online gaming, video conferencing, and financial trading platforms require extremely low latency and high reliability. Load balancers are crucial in these systems because they ensure that requests are processed as quickly as possible. They continuously monitor server performance and route traffic to the fastest available option. Any delay or inefficiency can significantly impact user experience, so precise load distribution is essential. This makes load balancing a foundational technology in real-time digital ecosystems.

Load Balancer Security Enhancements in Modern Systems

Security has become a major focus in modern infrastructure, and load balancers contribute significantly in this area. They help protect backend systems by filtering traffic and blocking suspicious requests before they reach internal servers. Some advanced load balancers also terminate TLS, decrypting HTTPS traffic themselves so that communication with users stays encrypted while the cryptographic work is offloaded from the backend servers. Additionally, they can limit excessive requests from a single source, helping prevent abuse and protecting systems from overload attacks. This combination of traffic control and security enforcement makes them a vital defense layer.

Adaptive Load Distribution in High-Traffic Scenarios

During periods of extremely high traffic, such as product launches or global events, systems can experience sudden surges in demand. Load balancers respond by dynamically adjusting traffic distribution strategies to maintain stability. They may shift from simple routing methods to more advanced algorithms that consider real-time performance metrics. In some cases, they can work alongside auto-scaling systems to add new resources automatically. This adaptive behavior ensures that applications remain stable even under extreme conditions.

Data-Driven Decision Making in Load Balancing

Modern load balancers rely heavily on data to make intelligent routing decisions. They collect and analyze metrics such as response time, error rates, server load, and network conditions. This data-driven approach allows them to continuously refine their performance. Instead of relying on static rules, they evolve based on real system behavior. Over time, this leads to more accurate and efficient traffic distribution, improving both reliability and user experience.

Cross-Region Failover Strategies

In globally distributed systems, load balancers play a key role in cross-region failover. If an entire region becomes unavailable due to network issues or disasters, a global load-balancing layer (commonly DNS-based or anycast routing) redirects traffic to another healthy region. This lets users keep accessing services with little or no interruption. Cross-region failover adds an additional layer of resilience, making systems more robust against large-scale disruptions. It is especially important for critical applications that require continuous availability worldwide.
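
The failover decision itself can be small: walk a client's regions in order of preference and take the first one currently reported healthy. The region names, the `PREFERENCES` table, and the `health` map (which in practice would be fed by health checks) are hypothetical.

```python
# Hypothetical preference order per client geography, nearest region first.
PREFERENCES = {
    "europe": ["eu-west", "us-east", "ap-south"],
    "north-america": ["us-east", "eu-west", "ap-south"],
}


def pick_region(client_geo: str, health: dict) -> str:
    """Return the most preferred region that is currently healthy."""
    for region in PREFERENCES[client_geo]:
        if health.get(region, False):
            return region
    raise RuntimeError("no healthy region available")


health = {"eu-west": True, "us-east": True, "ap-south": True}
print(pick_region("europe", health))  # eu-west
health["eu-west"] = False             # simulate a regional outage
print(pick_region("europe", health))  # us-east
```

A real setup would usually require several consecutive failed checks before declaring a region down, so that a brief network blip does not trigger a costly failover.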

Load Balancing in Serverless Architectures

Serverless computing removes the need for traditional server management, but load balancing still plays a role in distributing function executions. In these environments, the platform's request-routing layer accepts incoming requests and distributes them across multiple function instances. Because serverless systems scale automatically with demand, this routing layer aims to send each request to an available instance quickly, although a request that requires starting a new instance may incur a cold-start delay. This allows developers to focus on application logic while the infrastructure handles scaling and distribution.
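
A simplified model of this routing is a pool that hands each invocation to an idle warm instance and starts a new instance only when none is free, up to a concurrency limit. This is not how any particular serverless platform is implemented; `FunctionPool`, `max_instances`, and the instance names are hypothetical.

```python
class FunctionPool:
    """Sketch: route invocations to warm instances, cold-starting new ones up to a limit."""

    def __init__(self, max_instances):
        self.max_instances = max_instances
        self.idle = []   # warm instances waiting for work
        self.busy = set()
        self.started = 0

    def dispatch(self):
        if self.idle:
            instance = self.idle.pop()             # reuse a warm instance: no cold start
        elif self.started < self.max_instances:
            self.started += 1
            instance = f"instance-{self.started}"  # scale out: cold start a new instance
        else:
            return None                            # at the limit: caller must queue or throttle
        self.busy.add(instance)
        return instance

    def complete(self, instance):
        self.busy.remove(instance)
        self.idle.append(instance)                 # instance stays warm for the next request


pool = FunctionPool(max_instances=2)
first, second = pool.dispatch(), pool.dispatch()
print(first, second, pool.dispatch())  # instance-1 instance-2 None
pool.complete(first)
print(pool.dispatch())                 # instance-1
```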

Continuous Improvement Through Feedback Loops

Modern load balancing systems often include feedback loops that help improve performance over time. These loops collect performance data, analyze system behavior, and adjust routing strategies accordingly. This continuous improvement cycle ensures that the system becomes more efficient as it operates. It also allows load balancers to adapt to changing workloads, application updates, and user behavior patterns without requiring manual reconfiguration.
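
A feedback loop of this kind can be sketched as a function run once per monitoring interval: it compares each backend's observed latency with the average and nudges routing weights up for faster backends and down for slower ones. The update rule and the `step` and `floor` parameters are assumptions chosen for clarity, not a standard algorithm.

```python
def adjust_weights(weights, latencies_ms, step=0.1, floor=0.05):
    """One feedback iteration: shift weight toward backends faster than average."""
    avg = sum(latencies_ms.values()) / len(latencies_ms)
    updated = {}
    for name, weight in weights.items():
        # Positive when faster than average, negative when slower.
        relative_speed = (avg - latencies_ms[name]) / avg
        # Keep a minimum weight so a slow backend still gets traffic and can be re-measured.
        updated[name] = max(floor, weight * (1 + step * relative_speed))
    total = sum(updated.values())
    return {name: w / total for name, w in updated.items()}  # renormalize to sum to 1


weights = {"a": 0.5, "b": 0.5}
for _ in range(10):  # e.g. one iteration per monitoring interval
    weights = adjust_weights(weights, {"a": 50, "b": 150})
print(weights)  # "a" now carries more than half of the traffic
```

Keeping `step` small makes the loop converge gradually, which avoids swinging all traffic onto one backend after a single noisy measurement.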

Long-Term Impact on Digital Infrastructure Stability

The long-term impact of load balancers on digital infrastructure is significant. They provide the foundation for stable, scalable, and resilient systems that can handle increasing global demand. Without load balancing, modern applications would struggle to maintain performance, especially under heavy or unpredictable workloads. By distributing traffic intelligently, they ensure that systems remain operational, responsive, and efficient over time. Their role continues to grow as digital ecosystems become more complex and interconnected, making them an indispensable part of modern computing architecture.

Load Balancer in High-Availability Architecture Design

High-availability architecture is designed to ensure that applications remain accessible even in the presence of failures, and load balancers are a central component of this design. They distribute incoming requests across multiple servers that are often placed in different zones or data centers. This separation ensures that even if one location experiences an outage, the system as a whole continues to function. Load balancers constantly monitor all available resources and intelligently reroute traffic away from problematic areas. This creates a stable environment where downtime is minimized and users experience uninterrupted access to services regardless of backend issues.

Load Balancer Role in Traffic Isolation and Segmentation

In complex systems, it is often necessary to isolate different types of traffic for better performance and control. Load balancers help achieve this by segmenting traffic based on rules such as user type, request type, or service category. For example, internal administrative traffic can be separated from external user traffic, ensuring that critical operations are not affected by public demand spikes. This segmentation improves system efficiency and also enhances security by reducing unnecessary exposure between different parts of the system. It allows organizations to maintain structured and controlled communication flows across their infrastructure.
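
Rule-based segmentation can be expressed as an ordered list of match rules, each mapping a class of traffic to its own backend pool. The path prefixes, pool names, and the `client_is_internal` flag (which a real balancer might derive from the source network) are hypothetical.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Rule:
    path_prefix: str
    pool: str
    internal_only: bool = False


# First match wins, so more specific prefixes must come first.
RULES = [
    Rule("/admin/", "admin-pool", internal_only=True),
    Rule("/api/", "api-pool"),
    Rule("/", "web-pool"),
]


def route(path: str, client_is_internal: bool) -> Optional[str]:
    """Return the backend pool for a request, or None if it must be rejected."""
    for rule in RULES:
        if path.startswith(rule.path_prefix):
            if rule.internal_only and not client_is_internal:
                return None  # administrative traffic is never exposed to external clients
            return rule.pool
    return None


print(route("/admin/users", client_is_internal=True))   # admin-pool
print(route("/admin/users", client_is_internal=False))  # None
print(route("/api/orders", client_is_internal=False))   # api-pool
```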

Load Balancer and System Observability

Observability refers to the ability to understand the internal state of a system based on its outputs, and load balancers contribute significantly to this capability. They generate valuable data such as request counts, response times, error rates, and server performance metrics. This information helps system administrators gain insights into how applications are behaving under different conditions. By analyzing this data, teams can detect performance bottlenecks, identify failing components, and optimize system behavior. Load balancers therefore act not only as traffic managers but also as key sources of operational intelligence.
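
As a sketch of the data a load balancer can expose, the class below counts requests and server errors per backend and reports an error rate and a 95th-percentile latency using the nearest-rank method. Treating HTTP status 500 and above as errors, and the class and method names, are assumptions for the example.

```python
import math
from collections import defaultdict


def percentile(values, pct):
    """Nearest-rank percentile: smallest value with at least pct% of samples at or below it."""
    ordered = sorted(values)
    rank = math.ceil(pct * len(ordered) / 100)
    return ordered[max(rank, 1) - 1]


class BackendStats:
    def __init__(self):
        self.requests = defaultdict(int)
        self.errors = defaultdict(int)
        self.latencies = defaultdict(list)

    def observe(self, backend, latency_ms, status):
        self.requests[backend] += 1
        if status >= 500:  # count server-side failures only
            self.errors[backend] += 1
        self.latencies[backend].append(latency_ms)

    def report(self, backend):
        if self.requests[backend] == 0:
            return None  # nothing observed yet
        return {
            "requests": self.requests[backend],
            "error_rate": self.errors[backend] / self.requests[backend],
            "p95_ms": percentile(self.latencies[backend], 95),
        }


stats = BackendStats()
for i in range(1, 21):
    stats.observe("app-1", latency_ms=10 * i, status=503 if i == 20 else 200)
print(stats.report("app-1"))  # {'requests': 20, 'error_rate': 0.05, 'p95_ms': 190}
```

Storing every latency sample keeps the sketch simple; a long-running balancer would more likely keep a fixed-size histogram per backend.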

Load Balancing in Multi-Tier Application Systems

Many modern applications are built using multi-tier architecture, where different layers handle different responsibilities such as presentation, business logic, and data storage. Load balancers are often placed between these tiers to keep communication between them smooth. For example, one load balancer may manage traffic between users and application servers, while another handles communication between application servers and database clusters. This layered approach helps each tier operate efficiently and protects it from overload caused by other layers in the system.

Adaptive Recovery and Self-Healing Behavior

Self-healing systems are designed to recover automatically from failures without human intervention, and load balancers are a key enabler of this capability. When a server fails its health checks, the load balancer stops sending traffic to it and redistributes requests to healthy servers. Once the failed server recovers and passes its health checks again, it is automatically reintroduced into rotation. This continuous cycle of detection, isolation, and reintegration keeps the system stable and self-sustaining, even in unpredictable conditions.
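
The detection, isolation, and reintegration cycle is commonly driven by consecutive-check thresholds, similar in spirit to the `rise` and `fall` health-check settings in HAProxy. The sketch below is a simplified model; the class name and the default thresholds are illustrative assumptions.

```python
class HealthTracker:
    """Sketch: remove a server after `fall` consecutive failed checks, restore it after `rise` passes."""

    def __init__(self, servers, fall=3, rise=2):
        self.fall, self.rise = fall, rise
        self.healthy = {s: True for s in servers}
        self.streak = {s: 0 for s in servers}  # consecutive results that contradict current state

    def record_check(self, server, passed):
        if passed == self.healthy[server]:
            self.streak[server] = 0            # result agrees with current state: reset
            return
        self.streak[server] += 1
        if self.streak[server] >= (self.rise if passed else self.fall):
            self.healthy[server] = passed      # False = isolate, True = reintegrate
            self.streak[server] = 0

    def in_rotation(self):
        return [s for s, ok in self.healthy.items() if ok]


tracker = HealthTracker(["web-1", "web-2"])
for _ in range(3):
    tracker.record_check("web-1", passed=False)
print(tracker.in_rotation())  # ['web-2']
for _ in range(2):
    tracker.record_check("web-1", passed=True)
print(tracker.in_rotation())  # ['web-1', 'web-2']
```

Requiring several consecutive results in each direction keeps a single dropped probe from evicting a server, and keeps a flapping server from being readmitted too early.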

Load Balancer Impact on User-Centric Performance Optimization

User experience is one of the most important aspects of modern applications, and load balancers play a direct role in improving it. By reducing latency, preventing server overload, and ensuring consistent response times, they create a smoother and more reliable experience for users. Load balancers can also prioritize traffic for premium users or time-sensitive requests, ensuring that critical interactions are handled quickly. This user-focused optimization helps organizations maintain satisfaction and engagement across their platforms.
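
Prioritization can be sketched as a priority queue in front of the backends, where critical and premium requests are dequeued before standard ones and arrival order is preserved within each tier. The tier names and their ordering are hypothetical.

```python
import heapq
import itertools


class PriorityDispatcher:
    """Sketch: hand requests to backends in priority order, FIFO within a tier."""

    PRIORITY = {"critical": 0, "premium": 1, "standard": 2}  # lower number = served first

    def __init__(self):
        self.heap = []
        self.seq = itertools.count()  # tie-breaker preserves arrival order within a tier

    def submit(self, request_id, tier):
        heapq.heappush(self.heap, (self.PRIORITY[tier], next(self.seq), request_id))

    def next_request(self):
        return heapq.heappop(self.heap)[2] if self.heap else None


d = PriorityDispatcher()
for request_id, tier in [("r1", "standard"), ("r2", "premium"), ("r3", "critical"), ("r4", "premium")]:
    d.submit(request_id, tier)
print([d.next_request() for _ in range(4)])  # ['r3', 'r2', 'r4', 'r1']
```

Strict priority like this can starve standard traffic during sustained premium load; weighted fair queuing is a common alternative when that matters.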

Load Balancing in Distributed Database Systems

In distributed database systems, load balancers help manage query distribution across multiple database nodes. Instead of sending all queries to a single database server, they spread requests across nodes to improve performance and reduce contention; in primary-replica setups, this usually means sending reads to replicas while writes go to the primary. This approach speeds up query processing and helps prevent any one node from becoming overloaded. It also improves fault tolerance, as queries can be rerouted to other nodes if one becomes unavailable. This makes large-scale data management more efficient and reliable.
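
A common form of this is read/write splitting in a primary-replica setup. The sketch below assumes that statements starting with `SELECT` are reads, a deliberate simplification that ignores cases such as `SELECT ... FOR UPDATE` and replication lag; the class name and node names are hypothetical.

```python
import itertools


class DatabaseRouter:
    """Sketch: writes go to the primary, reads rotate across healthy replicas."""

    def __init__(self, primary, replicas):
        self.primary = primary
        self.replicas = list(replicas)
        self.down = set()  # replicas currently failing health checks
        self._rotation = itertools.cycle(self.replicas)

    def route(self, sql):
        if not sql.lstrip().upper().startswith("SELECT"):
            return self.primary  # writes (and anything unrecognized) go to the primary
        for _ in range(len(self.replicas)):
            node = next(self._rotation)
            if node not in self.down:
                return node      # next healthy replica in round-robin order
        return self.primary      # no healthy replica: fall back to the primary


router = DatabaseRouter("db-primary", ["db-replica-1", "db-replica-2"])
print(router.route("SELECT * FROM orders"))                         # db-replica-1
print(router.route("UPDATE orders SET status = 'shipped'"))        # db-primary
router.down.add("db-replica-2")
print(router.route("select 1"))                                     # db-replica-1
```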

Importance of Load Balancers in Modern Digital Ecosystems

As digital ecosystems become more complex, involving cloud platforms, mobile applications, APIs, and global users, load balancers serve as a central coordination layer. They ensure that all components work together smoothly by managing how traffic flows between them. Without load balancers, systems would struggle to scale, individual servers would be far more likely to become overloaded, and reliability would decrease. Their role is not limited to traffic distribution but extends to performance optimization, security enforcement, and system stability.

Load Balancer Evolution with Artificial Intelligence Integration

The integration of artificial intelligence into load balancing systems is transforming how traffic is managed. AI-powered load balancers can analyze historical data, predict traffic surges, and adjust routing strategies in advance. This predictive capability allows systems to prepare for demand before it occurs, reducing strain and improving performance. Machine learning models also help identify patterns that traditional systems might miss, resulting in smarter and more efficient load distribution decisions over time.
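
The predictive step can be illustrated without machine learning by a classical forecasting method, Holt's linear exponential smoothing, which tracks a traffic level and trend and extrapolates ahead; the forecast is then converted into a server count to provision in advance. This is a deliberately simple stand-in for the ML models described above, and the smoothing factors, per-server capacity, 20% headroom, and traffic figures are all assumptions.

```python
import math


def holt_forecast(series, alpha=0.5, beta=0.3, steps_ahead=1):
    """Holt's linear exponential smoothing; needs at least two observations."""
    level, trend = series[0], series[1] - series[0]
    for value in series[1:]:
        prev_level = level
        level = alpha * value + (1 - alpha) * (level + trend)     # smoothed current value
        trend = beta * (level - prev_level) + (1 - beta) * trend  # smoothed growth per interval
    return level + steps_ahead * trend


def servers_needed(forecast_rps, per_server_rps, headroom=1.2):
    """Servers to provision ahead of time, with spare capacity for forecast error."""
    return math.ceil(forecast_rps * headroom / per_server_rps)


requests_per_sec = [900, 1000, 1150, 1300, 1400]  # hypothetical recent traffic per interval
forecast = holt_forecast(requests_per_sec)
print(round(forecast), servers_needed(forecast, per_server_rps=250))
```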

Conclusion

Load balancers are a fundamental component of modern computing infrastructure, playing a critical role in ensuring that applications remain fast, reliable, scalable, and secure. They distribute traffic intelligently across multiple servers, preventing overload and ensuring that no individual server is a single point of failure. Beyond simple request routing, they contribute to performance optimization, system monitoring, security enforcement, and disaster recovery. In today’s highly connected digital world, where applications must serve millions of users simultaneously, load balancers provide the stability and flexibility needed to maintain consistent performance. As technology continues to evolve with cloud computing, microservices, and artificial intelligence, the importance of load balancers will only grow, making them an essential backbone of modern digital systems.