Social engineering attacks are among the most dangerous cybersecurity threats because they focus on manipulating human behavior instead of breaking technical defenses. Attackers exploit natural human tendencies such as trust, curiosity, fear, urgency, and helpfulness. Unlike traditional hacking methods that rely on software vulnerabilities, social engineering depends on psychological manipulation, making it harder to detect and prevent without awareness and training.

These attacks can occur in both physical and digital environments, and they often serve as the first step in larger cyber intrusions. Understanding how these methods work is essential for building strong security habits in personal and professional environments.

Tailgating in Physical Security

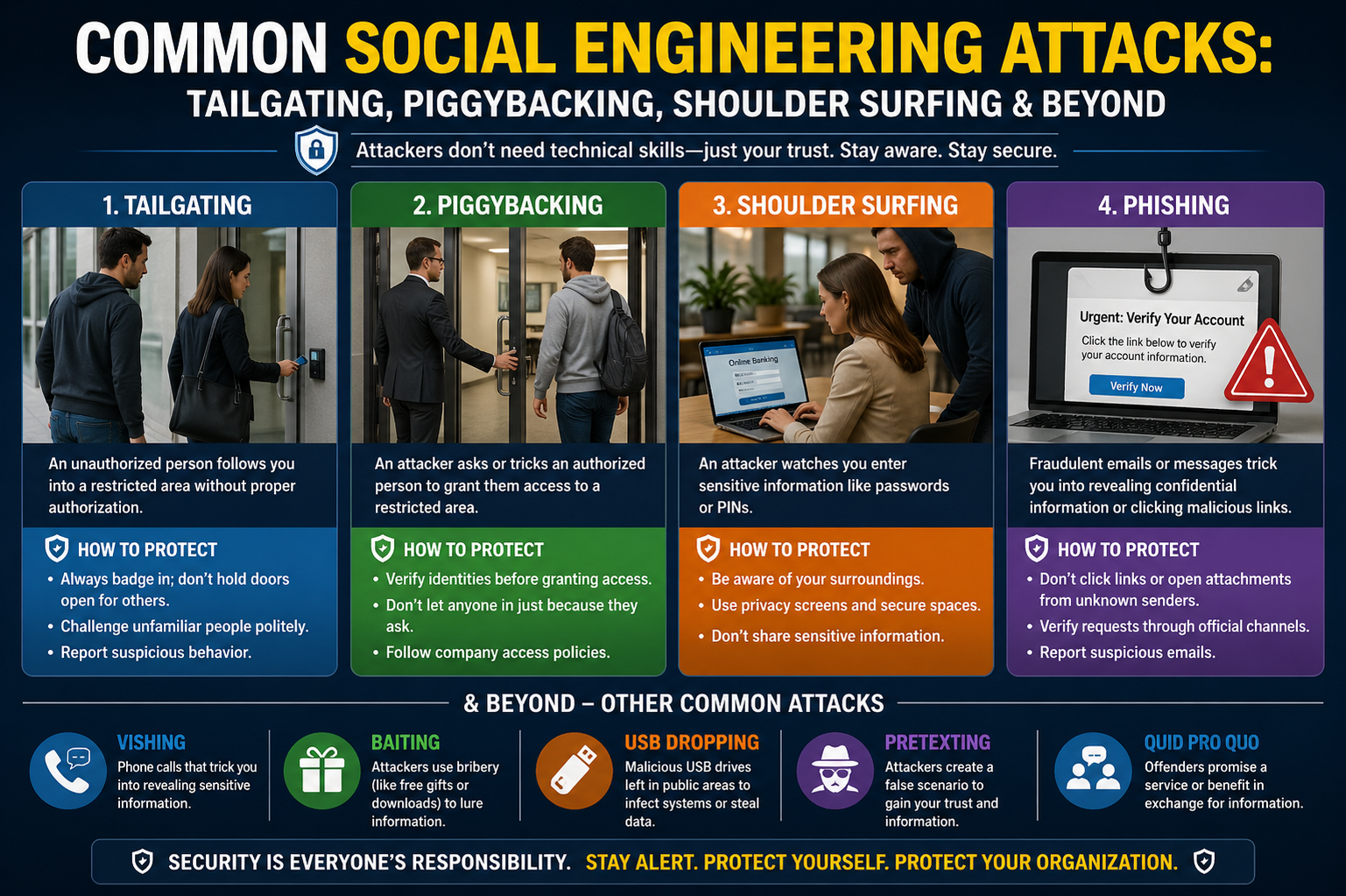

Tailgating is a physical security breach in which an unauthorized individual gains access to a restricted area by closely following someone who is authorized. It usually happens in workplaces, data centers, or secured buildings that rely on access control systems such as keycards or biometric scanners.

Attackers rely on timing and human politeness. For example, when an employee opens a secure door, the attacker walks in immediately behind them without using credentials. In many cases, the authorized person does not question the presence of the stranger, assuming they also belong there. This small lapse in attention can lead to serious security breaches.

Tailgating is especially effective in environments where employees are not trained to challenge unfamiliar individuals. Even simple habits like holding doors open for others can unintentionally create vulnerabilities if security awareness is low.

Piggybacking and Social Compliance

Piggybacking is closely related to tailgating but involves a higher level of social interaction. In this case, the attacker may directly request permission to enter a secure area, often using a believable excuse. For instance, they might claim to have forgotten their access card or pretend to be a delivery person or technician.

Because people are naturally inclined to be helpful, they may allow the individual to enter without verifying their identity. Unlike tailgating, piggybacking often involves active consent from the victim, even though it is given under false pretenses.

This method demonstrates how social pressure and courtesy can override security protocols when individuals are not cautious.

Shoulder Surfing and Visual Eavesdropping

Shoulder surfing is a technique where an attacker observes someone entering sensitive information by watching over their shoulder or from a nearby position. This can occur in crowded environments such as offices, airports, cafes, or public transportation.

The attacker may look for passwords, PIN codes, credit card details, or confidential messages displayed on screens. In some cases, they may use cameras or recording devices to capture information without being noticed.

This attack highlights the importance of physical awareness in addition to digital security. Even a strong password offers no protection once someone has watched it being typed.

Phishing Attacks and Deceptive Communication

Phishing is one of the most widespread forms of social engineering. It involves sending fraudulent messages that appear to come from trusted sources such as banks, companies, or colleagues. These messages often encourage the victim to click on a link, download an attachment, or provide sensitive information.

The success of phishing lies in creating urgency or fear. For example, a message might warn that an account will be suspended unless immediate action is taken. This pressure causes individuals to act without verifying the legitimacy of the request.

More targeted versions of phishing also exist. In spear phishing, attackers personalize messages using specific information about the victim, which increases credibility and makes detection more difficult.
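The urgency and fear cues described above can be approximated with a simple text check. The following is a minimal sketch, not a production filter: the phrase list, the function names, and the threshold of two matches are all assumptions chosen for illustration, and real mail filters rely on far richer signals.

```python
import re

# Hypothetical phrase list for illustration only.
URGENCY_PATTERNS = [
    r"\bimmediate(ly)?\b",
    r"\burgent(ly)?\b",
    r"\bwithin \d+ (hours?|minutes?)\b",
    r"\b(account|access) (will be|has been) (suspended|locked|closed)\b",
    r"\bverify your (account|identity|password)\b",
]


def urgency_signals(message: str) -> list[str]:
    """Return every pattern that matches the message (case-insensitive)."""
    return [p for p in URGENCY_PATTERNS if re.search(p, message, re.IGNORECASE)]


def looks_pressuring(message: str, threshold: int = 2) -> bool:
    """Flag a message when at least `threshold` urgency patterns match."""
    return len(urgency_signals(message)) >= threshold


if __name__ == "__main__":
    sample = "Your account will be suspended unless you take immediate action."
    print(looks_pressuring(sample))
```

A flag from a check like this is only a prompt to verify the request through another channel. A carefully written spear phishing message may contain none of these phrases.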

Pretexting and Fabricated Identity

Pretexting involves creating a false identity or scenario to trick individuals into revealing confidential information. The attacker may impersonate someone in authority, such as a manager, IT support staff, or government official.

They build trust by presenting a believable story and asking for information under the guise of verification or assistance. For example, an attacker might claim they are resetting a system and need login credentials to proceed.

The effectiveness of pretexting depends on how convincing the fabricated role is and how willing the victim is to comply with perceived authority.

Baiting Techniques and Temptation-Based Attacks

Baiting relies on offering something attractive to lure victims into a trap. This could include free downloads, movies, software, or even physical devices like infected USB drives.

When the victim interacts with the bait, malicious software may be installed on their system, or sensitive data may be exposed. The psychological trigger in baiting is curiosity or greed, which encourages individuals to take risks they normally would avoid.

This method is particularly dangerous because it often appears harmless or beneficial at first glance.

Quid Pro Quo Social Engineering

Quid pro quo attacks involve offering a service or benefit in exchange for information. Attackers may pose as technical support agents offering help with system issues. In return, they request login credentials or remote access to the device.

The victim believes they are receiving assistance, while in reality, they are giving away control or sensitive data. This method relies heavily on trust and the perception of mutual benefit.

It is especially effective in corporate environments where employees frequently interact with support teams.

Vishing and Voice-Based Manipulation

Vishing, or voice phishing, is conducted over phone calls. Attackers impersonate trusted entities such as banks, service providers, or internal departments. They use persuasive communication skills to convince victims to share personal information or perform actions like transferring money.

Because phone conversations feel more personal and immediate, victims are often less cautious than they would be with written communication. Attackers may also spoof caller ID so that the call appears to come from a legitimate number.

Smishing and Mobile-Based Attacks

Smishing is a variation of phishing that occurs through SMS messages. These messages often contain links or instructions that lead to malicious websites or downloads. They may claim urgent issues such as package delivery problems, account verification, or security alerts.

Mobile users are particularly vulnerable because messages are often read quickly and on the go, reducing the likelihood of careful verification.

Dumpster Diving and Physical Data Theft

Dumpster diving involves searching through trash or discarded materials to find sensitive information. Attackers may look for printed documents, old devices, notes, or storage media that were improperly disposed of.

Organizations that fail to securely destroy physical documents or data storage devices can unintentionally expose confidential information through this method.

Proper disposal practices, such as shredding documents and securely wiping digital storage, are essential to prevent this type of attack.

Pharming and Digital Redirection Attacks

Pharming is a more advanced technique in which attackers redirect users from legitimate websites to fake ones without their knowledge. This is often achieved by compromising DNS resolution (for example, by poisoning a DNS cache) or by modifying the hosts file on the victim's device.

Even when a user enters the correct website address, they may be silently redirected to a malicious site designed to steal login credentials or personal data.

Because the user does not realize anything is wrong, pharming can be extremely difficult to detect without technical safeguards.
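One of the technical safeguards against local redirection is checking whether a sensitive domain has been pinned to an unexpected address in the hosts file. The sketch below parses hosts-file text passed in as a string. The function name, the sample content, and the watched domain are hypothetical, and the check does not cover DNS-level pharming.

```python
def find_host_overrides(hosts_text: str, watched_domains: set[str]) -> dict[str, str]:
    """Map each watched domain found in hosts-file text to the IP it is pinned to."""
    overrides = {}
    for raw_line in hosts_text.splitlines():
        line = raw_line.split("#", 1)[0].strip()  # drop comments and whitespace
        if not line:
            continue
        ip, *names = line.split()  # hosts format: address followed by hostnames
        for name in names:
            if name.lower() in watched_domains:
                overrides[name.lower()] = ip
    return overrides


# Sample content for illustration; 203.0.113.0/24 is a documentation-only range.
SAMPLE_HOSTS = """\
127.0.0.1   localhost
# normal entries above
203.0.113.7 bank.example www.bank.example
"""

if __name__ == "__main__":
    print(find_host_overrides(SAMPLE_HOSTS, {"bank.example"}))
```

Any entry reported for a banking or login domain deserves investigation, since legitimate software rarely needs to override such names locally.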

Impersonation and Authority Exploitation

Impersonation attacks involve pretending to be someone else to gain trust and access. Attackers may pose as employees, contractors, or officials. They often use uniforms, fake IDs, or convincing communication styles to strengthen credibility.

The success of impersonation depends on how easily authority is accepted without verification. In environments where questioning identity is discouraged, this attack becomes particularly effective.

Watering Hole Attacks and Targeted Compromise

In watering hole attacks, cybercriminals compromise websites that are frequently visited by a specific group of users. Instead of directly attacking individuals, they infect a trusted platform and wait for victims to visit it.

When users access the compromised site, malicious code may be executed on their devices, leading to data theft or system compromise.

This method is effective because it exploits trust in familiar online environments.

Business Email Compromise and Corporate Fraud

Business email compromise involves attackers gaining access to corporate email accounts or spoofing them to send fraudulent instructions. These emails may request financial transfers, sensitive data, or changes in payment details.

Because the messages appear to come from trusted executives or partners, employees may comply without verifying authenticity. This can lead to significant financial losses for organizations.
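Spoofed messages often come from a domain that differs from the real one by a single character. As a rough illustration, the sketch below compares a sender's domain against a trusted list using Python's standard `difflib`. The trusted domain, the 0.85 similarity threshold, and the function names are assumptions, and this check does not replace sender authentication or out-of-band confirmation of payment requests.

```python
from difflib import SequenceMatcher
from email.utils import parseaddr

# Hypothetical trusted list; a real deployment would load this from configuration.
TRUSTED_DOMAINS = {"example.com"}


def sender_domain(from_header: str) -> str:
    """Extract the lowercase domain from a From header value."""
    _, address = parseaddr(from_header)
    return address.rpartition("@")[2].lower()


def is_lookalike(domain: str, threshold: float = 0.85) -> bool:
    """True if the domain is not trusted but closely resembles a trusted one."""
    if domain in TRUSTED_DOMAINS:
        return False
    return any(
        SequenceMatcher(None, domain, trusted).ratio() >= threshold
        for trusted in TRUSTED_DOMAINS
    )


if __name__ == "__main__":
    header = '"Jane Doe (CEO)" <jane@examp1e.com>'
    print(sender_domain(header), is_lookalike(sender_domain(header)))
```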

Deepfake-Based Social Engineering

Advancements in artificial intelligence have introduced deepfake-based attacks, where audio or video is manipulated to impersonate real individuals. Attackers can mimic voices or facial expressions to create highly convincing fraudulent communications.

These techniques can be used to authorize transactions, spread misinformation, or manipulate victims emotionally.

As technology evolves, these attacks are becoming more realistic and harder to detect without specialized tools.

Social Engineering Threats

Social engineering attacks continue to evolve as attackers develop more sophisticated psychological and technological methods. While the techniques may vary, they all rely on manipulating human behavior rather than breaking systems directly.

Awareness, skepticism, verification habits, and security training are the most effective defenses. Understanding these attack methods helps individuals and organizations reduce risk and strengthen overall security posture.

Advanced Social Engineering Techniques and Emerging Threats

Social engineering continues to evolve alongside technology, becoming more sophisticated and harder to detect. While basic methods rely on simple deception, advanced techniques combine psychology, digital intelligence gathering, and technical exploitation. These attacks often target organizations with layered strategies, where human error becomes the weakest link.

Modern attackers rarely rely on a single method. Instead, they combine multiple approaches, such as gathering information from social media, impersonating trusted identities, and exploiting security fatigue in users. This layered approach significantly increases success rates.

Open Source Intelligence (OSINT) in Social Engineering

Before launching an attack, many cybercriminals conduct detailed research using publicly available information. This process is known as Open Source Intelligence gathering. Attackers analyze social media profiles, professional networking platforms, company websites, and public records to build a detailed profile of their target.

This information may include job roles, email formats, internal tools, colleagues’ names, and even travel schedules. With this knowledge, attackers can craft highly personalized messages that appear legitimate and relevant.

OSINT makes social engineering attacks more convincing because the victim is more likely to trust messages that reference real details from their professional or personal life.

Reverse Social Engineering

Reverse social engineering is a more indirect method in which the attacker does not initiate contact. Instead, they manipulate the environment so that the victim approaches them voluntarily.

This can be achieved by creating a problem and positioning the attacker as the solution. For example, an attacker might sabotage a system or create confusion in a network, then pose as technical support offering help.

Because the victim initiates contact, trust is often established more easily. The psychological impact of seeking help makes individuals more vulnerable to manipulation.

MFA Fatigue Attacks and Notification Bombing

Multi-factor authentication is designed to enhance security, but attackers have developed methods to exploit user behavior around it. MFA fatigue attacks involve repeatedly sending authentication prompts to a user’s device until they become annoyed or distracted and approve one by mistake.

This technique is also known as notification bombing or push bombing: repeated login attempts with already stolen credentials trigger a flood of approval requests. Eventually, the victim may accept one just to stop the interruptions.

These attacks rely on human frustration and inattentiveness rather than technical weaknesses.
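Although the weakness is human, a service can still notice the pattern. The sketch below counts push prompts per user inside a sliding time window and flags a burst. The class name, the limit of three prompts, and the five-minute window are illustrative assumptions, not recommended values.

```python
from collections import deque


class PushPromptMonitor:
    """Flag a user when too many MFA push prompts arrive inside a sliding window."""

    def __init__(self, max_prompts: int = 3, window_seconds: float = 300.0):
        self.max_prompts = max_prompts
        self.window_seconds = window_seconds
        self._recent = {}  # user -> deque of prompt timestamps (seconds)

    def record_prompt(self, user: str, timestamp: float) -> bool:
        """Record one prompt; return True if the user now exceeds the limit."""
        times = self._recent.setdefault(user, deque())
        times.append(timestamp)
        # Discard prompts that have aged out of the window.
        while times and timestamp - times[0] > self.window_seconds:
            times.popleft()
        return len(times) > self.max_prompts


if __name__ == "__main__":
    monitor = PushPromptMonitor()
    for t in (0, 10, 20, 30):
        print(t, monitor.record_prompt("alice", t))
```

When the monitor fires, a reasonable response is to stop sending prompts and require a stronger factor, rather than leaving the decision to a tired user.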

SIM Swapping and Identity Takeover

SIM swapping is a method where attackers take control of a victim’s phone number by transferring it to a SIM card they control. This is often achieved by tricking mobile service providers into believing the attacker is the legitimate account owner.

Once successful, the attacker can intercept calls and messages, including one-time passwords used for account verification. This allows them to access banking accounts, email, and other sensitive services.

SIM swapping is particularly dangerous because it defeats SMS-based verification: the one-time codes meant for the victim are delivered to the attacker instead.

Insider Threats and Internal Manipulation

Not all social engineering attacks come from external actors. Insider threats occur when employees or contractors misuse their access or are manipulated by external attackers into revealing sensitive information.

In some cases, insiders may be coerced, bribed, or socially engineered into providing access or data. Because they already have legitimate access, their actions are harder to detect.

Insider threats are especially damaging because they bypass many external security controls.

Psychological Principles Behind Social Engineering

Social engineering attacks are highly effective because they exploit fundamental human psychological tendencies. Attackers often rely on principles such as authority, urgency, scarcity, trust, and reciprocity.

People tend to comply with requests that appear to come from authority figures. They also react quickly to urgent situations, sometimes without verifying authenticity. Similarly, when something appears rare or limited, individuals may act impulsively.

Attackers carefully design messages to trigger these emotional responses, reducing the victim’s ability to think critically.

Security Fatigue and User Overload

In modern digital environments, users are constantly exposed to security prompts, password changes, verification requests, and alerts. Over time, this leads to security fatigue, where users become less attentive and more likely to bypass warnings.

Attackers take advantage of this exhaustion by blending malicious prompts into the normal flow of notifications. When users are overwhelmed, they may approve requests without proper verification.

This highlights a key challenge in cybersecurity: balancing security with usability.

Social Media Exploitation and Behavioral Profiling

Social media platforms are rich sources of personal and professional information. Attackers analyze posts, connections, check-ins, and interactions to understand a target’s habits, interests, and relationships.

This allows them to craft believable messages that align with the victim’s lifestyle or current activities. For example, referencing a recent event, job change, or travel plan can significantly increase credibility.

Oversharing online often increases vulnerability to targeted social engineering attacks.

Fake Profiles and Identity Fabrication

Attackers frequently create fake online identities to build trust over time. These profiles may appear as colleagues, recruiters, or industry professionals. Over days or weeks, they engage with the victim, slowly building rapport.

Once trust is established, the attacker may request sensitive information or direct the victim to malicious links. Because the relationship feels familiar, victims are less likely to question the request.

This long-term manipulation strategy is particularly effective in professional networking environments.

Deep Social Engineering in Corporate Environments

In organizational settings, attackers often conduct multi-step campaigns targeting employees at different levels. They may start with low-level staff to gather information and gradually move toward higher-value targets.

This approach, sometimes called a “chain attack,” allows them to map internal structures, understand workflows, and identify key decision-makers.

Once enough information is gathered, attackers can impersonate internal roles more convincingly.

Voice Manipulation and AI-Based Impersonation

Advances in artificial intelligence have made it possible to replicate human voices with high accuracy. Attackers can use recorded samples to generate synthetic voice calls that sound like real executives or employees.

These calls may be used to authorize financial transfers, request sensitive data, or issue fake instructions. Because voice communication carries a strong sense of trust, victims may be more easily convinced.

As this technology becomes more accessible, voice-based deception is becoming a significant security concern.

Physical Security Manipulation Beyond Tailgating

Beyond tailgating and piggybacking, attackers may use uniforms, fake credentials, or impersonation to gain physical access. They may pose as maintenance staff, delivery personnel, or contractors to blend into legitimate activity within a facility.

Once inside, they can access unsecured systems, gather sensitive documents, or install malicious devices.

Physical social engineering remains highly effective because many security systems focus primarily on digital threats.

Environmental Awareness Attacks

Some attackers exploit distractions in the environment to carry out their activities. For example, during busy office hours or events, security awareness tends to decrease. Attackers take advantage of this reduced vigilance to move unnoticed.

This form of manipulation is subtle but effective, as it relies on timing rather than direct interaction.

Detection of Social Engineering Attempts

Recognizing social engineering attempts requires attention to behavioral and contextual warning signs. Unusual requests, pressure to act quickly, inconsistent communication styles, or requests for confidential data are all potential indicators.

Another warning sign is when a request bypasses normal procedures or comes from an unexpected channel.

Developing a habit of verification, such as confirming requests through independent channels, significantly reduces risk.

Organizational Defense Strategies

Organizations can reduce social engineering risks by implementing layered defenses. Security awareness training is one of the most effective measures, helping employees recognize suspicious behavior and respond appropriately.

Strict access controls, identity verification protocols, and multi-channel confirmation for sensitive actions also strengthen defenses.

Encouraging a culture where employees feel comfortable questioning unusual requests is equally important.

Zero Trust Security Approach

The zero trust model assumes that no user or system should be automatically trusted, even if they are inside the network. Every request must be verified before access is granted.

This approach limits the damage that can occur if social engineering attacks succeed. Even if credentials are compromised, additional verification layers can prevent unauthorized actions.

Zero trust is increasingly adopted as a response to modern social engineering threats.
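To make the idea of verifying every request concrete, here is a minimal sketch of a per-request authorization check. The `AccessRequest` fields, the policy table, and the user and resource names are hypothetical. Real zero trust deployments evaluate many more signals.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AccessRequest:
    user: str
    resource: str
    mfa_verified: bool
    device_trusted: bool


# Hypothetical policy table of (user, resource) pairs that may be granted.
ALLOWED = {("alice", "payroll-db"), ("bob", "wiki")}


def authorize(request: AccessRequest) -> bool:
    """Evaluate each request on its own; being inside the network grants nothing."""
    return (
        (request.user, request.resource) in ALLOWED
        and request.mfa_verified
        and request.device_trusted
    )


if __name__ == "__main__":
    # A phished password alone (no MFA, unknown device) is not enough.
    print(authorize(AccessRequest("alice", "payroll-db", False, False)))
```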

Human-Centered Security Culture

The most effective defense against social engineering is a strong security culture. This means integrating security awareness into everyday behavior rather than treating it as a separate task.

Employees should be encouraged to verify identities, report suspicious activity, and follow established protocols without exception. Security becomes stronger when it is a shared responsibility rather than an isolated function.

Perspective on Evolving Threats

Social engineering continues to grow in complexity as attackers combine psychological manipulation with advanced technology. From simple deception to AI-generated impersonation, the core strategy remains the same: exploiting human trust.

Staying protected requires continuous awareness, skepticism toward unexpected requests, and adherence to security practices. As long as human behavior remains a factor in security systems, social engineering will remain one of the most persistent threats in cybersecurity.

Defensive Strategies Against Social Engineering

Protecting against social engineering attacks requires more than just technical security tools. Since these attacks target human behavior, the most effective defense is a combination of awareness, structured processes, and organizational discipline. Security systems alone cannot fully prevent manipulation if individuals are not trained to recognize deceptive behavior.

A strong defense begins with understanding that every unexpected request for information or access should be treated with caution. Whether the communication comes through email, phone, messaging apps, or in person, verification must always be part of the response process.

Security Awareness and Human Training

One of the most important defenses against social engineering is continuous security awareness training. Employees and users must be educated on how attackers operate, what warning signs to look for, and how to respond appropriately.

Training should focus on real-world scenarios rather than just theory. For example, users should learn how phishing emails are structured, how fake authority is established, and how urgency is used to bypass logical thinking. When individuals understand these techniques, they become less likely to fall for manipulation.

Regular training sessions help reinforce these concepts over time. Since attackers constantly evolve their methods, awareness must also be continuously updated.

Verification Culture in Organizations

A strong verification culture is essential in preventing social engineering success. This means that employees should never rely solely on the appearance of legitimacy. Instead, they should verify requests through independent and trusted channels.

For example, if a request is received via email, it should be confirmed through a separate communication method such as a phone call or internal messaging system. This simple habit can stop many impersonation attempts.

Organizations that encourage questioning and verification reduce the likelihood of attackers exploiting trust or authority.

Multi-Factor Authentication as a Defensive Layer

Multi-factor authentication adds an additional barrier that makes it harder for attackers to gain unauthorized access. Even if login credentials are compromised through phishing or deception, the attacker still needs a second form of verification.

However, multi-factor authentication is not immune to manipulation. Attackers may try to trick users into approving login requests they did not initiate or into sharing one-time codes. This is why users must be trained never to approve an authentication request they did not trigger themselves.

When properly implemented and combined with awareness, multi-factor authentication significantly reduces risk.

Principle of Least Privilege

The principle of least privilege ensures that individuals only have access to the information and systems necessary for their roles. By limiting access, organizations reduce the potential damage caused by compromised accounts or manipulated employees.

If an attacker successfully targets a low-level user, restricted access prevents them from reaching sensitive systems. This containment strategy limits the impact of social engineering attacks.

Regular review of access permissions is essential to maintain this security principle effectively.
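A permission review can be as simple as comparing what each account holds with what its role requires. The sketch below shows that comparison with set arithmetic. The role names and permission strings are invented for illustration.

```python
# Hypothetical mapping of roles to the permissions they actually need.
ROLE_PERMISSIONS = {
    "support": {"read_tickets", "reply_tickets"},
    "finance": {"read_invoices", "approve_payments"},
}


def excess_permissions(role: str, granted: set[str]) -> set[str]:
    """Return permissions a user holds beyond what their role requires.

    An unknown role requires nothing, so every granted permission is excess.
    """
    return granted - ROLE_PERMISSIONS.get(role, set())


if __name__ == "__main__":
    print(excess_permissions("support", {"read_tickets", "approve_payments"}))
```

Anything the function returns is a candidate for removal, because it is access an attacker would gain by compromising that account.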

Email and Communication Security Controls

Email remains one of the most common channels for social engineering attacks. Organizations use filtering systems to detect and block suspicious messages before they reach users. These systems analyze patterns, attachments, and sender behavior to identify potential threats.

However, attackers continuously adapt to bypass these filters, making user vigilance equally important. Employees must be cautious when opening attachments, clicking links, or responding to unexpected messages.

Clear communication policies help ensure that sensitive requests are handled through secure and verified channels only.

Incident Response and Reporting Mechanisms

Even with strong defenses, some social engineering attempts may succeed. This makes incident response planning a critical component of cybersecurity strategy.

Organizations should have clear procedures for reporting suspicious activity. Employees must feel comfortable reporting potential threats without fear of blame or punishment. Early reporting allows security teams to respond quickly and minimize damage.

Incident response teams analyze the attack, contain its impact, and implement corrective measures to prevent recurrence.

Psychological Resistance to Manipulation

Beyond technical and procedural defenses, individuals can develop psychological resistance to social engineering. This involves training the mind to pause before reacting to emotional triggers such as urgency, fear, or excitement.

Attackers often rely on impulsive reactions. By slowing down decision-making and questioning the intent behind requests, individuals reduce the effectiveness of manipulation.

This habit of critical thinking becomes a powerful defense mechanism over time.

Role of Security Policies and Enforcement

Clear and well-defined security policies help establish boundaries for acceptable behavior. These policies outline how sensitive information should be handled, how verification should occur, and what actions are prohibited.

However, policies are only effective when consistently enforced. If rules are ignored or applied inconsistently, attackers may exploit these gaps.

Strong enforcement ensures that security practices become routine rather than optional.

Red Teaming and Simulation Exercises

Organizations often use simulated attacks to test their defenses against social engineering. These exercises, sometimes conducted by internal security teams or external experts, replicate real-world attack scenarios.

The goal is to identify weaknesses in human behavior, processes, and technical systems. For example, simulated phishing emails can reveal how employees respond to deceptive messages.

These exercises help improve awareness and strengthen overall security posture.
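Results from a simulated phishing exercise are usually reduced to a few rates that can be tracked over time. The sketch below computes a click rate and a report rate. The record fields and metric names are assumptions for illustration rather than a standard.

```python
from dataclasses import dataclass


@dataclass
class SimulationResult:
    employee: str
    clicked_link: bool
    reported_email: bool


def summarize(results: list[SimulationResult]) -> dict[str, float]:
    """Return the fraction of recipients who clicked and who reported."""
    total = len(results)
    if total == 0:
        return {"click_rate": 0.0, "report_rate": 0.0}
    clicks = sum(r.clicked_link for r in results)
    reports = sum(r.reported_email for r in results)
    return {"click_rate": clicks / total, "report_rate": reports / total}


if __name__ == "__main__":
    sample = [
        SimulationResult("a", True, False),
        SimulationResult("b", False, True),
        SimulationResult("c", False, True),
        SimulationResult("d", False, False),
    ]
    print(summarize(sample))
```

A falling click rate together with a rising report rate across exercises suggests that training is working. The report rate matters as much as the click rate because early reports are what let a security team respond.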

Penetration Testing with Human Focus

While traditional penetration testing focuses on technical vulnerabilities, social engineering testing focuses on human weaknesses. Testers may attempt impersonation, phishing simulations, or physical access attempts to evaluate real-world defenses.

The results provide valuable insights into where training or policies need improvement.

By identifying vulnerabilities before attackers do, organizations can proactively strengthen their defenses.

Emerging Threats in Social Engineering

As technology evolves, social engineering attacks are becoming more advanced. Artificial intelligence is increasingly being used to generate realistic messages, voices, and even videos that mimic real individuals.

These developments make it harder to distinguish between authentic and fake communication. Attackers can automate large-scale campaigns that are highly personalized and convincing.

This shift means that traditional awareness techniques must evolve alongside technological advancements.

Deepfake-Driven Deception Risks

Deepfake technology allows attackers to create realistic audio and video impersonations. This can be used to simulate executives giving instructions, family members requesting help, or colleagues authorizing transactions.

Because humans naturally trust visual and auditory cues, these attacks can be highly persuasive.

Defending against deepfake manipulation requires verification through independent channels rather than relying solely on voice or video identity.

AI-Enhanced Phishing Campaigns

Artificial intelligence has made phishing campaigns more sophisticated. Messages can now be automatically tailored based on a target’s behavior, communication style, and online activity.

This increases the likelihood that victims will believe the message is genuine. Unlike older phishing attempts that contained obvious errors, AI-generated content is often highly polished and convincing.

This evolution requires users to rely more on verification rather than linguistic cues.

Mobile Device Vulnerabilities

Mobile devices are frequently targeted in social engineering attacks due to their constant use and smaller interfaces. Users often interact with messages quickly without thorough inspection.

Attackers exploit this behavior through SMS scams, fake application alerts, and deceptive notifications.

Because mobile devices are often linked to authentication systems, compromising them can lead to wider security breaches.

Human Error as a Persistent Risk Factor

Despite advances in cybersecurity, human error remains the most significant vulnerability in social engineering attacks. Mistakes such as clicking malicious links, sharing credentials, or ignoring security warnings often provide attackers with entry points.

Reducing human error requires continuous education, simple security processes, and systems designed to minimize complexity.

When security systems are too complicated, users are more likely to bypass them, increasing risk.

Building Long-Term Security Awareness

Effective security awareness is not achieved through one-time training. It requires ongoing reinforcement, reminders, and practical engagement. Organizations that regularly update training materials and simulate real threats are more successful in reducing risk.

Security awareness should become part of daily behavior rather than an occasional requirement.

Future Outlook of Social Engineering Threats

Social engineering will continue to evolve as long as human behavior remains part of security systems. Attackers will increasingly rely on automation, artificial intelligence, and psychological profiling to improve their success rates.

At the same time, defensive strategies will also advance, focusing more on behavioral analysis, anomaly detection, and stronger identity verification methods.

The balance between attack and defense will continue to shift, but human awareness will always remain a critical factor.

Final Understanding of Social Engineering Defense

Social engineering is not just a technical problem but a human one. While tools and systems play an important role in defense, the strongest protection comes from informed and cautious behavior.

By combining awareness, verification, structured policies, and technological safeguards, individuals and organizations can significantly reduce their exposure to manipulation.

Ultimately, the most effective defense is a mindset that questions, verifies, and does not rely on assumption when dealing with sensitive information or unexpected requests.

Strengthening Everyday Cyber Hygiene

Social engineering defense is not limited to workplaces or organizations; it extends into everyday personal behavior. Many attacks begin with small, unnoticed interactions such as a suspicious message, an unexpected phone call, or a fake notification. Developing strong cyber hygiene habits reduces the chances of becoming a victim.

This includes being cautious about what is shared online, avoiding oversharing personal details on public platforms, and regularly reviewing privacy settings on digital accounts. The less information attackers can gather, the harder it becomes for them to build convincing scams.

Another important habit is verifying the authenticity of communication before responding. Even simple actions like confirming a request through a known contact channel can prevent major security incidents.

Importance of Digital Identity Protection

Digital identity has become one of the most valuable targets for attackers. It includes email accounts, social media profiles, banking credentials, and any online presence linked to a person or organization. Once compromised, attackers can impersonate victims and launch further attacks.

Protecting digital identity requires a strong, unique password for each account and the use of secure authentication methods such as multi-factor authentication. It also involves monitoring accounts for unusual activity and responding quickly to suspicious behavior.

Limiting exposure of personal information online also reduces the chances of identity-based attacks being successful.
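The "strong, unique password for each account" advice can be illustrated with a short Python sketch built on the standard-library `secrets` module. The function names, the 20-character default, and the 12-character minimum are assumptions made for illustration, not recommendations taken from this article. In practice a password manager typically handles this job.

```python
import secrets
import string

# Characters to draw from: letters, digits, and punctuation.
ALPHABET = string.ascii_letters + string.digits + string.punctuation


def generate_password(length=20):
    """Return a random password built with a cryptographically secure generator."""
    # The 12-character floor is an illustrative assumption, not a standard.
    if length < 12:
        raise ValueError("length should be at least 12 characters")
    return "".join(secrets.choice(ALPHABET) for _ in range(length))


def passwords_for(accounts, length=20):
    """Generate a separate password per account so that none is reused."""
    return {account: generate_password(length) for account in accounts}
```

Calling `passwords_for(["email", "bank"])` returns a dictionary with one independently generated password per account, so a leak of one credential does not expose the others.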

Role of Technology in Supporting Human Security

While social engineering primarily targets humans, technology plays a crucial supporting role in defense. Security systems such as spam filters, intrusion detection systems, and authentication tools help reduce exposure to malicious attempts.

However, technology alone is not enough. Attackers continuously adapt to bypass automated defenses, which is why human awareness remains essential. The most effective protection comes from combining technology with informed user behavior.

Security tools should be designed to assist users without overwhelming them, reducing the chances of mistakes caused by confusion or fatigue.

Behavioral Patterns Exploited by Attackers

Attackers often study and exploit predictable human behaviors. For example, people tend to respond quickly to messages that appear urgent or threatening. They also tend to trust familiar names, logos, and communication styles.

Another common behavior is the tendency to follow instructions from perceived authority figures without questioning them. Attackers use these natural tendencies to manipulate decisions.

Understanding these behavioral patterns helps individuals recognize when they are being influenced and take a step back before acting.

Social Engineering in Remote Work Environments

With the rise of remote work, social engineering attacks have become more common and more difficult to detect. Employees working from home rely heavily on digital communication, making it easier for attackers to impersonate colleagues or supervisors.

Attackers may send fake meeting requests or fraudulent invoices, or impersonate IT support teams. Because remote environments lack in-person verification, trust is often placed entirely in digital communication.

To counter this, organizations must establish strict remote communication protocols and verification procedures.

The Role of Trust in Cybersecurity

Trust is both a necessary component of communication and a major vulnerability in security systems. Social engineering attacks succeed because they exploit trust relationships between individuals and systems.

Building a secure environment requires balanced trust, in which individuals trust verified systems and processes but remain cautious of unexpected or unusual requests.

Blind trust increases risk, while informed trust strengthens security.

Emotional Manipulation Techniques

One of the most powerful tools in social engineering is emotional manipulation. Attackers often create scenarios that trigger fear, urgency, excitement, or sympathy.

For example, a message may claim that an account has been compromised, creating panic and pushing the recipient toward quick action. Alternatively, attackers may offer rewards or opportunities that trigger excitement and reduce critical thinking.

By controlling emotional responses, attackers reduce rational decision-making, increasing the chances of success.
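As a rough illustration of how these emotional triggers show up in message text, the following Python sketch flags a few pressure phrases. The cue lists and the `find_pressure_cues` name are hypothetical and far from exhaustive. A match is only a prompt to slow down and verify, not proof of an attack, and the absence of a match does not mean a message is safe.

```python
import re

# Illustrative cue lists only; they are assumptions, not taken from the article.
PRESSURE_CUES = {
    "fear": ["compromised", "suspended", "unauthorized"],
    "urgency": ["immediately", "urgent", "within 24 hours"],
    "excitement": ["you have won", "reward", "prize"],
}


def find_pressure_cues(message):
    """Return a dict mapping each triggered emotion to the phrases that matched."""
    text = message.lower()
    hits = {}
    for emotion, phrases in PRESSURE_CUES.items():
        # Match whole words or phrases so that "prize" does not match "prized".
        matched = [
            phrase
            for phrase in phrases
            if re.search(r"\b" + re.escape(phrase) + r"\b", text)
        ]
        if matched:
            hits[emotion] = matched
    return hits
```

For the message "Your account has been compromised. Act immediately." the function reports one fear cue and one urgency cue, which is the combination described above.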

Importance of Verification Channels

A key defense strategy is the use of separate verification channels. This means confirming a request through an independent method rather than replying through the channel the request arrived on.

For instance, if an email requests a financial transfer, verification should be done through a phone call or a secure internal system, using contact details already on record rather than any supplied in the message. This prevents an attacker who controls one channel from controlling both sides of the communication.

Verification channels act as a safeguard against impersonation and message tampering.
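The idea can be sketched in Python as a small lookup that chooses a confirmation channel different from the one a request arrived on. Everything here is hypothetical: the `TRUSTED_DIRECTORY` contents, the `Request` fields, and the function name are assumptions for illustration, and a real organization would use its own directory and procedures.

```python
from dataclasses import dataclass

# Hypothetical directory of known-good contact details, maintained
# independently of any incoming message.
TRUSTED_DIRECTORY = {
    "cfo@example.com": {"phone": "+1-555-0100"},
}


@dataclass
class Request:
    sender: str
    channel: str               # channel the request arrived on, e.g. "email"
    action: str
    callback_number: str = ""  # number supplied inside the message (untrusted)


def pick_verification_channel(request):
    """Return (channel, contact) for out-of-band confirmation, or None.

    Contact details come only from the trusted directory. The callback
    number inside the message is deliberately ignored, because an attacker
    controls the contents of their own message.
    """
    entry = TRUSTED_DIRECTORY.get(request.sender)
    if entry is None:
        return None  # unknown sender: no independent way to confirm
    for channel, contact in entry.items():
        if channel != request.channel:
            return channel, contact
    return None  # the only known channel is the one the request used
```

A request that arrives by email from `cfo@example.com` is confirmed by phone at the number on record, even if the email itself suggests a different callback number. When no independent channel is available, the function returns `None`, which a caller should treat as "do not act yet".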

Social Engineering and Data Breaches

Many large-scale data breaches begin with social engineering attacks. Instead of directly hacking systems, attackers trick employees into revealing credentials or granting access.

Once inside the system, attackers may escalate privileges, move laterally across networks, and extract sensitive data.

This demonstrates that human vulnerability is often the entry point for major cybersecurity incidents.

Psychological Defense Strategies

Defending against social engineering requires developing psychological resilience. This involves training oneself to resist pressure, question unusual requests, and avoid impulsive actions.

A useful approach is adopting a “pause and verify” mindset before responding to any sensitive request. This small delay allows rational thinking to override emotional reactions.

Over time, this habit significantly reduces the effectiveness of manipulation attempts.

Organizational Responsibility in Security

Security is not solely the responsibility of individuals; organizations also play a critical role. They must create environments where security practices are easy to follow and consistently enforced.

This includes clear communication policies, regular training, and strong leadership support for security initiatives. When organizations prioritize security culture, employees are more likely to adopt safe behaviors.

A weak organizational structure can create opportunities for attackers, even if individuals are aware of risks.

Continuous Evolution of Attack Techniques

Social engineering techniques continue to evolve alongside technology and communication methods. As new platforms emerge, attackers adapt their strategies to exploit them.

For example, messaging apps, collaboration tools, and social media platforms are now common targets. Each new communication channel introduces new opportunities for deception.

This constant evolution means that security awareness must also continuously adapt.

Human Factor in Cybersecurity Ecosystem

Despite advancements in artificial intelligence, automation, and encryption, the human factor remains the most unpredictable element in cybersecurity. People can be influenced, distracted, or misled in ways that machines cannot easily predict.

This makes human behavior both a strength and a weakness in security systems. When properly educated, humans become a strong defense layer. When unprepared, they become the easiest target.

Conclusion

Social engineering attacks represent one of the most persistent and evolving threats in cybersecurity because they exploit human psychology rather than technical flaws. From tailgating and phishing to advanced AI-driven impersonation and deepfake manipulation, these attacks continue to grow in complexity and impact.

The foundation of protection lies in awareness, verification, and disciplined behavior. Technical defenses such as authentication systems and security software provide important support, but they cannot replace informed human judgment.

A strong defense strategy combines security technology, organizational policies, and continuous education. Individuals must learn to recognize manipulation tactics such as urgency, authority pressure, and emotional exploitation, while organizations must build systems that encourage verification and accountability.

Ultimately, cybersecurity is not only about protecting systems but also about protecting human decision-making. When individuals and organizations adopt a culture of caution, verification, and continuous learning, the risk of social engineering attacks can be significantly reduced.