Top-of-Rack (ToR) switching is a foundational design approach used in modern data centers to manage how servers connect to the network. In this architecture, a network switch is installed in each server rack, usually at or near the top, and every server in that rack connects directly to it. This design is widely adopted because it improves scalability, reduces cable complexity, and supports high-performance computing environments where large volumes of data move rapidly between servers.

At its core, ToR switching simplifies how network connectivity is distributed across a data center. Instead of relying on a single centralized switching layer for many racks, each rack operates with its own dedicated switching device. This localized approach helps reduce bottlenecks and improves overall network efficiency, especially in environments where internal data traffic between servers is high.

Understanding the Data Center Network Structure

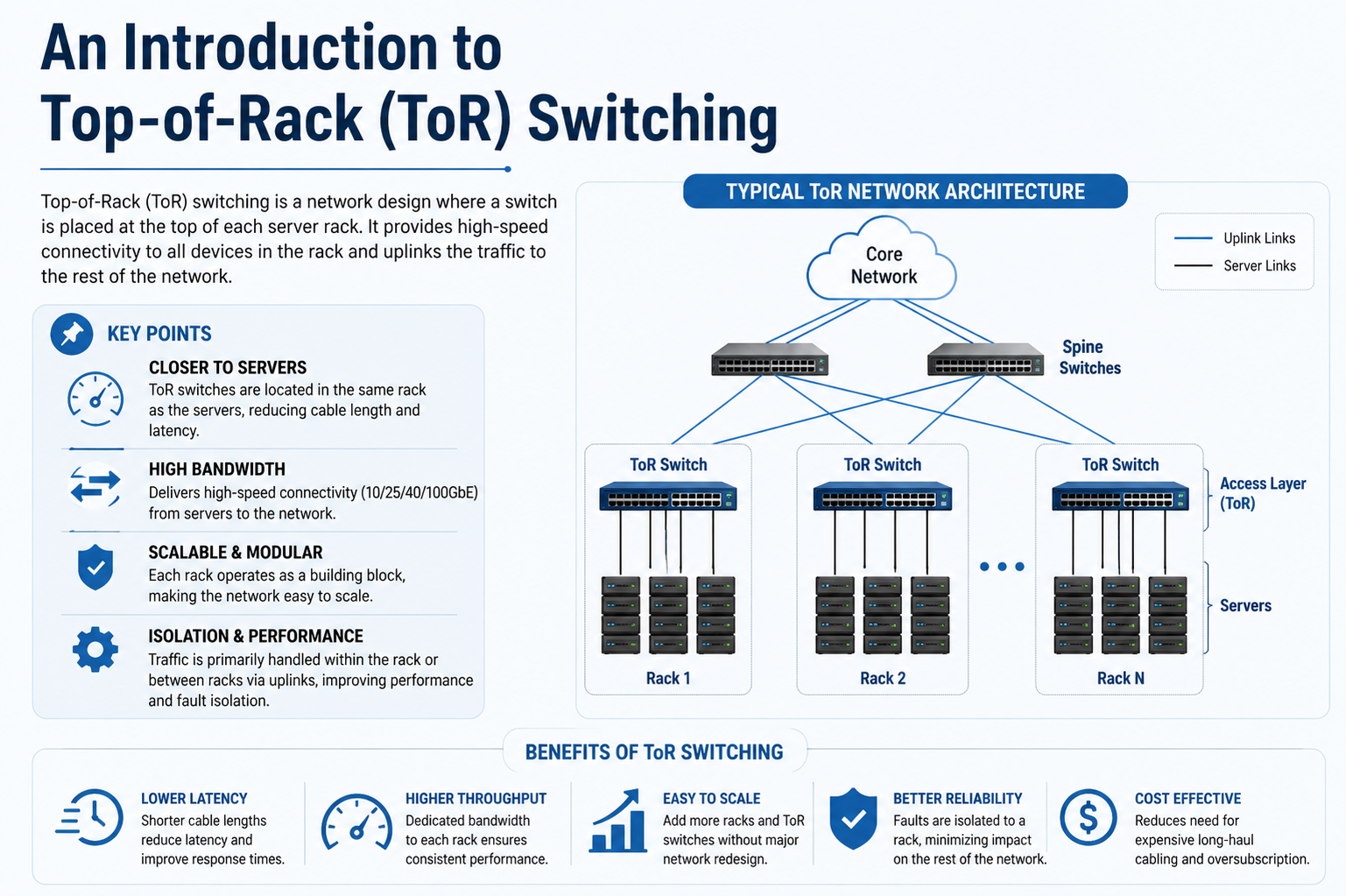

To fully understand ToR switching, it is important to view it within the broader context of data center networking. A typical data center is organized in layers, where servers connect to access switches, and those switches connect to aggregation or core switches. The ToR switch sits at the access layer, acting as the first point of contact for server traffic.

Each rack in the data center becomes a self-contained network unit. Inside this unit, all server traffic passes through the ToR switch, which either delivers it to another server in the same rack or forwards it upstream. This structure helps isolate traffic, so issues in one rack are less likely to affect others. It also allows network engineers to scale the infrastructure by simply adding more racks, each with its own switching capability.

Physical Layout and Connectivity

In a ToR design, physical cabling is significantly simplified compared to older architectures. Each server connects to the ToR switch using short Ethernet cables. These short connections reduce cable clutter inside the rack and make it easier to manage hardware components.

The ToR switch itself is typically mounted at the top of the rack to minimize cable distance. From there, it connects upward to higher-level switches in the data center network. These uplinks carry aggregated traffic from all servers within the rack to other parts of the infrastructure, enabling communication between different racks and external networks.

This layout reduces the need for long cable runs across the data center floor, which not only improves organization but also enhances airflow and cooling efficiency within racks.

Traffic Flow in ToR Architectures

Traffic in a ToR system is generally divided into two types: east-west traffic and north-south traffic. East-west traffic refers to communication between servers inside the data center, whether within the same rack or across racks. North-south traffic refers to data moving into and out of the data center, such as communication with external users or cloud services.

In modern applications such as cloud computing, virtualization, and distributed systems, east-west traffic has become dominant. ToR switching handles this type of traffic well: communication between servers in the same rack is switched locally, and communication between racks follows a short, predictable path through the uplinks, which reduces latency and improves throughput.

When a server sends data to another server in the same rack, the ToR switch forwards it directly between the two server ports, so the traffic never travels up to higher network layers. This local switching reduces response times and leaves the uplinks free for traffic that actually needs to leave the rack.
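The distinction between intra-rack, inter-rack, and north-south traffic can be sketched in Python as follows. The sketch assumes a hypothetical inventory (`RACK_OF`) that maps each server's IP address to its rack; treating any address missing from the inventory as external is a simplification made for illustration.

```python
# Hypothetical inventory mapping server IP -> rack. In practice this would come
# from a source of truth such as an IPAM or DCIM system.
RACK_OF = {
    "10.0.1.11": "rack-01",
    "10.0.1.12": "rack-01",
    "10.0.2.21": "rack-02",
}


def classify_flow(src_ip: str, dst_ip: str) -> str:
    """Classify a flow by how far the ToR switch must forward it."""
    src_rack = RACK_OF.get(src_ip)
    dst_rack = RACK_OF.get(dst_ip)
    if src_rack is None or dst_rack is None:
        # Simplification: an endpoint outside the inventory is treated as external.
        return "north-south"
    if src_rack == dst_rack:
        # Both servers sit behind the same ToR: switched locally, no uplink used.
        return "east-west (intra-rack)"
    # Different racks: the ToR sends the traffic over its uplinks.
    return "east-west (inter-rack)"


print(classify_flow("10.0.1.11", "10.0.1.12"))    # east-west (intra-rack)
print(classify_flow("10.0.1.11", "10.0.2.21"))    # east-west (inter-rack)
print(classify_flow("10.0.1.11", "203.0.113.5"))  # north-south
```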

Scalability and Modular Design

One of the key strengths of ToR switching is its modular nature. Each rack functions as an independent network unit, meaning that additional racks can be added without requiring major redesign of the existing infrastructure. This makes it ideal for rapidly growing environments such as cloud service providers and enterprise data centers.

As demand increases, new racks can be deployed with their own switches, and these switches can be connected to the existing network fabric. This incremental scaling model is far more flexible than traditional centralized switching systems, which often require complex reconfiguration when expanding.

The modular approach also simplifies capacity planning. Instead of designing a single large switching system for the entire data center, engineers can scale horizontally by adding more identical rack units.

Oversubscription and Performance Considerations

In ToR architectures, oversubscription is an important design factor. Oversubscription occurs when the total bandwidth of the server-facing ports exceeds the uplink capacity of the switch, and it is usually expressed as a ratio of the two, such as 3:1. Some oversubscription is common and generally acceptable because not all servers transmit data at maximum capacity simultaneously.

However, careful planning is required to ensure that oversubscription does not lead to congestion or performance degradation. In high-performance environments, engineers may reduce oversubscription ratios by increasing uplink speeds or deploying multiple uplinks per ToR switch.

The goal is to balance cost and performance. Fully non-blocking designs (a 1:1 ratio), in which every server can transmit at full line rate without congesting the uplinks, are expensive. ToR switching allows designers to tune this balance rack by rack based on workload requirements.
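The ratio itself is simple arithmetic. The sketch below assumes a hypothetical rack of 48 servers on 25 Gbps ports; the port counts and speeds are illustrative, not taken from any particular switch model.

```python
def oversubscription_ratio(server_ports: int, server_gbps: float,
                           uplink_ports: int, uplink_gbps: float) -> float:
    """Return total server-facing bandwidth divided by total uplink bandwidth."""
    downlink_total = server_ports * server_gbps
    uplink_total = uplink_ports * uplink_gbps
    return downlink_total / uplink_total


# 48 servers x 25 Gbps = 1200 Gbps down; 6 uplinks x 100 Gbps = 600 Gbps up
print(oversubscription_ratio(48, 25, 6, 100))   # 2.0, i.e. 2:1
# Doubling the number of uplinks makes the rack non-blocking
print(oversubscription_ratio(48, 25, 12, 100))  # 1.0, i.e. 1:1
```

Adding uplinks or raising their speed both shrink the denominator's shortfall, which is why either approach lowers the ratio.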

Redundancy and Reliability

Reliability is a critical aspect of any data center design, and ToR switching supports redundancy at multiple levels. A common approach is to deploy dual ToR switches per rack, allowing each server to connect to two separate switches. If one switch fails, traffic can continue flowing through the other, minimizing downtime.

Additionally, uplinks from ToR switches to aggregation layers are often configured with redundant paths. This ensures that even if a link or switch fails, data can still reach its destination through an alternative route.

This redundancy is essential in environments where continuous availability is required, such as financial systems, cloud services, and enterprise applications.

Cable Management and Physical Efficiency

One of the most visible advantages of ToR switching is improved cable management. In traditional designs, long cables often run across racks or even across entire data center rows, creating clutter and complicating maintenance. ToR switching eliminates much of this complexity by keeping most connections within a single rack.

Shorter cables not only improve organization but also reduce signal degradation. This contributes to better network performance and reliability. Additionally, improved airflow within racks helps with cooling efficiency, which is critical in high-density server environments.

The simplified cabling structure also reduces installation time and makes it easier to perform upgrades or replacements without disrupting the entire system.

Comparison with Other Network Architectures

ToR switching is often compared with End-of-Row (EoR) and Middle-of-Row (MoR) designs. In EoR architectures, a single switch located at the end of a row of racks handles connectivity for multiple racks. While this reduces the number of switches required, it increases cable length and complexity.

MoR is a variation where switches are placed in the middle of a row, attempting to balance cable distance and hardware distribution. However, both EoR and MoR designs are less modular than ToR.

ToR stands out because it distributes switching resources evenly across the data center, making it more adaptable to modern workloads that require high scalability and low latency.

Impact on Latency and Network Efficiency

Latency is a critical factor in data center performance, especially for applications such as real-time analytics, artificial intelligence, and high-frequency trading systems. ToR switching reduces latency by minimizing the number of hops between servers in the same rack: traffic between them crosses only a single switch.

Since communication between servers in the same rack is handled locally by the ToR switch, data does not need to traverse higher network layers. This localized processing significantly reduces transmission delays.

Even for inter-rack communication, ToR switches provide efficient aggregation points, ensuring that traffic moves quickly through the network hierarchy.

Management and Operational Considerations

Managing a large number of ToR switches can introduce operational complexity. Each rack has its own switching device, which means configuration, monitoring, and updates must be handled across multiple units.

To address this, modern data centers often use centralized management systems and automation tools. These systems allow administrators to configure multiple switches simultaneously, monitor performance metrics, and detect issues in real time.

Automation also plays a key role in maintaining consistency across racks, ensuring that network policies are uniformly applied.

Integration with Virtualization and Cloud Environments

ToR switching aligns well with virtualization technologies, which are widely used in modern data centers. Virtual machines frequently communicate across servers, generating significant east-west traffic. The localized nature of ToR switching supports this pattern efficiently.

In cloud environments, where workloads are dynamically allocated and scaled, ToR architectures provide the flexibility needed to handle rapid changes in traffic patterns. Virtual networks can be mapped onto physical ToR infrastructure without major disruptions.

This compatibility makes ToR switching a preferred choice for cloud providers and large-scale enterprise deployments.

Security Considerations

Security in ToR environments involves both physical and network-level controls. Since each rack has its own switch, security policies can be applied at a granular level. This allows for better isolation between different workloads or tenants in multi-tenant environments.

Network segmentation techniques such as VLANs and access control lists are commonly implemented at the ToR level to control traffic flow and prevent unauthorized access.
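As an illustration of how an ACL decides whether traffic may cross segments, the sketch below models first-match evaluation with an implicit deny at the end of the list, semantics that many switch ACL implementations share. The rule format, prefixes, and the `evaluate_acl` helper are hypothetical; real switches compile ACLs into hardware lookup tables rather than looping in software.

```python
from dataclasses import dataclass
from ipaddress import ip_address, ip_network
from typing import Optional


@dataclass(frozen=True)
class AclRule:
    action: str                     # "permit" or "deny"
    src: str                        # source prefix, e.g. "10.0.1.0/24"
    dst: str                        # destination prefix
    dst_port: Optional[int] = None  # None matches any destination port


def evaluate_acl(rules: list[AclRule], src: str, dst: str, dst_port: int) -> str:
    """Return the action of the first matching rule, or "deny" if none match."""
    for rule in rules:
        if (ip_address(src) in ip_network(rule.src)
                and ip_address(dst) in ip_network(rule.dst)
                and (rule.dst_port is None or rule.dst_port == dst_port)):
            return rule.action
    return "deny"  # implicit deny when no rule matches


# Hypothetical segmentation: the web segment (10.0.1.0/24) may reach the
# database segment (10.0.2.0/24) only on port 5432; the database segment may
# reach the web segment on any port; everything else hits the implicit deny.
RULES = [
    AclRule("permit", "10.0.1.0/24", "10.0.2.0/24", 5432),
    AclRule("permit", "10.0.2.0/24", "10.0.1.0/24"),
]

print(evaluate_acl(RULES, "10.0.1.5", "10.0.2.9", 5432))  # permit
print(evaluate_acl(RULES, "10.0.1.5", "10.0.2.9", 22))    # deny
```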

However, the distributed nature of ToR switching also requires consistent security management across all devices to avoid configuration inconsistencies that could lead to vulnerabilities.

Challenges and Limitations

Despite its advantages, ToR switching is not without challenges. The increased number of switches in a data center can lead to higher hardware costs and greater power consumption. Each switch also requires management and maintenance, adding to operational overhead.

Another challenge is uplink congestion. If not properly designed, the uplinks from ToR switches to aggregation layers can become bottlenecks, especially in high-traffic environments.

Careful planning of bandwidth allocation and redundancy is necessary to mitigate these risks.

Modern Trends and Evolution

ToR switching continues to evolve alongside advancements in data center architecture. It is often combined with leaf-spine network topologies, where ToR switches function as leaf nodes connecting to spine switches that form the core of the network.

This evolution supports even greater scalability and performance, making it suitable for hyperscale data centers and cloud-native applications.

As data demands continue to grow, ToR switching remains a key building block in designing efficient, flexible, and high-performance network infrastructures.

Advanced Design Considerations in Top-of-Rack (ToR) Switching

As data centers continue to evolve, Top-of-Rack (ToR) switching is no longer viewed as just a physical placement strategy but as a key architectural decision that influences performance, scalability, and long-term operational efficiency. Modern implementations require careful design choices that go beyond simply installing a switch in each rack. Engineers must consider traffic behavior, redundancy models, automation strategies, and integration with broader network fabrics.

At a deeper level, ToR switching is tightly connected to how applications behave. Modern applications are distributed by design, meaning that a single service may run across dozens or even hundreds of servers. This creates a constant flow of internal communication, especially between microservices. ToR architectures are well suited for this environment because they localize traffic handling and reduce unnecessary movement across the network hierarchy.

Leaf-Spine Integration and ToR Role

In modern data center design, ToR switches are commonly integrated into a leaf-spine architecture. In this model, ToR switches act as leaf nodes, while a set of spine switches form the high-speed backbone of the network. Every leaf switch connects to every spine switch, creating multiple equal-cost paths between any two endpoints in the data center.

This structure avoids the bottlenecks common in traditional three-tier hierarchical designs. Instead of funneling traffic through a single aggregation layer, the fabric spreads it across all spine switches. ToR switches play a crucial role in this system by acting as the first aggregation point for all server traffic within a rack.

This design provides predictable latency: traffic between servers in different racks typically travels server → ToR switch → spine switch → ToR switch → destination server, crossing the same number of switches (three) regardless of which racks are involved.
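Because every leaf connects to every spine, the number of equal-cost paths between two racks equals the number of spines. The following sketch enumerates those paths; the `tor-<rack>` naming and the `leaf_spine_paths` helper are hypothetical.

```python
def leaf_spine_paths(src_rack: str, dst_rack: str, spines: list[str]) -> list[list[str]]:
    """List the switches traversed between two racks in a two-tier leaf-spine fabric."""
    src_tor, dst_tor = f"tor-{src_rack}", f"tor-{dst_rack}"
    if src_rack == dst_rack:
        # Same rack: a single path through the local ToR only.
        return [[src_tor]]
    # Every leaf connects to every spine, so each spine gives one equal-cost
    # path of exactly three switches.
    return [[src_tor, spine, dst_tor] for spine in spines]


SPINES = ["spine1", "spine2", "spine3", "spine4"]
paths = leaf_spine_paths("r01", "r17", SPINES)
print(len(paths))  # 4
print(paths[0])    # ['tor-r01', 'spine1', 'tor-r17']
```

Adding a spine adds one more path for every pair of racks without changing the hop count, which is what keeps latency predictable as the fabric grows.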

Traffic Engineering and Load Distribution

Efficient traffic distribution is one of the most important aspects of ToR-based architectures. Without proper load balancing, some uplinks or switches can become overloaded while others remain underutilized. To prevent this, modern networks use advanced routing protocols and hashing techniques to distribute traffic evenly across available paths.

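Equal-cost multi-path (ECMP) routing is commonly used in conjunction with ToR switches. It keeps multiple paths between source and destination active at once. A switch typically hashes header fields of each flow (for example, the 5-tuple of source address, destination address, protocol, source port, and destination port) to choose one of the equal-cost uplinks, so packets within a flow stay in order on one path while different flows spread across all uplinks. This improves bandwidth utilization and reduces congestion risk.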
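A simplified model of per-flow ECMP selection is shown below. It uses CRC32 from Python's standard library as a stand-in for the hardware hash, which is an assumption: actual hash functions and the header fields they cover vary by switch vendor and configuration. The `ecmp_uplink` helper and uplink names are hypothetical.

```python
import zlib
from collections import Counter


def ecmp_uplink(src_ip: str, dst_ip: str, proto: int,
                src_port: int, dst_port: int, uplinks: list[str]) -> str:
    """Pick an uplink for a flow by hashing its 5-tuple.

    CRC32 stands in for a switch ASIC's vendor-specific hash function.
    """
    key = f"{src_ip}|{dst_ip}|{proto}|{src_port}|{dst_port}".encode()
    return uplinks[zlib.crc32(key) % len(uplinks)]


UPLINKS = ["spine1", "spine2", "spine3", "spine4"]

# The same flow always maps to the same uplink, so its packets stay in order.
first = ecmp_uplink("10.0.1.11", "10.0.2.21", 6, 49152, 443, UPLINKS)
again = ecmp_uplink("10.0.1.11", "10.0.2.21", 6, 49152, 443, UPLINKS)
print(first == again)  # True

# Many flows (differing only in source port) spread across the uplinks.
spread = Counter(
    ecmp_uplink("10.0.1.11", "10.0.2.21", 6, port, 443, UPLINKS)
    for port in range(49152, 50152)
)
print(spread)
```

Because the choice is per flow, a few very large flows can still land on the same uplink; the spread is statistical, not guaranteed to be even.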

Load balancing also becomes more complex in environments with virtual machines and containerized workloads, where traffic patterns can change rapidly. ToR switches must therefore support dynamic adaptation to shifting workloads.

High-Speed Interfaces and Bandwidth Scaling

As application demands increase, ToR switches have evolved to support much higher port speeds. Early implementations used 1 Gbps or 10 Gbps connections, but modern data centers frequently rely on 25 Gbps, 40 Gbps, 100 Gbps, and even higher-speed interfaces.

This increase in bandwidth allows ToR switches to handle significantly larger volumes of traffic without becoming bottlenecks. However, it also introduces new challenges in terms of cost, power consumption, and thermal management.

Higher-speed uplinks are particularly important in reducing oversubscription ratios. By increasing uplink capacity, designers can ensure that traffic leaving the rack does not experience unnecessary congestion, especially during peak workloads.

Power Efficiency and Thermal Design

Power consumption is a critical factor in ToR deployments. Since each rack contains at least one switch, the total number of switches in a data center can be very large. This increases overall energy usage and heat generation.

To address this, modern ToR switches are designed with energy-efficient hardware components, including low-power ASICs and optimized cooling systems. Additionally, placing switches within racks allows them to share cooling infrastructure with servers, which can improve overall thermal efficiency when properly engineered.

However, improper airflow design can lead to hot spots within racks. This makes physical placement and rack-level airflow management essential considerations in ToR deployments.

Automation and Software-Defined Networking

As data centers scale, manual configuration of ToR switches becomes impractical. This has led to widespread adoption of automation and software-defined networking (SDN) principles.

In an SDN-enabled environment, the control plane is separated from the data plane, allowing centralized controllers to manage multiple ToR switches simultaneously. This enables faster configuration changes, consistent policy enforcement, and improved network visibility.

Automation tools are often used to deploy standardized configurations across all racks. This reduces human error and ensures that each ToR switch operates according to the same network policies.

Additionally, telemetry systems continuously collect performance data from ToR switches, enabling real-time monitoring of traffic patterns, latency, and congestion.

Multi-Tenant and Cloud-Native Environments

ToR switching is especially important in multi-tenant environments such as public cloud data centers. In these systems, multiple customers share the same physical infrastructure while maintaining logical separation of their workloads.

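ToR switches help enforce this separation through VLANs and overlay technologies such as VXLAN. These mechanisms allow each tenant to operate within isolated network segments even though they share underlying physical hardware. VXLAN's 24-bit segment identifier (VNI) supports about 16 million segments, compared with roughly 4,000 for 12-bit VLAN IDs, which matters at cloud scale.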
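To make the overlay concrete, the sketch below builds the 8-byte VXLAN header described in RFC 7348: a flags byte with the I bit (0x08) set, three reserved bytes, the 24-bit VNI, and one more reserved byte. In a ToR deployment this encapsulation is typically performed by a VXLAN tunnel endpoint (VTEP), which may be the ToR switch itself; the `vxlan_header` function name is hypothetical, and the outer Ethernet, IP, and UDP headers are omitted.

```python
import struct


def vxlan_header(vni: int) -> bytes:
    """Build the 8-byte VXLAN header (RFC 7348) for a 24-bit VNI."""
    if not 0 <= vni < 2**24:
        raise ValueError("VNI must fit in 24 bits")
    flags = 0x08  # I flag set: the VNI field is valid
    # "!B3xI": flags byte, 3 reserved zero bytes, then a 32-bit big-endian word
    # whose top 24 bits carry the VNI and whose last byte is reserved (zero).
    return struct.pack("!B3xI", flags, vni << 8)


hdr = vxlan_header(5000)  # 5000 == 0x001388
print(hdr.hex())  # 0800000000138800
```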

In cloud-native environments, workloads are often ephemeral, meaning they can be created, moved, or destroyed dynamically. ToR switches must therefore support rapid reconfiguration and high adaptability to changing network topologies.

Fault Tolerance and Failure Domains

One of the key advantages of ToR switching is the reduction of failure domains. A failure domain is the portion of a system that is affected when a component fails. In centralized architectures, a single switch failure can impact multiple racks. In ToR designs, failures are localized to a single rack.

If a ToR switch fails, only the servers within that rack are affected, and even then, redundancy mechanisms can minimize disruption. Dual-switch configurations are often used to further reduce risk, so that a backup path remains available when one of the two switches fails.
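The failure-domain argument can be checked with a small model. The sketch assumes a hypothetical inventory (`RACKS`) in which every server in a rack is connected to each ToR listed for that rack; it ignores reduced bandwidth and counts only servers that lose all connectivity.

```python
# Hypothetical inventory: each server in a rack connects to every ToR listed.
RACKS = {
    "rack-01": {"servers": 40, "tors": ["tor-01a", "tor-01b"]},  # dual ToR
    "rack-02": {"servers": 40, "tors": ["tor-02a"]},             # single ToR
}


def servers_isolated(failed: set[str]) -> dict[str, int]:
    """Count, per rack, the servers that lose every ToR connection."""
    impact = {}
    for rack, info in RACKS.items():
        if all(tor in failed for tor in info["tors"]):
            impact[rack] = info["servers"]
    return impact


print(servers_isolated({"tor-02a"}))             # {'rack-02': 40}
print(servers_isolated({"tor-01a"}))             # {}
print(servers_isolated({"tor-01a", "tor-01b"}))  # {'rack-01': 40}
```

In this model no single switch failure touches more than one rack, and a dual-ToR rack survives any single failure.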

This isolation improves overall data center reliability and makes troubleshooting more straightforward, as issues can be quickly confined to specific racks.

Latency Optimization Techniques

Latency optimization is a major design goal in ToR architectures. Since many modern applications are sensitive to delays, even small improvements in network latency can have significant performance impacts.

ToR switches help reduce latency by minimizing the number of intermediate hops between servers. Additionally, cut-through switching lets a switch begin forwarding a frame as soon as it has read the destination header, instead of waiting to receive the entire frame as store-and-forward switching does, further reducing per-hop delay.
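The benefit of cut-through forwarding can be estimated from serialization delay alone. The sketch below assumes a cut-through switch needs the first 64 bytes of a frame before it can forward (the exact figure varies by hardware) and ignores the switch's internal pipeline latency. Because one bit takes 1 ns at 1 Gbps, dividing bits by the link speed in Gbps gives nanoseconds. The function name is hypothetical.

```python
def wait_before_forwarding_ns(frame_bytes: int, link_gbps: float,
                              cut_through: bool, header_bytes: int = 64) -> float:
    """Nanoseconds spent receiving a frame before forwarding can start.

    Counts serialization delay only; internal switch pipeline latency is ignored.
    At 1 Gbps one bit takes 1 ns, so bits / Gbps yields nanoseconds.
    """
    bytes_needed = min(header_bytes, frame_bytes) if cut_through else frame_bytes
    return bytes_needed * 8 / link_gbps


print(wait_before_forwarding_ns(1500, 10, cut_through=False))  # 1200.0
print(wait_before_forwarding_ns(1500, 10, cut_through=True))   # 51.2
```

Under these assumptions the wait applies at every switch, so across the three switches of an inter-rack leaf-spine path the gap at 10 Gbps is roughly 3.6 µs for store-and-forward versus about 0.15 µs for cut-through.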

Careful tuning of buffer sizes and queue management policies also plays a role in ensuring consistent latency under heavy load conditions.

Security Enforcement at the Rack Level

Security in ToR-based networks is often implemented at the rack boundary. This allows administrators to enforce policies at a very granular level. For example, specific racks may be assigned to different security zones depending on workload sensitivity.

Access control lists, port security features, and segmentation technologies are commonly applied directly at the ToR switch level. This provides a strong first line of defense against unauthorized access or lateral movement within the network.

In addition, monitoring systems can detect unusual traffic patterns at the rack level, enabling faster detection of potential security incidents.

Operational Complexity and Management Challenges

While ToR switching simplifies physical cabling, it introduces operational complexity due to the large number of distributed devices. Managing hundreds or thousands of individual switches requires robust orchestration systems.

Configuration drift, where different switches gradually become inconsistent over time, is a common challenge in large deployments. Automation and centralized policy management help mitigate this issue.
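Drift detection can be as simple as comparing each switch's running configuration with the approved baseline. The sketch below compares configurations as unordered sets of lines, a deliberate simplification that ignores ordering and the hierarchical sections real configurations often have. The configuration lines and the `config_drift` helper are hypothetical, vendor-neutral placeholders, not the syntax of any specific switch operating system.

```python
def config_drift(golden: str, running: str) -> dict[str, list[str]]:
    """Compare configs as unordered sets of non-blank, stripped lines."""
    want = {line.strip() for line in golden.splitlines() if line.strip()}
    have = {line.strip() for line in running.splitlines() if line.strip()}
    return {"missing": sorted(want - have), "extra": sorted(have - want)}


# Vendor-neutral placeholder lines, not real switch OS syntax.
GOLDEN = "ntp server 10.0.0.1\nlogging host 10.0.0.2\nmtu 9216\n"
RUNNING = "ntp server 10.0.0.1\nmtu 1500\ndebug all\n"

print(config_drift(GOLDEN, RUNNING))
# {'missing': ['logging host 10.0.0.2', 'mtu 9216'], 'extra': ['debug all', 'mtu 1500']}
```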

Network engineers must also maintain consistent firmware updates, security patches, and performance tuning across all ToR devices to ensure stability.

Cost Considerations and Infrastructure Planning

Deploying ToR switching at scale involves significant investment in hardware, cabling, and management tools. Each rack requires at least one switch, and often two for redundancy. This increases the total number of network devices compared to centralized architectures.

However, the improved scalability and performance often justify the cost in large-scale environments. Additionally, the modular nature of ToR deployments allows organizations to expand incrementally rather than making large upfront investments.

Careful planning of rack density, bandwidth requirements, and growth projections is essential to optimize cost efficiency.

Evolving Role in Hyperscale Data Centers

In hyperscale environments, ToR switching has become a standard building block. These environments prioritize horizontal scalability, where capacity is increased by adding more identical units rather than expanding a single centralized system.

ToR switches fit naturally into this model because each rack operates as a self-contained unit. This makes it easier to replicate configurations across thousands of racks while maintaining consistency and performance.

As workloads continue to grow in complexity and scale, ToR switching remains a critical enabler of modern data center design, supporting everything from cloud computing to artificial intelligence workloads.

Future Directions of ToR Switching

The future of ToR switching is closely tied to advancements in automation, virtualization, and high-speed networking. As data rates continue to increase, switches will need to support even higher bandwidths while maintaining low latency and energy efficiency.

Integration with artificial intelligence for network management is also becoming more common. AI-driven systems can analyze traffic patterns and automatically optimize routing decisions in real time.

Ultimately, ToR switching will continue to evolve as part of broader efforts to build faster, more flexible, and more intelligent data center networks capable of handling the growing demands of modern digital infrastructure.

Operational Best Practices in Top-of-Rack (ToR) Switching

In large-scale data center environments, the effectiveness of Top-of-Rack (ToR) switching depends heavily on how well it is operated and maintained over time. While the architecture itself provides structural advantages, poor operational practices can still lead to congestion, downtime, or inefficient resource usage. For this reason, organizations adopt structured operational frameworks that focus on consistency, automation, and continuous monitoring.

A key best practice is maintaining configuration standardization across all ToR switches. Since each rack contains its own switching device, inconsistency between configurations can quickly lead to network instability. Standard templates are typically used to ensure that VLANs, routing policies, security rules, and interface settings remain uniform across the entire infrastructure.
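A minimal sketch of template-driven configuration uses Python's `string.Template`. The template keywords are placeholders rather than any vendor's syntax, and `render_tor_config` is a hypothetical helper. One useful property of `substitute()` is that it raises `KeyError` when a value is missing, so an incomplete rack definition fails loudly instead of producing a partial configuration.

```python
from string import Template

# Illustrative, vendor-neutral template; keywords are placeholders, not real CLI.
TOR_TEMPLATE = Template(
    "hostname tor-$rack\n"
    "vlan $server_vlan name servers-$rack\n"
    "ntp server $ntp_server\n"
)

# Settings shared by every rack are defined once...
COMMON = {"ntp_server": "10.0.0.1"}


def render_tor_config(rack: str, server_vlan: int) -> str:
    """Render one rack's config; only the per-rack values differ."""
    # ...and merged with per-rack values; keyword arguments take precedence.
    return TOR_TEMPLATE.substitute(COMMON, rack=rack, server_vlan=server_vlan)


print(render_tor_config("07", 107))
```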

Monitoring and Real-Time Visibility

Continuous monitoring is essential in ToR-based environments because issues can arise at both the server and switch level. Modern data centers rely on telemetry systems that collect real-time performance data from each ToR switch. This includes metrics such as bandwidth usage, packet loss, latency, CPU utilization, and temperature.

By analyzing this data, network teams can identify congestion points before they escalate into serious performance issues. Real-time visibility also helps in capacity planning, allowing engineers to understand how traffic patterns evolve over time and adjust infrastructure accordingly.

Advanced monitoring systems often integrate alerting mechanisms that automatically notify administrators when thresholds are exceeded, ensuring rapid response to potential failures.
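Threshold-based alerting can be sketched as a comparison of each telemetry sample against configured limits. The metric names, threshold values, and `check_sample` helper below are hypothetical; appropriate values depend on the hardware and workload.

```python
# Hypothetical thresholds; real values depend on hardware and workload.
THRESHOLDS = {"uplink_util_pct": 80.0, "packet_loss_pct": 0.1, "temp_c": 70.0}


def check_sample(switch: str, sample: dict[str, float]) -> list[str]:
    """Return an alert message for each metric in `sample` above its threshold."""
    alerts = []
    for metric, limit in THRESHOLDS.items():
        value = sample.get(metric)
        if value is not None and value > limit:
            alerts.append(f"{switch}: {metric}={value} exceeds {limit}")
    return alerts


print(check_sample("tor-07", {"uplink_util_pct": 91.5, "temp_c": 55.0}))
# ['tor-07: uplink_util_pct=91.5 exceeds 80.0']
```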

Change Management and Network Stability

Change management plays a critical role in maintaining stability in ToR environments. Since even small configuration changes can impact an entire rack, updates must be carefully planned and tested before deployment.

Most organizations follow structured change control processes that include staging environments, rollback plans, and approval workflows. This reduces the risk of unintended disruptions caused by configuration errors or incompatible updates.

In highly automated environments, changes are often deployed programmatically, allowing for controlled and repeatable updates across large numbers of ToR switches.

Firmware and Lifecycle Management

ToR switches, like all network hardware, require regular firmware updates to maintain security, performance, and compatibility with evolving network protocols. Managing firmware across hundreds or thousands of devices can be complex, especially when ensuring minimal downtime.

Lifecycle management strategies are used to track hardware age, performance degradation, and end-of-life timelines. This helps organizations plan timely upgrades and avoid unexpected failures due to outdated equipment.

Rolling upgrades are commonly used in ToR environments: switches are updated in phases, for example one member of each redundant pair at a time, so that the network remains available throughout.
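One way to plan such phases is shown below, assuming every rack has a redundant pair of ToR switches and that servers are dual-homed to both. All first members are upgraded, in batches, before any second member, so no pair is ever fully offline. The `upgrade_waves` helper and switch names are hypothetical.

```python
def upgrade_waves(pairs: list[tuple[str, str]], batch_size: int) -> list[list[str]]:
    """Plan upgrade waves so both ToRs of a redundant pair are never down together.

    Every first member is upgraded, in batches, before any second member.
    """
    waves = []
    for member in (0, 1):
        group = [pair[member] for pair in pairs]
        for i in range(0, len(group), batch_size):
            waves.append(group[i:i + batch_size])
    return waves


PAIRS = [("tor-01a", "tor-01b"), ("tor-02a", "tor-02b"), ("tor-03a", "tor-03b")]
for wave in upgrade_waves(PAIRS, batch_size=2):
    print(wave)
# ['tor-01a', 'tor-02a']
# ['tor-03a']
# ['tor-01b', 'tor-02b']
# ['tor-03b']
```

In practice, operators would also confirm link and routing health after each wave before starting the next.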

Network Segmentation and Traffic Isolation

Network segmentation is a fundamental concept in ToR architectures, particularly in environments that support multiple applications, departments, or tenants. By dividing network traffic into isolated segments, organizations can improve both security and performance.

ToR switches enforce segmentation using technologies such as VLANs and overlay networks. This ensures that traffic from one segment does not interfere with another, even when they share the same physical infrastructure.

In cloud environments, segmentation is even more dynamic, with virtual networks being created and destroyed on demand as workloads change.

Performance Tuning and Optimization

To achieve optimal performance, ToR switches often require fine-tuning based on workload characteristics. Different applications generate different traffic patterns, and understanding these patterns is essential for efficient configuration.

For example, latency-sensitive applications may benefit from low-buffer, cut-through switching configurations, while data-heavy workloads may require larger buffer sizes to handle bursts of traffic.

Queue management policies also play a role in ensuring fair bandwidth distribution among multiple applications sharing the same network resources.

Troubleshooting in ToR Environments

Troubleshooting network issues in ToR-based architectures is generally more straightforward than in centralized designs because problems are localized to individual racks. However, the distributed nature of the system also means that issues can occur at many different points.

Common troubleshooting steps include checking link status, analyzing traffic logs, verifying configuration consistency, and monitoring hardware health indicators. Since each rack operates independently, isolating the source of a problem is often faster and more precise.

In advanced environments, automated diagnostic tools can identify and sometimes even resolve common issues without human intervention.

Impact of Virtualization on ToR Design

Virtualization has significantly influenced how ToR switching is implemented. In virtualized environments, multiple virtual machines operate on a single physical server, each generating its own network traffic. This increases the importance of efficient rack-level switching.

ToR switches must handle large numbers of virtual network interfaces while maintaining performance and isolation. Technologies such as virtual switching extensions and overlay networks help manage this complexity.

As virtualization continues to expand into containerized workloads, the demand for flexible and high-performance ToR infrastructure continues to grow.

Containerization and Microservices Influence

Modern software architectures based on microservices and containerization have dramatically increased east-west traffic within data centers. Applications are no longer monolithic; instead, they are composed of many small services that frequently communicate with each other.

ToR switching is well suited to this environment because it efficiently handles localized traffic between servers within the same rack. This reduces dependency on higher-level network layers and improves response times for service-to-service communication.

As container orchestration platforms dynamically schedule workloads across servers, ToR switches must adapt to rapidly changing traffic patterns.

Security Challenges in Distributed Switching

While ToR switching enhances security through segmentation, it also introduces challenges due to its distributed nature. Each switch must be individually secured, monitored, and updated, increasing the surface area for potential misconfiguration or vulnerabilities.

Common security practices include disabling unused ports, enforcing strict authentication mechanisms for management access, and continuously auditing configuration changes.

Intrusion detection systems can also be deployed to monitor traffic at the rack level, identifying suspicious behavior early in the network path.

Hardware Evolution and Port Density

Over time, ToR switches have evolved to support higher port densities, allowing more servers to connect to a single device. This reduces the number of switches required per data center while still maintaining the benefits of rack-level switching.

Higher port density also improves space efficiency within racks, which is particularly important in environments where physical space is limited.

At the same time, advancements in ASIC design have enabled faster processing speeds, allowing modern ToR switches to handle significantly larger traffic loads with lower latency.

Energy Efficiency and Sustainability Considerations

Energy efficiency has become an increasingly important factor in data center design. Since ToR architectures involve a large number of distributed switches, even small improvements in power consumption per device can lead to significant overall savings.

Modern ToR switches are designed with energy-efficient components and dynamic power scaling features that adjust energy usage based on traffic load. This helps reduce operational costs and supports sustainability goals.

Efficient cooling strategies also contribute to reduced energy consumption by optimizing airflow within racks and minimizing heat buildup.

Role in Edge Computing Environments

ToR switching is not limited to traditional centralized data centers. It also plays an important role in edge computing environments, where computing resources are deployed closer to end users.

In edge locations, space and power constraints make compact and efficient networking solutions essential. ToR switches provide a scalable and manageable way to connect local servers while maintaining connectivity to central data centers.

This enables low-latency processing for applications such as IoT, real-time analytics, and content delivery.

Automation-Driven Network Evolution

The future of ToR switching is increasingly driven by automation. As networks grow in size and complexity, manual management becomes impractical. Automation tools are now responsible for configuration, monitoring, troubleshooting, and even optimization.

Machine learning systems are being integrated into network management platforms to predict congestion, detect anomalies, and recommend configuration changes.

This shift toward intelligent networking reduces operational burden and improves overall efficiency.

Final Perspective on ToR Architecture

Top-of-Rack switching represents a mature and highly effective approach to modern data center networking. Its combination of modularity, scalability, and performance makes it well suited for environments ranging from enterprise IT systems to hyperscale cloud infrastructures.

While it introduces challenges in terms of management complexity and cost, these are largely offset by its operational benefits and adaptability to modern workloads.

As digital infrastructure continues to evolve, ToR switching will remain a foundational element in building fast, resilient, and scalable networks capable of supporting the next generation of computing demands.

Advanced Troubleshooting Strategies in Top-of-Rack (ToR) Switching Environments

In large-scale data center operations, troubleshooting Top-of-Rack (ToR) switching issues requires a structured and systematic approach. Since each rack operates as a semi-independent network unit, problems are often isolated but can still have cascading effects if not handled correctly. Engineers typically begin by identifying whether the issue is localized to a single rack, a group of racks, or a broader network segment.
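This first scoping step can be expressed as a small function. It maps the affected servers to their racks, maps those racks to a larger grouping, and reports how far the problem reaches. The inventory mappings and the "pod" grouping are hypothetical. In practice this data would come from an inventory or DCIM system.

```python
def classify_scope(affected_servers, server_to_rack, rack_to_pod):
    """Classify how widely a fault reaches, given the servers reporting problems."""
    racks = {server_to_rack[s] for s in affected_servers}
    pods = {rack_to_pod[r] for r in racks}
    if not racks:
        return "no impact"
    if len(racks) == 1:
        return "single rack"          # start with that rack's ToR and its uplinks
    if len(pods) == 1:
        return "group of racks"       # look at something those racks share
    return "broader network segment"  # look higher, toward the spine or core


# Hypothetical inventory data.
server_to_rack = {"web01": "r1", "web02": "r1", "db01": "r2", "db02": "r3"}
rack_to_pod = {"r1": "podA", "r2": "podA", "r3": "podB"}

print(classify_scope(["web01", "web02"], server_to_rack, rack_to_pod))  # single rack
print(classify_scope(["web01", "db01"], server_to_rack, rack_to_pod))   # group of racks
print(classify_scope(["web01", "db02"], server_to_rack, rack_to_pod))   # broader network segment
```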

One of the most common diagnostic methods involves analyzing interface statistics on the ToR switch. This includes checking for packet drops, error rates, link flapping, and bandwidth saturation. These indicators help determine whether the issue is physical, such as a faulty cable or transceiver, or logical, such as misconfigured routing or congestion.
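The triage between physical and logical causes can be sketched as a few rules applied to a snapshot of per-port counters. The `InterfaceStats` fields and the 90% saturation threshold are hypothetical. Actual counter names depend on the switch platform and the telemetry pipeline.

```python
from dataclasses import dataclass


@dataclass
class InterfaceStats:
    """Hypothetical per-port counter deltas collected over one polling interval."""
    name: str
    crc_errors: int
    link_flaps: int
    output_drops: int
    utilization: float  # average fraction of line rate, 0.0 to 1.0


def diagnose(stats: InterfaceStats, saturation: float = 0.9) -> list[str]:
    findings = []
    # CRC errors and link flaps usually point at layer 1: cable, transceiver, or port.
    if stats.crc_errors > 0 or stats.link_flaps > 0:
        findings.append("physical: check cable and transceiver")
    if stats.output_drops > 0:
        if stats.utilization >= saturation:
            # Drops on a link that is nearly full on average: sustained congestion.
            findings.append("logical: sustained congestion")
        else:
            # Drops while average load is moderate often mean microbursts or queue settings.
            findings.append("logical: possible microbursts or queue misconfiguration")
    return findings or ["healthy"]


for port in [
    InterfaceStats("Ethernet1", crc_errors=120, link_flaps=0, output_drops=0, utilization=0.20),
    InterfaceStats("Ethernet2", crc_errors=0, link_flaps=0, output_drops=500, utilization=0.97),
    InterfaceStats("Ethernet3", crc_errors=0, link_flaps=0, output_drops=0, utilization=0.40),
]:
    print(port.name, diagnose(port))
```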

In addition to running switch-level diagnostics, engineers examine server-side logs. ToR switches connect directly to servers, so symptoms such as intermittent connectivity or slow application response can often be traced to one of two sources. The first is the server's NIC configuration. The second is a setting that disagrees between the server and its switch port, such as MTU, link speed, or VLAN tagging.
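A mismatch check between the two ends of a link can be as simple as comparing the settings one key at a time. The setting names and values below are hypothetical. In practice they would be collected from the server and the switch port by whatever tooling is in use.

```python
def find_mismatches(nic: dict, switch_port: dict, keys=("mtu", "speed_gbps", "vlan")) -> dict:
    """Return {setting: (server_value, switch_value)} for every setting that disagrees."""
    return {
        key: (nic.get(key), switch_port.get(key))
        for key in keys
        if nic.get(key) != switch_port.get(key)
    }


# Hypothetical values: the server sends jumbo frames but the switch port is left at 1500.
server_nic = {"mtu": 9000, "speed_gbps": 25, "vlan": 110}
tor_port = {"mtu": 1500, "speed_gbps": 25, "vlan": 110}
print(find_mismatches(server_nic, tor_port))  # {'mtu': (9000, 1500)}
```

An MTU mismatch like this one is a typical cause of the pattern where small requests succeed but large transfers stall. Small packets fit within both limits, while full-size jumbo frames exceed the port's MTU and are dropped.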

Congestion Analysis and Microburst Handling

A significant challenge in ToR environments is handling traffic microbursts, which are sudden spikes in data transmission that can overwhelm switch buffers. These bursts are common in virtualized and containerized environments where multiple workloads may simultaneously initiate communication.

To address this, engineers use advanced monitoring tools to detect buffer occupancy trends and queue depth variations. When microbursts are detected, adjustments may be made to buffer allocations, queue scheduling policies, or traffic shaping rules.
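One way to separate microbursts from sustained congestion is to scan fine-grained queue-depth samples for runs above a threshold that end quickly. The sample values (in KB of buffer), the 500 KB threshold, and the three-sample limit are illustrative assumptions. Microbursts only show up if the sampling interval is much shorter than typical polling intervals, which are measured in seconds or minutes.

```python
def find_microbursts(queue_depth, threshold, max_len):
    """Return (start_index, length) for each run of samples above `threshold`
    lasting at most `max_len` samples; longer runs count as sustained congestion."""
    bursts = []
    start = None
    # A trailing sentinel below any threshold closes a run that reaches the end of the data.
    for i, depth in enumerate(list(queue_depth) + [float("-inf")]):
        if depth > threshold:
            if start is None:
                start = i
        elif start is not None:
            length = i - start
            if length <= max_len:
                bursts.append((start, length))
            start = None
    return bursts


# Hypothetical queue-depth samples in KB, one per sampling interval.
samples = [10, 12, 900, 950, 11, 10, 800, 820, 830, 840, 850, 12]
print(find_microbursts(samples, threshold=500, max_len=3))  # [(2, 2)]
```

The run at indices 6 to 10 is also above the threshold. It lasts five samples, so it is excluded here and is better handled as ordinary congestion.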

Proper congestion analysis helps ToR switches maintain stable performance under highly dynamic workloads, minimizing packet loss and latency spikes.

Redundancy Validation and Failover Testing

Redundancy is a core principle of ToR architecture, but it must be regularly tested to ensure reliability. Failover testing involves simulating switch failures, link outages, or power disruptions to verify that traffic seamlessly shifts to backup paths.

In dual-ToR configurations, each server typically connects to two separate switches. During failover tests, engineers confirm that the network continues to operate without noticeable disruption when one switch becomes unavailable.

This process is critical for mission-critical environments where downtime is unacceptable, such as financial systems, healthcare platforms, and cloud service infrastructures.
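Before any live test, the topology itself can be checked for single points of failure. The check removes a switch from a model of the network and confirms that every server can still reach a spine. The device names are hypothetical. This check only covers connectivity on paper, so a real failover test still has to confirm that NIC bonding and the switches actually move traffic.

```python
from collections import deque


def build_graph(links):
    """Undirected adjacency sets from (a, b) link pairs."""
    graph = {}
    for a, b in links:
        graph.setdefault(a, set()).add(b)
        graph.setdefault(b, set()).add(a)
    return graph


def reachable(graph, start, failed):
    """Breadth-first search from `start`, skipping nodes in `failed`."""
    seen = {start}
    queue = deque([start])
    while queue:
        node = queue.popleft()
        for nxt in graph.get(node, ()):
            if nxt not in seen and nxt not in failed:
                seen.add(nxt)
                queue.append(nxt)
    return seen


def survives_failure(graph, servers, spines, failed):
    """True if every server can still reach at least one spine with `failed` removed."""
    return all(reachable(graph, s, failed) & spines for s in servers)


# Hypothetical dual-ToR rack: each server is cabled to tor-a and tor-b.
links = [
    ("srv1", "tor-a"), ("srv1", "tor-b"),
    ("srv2", "tor-a"), ("srv2", "tor-b"),
    ("tor-a", "spine1"), ("tor-a", "spine2"),
    ("tor-b", "spine1"), ("tor-b", "spine2"),
]
graph = build_graph(links)
spines = {"spine1", "spine2"}
print(survives_failure(graph, ["srv1", "srv2"], spines, {"tor-a"}))           # True
print(survives_failure(graph, ["srv1", "srv2"], spines, {"tor-a", "tor-b"}))  # False
```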

Network Convergence and Recovery Behavior

Network convergence is the process by which the network settles on a consistent forwarding state after a topology change, such as a switch failure or link disruption. Convergence time is how long that process takes. In ToR-based systems, fast convergence is essential to minimize service interruption.

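Spine-leaf architectures help here because each ToR normally has several equal-cost paths toward the spine. When one path fails, the switch can withdraw it and keep forwarding on the others instead of waiting for a new route to be computed. Fast failure detection, for example with Bidirectional Forwarding Detection (BFD), shortens the time before the failure is noticed. Engineers measure convergence during testing to confirm that recovery stays within acceptable thresholds.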

Slow convergence can result in temporary packet loss or service degradation, making this an important metric in ToR performance evaluation.
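A common way to measure convergence during a test is to send probes at a fixed interval along the path being failed and then count the longest run of lost probes. The sketch assumes one probe every 10 ms, which makes its resolution one interval. The probe results and the 500 ms target are hypothetical.

```python
ACCEPTABLE_MS = 500  # hypothetical recovery target


def estimated_outage_ms(probe_results, interval_ms):
    """Longest run of consecutive lost probes, converted to milliseconds.
    Accuracy is limited to roughly one probe interval."""
    longest = current = 0
    for ok in probe_results:
        current = 0 if ok else current + 1
        longest = max(longest, current)
    return longest * interval_ms


# Hypothetical test run: probes every 10 ms, one ToR powered off after the fifth probe.
results = [True] * 5 + [False] * 23 + [True] * 10
outage = estimated_outage_ms(results, interval_ms=10)
print(outage)                   # 230
print(outage <= ACCEPTABLE_MS)  # True
```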

Scalability Challenges in Large Deployments

While ToR switching is inherently scalable, extremely large deployments introduce new challenges. As the number of racks increases, so does the number of switches, uplinks, and configuration points. This can lead to operational complexity if not properly managed.
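This growth is easy to quantify for a leaf-spine fabric. The sketch assumes every ToR has exactly one link to every spine, and the rack, ToR, and spine counts are illustrative.

```python
def fabric_counts(racks: int, tors_per_rack: int, spines: int) -> dict:
    """Switch and link counts for a leaf-spine fabric where every ToR links once to every spine."""
    tors = racks * tors_per_rack
    return {
        "tor_switches": tors,
        "tor_to_spine_links": tors * spines,
        "managed_switches": tors + spines,
    }


# Illustrative sizes: dual-ToR racks and 8 spines.
print(fabric_counts(racks=200, tors_per_rack=2, spines=8))
# {'tor_switches': 400, 'tor_to_spine_links': 3200, 'managed_switches': 408}
print(fabric_counts(racks=400, tors_per_rack=2, spines=8))
# {'tor_switches': 800, 'tor_to_spine_links': 6400, 'managed_switches': 808}
```

Doubling the rack count doubles both the ToR switches to configure and the uplinks to cable and monitor.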

One of the primary scalability concerns is maintaining consistent performance across all racks. Variations in hardware models, firmware versions, or configuration standards can lead to uneven network behavior.

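To address this, large organizations enforce strict standardization policies and use automated deployment pipelines. These ensure that every ToR switch is built from the same templates from the moment it is installed. Only per-device values such as the hostname, addresses, and routing identifiers differ between switches.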
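Below is a minimal sketch of template-driven configuration using Python's standard `string.Template`. Every switch is rendered from the same template, and only the per-device values differ. The template text is illustrative rather than any particular vendor's syntax, and the device values are hypothetical.

```python
from string import Template

# Illustrative golden template; not any particular vendor's syntax.
TOR_TEMPLATE = Template(
    "hostname $hostname\n"
    "interface Loopback0\n"
    "  ip address $loopback/32\n"
    "router bgp $asn\n"
)


def render_config(device: dict) -> str:
    """Render one switch's config; raises KeyError if a per-device value is missing."""
    return TOR_TEMPLATE.substitute(device)


# Hypothetical per-device values for one ToR.
print(render_config({"hostname": "tor-r12-a", "loopback": "10.0.12.1", "asn": 65012}))
```

This sketch uses `substitute` rather than `safe_substitute`. With `substitute`, a missing value raises an error instead of leaving a literal `$placeholder` in a configuration that might be pushed to a live switch.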

Inter-Rack Communication Efficiency

Although ToR switching optimizes intra-rack communication, inter-rack traffic must still traverse higher network layers. The efficiency of this communication depends heavily on the underlying spine or aggregation network.

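To minimize latency, each ToR switch connects to multiple spine switches. In a typical spine-leaf design, every ToR links to every spine, so traffic between any two racks crosses the same number of hops. Equal-cost multipath (ECMP) routing then spreads flows across all uplinks, which keeps traffic from concentrating on a single link.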
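Per-flow ECMP can be sketched as hashing a flow's 5-tuple and using the result to choose an uplink. The 5-tuple consists of source and destination address, protocol, and source and destination port. Switch ASICs use their own hardware hash functions, so SHA-256 here is only a stand-in. The addresses and spine names are hypothetical.

```python
import hashlib
from collections import Counter


def pick_uplink(flow: tuple, uplinks: list) -> str:
    """Hash a flow's 5-tuple to choose an uplink; every packet of the flow maps to the same one.
    SHA-256 stands in for the switch ASIC's hardware hash function."""
    key = "|".join(str(field) for field in flow).encode()
    digest = int.from_bytes(hashlib.sha256(key).digest()[:8], "big")
    return uplinks[digest % len(uplinks)]


uplinks = ["spine1", "spine2", "spine3", "spine4"]
# Hypothetical flows: (src_ip, dst_ip, protocol, src_port, dst_port); protocol 6 is TCP.
flows = [("10.0.1.5", "10.0.7.9", 6, 40000 + i, 443) for i in range(10_000)]
print(Counter(pick_uplink(f, uplinks) for f in flows))
```

With many flows, each uplink receives close to a quarter of them. Every packet of a flow hashes to the same uplink, so packets within a flow stay in order. The balancing is per flow rather than per byte, however, so a few very large flows can still load one uplink more heavily than the others.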

This design enables predictable performance even when workloads span multiple racks, which is common in distributed computing environments.

Hardware Failures and Resilience Planning

Hardware failure is an inevitable aspect of large-scale networking systems. In ToR environments, failures are typically confined to individual racks, which helps limit their impact.

Common hardware issues include power supply failures, port malfunctions, overheating, and switch ASIC degradation. Resilience planning involves not only redundancy but also proactive replacement strategies.

Predictive maintenance systems use telemetry data to identify early signs of hardware degradation, allowing teams to replace components before failure occurs.
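A simple form of trend-based prediction fits a straight line to a health metric and projects when it will cross a limit. The metric (daily ASIC temperature readings), the 80 °C limit, and the data are hypothetical. Linear extrapolation is a deliberately simple model.

```python
def hours_until_threshold(samples, threshold):
    """Fit a least-squares line to (hour, value) samples and return the hours remaining,
    after the latest sample, until the fitted line reaches `threshold`.
    Returns None when the trend is flat or falling."""
    if len(samples) < 2:
        raise ValueError("need at least two samples")
    xs = [t for t, _ in samples]
    ys = [v for _, v in samples]
    n = len(samples)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    if sxx == 0:
        raise ValueError("samples need distinct timestamps")
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    if slope <= 0:
        return None
    intercept = mean_y - slope * mean_x
    crossing = (threshold - intercept) / slope
    return max(0.0, crossing - max(xs))


# Hypothetical ASIC temperatures (hour, degrees C), rising 2 degrees per day.
readings = [(0, 60.0), (24, 62.0), (48, 64.0), (72, 66.0)]
print(round(hours_until_threshold(readings, threshold=80.0)))  # 168
```

A projected 168 hours (one week) gives enough lead time to schedule a replacement in a planned maintenance window.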

Integration with Artificial Intelligence for Network Management

Artificial intelligence is increasingly being integrated into ToR network management systems. AI-driven platforms analyze massive amounts of telemetry data to detect anomalies, predict congestion, and optimize routing decisions.

These systems can automatically adjust configurations to improve performance, such as redistributing traffic loads or rerouting flows around congested paths.

Over time, AI systems learn from historical patterns, making them more effective at preventing issues before they impact users.
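Commercial AI platforms are far more elaborate. Their core idea, learning a baseline from history and flagging departures from it, can still be shown with a plain z-score test. This is classical statistics, not machine learning. The drop counts and the threshold of three standard deviations are assumptions.

```python
from statistics import mean, stdev


def flag_anomalies(history, new_values, z_threshold=3.0):
    """Return indices of new_values lying more than z_threshold standard deviations
    from the mean of history (the learned baseline)."""
    mu = mean(history)
    sigma = stdev(history)  # requires at least two historical points
    if sigma == 0:
        return [i for i, v in enumerate(new_values) if v != mu]
    return [i for i, v in enumerate(new_values) if abs(v - mu) / sigma > z_threshold]


# Hypothetical per-minute drop counts on one ToR uplink.
history = [100, 102, 98, 101, 99, 100, 103, 97]
print(flag_anomalies(history, [101, 140, 95]))  # [1]
```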

Environmental Impact and Data Center Efficiency

Sustainability has become a major concern in modern data center design. Since ToR switching involves a large number of distributed devices, optimizing energy usage is critical.

Efforts to reduce environmental impact include using energy-efficient switch hardware, optimizing airflow within racks, and implementing dynamic power management systems that adjust consumption based on traffic load.

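Efficient ToR design also supports a better power usage effectiveness (PUE), a key metric for data center sustainability. PUE is the ratio of total facility energy to the energy delivered to IT equipment, so lower values are better and 1.0 is the theoretical ideal. ToR design improves PUE mainly by reducing cooling overhead through better airflow. Energy-efficient switch hardware, by contrast, lowers total consumption directly.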
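PUE is a ratio, so it helps to work through how different kinds of savings affect it. The facility figures below are hypothetical.

```python
def pue(it_kw: float, overhead_kw: float) -> float:
    """Power usage effectiveness: total facility power divided by IT equipment power."""
    return (it_kw + overhead_kw) / it_kw


# Hypothetical facility: 1,000 kW of IT load plus 500 kW of cooling and power-distribution overhead.
print(pue(1000, 500))           # 1.5
# Better rack airflow trims 100 kW of cooling overhead.
print(pue(1000, 400))           # 1.4
# More efficient switches trim 50 kW of IT load, with overhead unchanged.
print(round(pue(950, 500), 3))  # 1.526
```

Cutting overhead lowers PUE. Cutting IT load lowers the energy bill, but if overhead stays the same, PUE rises slightly. For that reason, PUE is best read alongside total consumption rather than on its own.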

Future Trends in Top-of-Rack Switching

The future of ToR switching is closely tied to advancements in networking speed, automation, and virtualization. As data rates continue to increase beyond 100 Gbps per port, ToR switches will need to evolve to handle significantly higher throughput without increasing latency.

Another major trend is the rise of fully automated data centers, where ToR switches are managed entirely by software-defined systems with minimal human intervention. This will enable faster provisioning, improved reliability, and more efficient resource utilization.

Additionally, the integration of quantum-safe encryption and advanced security protocols is expected to become more important as data sensitivity increases.

Convergence with Edge and Distributed Computing

ToR switching is also becoming increasingly important in edge computing environments. As computing moves closer to end users, smaller-scale data centers are being deployed in distributed locations.

In these environments, ToR switches provide a compact and efficient way to manage local server connectivity while maintaining integration with central cloud infrastructure.

This convergence allows for low-latency processing in applications such as autonomous systems, IoT networks, and real-time analytics platforms.

Operational Efficiency Through Standardization

One of the most effective strategies for managing large ToR deployments is strict standardization. This includes uniform hardware selection, consistent configuration templates, and centralized policy enforcement.

Standardization reduces complexity, minimizes configuration errors, and improves troubleshooting efficiency. It also simplifies training for network engineers, as they only need to learn a limited set of configurations and procedures.

In highly automated environments, standardization serves as the foundation for reliable and scalable network operations.
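Standardization is usually enforced by regularly checking each device against its intended configuration. Below is a minimal drift check using Python's standard `difflib`. The configuration lines are illustrative. In practice the golden text would come from the same template that provisioned the switch.

```python
import difflib


def config_drift(golden: str, running: str) -> list[str]:
    """Unified-diff lines between the intended and the running config; empty means compliant."""
    return list(
        difflib.unified_diff(
            golden.splitlines(),
            running.splitlines(),
            fromfile="golden",
            tofile="running",
            lineterm="",
        )
    )


golden = "hostname tor-r12-a\nmtu 9214\n"
running = "hostname tor-r12-a\nmtu 1500\n"  # hypothetical manual change made on the switch
for line in config_drift(golden, running):
    print(line)
```

An empty result means the switch matches its template. Any output lists exactly which lines have drifted, which can then be reverted automatically or reviewed.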

Conclusion

Top-of-Rack (ToR) switching has become a fundamental component of modern data center architecture due to its ability to balance performance, scalability, and operational simplicity. By placing a switch directly within each rack, ToR designs reduce cable complexity, improve traffic locality, and enable efficient scaling as infrastructure grows.

Its integration with modern technologies such as virtualization, cloud computing, and software-defined networking has made it highly relevant in today’s digital landscape. The architecture supports high-speed data movement, minimizes latency, and aligns well with the demands of distributed applications and microservices-based systems.

Despite its advantages, ToR switching also introduces challenges such as increased device management, higher hardware density, and the need for advanced automation. However, these challenges can be largely addressed through centralized control systems, AI-driven optimization, and standardized deployment practices.

As data centers continue to evolve toward higher speeds, greater automation, and more distributed computing models, ToR switching will remain a core building block of network design. Its adaptability and efficiency ensure that it will continue to support the next generation of computing infrastructure, from hyperscale cloud environments to edge computing deployments.