Virtual machine memory management becomes significantly more important as systems scale beyond simple use cases. While basic setups may work with simple allocation rules, real environments require a deeper understanding of how memory behaves under load. Every virtual machine competes for a shared pool of physical memory, and the way that pool is distributed directly affects performance, responsiveness, and system stability. A well-planned memory strategy ensures that no single machine starves the host or other virtual machines of resources.

How Memory is Shared Between Host and Virtual Machines

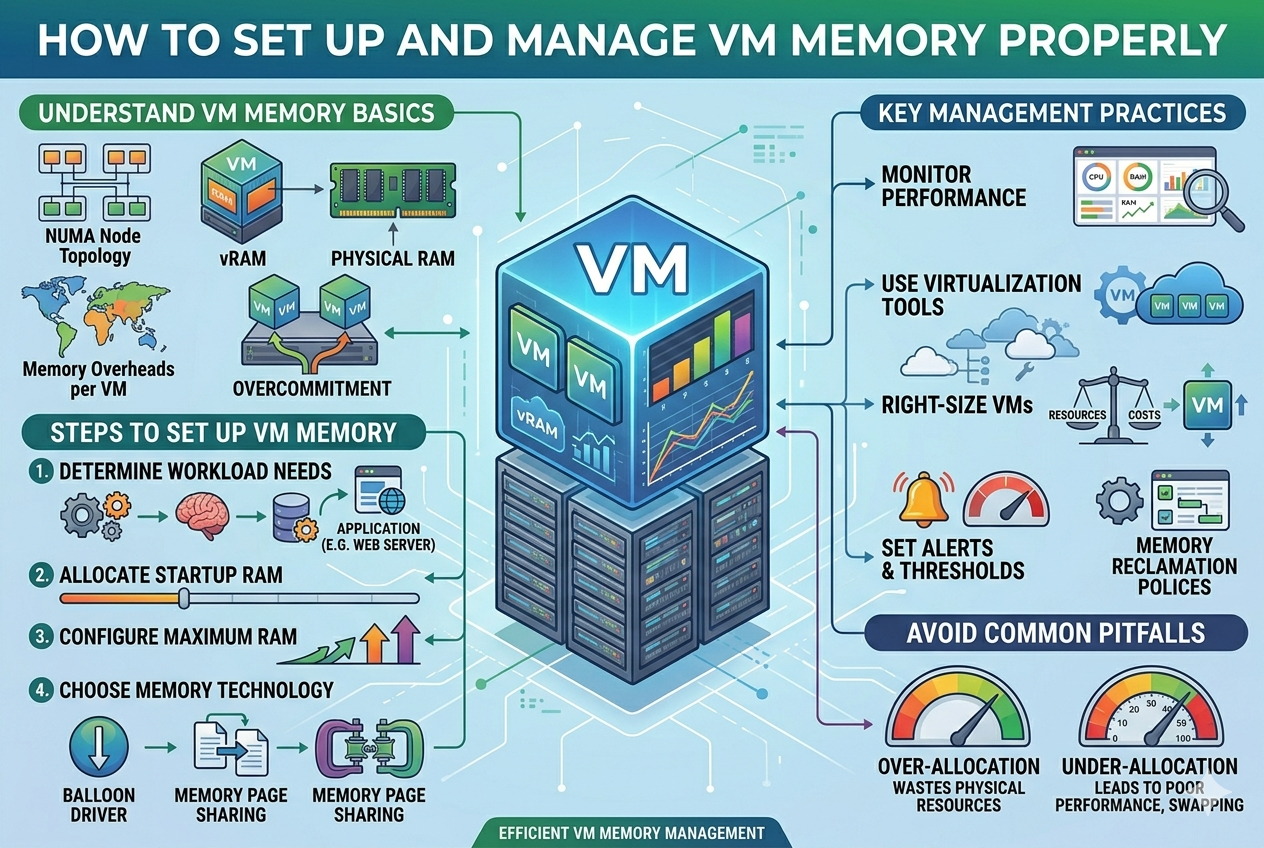

In virtualization, memory is not statically isolated unless explicitly configured that way. Instead, most hypervisors dynamically manage memory distribution between the host and running virtual machines. This means that unused memory in one virtual machine can sometimes be reclaimed and reassigned to another. However, this flexibility depends heavily on the hypervisor’s memory management techniques. Without proper control, memory contention can occur, especially when multiple virtual machines become active at the same time.

Understanding Memory Overhead in Virtualization

Every virtual machine consumes more memory than is assigned to it. This additional usage is known as memory overhead. It includes space required for virtualization processes, device emulation, and system bookkeeping. As more virtual machines run simultaneously, this overhead increases and can reduce the total available memory on the host more than expected. Ignoring this overhead often leads to incorrect assumptions about available resources, which can result in overloading the system unintentionally.
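The gap between assigned RAM and what the host actually has to provide can be made concrete with a small planning helper. This is a sketch only: `VmSpec`, `host_memory_required`, and every overhead figure below are hypothetical values chosen for illustration, not numbers published by any hypervisor.

```python
from dataclasses import dataclass

@dataclass
class VmSpec:
    name: str
    assigned_mib: int   # RAM the guest sees
    overhead_mib: int   # assumed per-VM virtualization overhead (illustrative)

def host_memory_required(vms, host_reserve_mib):
    """Physical RAM needed: guest RAM + per-VM overhead + memory kept for the host itself."""
    guest_total = sum(vm.assigned_mib for vm in vms)
    overhead_total = sum(vm.overhead_mib for vm in vms)
    return guest_total + overhead_total + host_reserve_mib

vms = [VmSpec("web", 4096, 150), VmSpec("db", 16384, 400), VmSpec("dev", 2048, 100)]
naive = sum(vm.assigned_mib for vm in vms)              # what a quick estimate would use
needed = host_memory_required(vms, host_reserve_mib=2048)
print(naive, needed)  # 22528 25226
```

With these assumed figures, the naive sum underestimates the real requirement by about 2.6 GiB, and the difference grows with every VM added.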

The Role of Memory Ballooning

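Memory ballooning is a technique used by many modern hypervisors to optimize memory usage. It relies on a lightweight balloon driver installed inside the virtual machine. When the host is under pressure, the hypervisor asks the driver to "inflate": the driver allocates memory from the guest operating system and pins it, so the guest stops using those pages and the hypervisor can reclaim the physical memory behind them. This reclaimed memory is then redistributed to other virtual machines that need it more urgently. Because the guest itself chooses which pages to give up, it can drop caches or swap out its own least valuable pages instead of the host swapping blindly. While this improves efficiency, it also means that performance inside a virtual machine may fluctuate depending on overall system demand. If mismanaged, ballooning can lead to unexpected slowdowns inside guest systems.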
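A toy model helps show why ballooning is cheap when guests have free memory and painful when they do not. The sketch below is a simplification under stated assumptions: the `Guest` fields, the `inflate_balloons` helper, and the policy of taking memory from the guests with the most free RAM first are all hypothetical, not how any specific hypervisor schedules balloon targets.

```python
from dataclasses import dataclass

@dataclass
class Guest:
    name: str
    assigned_mib: int
    in_use_mib: int        # memory the guest's applications actively need
    balloon_mib: int = 0   # memory currently pinned by the balloon driver

    @property
    def free_mib(self):
        return self.assigned_mib - self.in_use_mib - self.balloon_mib

def inflate_balloons(guests, needed_mib):
    """Inflate balloons, starting with the guests that have the most free memory.

    Only guest-free memory is taken, so in this simplified model no guest is
    forced to swap. Returns the MiB actually reclaimed, which may fall short.
    """
    reclaimed = 0
    for guest in sorted(guests, key=lambda g: g.free_mib, reverse=True):
        if reclaimed >= needed_mib:
            break
        take = min(guest.free_mib, needed_mib - reclaimed)
        guest.balloon_mib += take
        reclaimed += take
    return reclaimed

guests = [Guest("a", 8192, 3000), Guest("b", 4096, 3900)]
got = inflate_balloons(guests, 6000)
print(got, [g.balloon_mib for g in guests])  # 5388 [5192, 196]
```

Here the host asks for 6000 MiB but only 5388 MiB is free inside the guests. A real balloon driver pushed past that point makes the guest page out its own memory, which is where the slowdowns described above come from.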

Memory Swapping and Its Impact

When physical memory becomes insufficient, both host and guest systems may begin using disk-based swap space. Swapping is significantly slower than RAM, which means performance drops noticeably when it occurs. In a virtualized environment, swapping at the host level is particularly damaging because it affects all running virtual machines simultaneously. Guest-level swapping also degrades performance, but its impact is usually limited to that specific virtual machine. A well-designed system aims to minimize both types of swapping as much as possible.
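The cost of swapping follows from a simple weighted average: if a fraction p of memory accesses must be served from swap, the average access time is (1 - p) * t_ram + p * t_swap. The latencies used below (about 100 ns for DRAM and about 100 µs for an SSD-backed page fault) are assumed orders of magnitude for illustration, not measurements of any particular system.

```python
def effective_access_ns(fault_rate, ram_ns=100.0, swap_ns=100_000.0):
    """Average memory access time when a fraction of accesses are served from swap.

    Default latencies are rough, assumed orders of magnitude, not benchmarks.
    """
    if not 0.0 <= fault_rate <= 1.0:
        raise ValueError("fault_rate must be between 0 and 1")
    return (1.0 - fault_rate) * ram_ns + fault_rate * swap_ns

for p in (0.0, 0.0001, 0.001, 0.01):
    print(f"fault rate {p:.4f}: {effective_access_ns(p):.1f} ns")
```

Under these assumptions, one access in a thousand going to swap roughly doubles the average access time, and one in a hundred makes it about eleven times slower. Slower storage raises `swap_ns` and makes the curve steeper.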

Dynamic Memory Behavior in Modern Virtualization

Dynamic memory systems adjust allocation based on real-time demand. Instead of assigning a fixed amount of RAM permanently, the hypervisor increases or decreases memory based on workload activity. This is useful for environments where virtual machines experience fluctuating demand, such as testing or development systems. However, dynamic memory requires careful tuning. If minimum thresholds are set too low, virtual machines may struggle during sudden spikes in workload. If maximum limits are too high, they may still compete for resources under heavy load.
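The interaction between demand, minimum, and maximum can be sketched as a clamp: target the current demand plus a safety buffer, but never go below the configured minimum or above the maximum. The function name and the 20% `buffer_pct` default are hypothetical; platforms that offer dynamic memory expose similar settings under their own names.

```python
def dynamic_target_mib(demand_mib, minimum_mib, maximum_mib, buffer_pct=20):
    """Target allocation: demand plus an assumed safety buffer, clamped to [minimum, maximum]."""
    if minimum_mib > maximum_mib:
        raise ValueError("minimum must not exceed maximum")
    wanted = demand_mib * (100 + buffer_pct) // 100
    return max(minimum_mib, min(wanted, maximum_mib))

print(dynamic_target_mib(1000, 512, 4096))  # 1200: demand plus buffer fits within the range
print(dynamic_target_mib(100, 512, 4096))   # 512: the minimum acts as a floor
print(dynamic_target_mib(5000, 512, 4096))  # 4096: the maximum caps a spike
```

The third case is the risk the paragraph describes: during a spike the VM is pinned at its maximum, and if the minimum was set too low, the VM may not have enough memory when the spike begins.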

NUMA Awareness in Memory Management

Non-Uniform Memory Access, commonly known as NUMA, plays an important role in high-performance systems. In NUMA architectures, memory is divided into regions tied to specific CPU sockets. Accessing memory within the same NUMA node is faster than accessing memory from another node. If virtual machines are not configured with NUMA awareness, they may suffer from performance degradation due to cross-node memory access. Proper alignment of virtual CPUs and memory with NUMA nodes ensures more efficient processing and reduced latency.
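A basic NUMA-aware placement check asks whether a VM's vCPUs and memory can both fit inside a single node. The sketch below uses a hypothetical `NumaNode` record with free cores and free memory. It ignores hyperthreading, overhead, and existing pinning, so treat it as an illustration of the rule rather than a scheduler.

```python
from dataclasses import dataclass

@dataclass
class NumaNode:
    node_id: int
    free_cores: int
    free_memory_mib: int

def fitting_nodes(nodes, vcpus, memory_mib):
    """IDs of NUMA nodes that can hold all of the VM's vCPUs and memory locally."""
    return [n.node_id for n in nodes
            if vcpus <= n.free_cores and memory_mib <= n.free_memory_mib]

host = [NumaNode(0, 16, 65536), NumaNode(1, 16, 65536)]
print(fitting_nodes(host, vcpus=8, memory_mib=32768))   # [0, 1]
print(fitting_nodes(host, vcpus=24, memory_mib=32768))  # []: the VM must span nodes
```

An empty result means the VM will incur some cross-node access. At that point, exposing the NUMA topology to the guest lets its operating system place memory sensibly.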

Importance of Memory Reservation

Memory reservation ensures that a virtual machine is guaranteed a minimum amount of physical RAM at all times. Unlike dynamic allocation, reserved memory cannot be reclaimed by the host or shared with other virtual machines. This approach is commonly used for critical workloads where predictable performance is required. While reservation improves stability, it reduces overall flexibility because reserved memory is permanently tied to that virtual machine, even when it is idle.

Memory Limits and Control Policies

Setting memory limits helps prevent a single virtual machine from consuming excessive resources. These limits define the maximum amount of memory a virtual machine can use, regardless of availability on the host. Combined with reservations, limits allow administrators to create controlled environments where resources are distributed fairly. However, overly strict limits can restrict performance and prevent applications from using available memory efficiently when needed.
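Reservations and limits combine naturally into an admission check. Each VM's reservation must not exceed its limit, and the host can only promise what it physically has, so the sum of reservations must fit in capacity. `MemoryPolicy` and `admit` are hypothetical names, and this sketch ignores per-VM overhead for brevity.

```python
from dataclasses import dataclass

@dataclass
class MemoryPolicy:
    reservation_mib: int  # guaranteed physical RAM
    limit_mib: int        # hard ceiling on usage

def admit(policies, host_capacity_mib):
    """Admission control: consistent per-VM policies and reservations that fit the host."""
    if any(p.reservation_mib > p.limit_mib for p in policies):
        return False
    return sum(p.reservation_mib for p in policies) <= host_capacity_mib

running = [MemoryPolicy(8192, 16384), MemoryPolicy(4096, 8192)]
print(admit(running, 16384))                             # True: 12288 MiB reserved
print(admit(running + [MemoryPolicy(8192, 8192)], 16384))  # False: 20480 MiB would be reserved
```

Limits are not summed here: they cap individual VMs, while reservations are the promises that must never exceed physical memory.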

Guest Operating System Memory Behavior

Inside each virtual machine, the guest operating system manages memory just like a physical machine. It allocates memory to applications, caches frequently used data, and uses swap space when necessary. The behavior of the guest OS plays a major role in overall performance. For example, aggressive caching inside the guest can sometimes mask underlying memory pressure at the host level, making it harder to diagnose performance issues.

Host-Level Memory Optimization Techniques

The host system uses several strategies to optimize memory usage across virtual machines. These include deduplication of identical memory pages, compression of less frequently used memory, and prioritization of active workloads. These techniques help maximize efficiency, but they also introduce additional processing overhead. When the system is under heavy load, the benefits of optimization may be reduced, and performance tuning becomes necessary.

Impact of Workload Type on Memory Planning

Different workloads behave differently in terms of memory consumption. Databases often require large, stable memory allocations to cache data efficiently. Web servers may experience fluctuating demand depending on traffic levels. Development environments tend to be unpredictable, with varying usage patterns. Understanding the nature of each workload is essential when designing memory allocation strategies, as a one-size-fits-all approach rarely works effectively.

Common Mistakes in VM Memory Configuration

One of the most common mistakes is allocating maximum possible memory to every virtual machine without considering the host’s limitations. Another frequent issue is ignoring background system processes that also require memory on the host. In some cases, administrators rely too heavily on dynamic memory without setting proper minimum thresholds, leading to instability during peak usage. Poor monitoring practices also contribute to memory-related problems going unnoticed until performance degrades significantly.

Monitoring and Diagnosing Memory Issues

Effective monitoring involves tracking both host and guest memory usage continuously. On the host, indicators such as swap activity, memory pressure, and balloon driver behavior provide insights into system health. Inside virtual machines, memory consumption, cache usage, and paging activity reveal how efficiently applications are using resources. Correlating data from both levels is essential for identifying the root cause of performance issues.
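On a Linux host or guest, a convenient starting point is `/proc/meminfo`, where each line has the form `Name:   value kB`. The sketch below parses that format from a string, so it runs anywhere. On a live Linux system you could pass `open("/proc/meminfo").read()` instead. The summary function and the sample values are illustrative.

```python
def parse_meminfo(text):
    """Parse /proc/meminfo-style text into {field: value}; sizes are in kB."""
    values = {}
    for line in text.splitlines():
        key, sep, rest = line.partition(":")
        if not sep:
            continue
        fields = rest.split()
        if fields:
            values[key.strip()] = int(fields[0])
    return values

def pressure_summary(info):
    """(percent of RAM not available without swapping, swap in use in kB)."""
    used_pct = 100 * (info["MemTotal"] - info["MemAvailable"]) / info["MemTotal"]
    swap_used_kb = info["SwapTotal"] - info["SwapFree"]
    return round(used_pct, 1), swap_used_kb

sample = """MemTotal:       16000000 kB
MemFree:          500000 kB
MemAvailable:    4000000 kB
SwapTotal:       2000000 kB
SwapFree:        1500000 kB
HugePages_Total:       0
"""
print(pressure_summary(parse_meminfo(sample)))  # (75.0, 500000)
```

Swap in use is only a snapshot. Ongoing swap activity is better tracked as the rate of change of the `pswpin` and `pswpout` counters in `/proc/vmstat`, sampled at both the host and guest levels so the two can be correlated.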

Performance Tuning Strategies

Optimizing memory performance often involves adjusting multiple factors simultaneously. Reducing the number of active virtual machines can immediately improve stability. Adjusting memory allocation based on actual workload rather than estimated needs also helps. In some cases, enabling or disabling specific optimization features such as compression or deduplication can lead to better results depending on the environment. Fine-tuning requires iterative testing and observation rather than fixed rules.

Memory Fragmentation Challenges

Over time, memory in a host system can become fragmented due to continuous allocation and deallocation by virtual machines. Fragmentation makes it harder for the system to allocate large contiguous memory blocks, which can affect performance for memory-intensive workloads. Some hypervisors include mechanisms to mitigate fragmentation, but in heavily loaded systems, periodic maintenance or workload redistribution may be necessary.

Security Considerations in Memory Management

Memory sharing techniques such as deduplication can introduce potential security concerns in multi-tenant environments. Although modern systems implement safeguards, there is still a theoretical risk of information leakage between virtual machines under certain conditions. For this reason, some environments disable memory sharing features entirely when strict isolation is required. Security policies often influence how aggressively memory optimization features are used.

Real-World Memory Management Scenarios

In production environments, memory management is rarely static. A cloud server hosting multiple services may experience unpredictable spikes in demand, requiring dynamic redistribution of resources. A development environment may require frequent resizing of virtual machines as projects evolve. In enterprise systems, careful planning ensures that mission-critical applications always have guaranteed access to memory, even during peak load conditions. Each scenario demands a slightly different approach to balancing flexibility and stability.

Long-Term Memory Strategy Planning

Sustainable virtual machine environments require long-term planning rather than reactive adjustments. As workloads grow, memory requirements increase, and host systems must be upgraded accordingly. Regular audits of memory usage help identify inefficiencies and prevent resource exhaustion. A well-designed strategy anticipates future growth and leaves enough headroom for expansion without requiring constant restructuring of the environment.

Effective Memory Management

Proper virtual machine memory management is a combination of planning, monitoring, and continuous adjustment. It is not enough to simply assign RAM to virtual machines and expect stable performance. Instead, administrators must understand how memory flows between host and guest systems, how workloads behave under different conditions, and how optimization features influence overall performance. When managed carefully, memory becomes a powerful resource that allows virtualized environments to run efficiently, reliably, and at scale.

Advanced Memory Overcommit Strategies

Memory overcommitment is sometimes used in virtualized environments to maximize hardware utilization. It allows the total allocated memory across all virtual machines to exceed the physical memory available on the host system. This approach is based on the assumption that not all virtual machines will use their maximum assigned memory at the same time. While this assumption is often true in lightly loaded systems, it becomes risky in environments with consistent or unpredictable workloads. When demand suddenly increases, the host may struggle to satisfy memory requests, leading to swapping or performance degradation across all virtual machines simultaneously.
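Two numbers capture the risk described above: the overcommit ratio (assigned memory divided by physical memory) and the worst-case shortfall if every VM touches all of its memory at once. The allocations below are hypothetical.

```python
def overcommit_ratio(assigned_mib, physical_mib):
    """Total memory assigned to guests divided by physical host memory."""
    return sum(assigned_mib) / physical_mib

def worst_case_shortfall_mib(assigned_mib, physical_mib, host_reserve_mib=0):
    """MiB that RAM cannot back if every VM uses its full allocation simultaneously."""
    return max(0, sum(assigned_mib) + host_reserve_mib - physical_mib)

vms = [16384, 16384, 8192, 8192, 4096]   # hypothetical per-VM allocations in MiB
print(overcommit_ratio(vms, 32768))                # 1.625
print(worst_case_shortfall_mib(vms, 32768, 2048))  # 22528
```

A ratio of 1.625 may be harmless if the VMs rarely peak together. The shortfall figure shows how much memory would have to come from ballooning, compression, or swap if they did.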

Understanding Memory Compression Techniques

Memory compression is a method used by some hypervisors to reduce the impact of memory pressure. Instead of immediately moving inactive memory pages to disk, the system compresses them in RAM, allowing more data to remain in faster memory storage. This reduces reliance on slower disk-based swap mechanisms and improves responsiveness under moderate memory pressure. However, compression itself requires CPU resources, so its effectiveness depends on the balance between available processing power and memory demand. In CPU-constrained environments, heavy use of compression can shift the bottleneck from memory to processing.
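The trade-off can be illustrated with Python's `zlib`. Each page is compressed at a fast level, since CPU cost matters, and kept in RAM only if it shrinks enough. Otherwise it would go to swap. The 4 KiB page size is the common x86-64 default. The "keep only if compressed to at most half a page" rule is an assumed policy for illustration, not a documented hypervisor setting.

```python
import os
import zlib

PAGE_SIZE = 4096
KEEP_IF_AT_MOST = PAGE_SIZE // 2  # assumed policy: keep pages that compress to <= half size

def try_compress(page: bytes):
    """Return the compressed page if it is worth keeping in RAM, else None (swap it)."""
    compressed = zlib.compress(page, 1)  # level 1: fastest, since compression costs CPU
    return compressed if len(compressed) <= KEEP_IF_AT_MOST else None

zero_page = bytes(PAGE_SIZE)
text_page = (b"user=alice status=active " * 200)[:PAGE_SIZE]
random_page = os.urandom(PAGE_SIZE)   # stands in for encrypted or already-compressed data

for name, page in [("zero", zero_page), ("text", text_page), ("random", random_page)]:
    result = try_compress(page)
    print(name, "kept compressed" if result is not None else "not compressible")
```

The random page shows the wasted-effort case: the CPU time is spent, but nothing is saved. In environments with many such pages, or with little spare CPU, compression can cost more than it returns.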

Role of Transparent Memory Sharing

In environments where multiple virtual machines run similar operating systems or applications, there is often a significant overlap in memory content. Transparent memory sharing identifies identical memory pages across virtual machines and stores only one copy in physical memory. This reduces overall memory consumption and improves efficiency. However, modern security considerations have led many systems to reduce or disable this feature in high-security environments due to theoretical risks of cross-VM data leakage. The effectiveness of memory sharing also decreases when workloads are highly diverse.
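The core idea of transparent sharing can be sketched as content-based deduplication. Pages are bucketed by a hash of their contents and then compared byte for byte before two pages are backed by the same physical copy. `share_identical_pages` and the sample pages are hypothetical, and this is a simplified model, not any hypervisor's implementation.

```python
import hashlib

def share_identical_pages(pages):
    """Back identical pages with a single physical copy.

    Returns (physical_page_count, mapping), where mapping[i] is the index of the
    physical copy backing logical page i. Pages are bucketed by SHA-256 digest and
    then compared byte for byte, so a hash collision can never merge different pages.
    """
    physical = []   # distinct page contents actually kept in RAM
    buckets = {}    # digest -> indices into `physical` with that digest
    mapping = []
    for page in pages:
        candidates = buckets.setdefault(hashlib.sha256(page).digest(), [])
        for idx in candidates:
            if physical[idx] == page:
                mapping.append(idx)
                break
        else:
            physical.append(page)
            candidates.append(len(physical) - 1)
            mapping.append(len(physical) - 1)
    return len(physical), mapping

kernel_page = b"\x01" * 4096   # stand-in for a page of shared OS code
libc_page = b"\x02" * 4096
pages = [kernel_page, libc_page, b"a" * 4096,   # VM 1
         kernel_page, libc_page, b"b" * 4096,   # VM 2
         kernel_page, libc_page, b"c" * 4096]   # VM 3
count, mapping = share_identical_pages(pages)
print(count, mapping)   # 5 [0, 1, 2, 0, 1, 3, 0, 1, 4]
```

A real implementation, such as Linux KSM, must also mark shared pages copy-on-write so that a write by one VM gets a private copy. The timing difference of that copy is one source of the cross-VM information leakage concern.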

Huge Pages and Their Performance Impact

Memory is typically managed in small fixed-size units called pages, commonly 4 KiB on x86-64 systems. Huge pages increase the size of these units (2 MiB or 1 GiB on x86-64), allowing the system to manage memory more efficiently. By reducing the number of pages the system needs to track, and the number of address translations the CPU must cache in its translation lookaside buffer (TLB), huge pages can decrease overhead and improve performance for memory-intensive applications. Databases and large-scale computing workloads often benefit from this optimization. However, huge pages also reduce flexibility in memory allocation, which means they must be configured carefully to avoid wasted memory or allocation inefficiencies.
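Both sides of the trade-off can be shown with plain arithmetic, assuming 4 KiB base pages and 2 MiB huge pages. The VM size and allocation size below are arbitrary examples.

```python
KIB = 1024
MIB = 1024 * KIB
GIB = 1024 * MIB

def pages_needed(size_bytes, page_bytes):
    """Pages required to map size_bytes, rounding up to whole pages."""
    return -(-size_bytes // page_bytes)

def rounding_waste(size_bytes, page_bytes):
    """Bytes lost because the allocation is rounded up to whole pages."""
    return pages_needed(size_bytes, page_bytes) * page_bytes - size_bytes

vm_ram = 64 * GIB
print(pages_needed(vm_ram, 4 * KIB))            # 16777216 mappings with 4 KiB pages
print(pages_needed(vm_ram, 2 * MIB))            # 32768 mappings with 2 MiB pages
print(rounding_waste(3 * MIB, 2 * MIB) // MIB)  # 1 MiB wasted on a 3 MiB allocation
```

The first two lines show the benefit: 512 times fewer mappings to track. The last line shows the inflexibility: an allocation that does not fill its final huge page wastes the remainder.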

Memory Latency and Performance Sensitivity

Not all memory is accessed equally in a virtualized environment. Some workloads are highly sensitive to memory latency, meaning even small delays in memory access can significantly impact performance. In such cases, it is not only the amount of memory that matters but also how quickly it can be accessed. Factors such as NUMA alignment, CPU scheduling, and memory locality all contribute to latency behavior. Ensuring that virtual machines are placed close to their allocated memory resources can significantly reduce latency-related performance issues.

Memory Hot-Plug and Dynamic Scaling

Some modern virtualization platforms support memory hot-plugging, allowing memory to be added to or removed from a running virtual machine without requiring a reboot. This feature is particularly useful in cloud environments where workloads can scale rapidly. It provides flexibility to adjust resources in real time based on demand. However, not all operating systems fully support memory hot-plug functionality, and improper configuration can lead to instability or inconsistent memory reporting inside the guest system.

Impact of Background Services on Memory Usage

Both host and guest systems run background services that consume memory even when no active workloads are present. These services include system monitoring tools, update processes, logging mechanisms, and security agents. While each service may consume only a small amount of memory individually, their combined usage can become significant in environments running multiple virtual machines. Ignoring these background processes during memory planning can lead to unexpected resource shortages.

Memory Allocation in Multi-Tenant Environments

In shared environments where multiple users or departments run virtual machines on the same infrastructure, memory management becomes more complex. Each tenant expects consistent performance, which requires strict isolation and predictable resource allocation. To achieve this, administrators often use a combination of reservations, limits, and prioritization policies. This ensures that no single tenant can consume disproportionate resources at the expense of others. Fair allocation is essential for maintaining stability and user satisfaction in such environments.

Virtual Machine Memory Lifecycle

Memory usage in a virtual machine follows a lifecycle that changes over time. When a virtual machine starts, it consumes a baseline amount of memory for the operating system. As applications launch, memory usage increases dynamically. Over time, caching mechanisms may further increase memory consumption as the system stores frequently accessed data. When applications close or workloads decrease, memory is gradually released. However, not all memory is immediately returned to the host, as operating systems often retain cached data for performance reasons.

Balancing CPU and Memory Resources

Memory performance is closely tied to CPU availability. Memory optimization techniques are not free: compression and page deduplication consume host CPU cycles, and ballooning depends on the guest getting enough CPU time to respond to the hypervisor. On a host that is short on processing power, these techniques may not function effectively, and memory management operations can be delayed, leading to inconsistent performance. A balanced allocation of both CPU and memory resources is essential for maintaining overall system stability and responsiveness.

Diagnosing Memory Bottlenecks

Identifying memory bottlenecks requires careful analysis of both system metrics and workload behavior. Symptoms such as slow application response, high swap usage, or inconsistent performance often indicate memory pressure. However, the root cause may not always be insufficient memory allocation. It could also result from inefficient application design, excessive caching, or poor NUMA configuration. Accurate diagnosis requires correlating multiple performance indicators rather than relying on a single metric.

Memory Prioritization in Mixed Workloads

When multiple types of workloads run on the same host, prioritization becomes essential. Critical applications such as databases or production services must be given higher priority compared to development or testing environments. This ensures that essential services remain stable even during peak usage. Prioritization can be achieved through reservation policies, scheduling rules, or resource isolation techniques. Without proper prioritization, less important workloads may consume resources needed by critical systems.

Impact of Storage Performance on Memory Behavior

Although memory and storage are separate resources, they are closely interconnected in virtual environments. When memory pressure leads to swapping, storage performance becomes a critical factor. Slow storage systems significantly worsen the impact of memory shortages. Conversely, fast storage solutions can partially mitigate the effects of swapping. This relationship highlights the importance of balancing both memory and storage performance when designing virtualized systems.

Scaling Memory in Growing Environments

As virtualized environments expand, memory requirements naturally increase. Scaling memory effectively requires both hardware upgrades and careful redistribution of workloads. Simply adding more physical memory is not always sufficient if existing virtual machines are not optimized. Regular reassessment of memory allocation ensures that resources are being used efficiently and that no system is consuming more than necessary. Proactive scaling prevents performance degradation during periods of rapid growth.

Common Misconceptions in Memory Management

One common misconception is that more memory automatically guarantees better performance. While sufficient memory is important, inefficient usage can still lead to performance issues. Another misconception is that memory reported as in use is memory the workload actually needs. Modern operating systems deliberately fill otherwise idle memory with cache, which improves overall efficiency but makes a guest look nearly full even when much of that memory could be released on demand. Reading that figure at face value leads some administrators to over-allocate resources unnecessarily. Misunderstanding these principles often results in poor configuration decisions.

Memory Stability in Long-Running Systems

In long-running virtual machine environments, memory behavior can change over time due to fragmentation, leaks, or gradual workload growth. Applications may slowly consume more memory without releasing it properly, leading to what appears to be memory exhaustion. Regular monitoring and periodic restarts of critical systems can help maintain stability. Long-term memory stability requires ongoing maintenance rather than one-time configuration.

Best Practices for Sustainable Memory Design

A sustainable memory design approach focuses on flexibility, predictability, and observability. Memory should be allocated based on real usage patterns rather than theoretical maximums. Systems should be monitored continuously to detect inefficiencies early. Flexibility ensures that resources can be adjusted as workloads evolve, while predictability ensures that critical applications remain stable under load. Observability allows administrators to make informed decisions based on actual performance data.

Virtual Memory Management

Effective virtual machine memory management is a continuous process that extends beyond initial configuration. It requires understanding how memory behaves at both the host and guest levels, how workloads interact with shared resources, and how optimization techniques influence performance. When managed properly, memory becomes a highly efficient resource that supports stable, scalable, and responsive virtualized environments. When mismanaged, it quickly becomes a bottleneck that affects every layer of the system.

Memory Behavior in Cloud and Scalable Environments

In cloud and large-scale virtualized systems, memory management becomes more dynamic and abstracted compared to traditional single-host setups. Resources are no longer tied to one physical machine but distributed across clusters of hosts. This introduces flexibility but also increases complexity. In most platforms, a virtual machine's memory resides on the host where it runs, so the cluster balances memory demand by placing new workloads on hosts with spare capacity and by migrating running workloads between hosts. While this improves efficiency, it also means memory performance is influenced by network bandwidth and latency during migrations, cluster scheduling decisions, and resource availability across multiple machines. Proper planning in such environments focuses on elasticity, ensuring that workloads can grow or shrink without causing instability.

NUMA Optimization in High-Performance Workloads

NUMA architecture plays a critical role in systems with multiple CPU sockets. Memory is divided into zones associated with specific processors, and accessing local memory is significantly faster than accessing memory from a remote node. When virtual machines are not aligned properly with NUMA boundaries, performance degradation occurs due to increased memory access latency. High-performance workloads such as databases and analytics systems are especially sensitive to this. Effective NUMA optimization involves ensuring that virtual CPUs and memory are allocated within the same physical node whenever possible, reducing cross-node traffic and improving consistency in execution speed.

Memory Reclamation and Eviction Mechanisms

When a system approaches memory exhaustion, hypervisors use reclamation techniques to recover unused or low-priority memory. This process may involve reclaiming cached pages, compressing memory regions, or evicting idle data from active memory into slower storage. Eviction policies determine which memory pages are removed first based on usage patterns and priority. While these mechanisms help prevent system crashes, they can introduce performance fluctuations. If reclamation becomes frequent, it usually indicates that the system is overcommitted or that workloads are not properly balanced.
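A classic eviction policy is least recently used (LRU): when memory is full, the page that has gone untouched the longest is the first to be pushed out. The sketch below uses `collections.OrderedDict` to implement exact LRU over a fixed number of page slots. Production systems usually approximate LRU more cheaply (Linux, for example, uses active and inactive page lists), so treat this as an illustration of the policy rather than of any hypervisor's code.

```python
from collections import OrderedDict

class LruPageCache:
    """Fixed-capacity page store that evicts the least recently used page."""

    def __init__(self, capacity_pages):
        self.capacity = capacity_pages
        self.pages = OrderedDict()   # page_id -> data, least recently used first
        self.evicted = []            # page_ids pushed out to slower storage

    def access(self, page_id, data=None):
        if page_id in self.pages:
            self.pages.move_to_end(page_id)          # mark as most recently used
            return self.pages[page_id]
        if len(self.pages) >= self.capacity:
            victim, _ = self.pages.popitem(last=False)  # least recently used page
            self.evicted.append(victim)
        self.pages[page_id] = data
        return data

cache = LruPageCache(capacity_pages=3)
for pid in ["A", "B", "C", "A", "D", "B", "E"]:
    cache.access(pid)
print(cache.evicted)      # ['B', 'C', 'A']
print(list(cache.pages))  # ['D', 'B', 'E']
```

Note that B is evicted and then immediately needed again. When that pattern repeats constantly, it is the thrashing that signals an overcommitted system.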

Understanding Memory Contention in Dense Environments

Memory contention occurs when multiple virtual machines compete for limited physical memory resources. This is common in dense environments where high consolidation ratios are used to maximize hardware efficiency. While consolidation improves cost efficiency, it increases the risk of performance interference between workloads. One virtual machine experiencing heavy memory demand can indirectly impact others running on the same host. Contention issues are typically resolved by adjusting allocation policies, redistributing workloads, or reducing overall density to restore stability.

Memory Tuning for Predictable Performance

Memory tuning involves adjusting allocation strategies to achieve consistent and predictable system behavior. This includes setting appropriate reservation levels, defining maximum limits, and fine-tuning dynamic memory ranges. Predictability is often more important than maximum utilization in production systems. Stable memory performance ensures that applications behave consistently under varying loads. Tuning also involves evaluating cache behavior, swap activity, and workload characteristics to eliminate unnecessary variability in performance.

Virtual Memory Fragmentation Over Time

As virtual machines run for extended periods, memory fragmentation becomes an increasingly important issue. Fragmentation occurs when free memory is split into small non-contiguous blocks, making it difficult to allocate large continuous regions when needed. This can impact performance for workloads that require large memory allocations. Although modern hypervisors include mechanisms to reduce fragmentation, such as background defragmentation or memory compaction, heavily loaded systems may still experience inefficiencies that require workload redistribution or scheduled maintenance.

Memory Behavior in Containerized vs Virtualized Systems

Containers and virtual machines manage memory differently, even though they often run on the same underlying infrastructure. Virtual machines allocate dedicated memory spaces managed by a hypervisor, while containers share the host operating system kernel and rely on cgroup limits for memory control. This makes containers more lightweight but also more sensitive to host-level memory pressure. In mixed environments, container workloads can be affected by virtual machine memory usage if both compete for the same physical resources. Understanding this relationship is essential when designing hybrid infrastructure systems.

Impact of Caching on Perceived Memory Usage

Operating systems aggressively use available memory for caching frequently accessed data to improve performance. This can make memory usage appear higher than it actually is, leading to confusion during monitoring. Cached memory is not necessarily “consumed” in the traditional sense, as it can be quickly released when applications require additional resources. However, in virtualized environments, excessive caching across multiple virtual machines can contribute to overall memory pressure at the host level. Proper interpretation of cache usage is important to avoid incorrect scaling decisions.
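On Linux, the difference between apparent and real usage shows up directly in `/proc/meminfo`. `MemTotal - MemFree` counts cache as used, while `MemTotal - MemAvailable` subtracts the kernel's estimate of memory that can be reclaimed without swapping. The helper and sample values below are illustrative, with values in kB.

```python
def memory_views_kb(info):
    """Two readings of 'used' memory from /proc/meminfo-style values (kB).

    naive: everything not free, which counts reclaimable cache as used.
    available_based: MemTotal - MemAvailable, i.e. memory the kernel estimates
    cannot be handed to new workloads without swapping.
    """
    naive = info["MemTotal"] - info["MemFree"]
    available_based = info["MemTotal"] - info["MemAvailable"]
    return naive, available_based

guest = {"MemTotal": 16_000_000, "MemFree": 500_000, "MemAvailable": 9_000_000}
naive, real = memory_views_kb(guest)
print(f"naive: {100 * naive / guest['MemTotal']:.1f}%  available-based: {100 * real / guest['MemTotal']:.1f}%")
```

With these sample values, the naive view suggests the guest is almost full (about 97%), while the available-based view shows under half of its memory is truly committed. Scaling decisions based on the first number would over-allocate.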

Memory Scheduling and Resource Fairness

Memory scheduling determines how memory resources are distributed among competing virtual machines. Fair scheduling ensures that no single workload monopolizes system resources while others are starved. Some systems use proportional allocation, where memory is distributed based on predefined weights or priorities. Others rely on dynamic adjustments based on real-time demand. Poor scheduling can lead to unpredictable performance and resource starvation in lower-priority systems, especially during peak usage periods.
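Proportional allocation can be sketched as a "water-filling" loop. Capacity is split by weight (sometimes called shares), no VM receives more than it demands, and whatever satisfied VMs leave over is redistributed among the rest. `allocate_by_shares` and the demand and weight figures are hypothetical.

```python
def allocate_by_shares(capacity_mib, demands_mib, shares):
    """Split capacity in proportion to shares, never exceeding a VM's demand."""
    alloc = {vm: 0.0 for vm in demands_mib}
    active = {vm for vm, d in demands_mib.items() if d > 0}
    remaining = float(capacity_mib)
    while active and remaining > 1e-9:
        total_shares = sum(shares[vm] for vm in active)
        budget = remaining          # offers in this round are based on the same budget
        satisfied = set()
        for vm in sorted(active):
            need = demands_mib[vm] - alloc[vm]
            grant = min(budget * shares[vm] / total_shares, need)
            alloc[vm] += grant
            remaining -= grant
            if grant >= need:
                satisfied.add(vm)
        if not satisfied:
            break  # every VM took its full proportional slice; capacity is used up
        active -= satisfied
    return alloc

alloc = allocate_by_shares(12000, {"db": 8000, "web": 6000, "dev": 1000},
                           {"db": 2, "web": 1, "dev": 1})
print({vm: round(mib) for vm, mib in alloc.items()})  # {'db': 7333, 'web': 3667, 'dev': 1000}
```

The lower-weight `dev` VM still gets everything it asks for because its demand is small, and its unused slice flows to the others. This is how weighting avoids starving low-priority systems outright.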

Recovery Strategies During Memory Exhaustion

When a system approaches critical memory limits, recovery strategies are activated to prevent failure. These may include aggressive swapping, termination of low-priority processes, or forced reclamation of memory from idle virtual machines. In extreme cases, the system may temporarily reduce performance across all workloads to maintain stability. Recovery behavior depends heavily on configuration and hypervisor capabilities. Proper planning aims to avoid reaching this state altogether, as recovery actions often result in noticeable performance degradation.

Memory Isolation and Security Considerations

In multi-tenant environments, memory isolation is essential to ensure that one virtual machine cannot access data from another. Hypervisors enforce strict boundaries between memory spaces, but advanced optimization techniques such as page sharing or deduplication can introduce theoretical security risks. Although modern implementations include safeguards, some high-security environments disable these features entirely to eliminate potential vulnerabilities. The trade-off between performance optimization and security isolation must be carefully evaluated based on system requirements.

Long-Term Memory Drift and Performance Degradation

Over time, virtual machine environments may experience gradual performance drift due to inefficient memory usage patterns. Applications may slowly consume more memory without releasing it properly, leading to memory pressure that develops gradually rather than suddenly. This type of issue is often difficult to detect because it does not produce immediate failures. Instead, performance slowly declines until corrective action is taken. Regular system audits and monitoring are essential to detect and address memory drift before it becomes critical.
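Because drift is gradual, a trend line catches it earlier than a fixed threshold does. The sketch below fits a least-squares slope to daily memory samples and projects how long it will take to reach a limit. The function names, the sample data, and the choice to project from the latest observation are illustrative assumptions.

```python
def usage_trend_mib_per_day(samples):
    """Least-squares slope of (day, used_mib) samples."""
    n = len(samples)
    if n < 2:
        raise ValueError("need at least two samples")
    mean_x = sum(x for x, _ in samples) / n
    mean_y = sum(y for _, y in samples) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in samples)
    var = sum((x - mean_x) ** 2 for x, _ in samples)
    if var == 0:
        raise ValueError("samples need distinct timestamps")
    return cov / var

def days_until_limit(samples, limit_mib):
    """Projected days until usage reaches limit_mib, or None if there is no upward drift."""
    slope = usage_trend_mib_per_day(samples)
    if slope <= 0:
        return None
    _, latest_used = samples[-1]
    return (limit_mib - latest_used) / slope

samples = [(0, 4000), (1, 4050), (2, 4100), (3, 4150), (4, 4200)]
print(usage_trend_mib_per_day(samples))   # 50.0 MiB per day
print(days_until_limit(samples, 8192))    # about 80 days
```

No single sample here looks alarming, which is exactly why this kind of leak goes unnoticed. The slope makes the drift visible weeks before it becomes critical.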

Automation in Memory Management Systems

Modern virtualized environments increasingly rely on automation to manage memory efficiently. Automated systems can dynamically adjust allocation, migrate workloads, and rebalance resources without manual intervention. This improves responsiveness and reduces operational overhead. However, automation must be carefully configured to avoid unstable feedback loops where rapid adjustments lead to oscillating performance. Proper thresholds and control policies ensure that automation enhances stability rather than introducing unpredictability.
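Two common guards against oscillation are hysteresis (separate grow and shrink thresholds with a dead band between them) and a cooldown after each change. The controller below is a minimal sketch. `HysteresisScaler`, its thresholds, step size, and cooldown are hypothetical defaults, not recommendations.

```python
class HysteresisScaler:
    """Grow memory above a high-water mark, shrink below a low-water mark,
    and do nothing inside the dead band or during the cooldown window."""

    def __init__(self, high=0.85, low=0.50, step_mib=1024, cooldown=3):
        self.high, self.low, self.step = high, low, step_mib
        self.cooldown = cooldown                # ticks to wait after any change
        self.ticks_since_change = cooldown      # allow an immediate first action

    def decide(self, used_mib, assigned_mib):
        """Return the change in MiB to apply this tick (positive, negative, or 0)."""
        self.ticks_since_change += 1
        if self.ticks_since_change <= self.cooldown:
            return 0
        utilization = used_mib / assigned_mib
        if utilization > self.high:
            delta = self.step
        elif utilization < self.low:
            delta = -self.step
        else:
            return 0                            # dead band: leave allocation alone
        self.ticks_since_change = 0
        return delta

scaler = HysteresisScaler(cooldown=2)
readings = [(3900, 4096), (3900, 5120), (3900, 5120), (3900, 5120), (2000, 5120)]
decisions = [scaler.decide(used, assigned) for used, assigned in readings]
print(decisions)  # [1024, 0, 0, 0, -1024]
```

After growing, the controller waits out the cooldown and then holds steady while utilization sits in the dead band (about 76%). It shrinks only once demand genuinely falls, instead of flapping between states.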

Memory Pressure Indicators and Early Warning Signs

Detecting memory pressure early is essential for maintaining system stability. Common indicators include increasing swap usage, growing latency in application response times, and elevated memory reclamation activity. Advanced systems may also track metrics such as balloon driver activity or NUMA imbalance. Recognizing these early signs allows administrators to take corrective action before performance degradation becomes severe. Preventive adjustments are far more effective than reactive recovery.
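Early warnings are more reliable when a condition has to persist across several samples rather than fire on a single spike. The sketch below checks a few of the indicators named above against hypothetical thresholds and reports only those present in each of the last few samples. On Linux, a swap-out rate could be derived from changes in the `pswpout` counter in `/proc/vmstat`. The field names and threshold values here are assumptions to be tuned against your own baseline.

```python
from dataclasses import dataclass

@dataclass
class HostSample:
    swap_out_pages_per_s: float
    balloon_mib: int
    available_pct: float

# Hypothetical thresholds; tune against the environment's own baseline.
THRESHOLDS = {"swap_out_pages_per_s": 100.0, "balloon_mib": 2048, "available_pct": 10.0}

def active_warnings(sample):
    found = []
    if sample.swap_out_pages_per_s > THRESHOLDS["swap_out_pages_per_s"]:
        found.append("sustained swap-out")
    if sample.balloon_mib > THRESHOLDS["balloon_mib"]:
        found.append("balloon inflated")
    if sample.available_pct < THRESHOLDS["available_pct"]:
        found.append("low available memory")
    return found

def persistent_warnings(samples, consecutive=3):
    """Warnings present in every one of the last `consecutive` samples."""
    if len(samples) < consecutive:
        return []
    recent = [set(active_warnings(s)) for s in samples[-consecutive:]]
    return sorted(set.intersection(*recent))

history = [HostSample(250.0, 0, 18.0), HostSample(300.0, 4096, 15.0), HostSample(280.0, 0, 12.0)]
print(persistent_warnings(history))  # ['sustained swap-out']
```

The one-off balloon inflation is filtered out, while the swap activity that persists across all three samples is reported. It is the kind of signal worth acting on before degradation becomes severe.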

Balancing Performance and Efficiency in Design

A key challenge in virtual memory management is balancing performance with efficient resource utilization. Maximizing efficiency often involves high consolidation and dynamic allocation, while maximizing performance favors reserved and isolated resources. These two goals can conflict depending on system priorities. High-performance environments prioritize stability and predictability, while development or test environments may prioritize flexibility and density. Effective design requires aligning memory strategy with the intended purpose of the system.

Workload-Aware Memory Allocation Strategies

Different workloads require different memory allocation strategies. Memory-intensive workloads with steady demand, such as databases, benefit from stable, reserved memory allocations, while bursty workloads perform better with dynamic scaling. Understanding workload behavior allows for more intelligent allocation decisions that improve both performance and resource utilization. Workload-aware strategies reduce waste and ensure that memory is used where it is most needed at any given time.

Future Trends in Virtual Memory Management

Virtual memory management continues to evolve toward greater intelligence and automation. Emerging systems are increasingly capable of predicting memory demand based on historical usage patterns and adjusting allocation proactively. Integration with machine learning techniques is also being explored to improve prediction accuracy and reduce manual tuning requirements. As infrastructure becomes more complex, the ability to manage memory dynamically and intelligently will become even more critical for maintaining performance and efficiency.

Mastering VM Memory Management

Effective memory management in virtualized environments is not a static configuration task but an ongoing process of observation, adjustment, and optimization. It requires understanding how memory behaves under different workloads, how systems interact under pressure, and how optimization techniques influence overall stability. When properly managed, memory enables virtual environments to operate at high efficiency while maintaining consistent performance. When neglected, it quickly becomes the limiting factor that affects every layer of system operation.

Memory Optimization in High-Density Virtual Environments

In high-density environments where many virtual machines run on a single host, memory optimization becomes a central concern. The goal is to maximize utilization without crossing the threshold where performance begins to degrade. This requires careful coordination between allocation policies, workload distribution, and monitoring systems. In such setups, even small inefficiencies in memory usage can multiply across dozens or hundreds of virtual machines, leading to noticeable performance instability. The challenge is not only to allocate memory correctly but also to ensure that all systems remain balanced under changing workloads.
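Because every VM also carries virtualization overhead and the host itself needs memory, the number of VMs a host can hold is lower than a naive division suggests. The sketch below does that arithmetic; the host reserve, the per-VM overhead, and the 85% utilization ceiling are assumed example values, not measurements from any platform.

```python
def max_vms_per_host(host_mib: int, host_reserved_mib: int, vm_assigned_mib: int,
                     vm_overhead_mib: int, utilization_ceiling: float = 0.85) -> int:
    """How many identical VMs fit on a host without exceeding a utilization ceiling.

    All inputs are illustrative assumptions: host_reserved_mib covers the host OS
    and hypervisor, vm_overhead_mib is per-VM virtualization overhead, and the
    ceiling keeps headroom free so the host is never run at 100% of its memory.
    """
    usable = host_mib * utilization_ceiling - host_reserved_mib
    per_vm = vm_assigned_mib + vm_overhead_mib
    if usable <= 0 or per_vm <= 0:
        return 0
    return int(usable // per_vm)
```

With a 256 GiB host (262144 MiB), an 8 GiB host reserve, and 4 GiB VMs, dividing naively suggests 64 VMs. Keeping 15% headroom leaves room for 52, and adding an assumed 256 MiB of overhead per VM reduces that to 49, which is how small per-VM costs multiply at density.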

Advanced Memory Rebalancing Techniques

Modern virtualization platforms often include rebalancing mechanisms that automatically adjust memory distribution across active virtual machines. These mechanisms analyze current demand and shift resources from underutilized machines to those under higher load. Rebalancing improves overall efficiency but must be controlled carefully to avoid constant fluctuation: if adjustments occur too frequently, virtual machines continuously gain and lose memory and never settle into consistent performance. Effective rebalancing relies on stable thresholds and predictive logic rather than purely reactive changes.
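Rebalancing with stable thresholds can be sketched as a small controller. This is a hypothetical design, not any platform's algorithm: it moves memory only when the gap in memory pressure between the most and least loaded VMs exceeds a threshold, moves a bounded step at a time, and then waits out a cooldown before acting again, which damps the gain-and-lose oscillation described above. The threshold, step size, and cooldown are assumed values.

```python
from dataclasses import dataclass, field
from typing import List, Optional, Tuple


@dataclass
class VM:
    """Minimal view of a VM for rebalancing (sizes in MiB)."""
    name: str
    allocated_mib: int
    used_mib: int
    min_mib: int  # floor the VM is never shrunk below

    @property
    def pressure(self) -> float:
        """Fraction of the current allocation that is in use."""
        return self.used_mib / self.allocated_mib


@dataclass
class Rebalancer:
    """Hypothetical rebalancer with a pressure-gap threshold and a cooldown."""
    threshold: float = 0.25  # minimum pressure gap before acting (assumed)
    step_mib: int = 512      # most memory moved in one adjustment (assumed)
    cooldown: int = 3        # ticks to wait after an adjustment (assumed)
    _last_move: int = field(default=-10**9, init=False)

    def tick(self, now: int, vms: List[VM]) -> Optional[Tuple[str, str, int]]:
        """Run one pass; return (donor, receiver, MiB moved) or None if nothing moved."""
        if now - self._last_move < self.cooldown:
            return None  # still cooling down, so short-term noise is ignored
        hot = max(vms, key=lambda vm: vm.pressure)
        cold = min(vms, key=lambda vm: vm.pressure)
        if hot.pressure - cold.pressure < self.threshold:
            return None  # gap too small to justify a change
        # The donor only gives memory it is not using and that keeps it above its floor.
        spare = min(cold.allocated_mib - cold.used_mib, cold.allocated_mib - cold.min_mib)
        amount = min(self.step_mib, spare)
        if amount <= 0:
            return None
        cold.allocated_mib -= amount
        hot.allocated_mib += amount
        self._last_move = now
        return (cold.name, hot.name, amount)
```

Moving a bounded step per tick means a large imbalance is corrected over several cycles, giving each VM time to show whether the new allocation is actually needed before the next change.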

Memory Performance Under Bursty Workloads

Bursty workloads, which experience sudden spikes in resource demand, are particularly challenging for memory management. These workloads may remain idle for long periods and then rapidly consume large amounts of memory in short bursts. If the system is not prepared, this sudden demand can overwhelm available resources and trigger swapping or reclamation processes. Designing systems for burst tolerance involves maintaining sufficient memory headroom and ensuring that dynamic allocation mechanisms can respond quickly enough to sudden changes.
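One way to reason about burst tolerance is to check whether free memory could absorb the largest bursts if several of them coincided. The sketch below assumes each VM has a known steady usage and a known burst peak, and that at most `concurrent` VMs burst at the same time; both the profiles and the concurrency figure are hypothetical planning inputs that would come from observed history in practice.

```python
from typing import Dict, Tuple


def required_headroom_mib(profiles: Dict[str, Tuple[int, int]], concurrent: int) -> int:
    """Worst-case extra memory needed if the `concurrent` largest bursts coincide.

    profiles maps VM name -> (steady_mib, peak_mib); a VM's burst increment is
    peak - steady, clamped at zero.
    """
    increments = sorted((max(peak - steady, 0) for steady, peak in profiles.values()),
                        reverse=True)
    return sum(increments[:concurrent])


def burst_headroom_ok(host_free_mib: int, profiles: Dict[str, Tuple[int, int]],
                      concurrent: int) -> bool:
    """True if current free memory covers the assumed worst-case burst."""
    return host_free_mib >= required_headroom_mib(profiles, concurrent)
```

Planning for the largest few increments rather than the sum of all of them reflects the assumption that not every VM peaks at once; if that assumption is wrong for a given environment, raising `concurrent` makes the check more conservative.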

The Role of Memory Prioritization Policies

Prioritization policies determine which virtual machines receive memory resources when demand exceeds supply. Critical systems are typically assigned higher priority, ensuring they maintain stable performance even under pressure. Lower-priority systems may experience reduced allocation or delayed access to additional memory. Without prioritization, all virtual machines compete equally, which can lead to unpredictable performance across the entire environment. Proper prioritization ensures that essential services remain unaffected during peak usage periods.
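Under contention, a priority policy decides whose demand is satisfied first. The sketch below is one simple, hypothetical scheme: every VM first receives its guaranteed minimum, then the remaining memory goes to VMs in descending priority order until it runs out. Some hypervisors use proportional shares instead of strict ordering; strict ordering is used here only because it is the easiest to follow. The `Request` type and its priority scale are assumptions.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class Request:
    """A VM's memory request (sizes in MiB); higher priority = more important."""
    name: str
    priority: int
    minimum_mib: int
    demand_mib: int


def allocate_by_priority(available_mib: int, requests: List[Request]) -> Dict[str, int]:
    """Grant every minimum, then satisfy remaining demand in priority order."""
    total_min = sum(r.minimum_mib for r in requests)
    if total_min > available_mib:
        raise ValueError("cannot honor all guaranteed minimums")
    grants = {r.name: r.minimum_mib for r in requests}
    remaining = available_mib - total_min
    # sorted() is stable, so VMs with equal priority keep their input order.
    for r in sorted(requests, key=lambda r: r.priority, reverse=True):
        extra = min(max(r.demand_mib - r.minimum_mib, 0), remaining)
        grants[r.name] += extra
        remaining -= extra
    return grants
```

In the usage below, the production database is fully satisfied, the web tier gets part of its extra demand, and the test VM is held at its minimum.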

Memory Efficiency in Development and Testing Environments

Development and testing environments often prioritize flexibility over strict performance guarantees. In these setups, memory is frequently overcommitted to allow multiple virtual machines to run simultaneously on limited hardware. Since workloads are not production-critical, occasional performance fluctuations are acceptable. However, even in such environments, excessive overcommitment can slow down development workflows and reduce productivity. A balanced approach ensures that developers have enough resources without wasting hardware capacity.
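Overcommitment is commonly expressed as the ratio of memory assigned to VMs to the physical memory of the host. The sketch below computes that ratio and checks a new VM against a cap; the 1.5 cap for a dev/test host is an assumed example rather than a recommendation, since a safe ratio depends on how much of its assigned memory each guest actually touches.

```python
from typing import Iterable, Sequence


def overcommit_ratio(host_physical_mib: int, vm_assigned_mib: Iterable[int]) -> float:
    """Total memory assigned to VMs divided by the host's physical memory."""
    if host_physical_mib <= 0:
        raise ValueError("host memory must be positive")
    return sum(vm_assigned_mib) / host_physical_mib


def can_add_vm(host_physical_mib: int, vm_assigned_mib: Sequence[int],
               new_vm_mib: int, max_ratio: float = 1.5) -> bool:
    """Check whether one more VM keeps the host at or under an (assumed) overcommit cap."""
    return overcommit_ratio(host_physical_mib, [*vm_assigned_mib, new_vm_mib]) <= max_ratio
```

On a 64 GiB host with 8 GiB per VM, this cap admits 12 VMs (96 GiB assigned) and refuses a 13th.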

Impact of Live Migration on Memory Usage

Live migration allows virtual machines to move between physical hosts without downtime. During this process, memory state must be continuously copied and synchronized between systems. This creates temporary additional memory and network overhead. If not managed properly, migration can temporarily strain both source and destination hosts. Efficient migration strategies minimize this impact by compressing memory transfers and prioritizing active pages over inactive ones. In large-scale environments, migration planning is essential to avoid resource bottlenecks.
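The copy-and-synchronize behavior described above is commonly implemented as iterative pre-copy: all memory is sent once while the VM keeps running, then each later round re-sends only the pages dirtied during the previous round, until the remainder is small enough to copy during a brief pause. The simulation below is a simplified model under stated assumptions (constant dirty rate and bandwidth, hypothetical parameter names and defaults). It shows why a VM that dirties memory faster than the network can carry it may never converge.

```python
from dataclasses import dataclass


@dataclass
class PreCopyResult:
    rounds: int
    transferred_mib: float  # total sent, including the final paused copy
    downtime_mib: float     # memory left to copy while the VM is paused
    converged: bool


def simulate_precopy(memory_mib: float, dirty_rate_mib_s: float, bandwidth_mib_s: float,
                     stop_threshold_mib: float = 64.0, max_rounds: int = 30) -> PreCopyResult:
    """Simplified pre-copy model with a constant dirty rate and bandwidth (assumptions)."""
    if bandwidth_mib_s <= 0:
        raise ValueError("bandwidth must be positive")
    to_send = memory_mib  # the first round copies all guest memory
    transferred = 0.0
    for round_no in range(1, max_rounds + 1):
        duration_s = to_send / bandwidth_mib_s
        transferred += to_send
        # Pages dirtied while this round was in flight must be re-sent,
        # but never more than the VM's total memory.
        to_send = min(dirty_rate_mib_s * duration_s, memory_mib)
        if to_send <= stop_threshold_mib:
            # Small enough: pause the VM and copy the remainder (stop-and-copy).
            return PreCopyResult(round_no, transferred + to_send, to_send, True)
    # Not converged: a real system might throttle the guest or force a longer pause.
    return PreCopyResult(max_rounds, transferred + to_send, to_send, False)
```

For an 8 GiB VM dirtying 100 MiB/s over a 1000 MiB/s link, the model converges in three rounds with only about 8 MiB left for the paused copy; at a dirty rate of 2000 MiB/s the dirty set never shrinks, which is the situation that transfer compression or guest throttling is meant to address.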

Memory Stability in Long-Term Operations

Over extended periods, virtualized systems may experience gradual shifts in memory usage patterns. Applications evolve, workloads grow, and system behavior changes. Without periodic review, initial memory allocations may become outdated and inefficient. Long-term stability depends on continuous evaluation and adjustment of memory configurations. Systems that are not regularly tuned tend to accumulate inefficiencies that eventually affect performance and reliability.
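Part of this periodic review can be automated by comparing each VM's allocation with a high percentile of its observed usage. The sketch below uses the 95th percentile and two water marks (60% and 90% of the allocation); both the percentile and the water marks are assumed values, and `rightsize` is a hypothetical helper rather than part of any tool.

```python
import statistics
from typing import Sequence


def p95(samples_mib: Sequence[float]) -> float:
    """95th percentile of observed usage (needs at least two samples)."""
    # quantiles(n=20) returns 19 cut points; the last one is the 95th percentile.
    return statistics.quantiles(samples_mib, n=20, method="inclusive")[-1]


def rightsize(allocated_mib: float, samples_mib: Sequence[float],
              low_water: float = 0.6, high_water: float = 0.9) -> str:
    """Flag allocations that have drifted away from observed demand.

    Returns 'under' if p95 usage exceeds high_water of the allocation (little
    headroom left), 'over' if it stays below low_water (memory likely wasted),
    and 'ok' otherwise. The water marks are assumed example values.
    """
    usage = p95(samples_mib)
    if usage > allocated_mib * high_water:
        return "under"
    if usage < allocated_mib * low_water:
        return "over"
    return "ok"
```

Using a high percentile rather than the mean keeps occasional legitimate peaks from being trimmed away, while still exposing allocations that were sized for a workload that no longer exists.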

Common Operational Mistakes in Memory Management

One frequent mistake is treating memory allocation as a one-time setup rather than an ongoing process. Another common issue is ignoring host-level resource constraints while focusing only on individual virtual machine needs. Over-reliance on automation without proper monitoring can also lead to unexpected performance issues. Additionally, failing to account for memory overhead and background system usage often results in overestimation of available resources. These mistakes can be avoided through consistent monitoring and periodic reassessment.

Memory Scaling Strategies for Growing Systems

As infrastructure grows, memory scaling becomes increasingly important. Scaling can involve adding physical memory, distributing workloads across additional hosts, or optimizing existing allocations. The most effective scaling strategies combine hardware expansion with efficient resource management. Simply adding more memory without addressing inefficiencies often leads to temporary relief rather than long-term improvement. Sustainable scaling requires both capacity planning and workload optimization.

Memory Behavior in Mixed Workload Environments

Mixed environments containing different types of workloads introduce additional complexity. Some applications require steady, predictable memory usage, while others fluctuate significantly. When these workloads share the same host, their behavior can interfere with one another if not properly isolated. Careful planning ensures that high-demand workloads do not negatively impact stable systems. This often involves separating workloads based on performance requirements or using strict resource controls.
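A simple way to decide which workloads should be kept apart is to measure how much their memory usage fluctuates. The sketch below computes the coefficient of variation (standard deviation divided by mean) of each VM's usage samples and splits VMs into `steady` and `volatile` pools that could then be placed on separate hosts; the 0.3 cut-off and the pool names are assumptions for illustration.

```python
import statistics
from typing import Dict, List, Sequence


def coefficient_of_variation(samples_mib: Sequence[float]) -> float:
    """Population standard deviation divided by the mean of the samples."""
    mean = statistics.fmean(samples_mib)
    if mean == 0:
        return 0.0
    return statistics.pstdev(samples_mib) / mean


def split_pools(usage: Dict[str, Sequence[float]],
                cutoff: float = 0.3) -> Dict[str, List[str]]:
    """Group VMs into 'steady' and 'volatile' pools by usage variability."""
    pools: Dict[str, List[str]] = {"steady": [], "volatile": []}
    for name, samples in usage.items():
        key = "volatile" if coefficient_of_variation(samples) > cutoff else "steady"
        pools[key].append(name)
    return pools
```

Normalizing by the mean lets a small VM with wild swings and a large VM with proportionally similar swings land in the same pool, which matches the concern here: how unpredictable a workload is relative to its own size.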

Monitoring Strategies for Large-Scale Systems

In large environments, manual monitoring is not sufficient. Automated monitoring systems track memory usage across hosts and virtual machines in real time. These systems generate alerts when thresholds are exceeded or when abnormal patterns are detected. Effective monitoring focuses not only on current usage but also on trends over time, allowing early detection of potential issues. Without proper monitoring, memory problems often remain hidden until they significantly impact performance.
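Watching trends as well as current values can be sketched with a least-squares slope over recent samples: alert immediately when usage crosses a threshold, and alert early when the current trend would reach capacity within a chosen horizon. The 90% threshold and 12-sample horizon below are assumed example values, and `check_memory` is a hypothetical helper; a real monitoring system would supply the samples.

```python
from typing import List, Sequence


def trend_slope(values: Sequence[float]) -> float:
    """Least-squares slope of evenly spaced samples (change per sample)."""
    n = len(values)
    x_mean = (n - 1) / 2
    y_mean = sum(values) / n
    num = sum((x - x_mean) * (y - y_mean) for x, y in enumerate(values))
    den = sum((x - x_mean) ** 2 for x in range(n))
    return num / den


def check_memory(samples_mib: Sequence[float], capacity_mib: float,
                 threshold: float = 0.9, horizon_steps: int = 12) -> List[str]:
    """Return alert messages for one host or VM, samples ordered oldest first."""
    if len(samples_mib) < 2:
        raise ValueError("need at least two samples to estimate a trend")
    alerts = []
    latest = samples_mib[-1]
    if latest >= capacity_mib * threshold:
        alerts.append(f"threshold: {latest:.0f}/{capacity_mib:.0f} MiB in use")
    slope = trend_slope(samples_mib)
    if slope > 0:
        steps_to_full = (capacity_mib - latest) / slope
        if steps_to_full <= horizon_steps:
            alerts.append(f"trend: capacity reached in ~{steps_to_full:.1f} samples")
    return alerts
```

In the rising example below, usage is still well under the threshold, yet the trend alert fires because growth of 500 MiB per sample would exhaust 16 GiB in about eight more samples.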

The Relationship Between Memory and System Design

Memory management is closely tied to overall system architecture. Decisions made during system design, such as host sizing, workload distribution, and virtualization strategy, directly influence memory efficiency. Poor design choices can lead to chronic memory shortages or underutilization of resources. Conversely, well-designed systems achieve a balance between performance, flexibility, and efficiency. Memory should be considered a foundational element during the design phase rather than an afterthought.

Future Direction of Memory Management Technologies

Memory management is evolving toward greater intelligence, automation, and predictive behavior. Future systems are expected to anticipate workload demands and adjust resources proactively. Machine learning techniques are being explored to analyze usage patterns and optimize allocation dynamically. These advancements aim to reduce manual intervention while improving both performance and efficiency. As systems become more complex, intelligent memory management will play a central role in maintaining stability.

Conclusion

Virtual machine memory management is a continuous balancing act between efficiency, performance, and stability. It involves understanding how memory is shared between host and guest systems, how workloads behave under different conditions, and how optimization techniques influence overall performance. Proper management requires more than just assigning memory values; it demands ongoing monitoring, adjustment, and planning.

A well-designed memory strategy ensures that virtual machines operate smoothly without overwhelming the host system. It prevents bottlenecks, reduces instability, and supports scalable growth. At the same time, poor memory management can quickly lead to performance degradation, system slowdowns, and resource exhaustion.

Ultimately, successful virtual memory management is about maintaining harmony between available resources and system demand. When this balance is achieved, virtualized environments become highly efficient, stable, and capable of supporting complex workloads reliably over time.