Protocol packets are fundamental building blocks of modern digital communication systems. Whenever data is transmitted over a network—whether it is a simple message, a video stream, or a file download—it is divided into smaller units called packets. This process is necessary because large chunks of data cannot efficiently travel across networks in a single piece. Instead, breaking data into packets allows for smoother transmission, better error handling, and more efficient use of network resources.

Each packet is designed with a structured format that includes both the actual data and essential control information. This control information ensures that the packet reaches the correct destination and can be properly reassembled with other packets once all parts arrive. Without this structured approach, network communication would be unreliable, slow, and prone to errors.

Packets are used in almost all modern networking protocols, including those that power the internet. Whether data is being transferred through wired connections or wireless signals, packet-based communication remains the standard method for ensuring reliability and scalability.

Structure of a Protocol Packet

A protocol packet is typically divided into distinct sections, each serving a specific function. The most common components include the header, payload, and sometimes a trailer.

The header contains important metadata about the packet. This includes the source address, destination address, packet sequencing information, and protocol-specific instructions. It acts like an instruction label that guides the packet through the network.

The payload is the actual data being transmitted. This is the meaningful content that the sender wants to deliver to the receiver, such as part of a file, a segment of a video, or a portion of a message.

The trailer, when present, is used mainly for error detection. It may contain a checksum or cyclic redundancy check value that lets the receiver verify that the packet was not corrupted during transmission.

This structured design allows packets to travel independently across complex networks and still be correctly interpreted at the destination.
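As a rough illustration of the header, payload, and trailer layout described above, the following Python sketch packs and parses a made-up packet format. The field layout (source, destination, sequence number, payload length) and the CRC-32 trailer are assumptions chosen for the example and do not correspond to any real protocol.

```python
import struct
import zlib

# Hypothetical header: source id, destination id, sequence number, payload length.
HEADER_FORMAT = "!IIIH"                       # network byte order, no padding
HEADER_SIZE = struct.calcsize(HEADER_FORMAT)  # 14 bytes
TRAILER_SIZE = 4                              # CRC-32 over header + payload

def build_packet(src: int, dst: int, seq: int, payload: bytes) -> bytes:
    header = struct.pack(HEADER_FORMAT, src, dst, seq, len(payload))
    trailer = struct.pack("!I", zlib.crc32(header + payload))
    return header + payload + trailer

def parse_packet(packet: bytes):
    header = packet[:HEADER_SIZE]
    src, dst, seq, length = struct.unpack(HEADER_FORMAT, header)
    payload = packet[HEADER_SIZE:HEADER_SIZE + length]
    trailer = packet[HEADER_SIZE + length:HEADER_SIZE + length + TRAILER_SIZE]
    (crc,) = struct.unpack("!I", trailer)
    if zlib.crc32(header + payload) != crc:
        raise ValueError("trailer check failed: packet is corrupted")
    return src, dst, seq, payload
```

Calling parse_packet(build_packet(1, 2, 7, b"hello")) returns the original fields, while a packet whose payload bytes were changed in transit raises ValueError.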

Packet Switching and Data Transmission

Packet switching is the method used to send packets across a network. Instead of dedicating a single communication path for the entire duration of a transmission, packet switching allows each packet to travel independently. This means that different packets from the same data stream may take different routes to reach the destination.

Once all packets arrive, they are reassembled in the correct order using sequencing information stored in the headers. This approach improves efficiency because network paths can be shared among many users simultaneously, reducing congestion and improving overall performance.
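The reassembly step can be sketched in a few lines of Python. The function names and the use of a simple counter as the sequence number are assumptions for illustration; real protocols number their data in protocol-specific ways.

```python
import random

def packetize(data: bytes, payload_size: int):
    """Split data into (sequence_number, chunk) pairs."""
    return [(seq, data[start:start + payload_size])
            for seq, start in enumerate(range(0, len(data), payload_size))]

def reassemble(packets):
    """Rebuild the original data from packets received in any order."""
    return b"".join(chunk for _, chunk in sorted(packets, key=lambda p: p[0]))

message = b"packets may arrive in any order"
packets = packetize(message, payload_size=8)
random.shuffle(packets)              # simulate packets taking different routes
assert reassemble(packets) == message
```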

Packet switching is one of the main reasons the internet can support millions of users at the same time without requiring dedicated connections for each communication session.

Understanding Byte Counts in Networking

Byte counts represent the total amount of digital information being transmitted or received in a network. A byte is composed of eight bits, and it serves as a standard unit for measuring data size. Byte counts are used to quantify how much data is contained within packets, as well as the total volume of network traffic over a period of time.

Monitoring byte counts is essential for understanding network performance. It helps administrators determine how much bandwidth is being consumed, identify unusual traffic patterns, and ensure that systems are operating efficiently.

For example, a high byte count over a short period may indicate heavy data usage, such as video streaming or large file transfers. On the other hand, unusually low byte counts may suggest connectivity issues or underutilization of network resources.

Relationship Between Packets and Byte Counts

Packets and byte counts are closely connected because every packet carries a specific number of bytes. While packets define the structure and delivery method of data, byte counts measure the size of that data.

In practical terms, a network transmission is often analyzed in terms of both the number of packets sent and the total bytes transferred. This dual measurement provides a more complete understanding of network activity.

For instance, a network may transmit a large number of small packets or a smaller number of large packets, but both scenarios could result in similar byte counts. Understanding both metrics helps in diagnosing performance issues and optimizing network efficiency.
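A small sketch makes the point concrete. The traffic figures below are invented for the example.

```python
def summarize(packet_sizes):
    """Return (packet count, total bytes, average bytes per packet)."""
    count = len(packet_sizes)
    total = sum(packet_sizes)
    return count, total, (total / count if count else 0.0)

many_small = [100] * 1500    # 1,500 packets of 100 bytes each
few_large = [1500] * 100     # 100 packets of 1,500 bytes each

print(summarize(many_small))  # (1500, 150000, 100.0)
print(summarize(few_large))   # (100, 150000, 1500.0)
```

Both traces carry the same 150,000 bytes, but the first one asks the network to handle fifteen times as many packets, which is why the two metrics are usually read together.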

Maximum Transmission Unit and Packet Size

One important concept related to packets is the Maximum Transmission Unit, often referred to as MTU. This defines the largest size a packet can be when transmitted over a network segment. If data exceeds the MTU, it must be broken into smaller packets before transmission.

The MTU plays a crucial role in determining how efficiently data is transmitted. Larger packets can carry more data but may be more susceptible to errors, while smaller packets are easier to manage but may increase overhead due to additional headers.
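The overhead side of this trade-off can be estimated with a short calculation. This is a simplified sketch that assumes every packet carries a fixed-size header counted against the MTU; the 40-byte header used in the usage note is an assumed figure (for example, minimum IPv4 plus TCP headers).

```python
import math

def packets_needed(data_bytes: int, mtu: int, header_bytes: int):
    """Return (packet count, total bytes sent including headers)."""
    payload_per_packet = mtu - header_bytes
    if payload_per_packet <= 0:
        raise ValueError("MTU must be larger than the header")
    count = math.ceil(data_bytes / payload_per_packet)
    return count, data_bytes + count * header_bytes
```

Under these assumptions, sending 1,000,000 bytes with an MTU of 1500 takes 685 packets and 1,027,400 bytes in total, while an MTU of 576 takes 1,866 packets and 1,074,640 bytes.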

Network engineers often adjust MTU settings to balance performance and reliability depending on the type of network being used.

Fragmentation and Reassembly of Packets

When a packet exceeds the MTU of a network path, it undergoes fragmentation. This means the packet is divided into smaller fragments, each of which is transmitted separately. At the destination, these fragments are reassembled into the original packet.

Fragmentation ensures that data can still be transmitted even when network limitations exist. However, it can also introduce delays and increase the risk of packet loss, since losing a single fragment can render the entire original packet unusable.
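The following sketch shows one way fragmentation and reassembly might work. It is loosely modeled on IPv4, which expresses fragment offsets in 8-byte units and marks all but the last fragment with a "more fragments" flag; the tuple layout and function names are assumptions made for the example.

```python
def fragment(payload: bytes, mtu: int, header_bytes: int = 20):
    """Split a payload into (offset, more_fragments, data) tuples that fit the MTU."""
    # Round the data size down to a multiple of 8, as IPv4 offsets require.
    max_data = (mtu - header_bytes) // 8 * 8
    if max_data <= 0:
        raise ValueError("MTU too small")
    fragments = []
    for offset in range(0, len(payload), max_data):
        data = payload[offset:offset + max_data]
        more = offset + len(data) < len(payload)
        fragments.append((offset, more, data))
    return fragments

def reassemble_fragments(fragments):
    """Rebuild the payload, or return None if any fragment is missing."""
    ordered = sorted(fragments, key=lambda f: f[0])
    expected = 0
    for offset, _, data in ordered:
        if offset != expected:          # a gap means a fragment was lost
            return None
        expected += len(data)
    if not ordered or ordered[-1][1]:   # the final fragment never arrived
        return None
    return b"".join(data for _, _, data in ordered)
```

Removing any single tuple from the list returned by fragment makes reassemble_fragments return None, which mirrors how one lost fragment makes the whole original packet unusable.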

Efficient packet handling aims to minimize fragmentation whenever possible to improve overall network performance.

Error Detection and Reliability in Packet Transmission

To maintain reliability, packets include mechanisms for error detection. These mechanisms help ensure that data is not corrupted during transmission. Common techniques include checksums and cyclic redundancy checks.

When a packet arrives at its destination, the system verifies its integrity using the error-checking data included in the header or trailer. If an error is detected, the packet is typically discarded, and a reliable protocol such as TCP arranges for it to be retransmitted.
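As one concrete example of a checksum, the sketch below implements the 16-bit ones' complement sum described in RFC 1071, which IP, TCP, and UDP use in their headers. The is_intact helper is a hypothetical name, and it assumes the checksum was appended to even-length data.

```python
def internet_checksum(data: bytes) -> int:
    """16-bit ones' complement checksum in the style of RFC 1071."""
    if len(data) % 2:
        data += b"\x00"                            # pad odd-length input
    total = 0
    for i in range(0, len(data), 2):
        total += (data[i] << 8) | data[i + 1]      # add each big-endian 16-bit word
    while total >> 16:
        total = (total & 0xFFFF) + (total >> 16)   # fold carries back into 16 bits
    return ~total & 0xFFFF

def is_intact(data_with_checksum: bytes) -> bool:
    """Data followed by its own checksum yields zero when checked again."""
    return internet_checksum(data_with_checksum) == 0

data = bytes.fromhex("0001f203f4f5f6f7")
checksum = internet_checksum(data)                 # 0x220d for this input
assert is_intact(data + checksum.to_bytes(2, "big"))
```

A cyclic redundancy check follows the same verify-on-arrival pattern but detects a wider range of error patterns; Python's zlib.crc32 is one readily available implementation.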

This process ensures that even in unreliable network conditions, data can still be delivered accurately.

Byte Counts in Performance Monitoring

Byte counts are widely used in network performance monitoring. They help administrators track bandwidth usage, detect bottlenecks, and analyze traffic patterns.

By examining byte counts over time, it becomes possible to identify peak usage periods and optimize network resources accordingly. This is especially important in environments where multiple users or systems share the same network infrastructure.
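Monitoring tools commonly derive bandwidth figures from periodic readings of a cumulative byte counter. The sketch below assumes a hypothetical list of (timestamp, counter) samples and ignores counter wrap-around.

```python
def rates_bits_per_second(samples):
    """samples: (timestamp_seconds, cumulative_byte_count) pairs, oldest first.
    Returns the average rate between consecutive samples in bits per second."""
    rates = []
    for (t0, b0), (t1, b1) in zip(samples, samples[1:]):
        rates.append((b1 - b0) * 8 / (t1 - t0))
    return rates

samples = [(0, 0), (10, 12_500_000), (20, 13_750_000)]
rates = rates_bits_per_second(samples)
peak = max(rates)   # the busiest interval in the trace
```

For these invented samples the first interval averages 10 Mbit/s and the second 1 Mbit/s, so the peak falls in the first ten seconds.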

Byte counts also play a role in billing systems for internet service providers, where data usage is measured and charged based on the total number of bytes transferred.

Packet Flow and Network Efficiency

The flow of packets through a network determines how efficiently data is delivered. Efficient packet flow minimizes delays, reduces congestion, and ensures smooth communication between devices.

Network protocols use various techniques such as flow control and congestion control to manage packet flow. These mechanisms adjust the rate at which packets are sent based on network conditions, preventing overload and maintaining stability.

When packet flow is well managed, traffic is spread more evenly across links and over time, leading to better overall network performance.

Role of Protocols in Packet Management

Network protocols define the rules for how packets are created, transmitted, and interpreted. Different protocols serve different purposes, but all rely on packet-based communication.

For example, some protocols prioritize speed, such as UDP, while others prioritize reliability, such as TCP. Regardless of their specific function, they all define how data is placed into packets and how the receiver interprets the bytes it receives.

Protocols also determine how errors are handled, how packets are sequenced, and how data integrity is preserved.

Security Considerations in Packet Transmission

Security is an important aspect of packet-based communication. Since packets travel across multiple networks, they may be exposed to interception or tampering.

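To protect data, encryption is often applied to the payload of packets, so that even if a packet is intercepted, its contents cannot easily be read. Encryption is usually paired with an integrity check, such as a message authentication code, so that any alteration in transit can be detected.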

Additionally, authentication mechanisms help verify that packets originate from trusted sources, reducing the risk of malicious activity.

Challenges in Packet and Byte Management

Despite their efficiency, packet-based systems face several challenges. Packet loss, latency, and congestion are common issues that can affect data transmission.

High byte counts in a network may indicate heavy usage, but they can also signal potential overload conditions. Managing these challenges requires continuous monitoring and optimization of network resources.

Network engineers must balance packet size, transmission speed, and error handling to ensure consistent performance.

Advanced Role of Protocol Packets in Network Layers

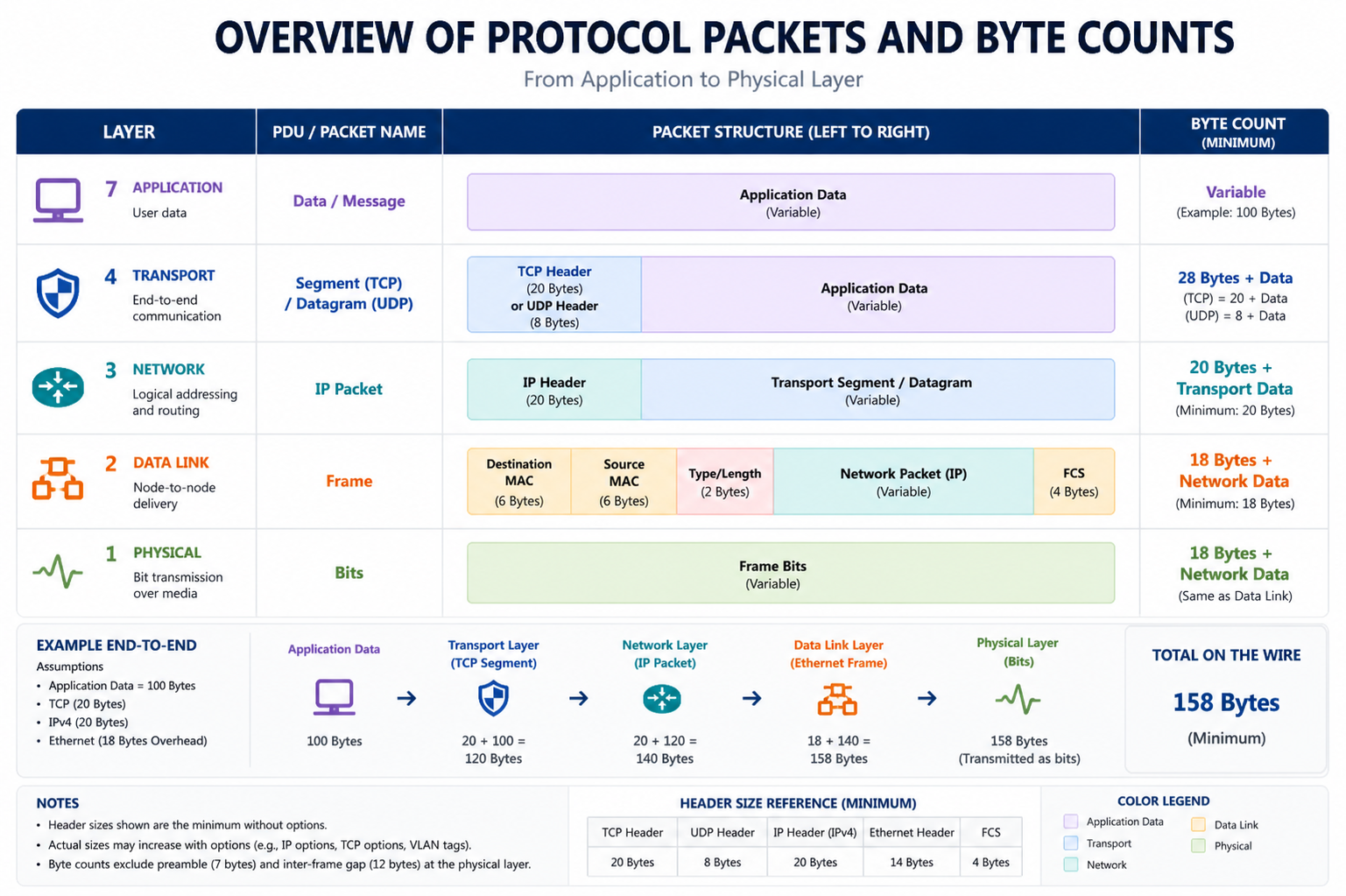

Protocol packets operate across multiple layers of network architecture, most commonly explained through the OSI model and the TCP/IP model. Each layer adds its own form of information to the packet, which helps ensure proper communication between devices even in complex and heterogeneous networks.

At the lower layers, packets are concerned with physical transmission and addressing. As they move up the stack, they begin to include more logical information such as session handling, data formatting, and application-specific instructions. This layered encapsulation allows networks to remain flexible and modular, meaning different systems can still communicate effectively even if they use different internal technologies.

Each layer adds a form of encapsulation, where additional headers are wrapped around the original data. When the packet reaches its destination, these layers are peeled off one by one until the original message is fully reconstructed. This structured process is essential for maintaining consistency and reliability in communication.
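The wrapping and unwrapping can be pictured with a toy example. The bracketed text headers and the three layer names are purely illustrative assumptions; real headers are binary structures defined by each protocol.

```python
def encapsulate(message: bytes, layers):
    """Wrap the message with one header per layer, in sending order."""
    data = message
    for name in layers:
        data = f"[{name}]".encode() + data
    return data

def decapsulate(data: bytes, layers):
    """Strip the headers again, outermost layer first."""
    for name in reversed(layers):
        header = f"[{name}]".encode()
        if not data.startswith(header):
            raise ValueError(f"expected {name} header")
        data = data[len(header):]
    return data

LAYERS = ["transport", "network", "link"]   # order in which headers are added
frame = encapsulate(b"hi", LAYERS)          # b"[link][network][transport]hi"
assert decapsulate(frame, LAYERS) == b"hi"
```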

TCP/IP Model and Packet Behavior

In the TCP/IP model, packet behavior is primarily governed by two key protocols: Transmission Control Protocol (TCP) and Internet Protocol (IP). IP is responsible for addressing and routing packets to their correct destination, while TCP ensures reliable delivery through sequencing, acknowledgment, and retransmission mechanisms.

TCP breaks large data streams into segments, which are then encapsulated into IP packets for transmission. Each segment carries a sequence number marking the position of its first byte in the stream, so the receiver can put the data back in order. If a segment is lost or corrupted during transmission, TCP retransmits it.

IP, on the other hand, does not guarantee delivery but focuses on routing packets across interconnected networks. This separation of responsibilities allows for both flexibility and reliability in communication systems.

Routing and Packet Path Selection

Routing is the process of determining the optimal path for packets to travel from source to destination. Routers analyze packet headers, particularly the destination address, to decide where to forward each packet next.
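IP routers typically make this forwarding decision with a longest-prefix match against a table of known networks. The sketch below uses Python's ipaddress module; the table contents and next-hop names are hypothetical.

```python
import ipaddress

# Hypothetical forwarding table: destination prefix -> next hop name.
FORWARDING_TABLE = {
    ipaddress.ip_network("0.0.0.0/0"): "default-gateway",
    ipaddress.ip_network("10.0.0.0/8"): "router-a",
    ipaddress.ip_network("10.1.0.0/16"): "router-b",
}

def next_hop(destination: str) -> str:
    """Pick the matching route with the longest (most specific) prefix."""
    address = ipaddress.ip_address(destination)
    matches = [net for net in FORWARDING_TABLE if address in net]
    best = max(matches, key=lambda net: net.prefixlen)
    return FORWARDING_TABLE[best]
```

With this table, 10.1.2.3 is forwarded to router-b, 10.2.0.1 to router-a, and any other address falls through to the default route. Production routers use specialized data structures rather than a linear scan, so this is only a model of the decision, not of its performance.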

Because packets may travel across multiple routers and networks, they do not always follow the same path. This dynamic routing allows networks to adapt to congestion, outages, or changes in topology.

Routing algorithms aim to optimize factors such as speed, reliability, and network load. As a result, packets belonging to the same data stream may arrive out of order, requiring reassembly at the destination.

Quality of Service and Packet Prioritization

Quality of Service (QoS) is a mechanism used to prioritize certain types of network traffic over others. In environments where multiple applications share bandwidth, QoS ensures that critical packets receive higher priority.

For example, voice calls and video streams require low latency and consistent delivery, so their packets are often prioritized over less time-sensitive data like file downloads or email transfers.

QoS policies may classify packets based on their headers, source applications, or type of service flags. This ensures that network resources are allocated efficiently and that performance-sensitive applications function smoothly even under heavy load.

Latency, Throughput, and Packet Performance

Latency refers to the time it takes for a packet to travel from source to destination. Lower latency indicates faster communication, which is especially important for real-time applications.

Throughput, on the other hand, measures the amount of data successfully transmitted over a network within a given period of time. While latency focuses on delay, throughput focuses on overall data capacity.

Packet size, routing efficiency, and network congestion all affect both latency and throughput. Larger packets may improve throughput but can increase latency if retransmissions are needed. Similarly, congested networks can slow down packet delivery, reducing performance.

Understanding the relationship between packets and these performance metrics is essential for optimizing network systems.

Packet Loss and Network Reliability

Packet loss occurs when one or more packets fail to reach their destination. This can happen due to congestion, hardware failures, interference, or routing errors.

When packet loss occurs, protocols like TCP initiate retransmission to recover missing data. However, excessive packet loss can significantly degrade network performance, leading to delays, reduced quality in streaming services, or failed communications.

Monitoring packet loss alongside byte counts provides a clearer picture of network health. While byte counts show volume, packet loss reveals reliability issues.

Fragmentation and Path Constraints in Detail

Although fragmentation allows large packets to be broken into smaller units, it introduces additional complexity into the network. Each fragment must carry its own header information, increasing overhead.

Moreover, if even one fragment is lost, the entire original packet may need to be retransmitted. This makes fragmentation less efficient in high-loss environments.
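The effect of loss on fragmented packets can be estimated with a simple model. The sketch assumes each fragment is lost independently with the same probability, which real networks only approximate.

```python
def packet_delivery_probability(fragment_loss_rate: float, fragment_count: int) -> float:
    """Probability that every fragment of a packet arrives."""
    return (1.0 - fragment_loss_rate) ** fragment_count

for count in (1, 2, 4, 8):
    print(count, round(packet_delivery_probability(0.01, count), 4))
```

Under this model, a 1% fragment loss rate leaves an unfragmented packet with a 99% chance of arriving, but a packet split into four fragments with only about 96%.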

Modern networks often attempt to avoid fragmentation by using path MTU discovery, a process that determines the smallest MTU along the packet’s route. This helps ensure that packets are sized appropriately before transmission begins.

Byte Counts in Deep Network Analysis

In advanced network monitoring, byte counts are used not just for measuring traffic but also for behavioral analysis. By studying byte distribution over time, analysts can detect anomalies such as unexpected spikes, data exfiltration, or denial-of-service attacks.

Byte counts can be broken down into inbound and outbound traffic, allowing detailed insights into communication patterns. This helps organizations understand how data flows within their systems and identify potential inefficiencies.

In large-scale systems, byte counts are often aggregated across multiple devices to provide a comprehensive view of network usage.

Packet Inspection and Traffic Analysis

Packet inspection is a technique used to examine the contents of packets in detail. This can include headers, payload structure, and metadata. Deep packet inspection allows for more advanced analysis, including identifying application types, detecting malicious activity, and enforcing security policies.

Traffic analysis uses both packet information and byte counts to understand how networks are being used. By combining these metrics, administrators can identify trends, detect bottlenecks, and optimize performance.

These techniques are essential in modern cybersecurity and network management systems.

Congestion Control and Flow Regulation

Network congestion occurs when too many packets are transmitted simultaneously, overwhelming network resources. To manage this, protocols implement congestion control mechanisms that adjust transmission rates based on network conditions.

Flow control ensures that a sender does not overwhelm a receiver with too many packets at once. This is particularly important in systems where devices have varying processing capabilities.

Together, congestion control and flow regulation help maintain stability and prevent network collapse under heavy traffic loads.
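One well-known congestion control strategy is additive increase, multiplicative decrease (AIMD), the idea behind classic TCP congestion avoidance. The simulation below is a simplified sketch; the parameter names and default values are assumptions, and real implementations add further phases such as slow start.

```python
def aimd(loss_events, initial_window=1.0, increase=1.0, decrease=0.5):
    """Simulate a congestion window over a series of rounds.
    loss_events: iterable of booleans, True when a loss was detected that round.
    Returns the window size (in packets) after each round."""
    window = initial_window
    history = []
    for lost in loss_events:
        if lost:
            window = max(1.0, window * decrease)   # back off sharply on loss
        else:
            window += increase                     # probe gently for more capacity
        history.append(window)
    return history

print(aimd([False, False, False, True, False]))   # [2.0, 3.0, 4.0, 2.0, 3.0]
```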

Packet Scheduling and Queue Management

Routers and switches often use queues to manage incoming packets before forwarding them. Packet scheduling algorithms determine the order in which packets are processed.

Different scheduling methods prioritize different types of traffic. Some focus on fairness, while others prioritize speed or critical applications. Efficient queue management reduces delays and ensures balanced network performance.
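A strict-priority scheduler is one of the simplest scheduling methods. The class below is a minimal sketch built on Python's heapq; the class name and the convention that lower numbers mean higher priority are assumptions for the example.

```python
import heapq
import itertools

class PriorityScheduler:
    """Serve the lowest priority number first; ties leave in arrival order."""

    def __init__(self):
        self._heap = []
        self._arrival = itertools.count()   # tie-breaker that preserves arrival order

    def enqueue(self, priority: int, packet: str) -> None:
        heapq.heappush(self._heap, (priority, next(self._arrival), packet))

    def dequeue(self) -> str:
        return heapq.heappop(self._heap)[2]

    def __len__(self) -> int:
        return len(self._heap)
```

Strict priority can starve low-priority traffic when high-priority packets keep arriving, which is why fairness-oriented methods such as weighted fair queuing are often used instead.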

Packet scheduling directly affects both latency and byte distribution across the network.

Byte Counting in Security Monitoring

In security systems, byte counts are used to detect unusual patterns of data transfer. Sudden increases in outbound byte counts may indicate data breaches or unauthorized access.

Similarly, unusual inbound traffic may signal attempts to overload a system or exploit vulnerabilities.

By correlating byte counts with packet behavior, security systems can identify threats more accurately and respond in real time.
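A very basic form of this detection flags intervals whose byte count sits far above the rest of the trace. The sketch below compares each sample with the mean and standard deviation of all the other samples; the threshold of three standard deviations and the sample data are assumptions, and production systems use considerably richer baselines.

```python
from statistics import mean, stdev

def find_spikes(byte_counts, threshold=3.0):
    """Return indexes whose value lies more than `threshold` standard
    deviations above the mean of the remaining samples."""
    spikes = []
    for i, value in enumerate(byte_counts):
        baseline = byte_counts[:i] + byte_counts[i + 1:]
        if len(baseline) < 2:
            continue                      # not enough data for a deviation
        mu, sigma = mean(baseline), stdev(baseline)
        if sigma > 0 and (value - mu) / sigma > threshold:
            spikes.append(i)
    return spikes

outbound = [1000, 1100, 950, 1050, 1020, 9000, 980]   # bytes per interval
print(find_spikes(outbound))   # [5]
```

Leaving the tested sample out of its own baseline keeps a single large spike from inflating the deviation and hiding itself.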

Integration of Packets and Byte Metrics in Modern Networks

Modern networks rely heavily on the combined analysis of packets and byte counts to maintain performance and security. Packets provide structural insight into how data is transmitted, while byte counts provide quantitative measurement of how much data is flowing.

Together, they form a complete picture of network activity. This integration allows for advanced monitoring, optimization, and troubleshooting across complex systems.

As networks continue to grow in size and complexity, the importance of understanding both packet behavior and byte-level metrics becomes even more critical for ensuring efficient and secure communication.

Advanced Packet Analysis in Modern Networks

In modern networking environments, protocol packets are no longer only viewed as simple data containers. They are now deeply analyzed to understand performance, security, and behavior patterns across complex systems. Advanced packet analysis involves examining both the structure of packets and their movement across networks in real time. This allows administrators to detect inefficiencies, troubleshoot issues, and optimize performance at a much deeper level than basic monitoring tools can provide.

Packet analysis tools inspect headers, payload patterns, timing information, and routing behavior. By studying these elements, it becomes possible to understand not only where data is going, but also how efficiently it is traveling and whether it is behaving normally within expected parameters.

Network Monitoring Systems and Byte Tracking

Network monitoring systems play a critical role in tracking byte counts across devices, servers, and communication links. These systems continuously collect data about how many bytes are transmitted and received, often in real time.

Byte tracking helps identify usage patterns across different applications and users. It also supports capacity planning by revealing how much bandwidth is being consumed over time. When networks begin to approach their limits, byte tracking data provides early warning signals that allow administrators to take corrective action before performance is affected.

In large infrastructures, byte counts are often aggregated across multiple layers, giving a complete picture of traffic flow from individual devices up to entire data centers.

Flow Measurement Techniques in Networking

Flow measurement refers to the process of grouping packets into flows and analyzing their combined byte counts and behavior. A flow typically represents a sequence of packets sharing common attributes such as source, destination, protocol, or session.

By analyzing flows instead of individual packets, networks can achieve more efficient monitoring with less processing overhead. Flow-based analysis provides insights into long-term communication patterns, such as which services consume the most bandwidth or which connections remain active the longest.
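Flow grouping can be sketched as a dictionary keyed by the shared attributes. The packet records below are hypothetical dictionaries using documentation-range addresses; real flow exporters typically key on a fuller tuple that also includes port numbers.

```python
from collections import defaultdict

def aggregate_flows(packets):
    """Group packets by (src, dst, protocol) and total their packets and bytes."""
    flows = defaultdict(lambda: {"packets": 0, "bytes": 0})
    for pkt in packets:
        key = (pkt["src"], pkt["dst"], pkt["protocol"])
        flows[key]["packets"] += 1
        flows[key]["bytes"] += pkt["bytes"]
    return dict(flows)

packets = [
    {"src": "192.0.2.1", "dst": "198.51.100.7", "protocol": "tcp", "bytes": 1500},
    {"src": "192.0.2.1", "dst": "198.51.100.7", "protocol": "tcp", "bytes": 400},
    {"src": "192.0.2.9", "dst": "198.51.100.7", "protocol": "udp", "bytes": 120},
]
flows = aggregate_flows(packets)
top_talker = max(flows, key=lambda key: flows[key]["bytes"])
```

Here three packets collapse into two flow records, and sorting by the bytes field immediately shows which conversation consumes the most bandwidth.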

This approach is widely used in performance optimization and security monitoring because it balances detail with scalability.

NetFlow and Similar Data Collection Methods

Flow-based technologies such as NetFlow and similar systems are used to collect detailed information about network traffic. These systems record metadata about packets, including byte counts, timing, and routing information, without capturing the full payload.

This allows administrators to analyze traffic behavior while minimizing storage and processing requirements. NetFlow-style data is especially useful for identifying bandwidth-heavy applications, detecting unusual traffic spikes, and understanding communication patterns across large networks.

By combining packet-level understanding with flow-level summaries, network analysis becomes both detailed and scalable.

Deep Packet Inspection and Security Enforcement

Deep Packet Inspection (DPI) is an advanced method used to examine the full contents of packets beyond just their headers. This includes analyzing payload data to identify application types, detect malicious content, and enforce security policies.

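DPI systems can classify traffic based on behavior rather than just port numbers or protocols. This makes it possible to identify disguised traffic that might otherwise bypass traditional filters, and to classify encrypted traffic from observable features such as packet sizes and timing even when the payload itself cannot be read.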

However, DPI also requires significant processing power, especially in high-speed networks where large volumes of packets must be analyzed in real time.

Byte Counts in Cybersecurity Analysis

Byte counts play an important role in cybersecurity by helping detect unusual data movement. For example, a sudden increase in outbound byte counts from a system may indicate unauthorized data transfer or a potential breach.

Similarly, unexpected spikes in inbound byte counts may suggest an attempted attack or flooding behavior. Security systems often correlate byte counts with packet patterns to identify anomalies more accurately.

When combined with behavioral analysis, byte-level monitoring becomes a powerful tool for detecting threats and preventing data loss.

Packet Behavior in Cloud Computing Environments

Cloud computing environments rely heavily on packet-based communication between distributed systems. Virtual machines, containers, and microservices continuously exchange packets across virtual networks.

In these environments, byte counts are used to measure resource consumption and optimize cost efficiency. Since cloud services often charge based on data usage, accurate byte tracking is essential for billing and resource management.

Packet routing in cloud systems is highly dynamic, with virtual networks adjusting paths based on load balancing and availability. This makes packet analysis even more important for maintaining performance consistency.

IoT Networks and Lightweight Packet Structures

The Internet of Things (IoT) introduces unique challenges for packet communication. IoT devices often have limited processing power and bandwidth, requiring lightweight packet structures to ensure efficiency.

In such environments, packets are optimized to carry minimal overhead while still maintaining essential control information. Byte counts are carefully managed to reduce unnecessary data transfer and extend device battery life.

Because IoT networks can include thousands or even millions of devices, efficient packet handling is critical for maintaining scalability and stability.

5G Networks and High-Speed Packet Transmission

5G networks significantly increase the speed and capacity of packet-based communication. In these environments, packets are transmitted at extremely high rates with very low latency.

Byte counts in 5G systems can reach massive volumes due to the high bandwidth available for applications such as streaming, augmented reality, and real-time communication.

To manage this, advanced scheduling, slicing, and prioritization techniques are used to ensure that packets are delivered efficiently without overwhelming network resources.

Error Correction and Data Integrity in High-Speed Networks

As network speeds increase, maintaining data integrity becomes more challenging. Error correction mechanisms are therefore essential in ensuring that packets remain accurate during transmission.

These mechanisms often involve redundancy, checksums, and retransmission protocols. When errors are detected, affected packets are either corrected or resent to ensure consistency.

Byte counts are also used in error analysis, helping determine how much data is successfully transmitted versus how much needs retransmission.

Buffering and Packet Queues in Transmission Systems

Buffering is used to temporarily store packets when immediate transmission is not possible due to congestion or processing delays. Packet queues manage the order in which these packets are transmitted.

Efficient buffering helps prevent data loss and smooths out traffic spikes. However, excessive buffering can introduce latency, which may negatively impact real-time applications.

Byte counts within buffers help determine how much data is waiting to be processed, allowing systems to adjust dynamically to changing network conditions.

Traffic Optimization and Load Balancing

Traffic optimization involves distributing packet loads evenly across available network paths to avoid congestion. Load balancing systems analyze packet flow and byte counts to determine the most efficient routing strategies.

By distributing traffic intelligently, networks can maintain high performance even under heavy usage. This also reduces the likelihood of bottlenecks and improves overall reliability.

Byte-level analysis helps ensure that no single path becomes overloaded with excessive data.
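A simple byte-aware balancing strategy is to place each flow on whichever path currently carries the fewest bytes. The greedy sketch below uses invented flow sizes and path names; practical load balancers often hash on flow identifiers instead, so that packets of one flow stay on one path.

```python
def assign_flows(flow_sizes, path_names):
    """Send each flow to the path carrying the fewest bytes so far.
    flow_sizes: dict of flow name -> bytes. Returns (assignment, bytes per path)."""
    load = {name: 0 for name in path_names}
    assignment = {}
    # Placing the largest flows first tends to give a more even split.
    for flow, size in sorted(flow_sizes.items(), key=lambda item: item[1], reverse=True):
        target = min(load, key=load.get)
        assignment[flow] = target
        load[target] += size
    return assignment, load

assignment, load = assign_flows({"a": 700, "b": 500, "c": 400, "d": 200}, ["p1", "p2"])
print(load)   # {'p1': 900, 'p2': 900}
```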

Role of Artificial Intelligence in Packet Analysis

Artificial intelligence is increasingly being used to analyze packet behavior and byte patterns. Machine learning models can detect anomalies, predict congestion, and optimize routing decisions based on historical data.

AI systems can process large volumes of packet and byte data much faster than traditional methods, making them ideal for real-time network management.

These systems continuously learn from network behavior, improving their accuracy over time.

Future Trends in Packet and Byte Management

The future of packet and byte management is moving toward greater automation, intelligence, and integration. Networks are becoming more adaptive, with systems capable of self-optimizing based on real-time data.

Quantum networking, edge computing, and advanced virtualization are expected to further change how packets are handled and measured. Byte tracking will become even more granular, enabling precise control over data usage and performance.

As networks continue to evolve, the importance of understanding packet structures and byte metrics will remain central to ensuring efficient, secure, and scalable communication systems.

Scalability Challenges in Packet-Based Networks

As modern networks continue to expand in size and complexity, scalability becomes one of the most important challenges in packet-based communication systems. Every additional device, user, and application increases the number of packets being generated and transmitted simultaneously. This growth in traffic places pressure on routing systems, bandwidth allocation, and processing capabilities.

To manage scalability, networks rely on hierarchical routing structures and distributed architectures. These approaches ensure that packet traffic is not concentrated in a single point, which could otherwise lead to congestion and performance degradation. Byte counts also increase significantly in large-scale environments, requiring more advanced monitoring systems capable of handling massive data volumes efficiently.

Scalability is not only about handling more traffic but also about maintaining consistent performance as demand grows. This requires continuous optimization of packet handling mechanisms and efficient management of byte-level data flow.

Edge Computing and Packet Processing Efficiency

Edge computing has introduced a new way of handling packet data by shifting processing closer to the source of data generation. Instead of sending all packets to centralized servers, edge systems process data locally, reducing latency and improving response times.

In this model, only relevant packets or summarized byte-level information are forwarded to central systems for further analysis. This reduces network congestion and improves overall efficiency.

By processing packets at the edge, systems can respond more quickly to real-time events, which is especially important in applications such as autonomous systems, smart cities, and industrial automation.

Virtual Networks and Packet Encapsulation

Virtual networks rely heavily on packet encapsulation techniques to create isolated communication environments within shared physical infrastructure. In these systems, packets are wrapped with additional headers that define virtual paths, allowing multiple logical networks to operate over the same hardware.

This encapsulation ensures that data remains separated and secure, even when it travels through shared physical links. Byte counts in virtual networks are tracked both at the physical and virtual layers, providing detailed insight into usage patterns.

Virtual networking is widely used in cloud computing and enterprise environments where flexibility and isolation are required simultaneously.

Bandwidth Management and Byte Allocation

Bandwidth management involves controlling how much data is allowed to flow through a network at any given time. Byte counts play a central role in this process, as they represent the actual volume of data being transmitted.

By analyzing byte usage patterns, networks can allocate bandwidth dynamically based on demand. High-priority applications may receive more bandwidth, while less critical services may be limited during peak usage periods.

This ensures fair distribution of network resources and prevents any single application from consuming excessive capacity.
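One common way to enforce such limits is a token bucket, which permits short bursts while capping the long-run average rate. The class below is a minimal sketch; the caller supplies the current time so the behavior is easy to follow, and the rate and capacity values in the usage note are assumptions.

```python
class TokenBucket:
    """Allow bursts up to `capacity` bytes at an average of `rate` bytes per second."""

    def __init__(self, rate: float, capacity: float):
        self.rate = rate
        self.capacity = capacity
        self.tokens = capacity      # start with a full bucket
        self.last_time = 0.0

    def allow(self, packet_bytes: int, now: float) -> bool:
        """Return True if the packet may be sent at time `now` (in seconds)."""
        elapsed = now - self.last_time
        self.tokens = min(self.capacity, self.tokens + elapsed * self.rate)
        self.last_time = now
        if packet_bytes <= self.tokens:
            self.tokens -= packet_bytes
            return True
        return False
```

With rate=1000 and capacity=1500, a 1,500-byte packet at time 0 is allowed, a 500-byte packet at 0.25 seconds is refused because only 250 tokens have accumulated, and the same packet is allowed at 0.5 seconds. Giving a high-priority application a bucket with a larger rate is one way to express the prioritization described above.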

Protocol Efficiency and Packet Overhead

Every protocol introduces a certain amount of overhead in each packet, typically in the form of headers and control information. While this overhead is necessary for routing, sequencing, and error handling, it also reduces the proportion of actual data that can be carried within each packet.

Protocol efficiency refers to the balance between useful payload data and overhead information. Highly efficient protocols minimize unnecessary overhead while still maintaining reliability and control.

Byte counts are directly affected by protocol efficiency, as more overhead increases total transmitted bytes without increasing useful data.
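The balance can be expressed as a simple ratio of payload bytes to total bytes. The header sizes below are the minimum sizes for IPv4, TCP, and UDP without options; link-layer framing is left out to keep the sketch short.

```python
IPV4_HEADER = 20   # bytes, minimum IPv4 header without options
TCP_HEADER = 20    # bytes, minimum TCP header without options
UDP_HEADER = 8     # bytes

def efficiency(payload_bytes: int, overhead_bytes: int) -> float:
    """Fraction of transmitted bytes that is useful payload."""
    return payload_bytes / (payload_bytes + overhead_bytes)

for payload in (60, 500, 1460):
    tcp = efficiency(payload, IPV4_HEADER + TCP_HEADER)
    udp = efficiency(payload, IPV4_HEADER + UDP_HEADER)
    print(payload, round(tcp, 3), round(udp, 3))
```

A 1,460-byte payload over TCP and IPv4 is roughly 97% efficient, while a 60-byte payload over the same headers is only 60% efficient, which is why many small packets inflate byte counts relative to the useful data delivered.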

Network Virtualization and Packet Abstraction

Network virtualization abstracts physical network resources into logical components that can be managed independently. In this environment, packets are treated as flexible units that can be routed through virtualized paths rather than fixed hardware routes.

This abstraction allows for greater flexibility in network design and resource allocation. Byte counts in virtualized environments are often tracked separately for different virtual instances, enabling detailed monitoring and control.

Network virtualization is a key component of modern data centers and cloud infrastructures.
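Per-instance byte tracking can be sketched as a pair of counters: one per virtual instance and one for the shared physical link. The class name `VirtualByteCounter`, the instance identifiers, and the per-packet encapsulation overhead are hypothetical values chosen for illustration, not figures from any particular virtualization product.

```python
from collections import defaultdict


class VirtualByteCounter:
    """Track bytes per virtual instance and total bytes on the shared link."""

    def __init__(self):
        self.virtual_bytes = defaultdict(int)  # inner packet bytes per instance
        self.physical_bytes = 0                # bytes on the wire, including encapsulation

    def record(self, instance_id, inner_bytes, encap_overhead):
        """Account for one encapsulated packet belonging to instance_id."""
        self.virtual_bytes[instance_id] += inner_bytes
        self.physical_bytes += inner_bytes + encap_overhead

    def encapsulation_bytes(self):
        """Bytes spent on encapsulation headers across all instances."""
        return self.physical_bytes - sum(self.virtual_bytes.values())


if __name__ == "__main__":
    counter = VirtualByteCounter()
    counter.record("tenant-a", 1000, 50)  # 50 is an assumed overhead value
    counter.record("tenant-b", 500, 50)
    counter.record("tenant-a", 200, 50)
    print(dict(counter.virtual_bytes), counter.physical_bytes)
```

Keeping the two views separate makes it possible to report usage per virtual instance while still seeing how much capacity the encapsulation itself consumes on the physical link.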

High-Frequency Packet Transmission Systems

In high-performance environments, such as financial trading systems or real-time analytics platforms, packets are transmitted at extremely high frequencies. These systems require minimal latency and highly optimized packet processing pipelines.

Even small delays in packet transmission can have significant consequences in such environments. Therefore, both packet structure and byte efficiency are carefully optimized to ensure maximum speed.

Aggregate byte volumes in these systems are often very large, so specialized hardware and software are needed to process the data in real time.

Synchronization and Packet Ordering Mechanisms

Since packets may arrive out of order due to varying network paths, synchronization mechanisms are essential for reconstructing data accurately. Sequence numbers within packet headers allow systems to reorder packets correctly upon arrival.

Without proper synchronization, data streams could become corrupted or incomplete. Byte counts help verify that all expected data has been received, ensuring completeness of transmission.

This is especially important in applications involving large file transfers or continuous data streams.
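The reordering and completeness check described above can be sketched as follows. This is a simplified illustration, not the mechanism of any specific protocol: it assumes sequence numbers are consecutive integers starting at 0, that the receiver knows the expected total byte count in advance, and the function name `reassemble` is hypothetical.

```python
def reassemble(packets, expected_bytes):
    """Reorder packets by sequence number and check completeness.

    packets is an iterable of (sequence_number, payload) pairs in arrival
    order. Returns (data, missing, complete), where missing lists the
    sequence numbers that never arrived.
    """
    by_seq = {}
    for seq, payload in packets:
        by_seq.setdefault(seq, payload)  # keep the first copy, ignore duplicates

    highest = max(by_seq, default=-1)
    missing = [seq for seq in range(highest + 1) if seq not in by_seq]

    data = b"".join(by_seq[seq] for seq in sorted(by_seq))
    complete = not missing and len(data) == expected_bytes
    return data, missing, complete


if __name__ == "__main__":
    # Packets arrive out of order, and packet 0 is delivered twice.
    arrived = [(2, b"lo!"), (0, b"he"), (1, b"l"), (0, b"he")]
    print(reassemble(arrived, expected_bytes=6))
```

The byte-count comparison catches a case the sequence numbers alone cannot: if the final packets of a stream are lost, no gap appears in the sequence, but the total falls short of the expected size.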

Adaptive Networking and Dynamic Packet Adjustment

Modern networks increasingly use adaptive techniques to adjust packet behavior based on current conditions. This includes changing packet sizes, adjusting transmission rates, and rerouting traffic dynamically.

These adaptations are driven by real-time analysis of packet flow and byte counts. When congestion is detected, systems may reduce packet transmission rates or switch to alternative routes.

Adaptive networking helps keep performance stable even under fluctuating conditions.
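One way to sketch rate adaptation driven by byte counts is additive increase, multiplicative decrease (AIMD), the general approach used in TCP congestion control. The text does not name a specific algorithm, so AIMD is an assumption here, as are the function names, the 90% utilization threshold, and the rate limits.

```python
def is_congested(bytes_in_interval, interval_s, capacity_bps, threshold=0.9):
    """Treat the link as congested when utilization reaches the threshold."""
    utilization = bytes_in_interval * 8 / (interval_s * capacity_bps)
    return utilization >= threshold


def adjust_rate(current_rate, congested, increase=1.0, decrease_factor=0.5,
                min_rate=1.0, max_rate=100.0):
    """AIMD step: back off sharply under congestion, otherwise probe upward slowly."""
    if congested:
        new_rate = current_rate * decrease_factor
    else:
        new_rate = current_rate + increase
    return max(min_rate, min(max_rate, new_rate))


if __name__ == "__main__":
    rate = 10.0  # arbitrary units, for example packets per interval
    # Hypothetical byte counts observed over one-second intervals on a 10 Mbit/s link.
    for observed_bytes in (400_000, 600_000, 1_200_000, 500_000):
        congested = is_congested(observed_bytes, 1.0, 10_000_000)
        rate = adjust_rate(rate, congested)
        print(f"bytes={observed_bytes:>9}  congested={congested}  rate={rate}")
```

The asymmetry is deliberate: halving the rate relieves congestion quickly, while the slow additive increase avoids immediately causing it again.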

Data Compression and Byte Optimization

Data compression techniques reduce the number of bytes required to transmit information. By eliminating redundancy, compression allows more efficient use of network resources.

Compressed packets carry more meaningful data per byte, reducing overall transmission costs and improving speed. However, compression also requires additional processing power for encoding and decoding.

Byte optimization through compression is widely used in video streaming, file transfer, and web communication systems.
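The trade-off between byte savings and processing cost can be illustrated with Python's standard `zlib` module. This is a minimal sketch: the helper names and the "send raw if compression does not help" fallback are illustrative assumptions, not part of any protocol described here.

```python
import zlib


def compress_payload(payload, level=6):
    """Compress a payload, falling back to the raw bytes if that is not smaller.

    Returns (data, was_compressed) so the receiver knows how to decode it.
    """
    compressed = zlib.compress(payload, level)
    if len(compressed) >= len(payload):
        return payload, False  # compression did not help, send as-is
    return compressed, True


def decompress_payload(data, was_compressed):
    """Reverse compress_payload."""
    return zlib.decompress(data) if was_compressed else data


if __name__ == "__main__":
    # Highly redundant sample payload (hypothetical).
    payload = b"GET /index.html HTTP/1.1\r\n" * 50
    data, was_compressed = compress_payload(payload)
    print(f"original={len(payload)} bytes, sent={len(data)} bytes, compressed={was_compressed}")
    assert decompress_payload(data, was_compressed) == payload
```

Redundant data such as repeated text shrinks substantially, while data with little redundancy (already compressed media or encrypted traffic, for example) gains little or nothing, which is why the sketch keeps the raw payload in that case.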

Protocol Evolution and Modern Standards

Network protocols continue to evolve to meet the demands of modern communication systems. Newer protocols are designed to handle larger byte volumes, faster transmission speeds, and more complex routing scenarios.

These advancements improve both packet efficiency and byte-level management, enabling more scalable and reliable networks.

As technology advances, protocols are becoming more specialized for different types of traffic, such as real-time communication, bulk data transfer, and machine-to-machine interaction.

Integration of Packet Analytics with Big Data Systems

Packet and byte data are increasingly integrated into big data analytics platforms. These systems process vast amounts of network information to identify trends, optimize performance, and detect anomalies.

By combining packet-level detail with byte-level summaries, organizations can gain deep insights into network behavior across entire infrastructures.

This integration supports predictive analysis, allowing systems to anticipate congestion or failures before they occur.
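As a small-scale illustration of combining packet-level records with byte-level summaries, the sketch below groups packets into fixed time intervals and flags intervals whose byte totals deviate strongly from the mean. It only detects anomalies after the fact; genuine prediction would need a forecasting model on top. The function names, the `(timestamp, length)` record format, and the threshold `k` are all hypothetical.

```python
from collections import defaultdict
from statistics import mean, pstdev


def bytes_per_interval(records, interval_s=1.0):
    """Summarize packet records (timestamp_s, length_bytes) into byte totals per interval."""
    totals = defaultdict(int)
    for timestamp, length in records:
        totals[int(timestamp // interval_s)] += length
    return dict(totals)


def flag_anomalies(totals, k=2.0):
    """Return the intervals whose byte total is more than k standard deviations from the mean."""
    values = list(totals.values())
    if len(values) < 2:
        return []
    mu, sigma = mean(values), pstdev(values)
    if sigma == 0:
        return []
    return sorted(i for i, v in totals.items() if abs(v - mu) > k * sigma)


if __name__ == "__main__":
    # Six quiet seconds of hypothetical traffic followed by one burst.
    records = []
    for second in range(6):
        records += [(second + 0.25, 600), (second + 0.75, 400)]
    records.append((6.5, 10_000))

    totals = bytes_per_interval(records)
    print(totals)
    print("anomalous intervals:", flag_anomalies(totals))
```

A production analytics platform would run this kind of aggregation over far larger data sets and with more robust statistics, but the structure is the same: packet-level detail feeds byte-level summaries, and the summaries drive the alerts.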

Self-Healing Networks and Automated Packet Recovery

Self-healing networks are designed to automatically detect and correct issues related to packet loss, routing failures, or congestion. These systems use continuous monitoring of packets and byte counts to identify irregularities.

When a problem is detected, the network can automatically reroute packets, adjust configurations, or trigger retransmissions without human intervention.

This improves reliability and reduces downtime in critical systems.

Final Integration of Packet and Byte Concepts

Protocol packets and byte counts together form the foundation of all digital communication systems. Packets define how information is structured, transmitted, and reconstructed, while byte counts measure the actual volume and flow of that information.

Across all layers of networking, from physical transmission to cloud computing and intelligent systems, these two concepts remain essential. Together they support the efficient, accurate, and secure delivery of data across increasingly complex and high-speed networks.

Conclusion

Packets provide the structure that allows data to be broken into manageable units, transmitted across complex networks, and accurately reassembled at the destination. Byte counts measure the actual volume of data being transferred, offering a clear understanding of network usage, performance, and efficiency.

When combined, these two concepts give a complete view of how information flows through a network. Packets explain how data is delivered, while byte counts explain how much data is moving at any given time. This relationship is essential for monitoring traffic, optimizing performance, and ensuring reliable communication between devices.

In real-world networks, both packets and byte counts are constantly analyzed to improve speed, reduce congestion, and maintain security. From small local systems to large global infrastructures, they play a critical role in ensuring that digital communication remains stable, scalable, and efficient.

As technology continues to evolve, the importance of understanding packet behavior and byte-level data will only increase. Advanced systems such as cloud computing, edge networks, and intelligent traffic management all depend on these fundamentals to function effectively.