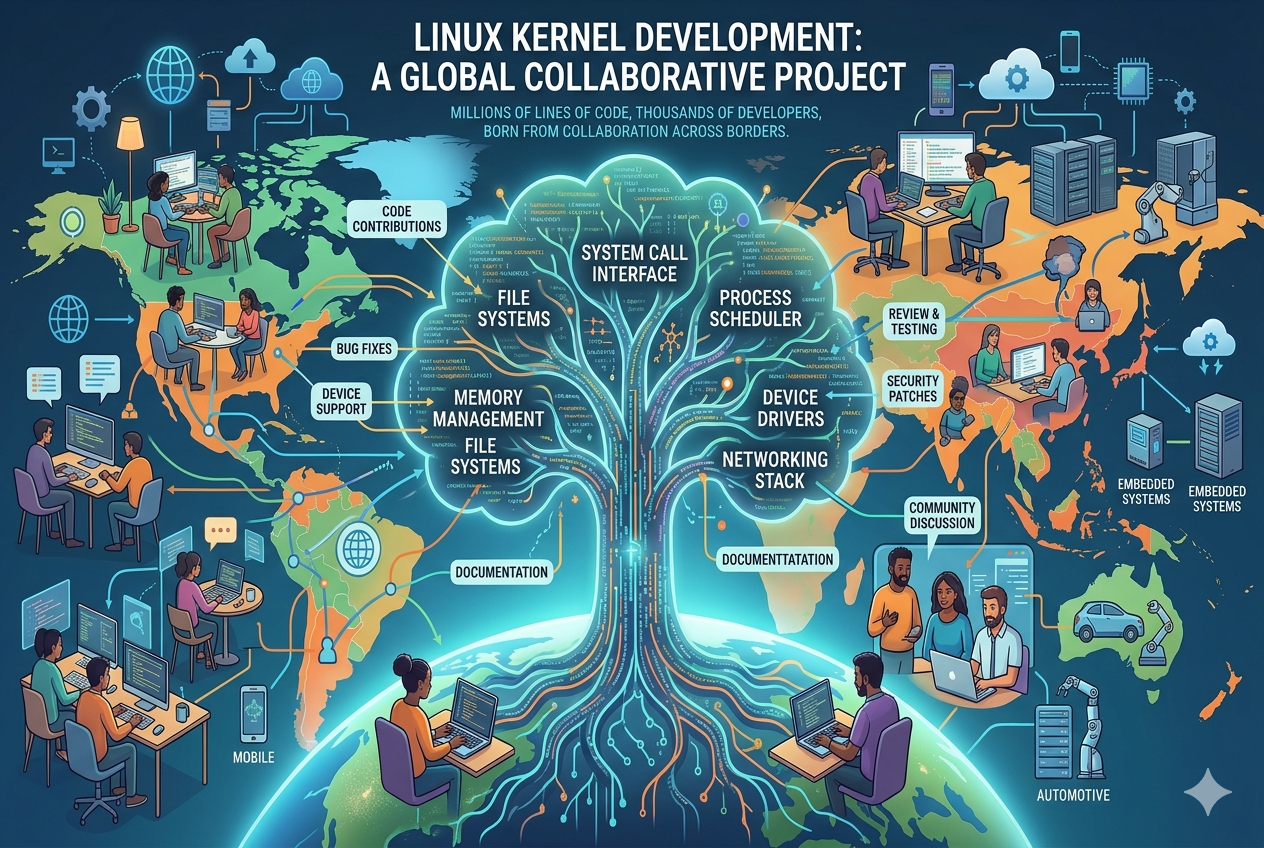

Linux kernel development is built on a uniquely open and distributed collaboration model that allows thousands of contributors to work together across different time zones, organizations, and technical backgrounds. Unlike traditional software development controlled by a single company, the kernel evolves through a community-driven approach where improvements are proposed, reviewed, tested, and refined collectively. This structure enables continuous evolution while ensuring that no single company or organization controls the system’s direction.

At the core of this model is the principle of merit-based contribution. Developers earn trust over time by consistently submitting high-quality patches and demonstrating technical expertise. Once recognized, they may become maintainers of specific subsystems, giving them responsibility for reviewing and approving changes in their area. This layered responsibility system creates a balance between openness and control, ensuring that innovation does not compromise system stability.

The distributed nature of development also allows the kernel to scale efficiently. Contributors from universities, research labs, independent communities, and major technology companies all participate in shaping its future. Each group brings different priorities and perspectives, which helps the kernel remain versatile and adaptable to a wide range of hardware and software environments.

The Structured Workflow of Contributions

Kernel development follows a highly organized workflow designed to manage complexity at scale. Contributions typically begin as patches submitted by developers to mailing lists where they are reviewed by peers and subsystem maintainers. This review process is essential, as it ensures that every change is carefully examined for correctness, performance impact, security implications, and compatibility with existing code.

Once a patch is reviewed and refined through feedback, it is integrated into subsystem branches maintained by trusted maintainers. These branches are then periodically merged into larger integration trees before eventually being included in official kernel releases. This hierarchical structure helps maintain order in a project that involves millions of lines of code and thousands of contributors.
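The flow from subsystem branches to a release can be pictured with a small model. This is only a sketch: the `Tree` class, the tree names, and the patch titles are hypothetical, and real merges are performed with Git rather than list operations.

```python
from dataclasses import dataclass, field


@dataclass
class Tree:
    """A repository in the merge hierarchy (names are hypothetical)."""
    name: str
    patches: list[str] = field(default_factory=list)

    def apply(self, patch: str) -> None:
        # A maintainer applies a reviewed patch to their own tree.
        self.patches.append(patch)

    def merge(self, other: "Tree") -> None:
        # Pull in every patch from `other` that this tree does not have yet.
        for patch in other.patches:
            if patch not in self.patches:
                self.patches.append(patch)


net = Tree("subsystem-net")
mm = Tree("subsystem-mm")
integration = Tree("integration")
mainline = Tree("mainline")

net.apply("net: fix checksum offload")
mm.apply("mm: reduce page allocator contention")

# Subsystem trees flow into the integration tree, then into the release tree.
integration.merge(net)
integration.merge(mm)
mainline.merge(integration)

print(mainline.patches)
```

Each tree only ever pulls from the level below it, which is what keeps the number of merge paths manageable as the contributor count grows.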

Testing is another critical part of the workflow. Automated systems and community testing efforts are used to detect bugs and regressions early in the development cycle. Continuous integration practices help ensure that new changes do not break existing functionality. This rigorous process contributes significantly to the kernel’s reputation for reliability and performance.

Global Community and Distributed Expertise

One of the most remarkable aspects of Linux kernel development is the diversity of its contributor base. Developers from across continents collaborate seamlessly despite differences in language, culture, and time zones. This global participation creates a continuous development cycle where work progresses almost around the clock.

Large technology companies contribute heavily to kernel development because they rely on it for their infrastructure, cloud platforms, and devices. At the same time, independent developers and academic researchers contribute innovations in areas such as file systems, networking, security, and hardware optimization. This combination of corporate and independent contributions creates a rich ecosystem of shared knowledge.

The distributed nature of expertise also means that no single group dominates the kernel’s direction. Instead, decisions are made through technical discussion and consensus-building. This ensures that the kernel evolves in a balanced way that serves a wide variety of use cases, from embedded systems to enterprise servers.

Leadership and Maintenance Structure

Although the kernel is open and collaborative, it still requires structured leadership to function effectively. Maintainers play a key role in this system. They are responsible for specific subsystems such as memory management, device drivers, or networking. Their job is to review contributions, guide development, and ensure consistency within their area of responsibility.

Above the subsystem maintainers is a coordination layer that integrates changes into broader kernel releases: the mainline tree, maintained by Linus Torvalds, into which subsystem trees are merged. This hierarchy helps manage complexity while maintaining transparency. Decisions are not imposed arbitrarily; instead, they emerge from technical discussions and community consensus.

This leadership model is essential for maintaining quality in such a large and fast-moving project. Without it, the kernel would risk fragmentation or instability due to conflicting contributions. Instead, the structured approach ensures that development remains coordinated while still being open to innovation.

The Role of Continuous Improvement

Linux kernel development is not static; it is a continuously evolving system. New hardware support, performance improvements, security enhancements, and feature updates are regularly introduced. This constant evolution is necessary to keep up with the rapidly changing technology landscape.

One of the strengths of the kernel’s development model is its ability to adapt quickly to emerging needs. When new processors, architectures, or devices are introduced, support can be integrated through community collaboration. Similarly, security vulnerabilities are addressed rapidly through coordinated responses from developers worldwide.

This continuous improvement cycle is supported by a regular release schedule. A new mainline version of the kernel is typically released roughly every two to three months, each incorporating incremental improvements. This ensures that the system remains modern, efficient, and secure without requiring disruptive overhauls.

Code Quality and Review Culture

A defining feature of kernel development is its strong emphasis on code quality. Every contribution undergoes detailed scrutiny before being accepted. Reviewers check for correctness, efficiency, readability, and adherence to coding standards. This culture of rigorous review helps prevent bugs and ensures long-term maintainability.

Discussions around code changes are highly technical and often involve multiple iterations. Developers may refine their contributions several times based on feedback before final acceptance. This iterative process improves not only the code itself but also the overall understanding of the system among contributors.

The emphasis on quality also extends to documentation and maintainability. Since the kernel is used in critical systems worldwide, clarity and reliability are essential. Poorly written or inefficient code is not accepted, regardless of its origin.

Scalability Across Hardware and Systems

One of the most powerful aspects of the Linux kernel is its ability to scale across a wide range of hardware platforms. It runs on everything from small embedded devices to massive data center servers. This scalability is made possible through modular design and careful abstraction of hardware-specific functionality.

Developers contribute drivers and optimizations that allow the kernel to support new devices and architectures. As technology evolves, the kernel adapts to support faster processors, larger memory systems, and more complex networking environments. This adaptability is a direct result of its collaborative development model.

The kernel’s scalability also makes it a preferred choice for cloud computing environments. Its flexibility allows organizations to customize it for specific workloads, optimizing performance and resource usage.

Security and Stability as Core Principles

Security is a fundamental concern in kernel development. Because the kernel operates at the core of the operating system, vulnerabilities can have serious consequences. As a result, security fixes are prioritized and often addressed rapidly through coordinated global efforts.

Developers continuously analyze the codebase for potential weaknesses and implement protections against emerging threats. The review process itself acts as a layer of security, as changes are examined by reviewers and maintainers before they are integrated.

Stability is equally important. Since many systems depend on the kernel for critical operations, changes must be carefully evaluated to avoid introducing regressions. This careful balance between innovation and stability is one of the key reasons the kernel is trusted in mission-critical environments.

Innovation Driven by Community Needs

Innovation in kernel development is often driven by real-world requirements. Developers working on large-scale systems frequently contribute improvements that address performance bottlenecks or hardware limitations they encounter. These contributions benefit the wider community once integrated into the kernel.

This practical, needs-driven innovation ensures that development remains grounded in real-world usage rather than theoretical design alone. It also encourages continuous feedback between users and developers, creating a cycle of improvement.

Over time, this approach has led to significant advancements in areas such as networking performance, file system design, and process scheduling. Each improvement builds on previous work, resulting in a highly optimized and mature system.

Long-Term Evolution and Sustainability

Linux kernel development is designed for long-term sustainability. The modular structure of the codebase allows parts of it to be updated or replaced without disrupting the entire system. This makes it possible for the kernel to evolve over decades while maintaining backward compatibility for user-space programs.

The community-driven model also ensures that knowledge is distributed across many contributors rather than concentrated in a single organization. This reduces dependency risks and ensures continuity even as individual contributors come and go.

Sustainability is further supported by the global nature of participation. With developers from around the world contributing continuously, the project is not limited by geography or organizational boundaries.

The Future of Kernel Development

As technology continues to advance, Linux kernel development is expected to grow even more complex and influential. Emerging fields such as artificial intelligence, edge computing, and quantum technologies will require new levels of performance and flexibility from operating systems.

The collaborative model that has defined kernel development so far will remain essential in meeting these challenges. By combining global expertise, structured governance, and continuous innovation, the kernel will continue to evolve alongside modern computing needs.

Its ability to adapt, scale, and integrate contributions from diverse sources ensures that it will remain a foundational component of global computing infrastructure for years to come.

The Evolution of Development Practices Over Time

Linux kernel development has evolved significantly since its early stages, both in scale and methodology. In its initial phase, the project was relatively small, with contributions coming from a limited number of developers who could directly coordinate with each other. As adoption grew, the number of contributors expanded rapidly, requiring more structured processes to manage complexity. This transition marked the shift from informal collaboration to a highly organized global engineering effort.

Over time, development practices became more standardized to handle the increasing volume of contributions. Mailing lists became the primary communication channel, allowing developers from around the world to discuss technical changes in a transparent manner. This openness ensured that design decisions were visible to the entire community, reducing ambiguity and encouraging informed participation.

As the project matured, new tools and workflows were introduced to improve efficiency. Version control systems (most notably Git, which was originally created for kernel development), patch tracking mechanisms, and automated testing frameworks helped streamline development. These improvements allowed the kernel to scale without sacrificing quality or stability, even as the number of contributors grew into the thousands.

Subsystem Specialization and Modular Architecture

A defining characteristic of Linux kernel development is its modular architecture. The kernel is divided into multiple subsystems, each responsible for a specific area such as memory management, file systems, networking, or device drivers. This separation of concerns allows developers to focus on specialized areas without needing to understand the entire codebase in detail.

Subsystem specialization makes collaboration more efficient by distributing responsibility among experts. Maintainers oversee their respective domains and ensure that contributions align with architectural goals and quality standards. This structure reduces complexity while enabling parallel development across different parts of the kernel.
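One way to picture how responsibility is distributed is a lookup from a changed file to the subsystem that owns it. The sketch below is illustrative only: the `OWNERS` table, the `route` function, and the `example.c` path are assumptions made for this example, not the kernel's actual ownership records or tooling.

```python
# Hypothetical ownership table: path prefix -> subsystem name.
OWNERS = {
    "mm/": "memory management",
    "fs/": "file systems",
    "net/": "networking",
    "drivers/": "device drivers",
    "drivers/net/": "network drivers",
}


def route(path: str) -> str:
    """Return the subsystem whose prefix matches `path` most specifically."""
    matches = [prefix for prefix in OWNERS if path.startswith(prefix)]
    if not matches:
        return "unassigned"
    # The longest matching prefix is the most specific owner.
    return OWNERS[max(matches, key=len)]


print(route("drivers/net/ethernet/example.c"))
print(route("mm/page_alloc.c"))
```

Choosing the longest matching prefix lets a specialized area sit inside a broader one without ambiguity about who reviews a given change.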

Modularity also enhances maintainability. Since subsystems are loosely coupled, changes in one area are less likely to disrupt others. This design principle is crucial for a system as large and widely used as the Linux kernel, where stability and reliability are essential.

The Role of Communication in Kernel Development

Effective communication is a cornerstone of kernel development. Developers rely heavily on mailing lists and asynchronous discussions to propose changes, share feedback, and resolve technical disagreements. These discussions are highly detailed and often involve multiple iterations before reaching consensus.

The communication style is primarily technical and focused on problem-solving. Contributors are expected to provide clear explanations, evidence, and rationale for their proposed changes. This ensures that decisions are based on technical merit rather than personal preference.

Because contributors are spread across different time zones, asynchronous communication is essential. It allows work to proceed without real-time coordination. As a result, progress can be maintained around the clock, contributing to the project’s rapid evolution.

Conflict Resolution and Decision-Making

With a large and diverse community, disagreements are inevitable in kernel development. However, the project has established mechanisms for resolving conflicts constructively. Technical discussions are encouraged to remain objective, focusing on code quality, performance, and design principles rather than personal opinions.

When disagreements arise, maintainers and senior contributors play a key role in guiding decisions. Their experience helps evaluate competing proposals and determine the most appropriate solution. In many cases, compromises are reached that balance different perspectives while maintaining system integrity.

Decision-making is ultimately driven by technical consensus. This means that proposals are accepted based on their merit and alignment with the project’s long-term goals. This approach helps the kernel remain stable, efficient, and maintainable over time.

Security Development Lifecycle

Security is deeply integrated into the kernel development lifecycle. Rather than being treated as a separate phase, security considerations are embedded throughout the entire process. Developers are encouraged to identify potential vulnerabilities early and address them during code review.

Security patches are often developed and deployed quickly in response to discovered vulnerabilities. The global nature of the community allows rapid coordination, ensuring that fixes are distributed efficiently. This responsiveness is vital given the kernel’s role in critical infrastructure systems worldwide.

In addition to reactive measures, proactive security practices are also emphasized. Code reviews, static analysis tools, and testing frameworks help identify potential risks before they become exploitable. This layered approach strengthens the overall resilience of the system.

Performance Optimization and System Efficiency

Performance optimization is a continuous focus in kernel development. Contributors regularly work to improve CPU efficiency, memory usage, I/O throughput, and overall system responsiveness. These optimizations are essential for ensuring that the kernel performs well across diverse hardware environments.

One of the strengths of the development model is that performance improvements often emerge from real-world usage. Developers working on large-scale systems frequently identify bottlenecks and contribute solutions that benefit the broader community. This feedback loop helps drive continuous improvement.

The kernel’s ability to efficiently manage system resources is one of its key advantages. Through careful scheduling, memory management, and hardware abstraction, it ensures that applications run smoothly even under heavy workloads.

Hardware Support and Driver Ecosystem

A major aspect of kernel development is maintaining and expanding hardware support. The kernel must interface with a vast array of devices, including processors, storage systems, network interfaces, and specialized hardware components. This requires a constantly evolving driver ecosystem.

Device drivers are often contributed by hardware manufacturers or community developers. These drivers enable the kernel to communicate effectively with hardware, ensuring compatibility and performance. As new devices are introduced, corresponding support is integrated into the kernel through collaborative development.

The driver ecosystem is one of the largest and most complex parts of the kernel. Its continuous expansion ensures that Linux remains compatible with modern hardware, making it suitable for both consumer devices and enterprise systems.

Testing Infrastructure and Quality Assurance

Testing plays a critical role in maintaining kernel stability. A combination of automated testing systems and community-driven testing efforts helps identify bugs and regressions early in the development cycle. This ensures that new changes do not negatively impact existing functionality.

Automated testing frameworks run a wide range of checks, including unit tests, integration tests, and performance benchmarks. These systems help detect issues quickly and provide feedback to developers during the review process.

Community testing also contributes significantly to quality assurance. Developers and users across the world run new kernel versions in real environments and report issues that controlled test setups may miss. This collaborative approach enhances reliability and robustness.

Release Cycle and Version Management

The kernel follows a structured release cycle that ensures regular updates and predictable development progress. New versions are released periodically, each incorporating improvements, bug fixes, and new features. This cycle helps maintain a balance between innovation and stability.

Between major releases, smaller stable updates address critical issues and security vulnerabilities. This ensures that systems running the kernel remain secure and functional without requiring major upgrades.

Version management is carefully handled to maintain compatibility across different releases. This allows developers and organizations to plan upgrades effectively while minimizing disruption.

Long-Term Support and Maintenance Strategy

Long-term support (LTS) versions of the kernel play a crucial role in ensuring stability for enterprise and production environments. These versions receive updates for an extended period, focusing primarily on security fixes and critical bug corrections rather than new features.

LTS kernels are widely used in systems that require high reliability and minimal change, such as servers, embedded devices, and industrial systems. This approach allows organizations to maintain stable environments while still benefiting from ongoing security improvements.

The maintenance strategy ensures that even older systems remain secure and functional, contributing to the kernel’s widespread adoption in critical infrastructure.

Global Impact on Computing Infrastructure

The Linux kernel has become a foundational component of global computing infrastructure. It powers a vast range of systems, including servers, cloud platforms, mobile devices, networking equipment, and embedded systems. Its flexibility and reliability make it suitable for virtually every computing environment.

Its widespread adoption is a direct result of its open and collaborative development model. By allowing contributions from around the world, the kernel continuously evolves to meet new technological demands. This adaptability has made it a cornerstone of modern digital infrastructure.

The impact of the kernel extends beyond technology into industries such as finance, telecommunications, healthcare, and scientific research. Its stability and performance make it a trusted foundation for critical systems.

Continuous Community Growth and Knowledge Sharing

The kernel community continues to grow as more developers and organizations participate in its development. Knowledge sharing is a key aspect of this growth, with experienced contributors mentoring new developers and helping them understand complex systems.

This culture of mentorship ensures that expertise is passed on to new generations of developers. It also helps maintain consistency and quality across the project as it expands.

Workshops, conferences, and online discussions further support knowledge exchange, strengthening the global community and fostering innovation.

Enduring Strength of the Development Model

The long-term success of Linux kernel development lies in its ability to combine openness, structure, and technical excellence. Its collaborative model allows it to harness global expertise while maintaining strong governance and quality control.

This balance between freedom and discipline has enabled the kernel to grow into one of the most advanced and widely used software systems in the world. Its continued evolution reflects the strength of community-driven engineering and its ability to adapt to changing technological landscapes.

Advanced Scheduling and Process Management

Linux kernel development places significant emphasis on how the system manages processes and allocates CPU time efficiently. The scheduler is one of the most critical components of the kernel because it determines how tasks are prioritized and executed across available processor cores. Over time, this subsystem has evolved to support increasingly complex workloads, from simple desktop applications to massive distributed computing systems.

The scheduling system is designed to balance fairness, responsiveness, and throughput. It ensures that no single process monopolizes system resources while still allowing high-priority tasks to execute efficiently. Developers continuously refine scheduling algorithms to improve performance under different conditions, such as heavy multitasking environments or real-time computing scenarios.
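The balance between fairness and priority can be illustrated with a toy weighted fair-share scheduler. This is a simplified sketch and not the kernel's actual scheduling algorithm: the `simulate` function, the task names, and the weights are assumptions chosen for illustration.

```python
import heapq
from fractions import Fraction


def simulate(weights: dict[str, int], ticks: int) -> dict[str, int]:
    """Return how many ticks each task received.

    `weights` maps a task name to a relative priority weight (hypothetical values).
    """
    # Heap of (virtual_runtime, name); the task with the least virtual runtime runs next.
    queue = [(Fraction(0), name) for name in sorted(weights)]
    heapq.heapify(queue)
    ran = {name: 0 for name in weights}

    for _ in range(ticks):
        vruntime, name = heapq.heappop(queue)
        ran[name] += 1
        # Heavier tasks accumulate virtual runtime more slowly, so they run more often.
        heapq.heappush(queue, (vruntime + Fraction(1, weights[name]), name))
    return ran


shares = simulate({"batch": 1, "interactive": 3}, ticks=40)
print(shares)  # {'batch': 10, 'interactive': 30}
```

The lower-weight task still runs once in every four ticks, so it is never starved, while the higher-weight task receives three times as much CPU time.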

Process management also extends to how the kernel handles task creation, termination, and inter-process communication. These mechanisms allow applications to interact smoothly while maintaining system stability. Improvements in this area directly impact system responsiveness and overall efficiency, making it a key focus of ongoing development efforts.

Memory Management and System Optimization

Memory management is one of the most complex and essential parts of the Linux kernel. It is responsible for allocating, tracking, and optimizing the use of system memory across all running processes. Efficient memory handling is crucial for performance, especially in systems with limited resources or high workloads.

The kernel uses advanced techniques such as virtual memory, paging, and caching to optimize memory usage. Virtual memory allows applications to operate as if they have access to large contiguous memory spaces, even when physical memory is limited. This abstraction improves flexibility and simplifies application development.
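The core idea behind paging can be shown with a single-level page table lookup. This is a minimal sketch under stated assumptions: the 4096-byte page size, the `translate` function, and the example mapping are illustrative, and real hardware uses multi-level tables and dedicated translation caches.

```python
PAGE_SIZE = 4096  # assumed page size in bytes


class PageFault(Exception):
    """Raised when a virtual page has no physical frame mapped."""


def translate(page_table: dict[int, int], virtual_address: int) -> int:
    """Map a virtual address to a physical address via a single-level page table."""
    page_number, offset = divmod(virtual_address, PAGE_SIZE)
    if page_number not in page_table:
        raise PageFault(f"page {page_number} is not resident")
    frame_number = page_table[page_number]
    return frame_number * PAGE_SIZE + offset


# Hypothetical mapping: adjacent virtual pages 0 and 1 live in scattered physical frames.
page_table = {0: 7, 1: 2}

print(hex(translate(page_table, 0x0010)))  # 0x7010 (page 0, offset 0x10)
print(hex(translate(page_table, 0x1234)))  # 0x2234 (page 1, offset 0x234)
```

The application sees addresses `0x0010` and `0x1234` as part of one unbroken range, even though the underlying frames are not adjacent in physical memory.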

Caching mechanisms further enhance performance by storing frequently accessed data in faster memory locations. This reduces the need for repeated access to slower storage devices. Developers continuously refine these systems to reduce latency and improve overall efficiency.
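A least-recently-used (LRU) cache is one simple way to show the effect described here. The sketch below is an assumption-laden illustration, not the kernel's caching policy, which is considerably more elaborate; `LRUCache` and the `slow_read` callable are hypothetical names.

```python
from collections import OrderedDict


class LRUCache:
    def __init__(self, capacity: int, slow_read):
        self.capacity = capacity
        self.slow_read = slow_read  # callable standing in for a slow storage read
        self.entries = OrderedDict()
        self.misses = 0

    def read(self, key):
        if key in self.entries:
            self.entries.move_to_end(key)  # mark as most recently used
            return self.entries[key]
        self.misses += 1
        value = self.slow_read(key)
        self.entries[key] = value
        if len(self.entries) > self.capacity:
            self.entries.popitem(last=False)  # evict the least recently used entry
        return value


cache = LRUCache(capacity=2, slow_read=lambda block: f"data-{block}")
for block in [1, 2, 1, 3, 1, 2]:
    cache.read(block)
print(cache.misses)  # 4
```

Of the six reads, only four reach the slow backing store; the repeated reads of block 1 are served from the cache.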

Memory management also includes mechanisms for detecting and preventing leaks, fragmentation, and corruption. These safeguards help maintain system stability over long periods of operation, which is essential for servers and mission-critical systems.

File Systems and Data Management Evolution

File systems are another core area of kernel development, responsible for organizing and managing how data is stored and retrieved. Over time, Linux has supported a wide range of file systems, each optimized for different use cases such as performance, reliability, or compatibility.

Modern file systems in the kernel are designed to handle large volumes of data efficiently while maintaining integrity and consistency. Features such as journaling help protect against data loss in case of unexpected system failures. This ensures that file operations can be recovered safely after crashes or power interruptions.
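The recovery behavior that journaling provides can be sketched with a toy write-ahead log. This is a simplified model under stated assumptions: `JournaledStore` and its methods are hypothetical, in-memory lists stand in for on-disk structures, and real file systems differ in what they journal and how.

```python
class JournaledStore:
    """Toy key-value store with a write-ahead journal (all names hypothetical)."""

    def __init__(self):
        self.journal = []  # stands in for the on-disk journal area
        self.data = {}     # stands in for the main on-disk structures

    def log(self, txid: int, key: str, value: str) -> None:
        # Step 1: record the intended change in the journal.
        self.journal.append(("write", txid, key, value))

    def commit(self, txid: int) -> None:
        # Step 2: mark the transaction as complete in the journal.
        self.journal.append(("commit", txid))

    def recover(self) -> None:
        # After a crash, replay only the transactions that reached their commit record.
        committed = {rec[1] for rec in self.journal if rec[0] == "commit"}
        for rec in self.journal:
            if rec[0] == "write" and rec[1] in committed:
                _, _, key, value = rec
                self.data[key] = value
        self.journal.clear()


store = JournaledStore()
store.log(1, "a.txt", "hello")
store.commit(1)
store.log(2, "b.txt", "partial")  # simulated crash before commit(2)

store.recover()
print(store.data)  # {'a.txt': 'hello'}
```

The interrupted second transaction is discarded rather than half-applied, which is how a journal keeps the stored state consistent after a crash or power interruption.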

Developers continue to innovate in this area by improving performance, scalability, and reliability. Support for advanced storage technologies, such as solid-state drives and distributed storage systems, has further expanded the kernel’s capabilities. These improvements make it suitable for both personal computing and enterprise-scale data centers.

Networking Stack and Global Connectivity

The networking subsystem of the Linux kernel is one of its most powerful and widely used components. It enables communication between systems across local networks and the internet, supporting a wide range of protocols and technologies.

The kernel’s networking stack is designed for high performance and scalability. It efficiently handles large volumes of data traffic while minimizing latency. This makes it ideal for use in servers, cloud platforms, and high-speed networking environments.

Developers continuously work to improve throughput, reduce packet loss, and enhance security in network communications. Support for modern protocols and hardware accelerations ensures that the kernel remains competitive in evolving networking landscapes.

The networking subsystem also plays a key role in distributed computing and cloud infrastructure. Its flexibility allows systems to communicate efficiently across global networks, enabling large-scale applications and services.

Device Drivers and Hardware Integration

Device drivers form a critical bridge between the kernel and hardware components. They allow the operating system to interact with physical devices such as graphics cards, storage controllers, network interfaces, and input devices.

Kernel development includes continuous efforts to expand and improve driver support. As new hardware technologies emerge, corresponding drivers must be developed and integrated into the kernel. This ensures compatibility and optimal performance across a wide range of devices.

Driver development is often complex because it requires deep knowledge of both hardware architecture and kernel internals. Contributors working in this area play a vital role in maintaining the kernel’s versatility and adaptability.

The integration of drivers into the kernel follows strict review processes to ensure stability and security. Poorly designed drivers can cause system instability, so careful validation is essential before inclusion.

Security Architecture and Protection Mechanisms

Security in the Linux kernel is implemented through multiple layers of protection mechanisms. These include access control systems, privilege separation, and isolation techniques that prevent unauthorized access to critical system resources.

One of the key principles of kernel security is minimizing the attack surface. This involves reducing unnecessary complexity and ensuring that only essential components operate at high privilege levels. Developers continuously evaluate code to identify and eliminate potential vulnerabilities.

Modern security features also include sandboxing, encryption support, and secure memory handling. These mechanisms help protect systems from both internal and external threats. The collaborative development model ensures that security improvements are rapidly shared and integrated across the global community.

Security updates are treated with high priority, and patches are often distributed quickly to address newly discovered vulnerabilities. This responsiveness is essential for maintaining trust in systems that rely on the kernel for critical operations.

Real-Time Systems and Specialized Applications

The Linux kernel is also used in real-time computing environments where timing precision is critical. Real-time systems require predictable response times for tasks, often used in industrial automation, robotics, and telecommunications.

To support these use cases, the kernel offers real-time preemption support, developed over many years as the PREEMPT_RT patch set, along with configuration options that reduce latency and improve determinism. These enhancements allow the system to meet strict timing requirements while maintaining general-purpose functionality.

Specialized applications often require customized kernel configurations. The modular nature of the kernel allows developers to tailor it to specific needs without modifying the entire system. This flexibility is one of the reasons it is widely used in embedded systems and specialized hardware platforms.

Scalability in Modern Data Centers

Linux kernel scalability is one of the main reasons it dominates modern data center infrastructure. It is capable of efficiently managing thousands of processes simultaneously while maintaining stability and performance.

In large-scale environments, the kernel must handle high levels of concurrency, network traffic, and storage operations. Continuous improvements in scheduling, memory management, and networking ensure that it can meet these demands effectively.

Cloud computing platforms rely heavily on the kernel’s ability to scale dynamically. Virtualization and containerization technologies built on top of the kernel allow multiple isolated environments to run efficiently on shared hardware.

This scalability has made Linux the backbone of much of the internet’s infrastructure, powering everything from web servers to large distributed computing systems.

Contribution Lifecycle and Developer Onboarding

Becoming a contributor to kernel development involves a learning process that emphasizes technical depth and community engagement. New developers typically start by submitting small patches or fixing minor issues before progressing to more complex contributions.

Mentorship from experienced contributors plays a key role in onboarding new developers. This helps ensure that newcomers understand coding standards, review processes, and architectural principles.

Over time, contributors gain recognition and may take on more responsibility, such as maintaining subsystems or reviewing other developers’ work. This progression helps sustain the long-term health of the project by continuously integrating new talent.

Sustainability of Open Development Model

The sustainability of Linux kernel development is rooted in its open and collaborative nature. Because contributions come from a wide range of sources, the project is not dependent on any single organization or individual.

This distributed responsibility ensures long-term resilience. Even if contributors leave or organizations change priorities, the community continues to maintain and evolve the kernel.

The open model also encourages transparency and accountability. All changes are publicly reviewed, ensuring that development remains accessible and trustworthy.

Technological Influence Across Industries

The Linux kernel has had a profound influence on nearly every major technology sector. It is widely used in cloud computing, artificial intelligence systems, telecommunications, automotive technology, and scientific computing.

Its flexibility allows organizations to build highly customized systems tailored to their specific needs. This adaptability has made it a preferred choice for both startups and large enterprises.

The kernel’s influence continues to grow as new technologies emerge. Its ability to integrate with modern innovations ensures that it remains relevant in rapidly changing technological landscapes.

Ongoing Innovation and Future Directions

Kernel development continues to evolve in response to emerging computing trends. Areas such as artificial intelligence, edge computing, and distributed systems are driving new requirements for performance and scalability.

Developers continue to explore ways to improve efficiency, reduce latency, and make resource management smarter. These efforts are guided by real-world use cases and community-driven research.

The future of kernel development will likely involve even greater levels of automation, optimization, and hardware integration. The kernel’s open, collaborative model positions it to keep adapting as the technology around it advances.

Emerging Technologies and Kernel Adaptation

As computing continues to evolve, the Linux kernel is increasingly adapting to emerging technologies such as artificial intelligence, machine learning workloads, edge computing, and heterogeneous hardware systems. These advancements demand higher efficiency, better parallel processing capabilities, and more intelligent resource management from the kernel. Developers are actively working on optimizing subsystems to better support specialized accelerators like GPUs, TPUs, and other AI-focused hardware.

Edge computing has also introduced new challenges: devices operate with limited resources yet require high responsiveness and reliability. The kernel is being refined to run efficiently in such constrained environments while remaining compatible with larger systems. This adaptability highlights the kernel’s ability to remain relevant across both traditional and next-generation computing paradigms.

Community-Driven Growth and Knowledge Transfer

The continued success of Linux kernel development depends heavily on its strong culture of knowledge sharing and mentorship. Experienced developers actively guide newcomers through the contribution process, helping them understand complex system architecture and development standards. This ensures that expertise is continuously passed down, keeping the community vibrant and sustainable.

Open communication channels and collaborative review processes allow ideas to be freely exchanged and refined. This environment encourages learning and innovation, enabling contributors to grow while improving the quality of the kernel itself. Over time, this shared knowledge base strengthens the entire ecosystem and ensures long-term stability.

Enduring Impact on Global Computing Infrastructure

The Linux kernel has become an indispensable foundation of modern computing infrastructure, powering everything from personal devices to global-scale data centers. Its reliability, flexibility, and performance have made it a trusted choice for critical systems across industries such as finance, healthcare, telecommunications, and scientific research.

Its open and collaborative development model ensures that it continues to evolve alongside technological advancements. By integrating contributions from a diverse global community, the kernel remains adaptable, secure, and efficient. This enduring impact highlights its role not just as a software component, but as a fundamental pillar of the digital world.

Conclusion

Linux kernel development stands as one of the most remarkable examples of large-scale global collaboration in software engineering. Its success is rooted in an open development model where thousands of contributors collectively shape a complex and highly critical system. Through structured workflows, rigorous code review, and distributed responsibility, the kernel has achieved a rare balance between innovation, stability, and long-term sustainability.

The strength of this project lies in its ability to evolve continuously without losing coherence. Developers from different regions and organizations contribute specialized knowledge, so that every part of the system, from memory management to networking and security, receives constant refinement. This shared effort has allowed the kernel to scale across an extraordinary range of environments, from small embedded devices to massive cloud infrastructures.

Equally important is the culture of transparency and technical discipline that defines its development process. Decisions are driven by engineering merit, detailed discussion, and consensus rather than centralized control. This ensures that improvements are both practical and reliable, reinforcing trust in the system across industries worldwide.

As computing continues to advance, the Linux kernel remains adaptable to new challenges and technologies. Its collaborative foundation ensures that it will keep evolving alongside modern demands, maintaining its position as a core pillar of global digital infrastructure.