NetFlow is a widely used protocol for understanding how traffic moves across a computer network. It was originally developed by Cisco to help network engineers gain visibility into traffic patterns and improve the performance, security, and reliability of IT systems. Instead of inspecting every packet in detail, NetFlow summarizes traffic into flows, which makes large volumes of network activity practical to analyze.

In modern IT environments, where networks carry massive amounts of data every second, having a clear view of what is happening inside the network is essential. NetFlow provides that visibility by collecting metadata about traffic flows, such as source and destination addresses, protocols, and duration of communication.

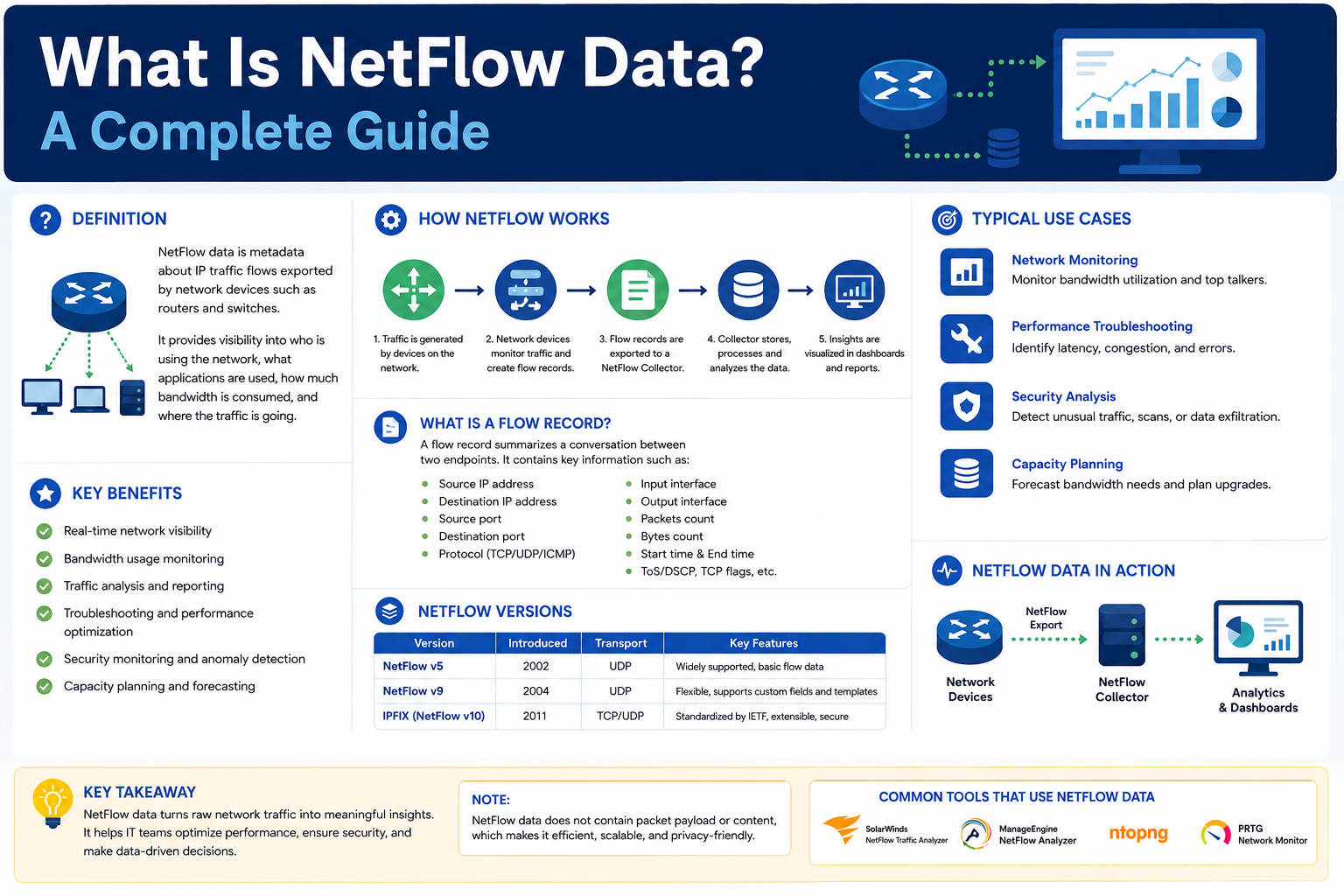

Understanding NetFlow Data

NetFlow data refers to the structured information collected about network traffic flows. Rather than capturing the full content of each packet, it records only the key attributes that describe how communication is happening between devices. This makes it highly efficient for monitoring and analysis.

A flow is essentially a set of packets that share common characteristics, such as the same source IP address, destination IP address, ports, and protocol type. When these packets are grouped together, they form a flow that represents one direction of a conversation between two endpoints on a network.

NetFlow data helps organizations answer important questions such as which applications are consuming the most bandwidth, which users are generating the most traffic, and when network congestion is occurring.

How Network Flows Work

A network flow is defined as a unidirectional sequence of packets that share specific attributes. These attributes typically include the source address, destination address, transport protocol, and port numbers. Each flow represents one direction of communication between two systems.

For example, when a user accesses a website, multiple flows are created between the user’s device and the web server. One flow represents outgoing traffic from the user, while another represents incoming responses.

Each flow contains statistical information such as the number of packets, total bytes transferred, and the duration of the communication. This aggregated view allows network administrators to analyze behavior without examining every packet individually.
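The grouping described above can be sketched in a few lines of Python. This is a minimal illustration, not how any particular exporter is implemented: the `Packet` and `FlowStats` types, the millisecond timestamps, and the example addresses are all assumptions made for the sketch. Packets are keyed by source address, destination address, ports, and protocol, so each direction of a conversation becomes its own flow.

```python
from collections import namedtuple
from dataclasses import dataclass

# Hypothetical, simplified packet: timestamp in milliseconds plus the key fields.
Packet = namedtuple("Packet", "ts_ms src_ip dst_ip src_port dst_port protocol size")


@dataclass
class FlowStats:
    first_ms: int
    last_ms: int
    packets: int = 0
    byte_count: int = 0

    @property
    def duration_ms(self) -> int:
        return self.last_ms - self.first_ms


def aggregate_flows(packets):
    """Group packets into unidirectional flows keyed by the 5-tuple."""
    flows = {}
    for p in packets:
        key = (p.src_ip, p.dst_ip, p.src_port, p.dst_port, p.protocol)
        stats = flows.setdefault(key, FlowStats(first_ms=p.ts_ms, last_ms=p.ts_ms))
        stats.packets += 1
        stats.byte_count += p.size
        stats.first_ms = min(stats.first_ms, p.ts_ms)
        stats.last_ms = max(stats.last_ms, p.ts_ms)
    return flows


# One client/server exchange (protocol 6 = TCP) yields two flows, one per direction.
packets = [
    Packet(0, "192.0.2.10", "198.51.100.20", 51000, 443, 6, 60),
    Packet(100, "198.51.100.20", "192.0.2.10", 443, 51000, 6, 60),
    Packet(200, "192.0.2.10", "198.51.100.20", 51000, 443, 6, 500),
    Packet(500, "198.51.100.20", "192.0.2.10", 443, 51000, 6, 1500),
]
flows = aggregate_flows(packets)
for key, stats in flows.items():
    print(key, stats.packets, stats.byte_count, stats.duration_ms)
```

Four packets collapse into two summaries, which is the reduction that makes flow data so much cheaper to store and query than raw packets.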

Flow Records and Their Role

Flow records are the stored output generated from observed network flows. These records contain summarized details about each flow, including timestamps, traffic volume, and communication endpoints.

Instead of storing raw packet data, flow records compress the information into meaningful summaries. This reduces storage requirements while still providing valuable insights for analysis and troubleshooting.

Flow records are typically sent from network devices to centralized systems for further processing and long-term storage.

Flow Exporters and Flow Collectors

Flow exporters are network devices such as routers, switches, or firewalls that generate NetFlow data. They monitor the packet streams passing through them in real time, group packets into flows based on shared attributes such as IP addresses, ports, and protocols, and convert the result into structured flow records ready for export to a centralized collector. Because every later stage of analysis depends on these records, exporters are essential to accurate and timely performance monitoring, troubleshooting, and security analysis.

Once generated, the flow records are sent to a flow collector. The collector is a system responsible for receiving, storing, and organizing NetFlow data from multiple sources.

Flow collectors play a crucial role in network monitoring because they allow administrators to analyze traffic patterns across the entire infrastructure in one centralized location. This makes it easier to identify trends, detect anomalies, and troubleshoot issues.

Different Versions of NetFlow

Over time, NetFlow has evolved to support more advanced features and broader compatibility.

NetFlow version 9 introduced a more flexible structure using templates. These templates define which fields are included in each flow record, allowing for customization based on monitoring needs. Version 9 also improved scalability and added support for newer protocols such as IPv6 and MPLS. This flexibility allows network administrators to adapt NetFlow to different environments without changing the core system.

Templates are sent by the exporter alongside the data and can be updated dynamically, which reduces configuration complexity in large-scale networks. Because a template lists only the fields an administrator has chosen, version 9 exports just the relevant information rather than a fixed set of fields. This makes it effective for both enterprise and service provider networks.
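To make the template idea concrete, here is a minimal sketch of template-driven decoding. It assumes a template has already been received and reduced to a list of (field type, length) pairs, and it decodes a single data record. The field type numbers follow the NetFlow version 9 definitions in RFC 3954, but the shortened field names, the `decode_record` helper, and the sample values are illustrative assumptions. A real parser would also handle export packet headers, FlowSets, and template IDs, which are omitted here.

```python
import ipaddress
import struct

# Field type numbers as defined for NetFlow v9 (RFC 3954); names shortened for the sketch.
FIELD_NAMES = {
    1: "in_bytes",
    2: "in_pkts",
    4: "protocol",
    7: "src_port",
    8: "src_addr",
    11: "dst_port",
    12: "dst_addr",
}
IPV4_FIELDS = {8, 12}


def decode_record(template, payload):
    """Decode one data record using a template of (field_type, length) pairs."""
    record = {}
    offset = 0
    for field_type, length in template:
        raw = payload[offset:offset + length]
        if len(raw) != length:
            raise ValueError("payload is shorter than the template describes")
        name = FIELD_NAMES.get(field_type, f"field_{field_type}")
        if field_type in IPV4_FIELDS:
            record[name] = str(ipaddress.IPv4Address(raw))
        else:
            record[name] = int.from_bytes(raw, "big")
        offset += length
    return record


# Hypothetical template: addresses, ports, protocol, packet count, byte count.
template = [(8, 4), (12, 4), (7, 2), (11, 2), (4, 1), (2, 4), (1, 4)]
payload = (
    ipaddress.IPv4Address("192.0.2.10").packed
    + ipaddress.IPv4Address("198.51.100.20").packed
    + struct.pack("!HHBII", 51000, 443, 6, 2, 560)  # network byte order, no padding
)
record = decode_record(template, payload)
print(record)
```

Changing what is exported only requires a different template. The decoding loop stays the same, which is the practical benefit of the template design.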

Another widely used variant is IP Flow Information Export (IPFIX), an open IETF standard based on NetFlow version 9 and designed to work across multiple vendors. It maintains compatibility with NetFlow concepts while offering greater flexibility for integration in diverse environments.

Some networking vendors also developed their own versions of flow-based monitoring systems, which follow similar principles but are optimized for their specific hardware and software platforms.

Why NetFlow Data is Important

NetFlow data is essential for managing and securing modern networks. It provides deep visibility into how network resources are being used and helps organizations make informed decisions.

One of its most important benefits is troubleshooting. When network issues occur, NetFlow data can help identify when and where the problem started. By analyzing historical flow records, engineers can trace abnormal behavior and pinpoint the root cause of performance degradation.

NetFlow is also widely used for security monitoring. It helps detect suspicious activities such as unusual traffic spikes, unauthorized access attempts, and data exfiltration. By identifying these patterns early, organizations can respond to potential threats before they cause serious damage.
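As one illustration of this kind of detection, the sketch below flags source hosts that contact an unusually large number of distinct ports on a single destination, a pattern often associated with port scanning. The record layout (dictionaries with `src_addr`, `dst_addr`, and `dst_port` keys), the `find_port_scanners` helper, and the threshold of 20 ports are assumptions chosen for the example. Production systems typically add time windows and baselines.

```python
from collections import defaultdict


def find_port_scanners(flow_records, min_ports=20):
    """Return (source, destination) pairs that touch at least min_ports distinct ports."""
    ports_seen = defaultdict(set)
    for rec in flow_records:
        ports_seen[(rec["src_addr"], rec["dst_addr"])].add(rec["dst_port"])
    return sorted(pair for pair, ports in ports_seen.items() if len(ports) >= min_ports)


# One ordinary HTTPS flow plus a host probing ports 1 through 25 on a single target.
flow_records = [{"src_addr": "192.0.2.10", "dst_addr": "198.51.100.20", "dst_port": 443}]
flow_records += [
    {"src_addr": "203.0.113.7", "dst_addr": "192.0.2.10", "dst_port": port}
    for port in range(1, 26)
]
suspects = find_port_scanners(flow_records)
print(suspects)
```

Note that the check needs only flow metadata. No packet payload is inspected, which is why flow records scale well for this kind of monitoring.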

Another major advantage is bandwidth management. NetFlow allows administrators to see which applications or users are consuming the most network resources. This helps optimize traffic flow and ensure fair usage across the organization.
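A "top talkers" report is a simple example of this analysis. The sketch below sums bytes per source address and returns the largest consumers. The record layout and the `top_talkers` helper are assumptions for illustration, and the same approach works for grouping by application port or by user once records carry those fields.

```python
from collections import Counter


def top_talkers(flow_records, n=3):
    """Sum bytes per source address and return the n largest consumers."""
    totals = Counter()
    for rec in flow_records:
        totals[rec["src_addr"]] += rec["bytes"]
    return totals.most_common(n)


usage_records = [
    {"src_addr": "192.0.2.10", "bytes": 5000},
    {"src_addr": "192.0.2.11", "bytes": 1200},
    {"src_addr": "192.0.2.10", "bytes": 2500},
    {"src_addr": "192.0.2.12", "bytes": 300},
]
report = top_talkers(usage_records, n=2)
print(report)
```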

It is also valuable for compliance and auditing purposes. Many organizations need to maintain records of network activity for regulatory requirements. NetFlow provides a reliable way to document traffic behavior over time.

How NetFlow Data Works in Practice

NetFlow operates through a structured process that involves several stages.

First, network devices monitor traffic as it passes through them. Each packet is analyzed and assigned to a specific flow based on shared attributes. This process allows the system to group related packets together.

Next, flow data is exported from the device. Instead of sending every packet, the device sends summarized flow records to a collector. This reduces network overhead and improves efficiency.

The collector then stores and organizes the incoming data. Since large networks can generate millions of flow records, collectors are designed to handle high-speed data ingestion and storage.

Finally, the data is analyzed using specialized tools. These tools help visualize traffic patterns, identify anomalies, and generate reports for network administrators.

Implementing NetFlow in Network Environments

Before implementing NetFlow, organizations must ensure that their network devices support flow monitoring capabilities. Most modern routers, switches, and firewalls are capable of exporting flow data.

Configuration typically involves enabling flow monitoring on selected devices and defining what information should be collected. This may include source and destination addresses, protocol types, and traffic volume.

It is also important to configure templates when using advanced versions of NetFlow. Templates define the structure of flow records and ensure that relevant data is captured consistently.

Proper planning is essential to avoid performance issues. Sampling techniques are often used to reduce the amount of data collected. Instead of analyzing every packet, the device examines only a fraction of them (for example, one packet in every N) and totals are estimated from that sample, which helps balance accuracy and system performance.
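The trade-off can be seen in a small sketch of deterministic 1-in-N sampling, where totals are estimated by scaling the sampled counts by N. The helper names and the synthetic traffic are assumptions made for the example. Real devices may sample randomly rather than at fixed intervals, and the estimation error depends heavily on the traffic mix.

```python
def sample_one_in_n(packet_sizes, n):
    """Deterministic 1-in-n sampling: keep every n-th packet."""
    return packet_sizes[n - 1::n]


def estimate_totals(sampled_sizes, n):
    """Scale sampled counts by n to estimate the unsampled totals."""
    return {"packets": len(sampled_sizes) * n, "bytes": sum(sampled_sizes) * n}


# Synthetic traffic: 1,000 packets with sizes cycling from 100 to 1,300 bytes.
packet_sizes = [100 + (i % 7) * 200 for i in range(1000)]
sampled = sample_one_in_n(packet_sizes, n=10)
estimate = estimate_totals(sampled, n=10)
true_bytes = sum(packet_sizes)
error = abs(estimate["bytes"] - true_bytes) / true_bytes
print(estimate, true_bytes, f"{error:.2%}")
```

Here the exporter handles one tenth of the packets and the byte estimate is still close to the true total. Short or low-volume flows, however, may be missed entirely at aggressive sampling rates.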

Organizations should also establish data retention policies. Since flow data can accumulate quickly, it is important to define how long records should be stored before being archived or deleted.
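A retention policy can be as simple as a cutoff date applied on a schedule. The sketch below separates records to keep from records that have aged out. The 30-day window, the `end_time` field, and the `split_by_retention` helper are assumptions for illustration. What happens to expired records, whether archiving or deletion, is left to the caller.

```python
from datetime import datetime, timedelta, timezone


def split_by_retention(flow_records, now, retention_days=30):
    """Separate records still inside the retention window from expired ones."""
    cutoff = now - timedelta(days=retention_days)
    keep = [r for r in flow_records if r["end_time"] >= cutoff]
    expired = [r for r in flow_records if r["end_time"] < cutoff]
    return keep, expired


now = datetime(2024, 6, 30, tzinfo=timezone.utc)
stored_records = [
    {"id": 1, "end_time": datetime(2024, 6, 29, tzinfo=timezone.utc)},
    {"id": 2, "end_time": datetime(2024, 5, 1, tzinfo=timezone.utc)},
]
keep, expired = split_by_retention(stored_records, now)
print([r["id"] for r in keep], [r["id"] for r in expired])
```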

Best Practices for Using NetFlow

To get the most value from NetFlow, it should be implemented carefully and strategically.

One important practice is choosing an appropriate sampling rate. Sampling too large a share of packets can overload exporters and collectors, while sampling too small a share reduces visibility, especially into short or low-volume flows. Finding the right balance is essential for accurate monitoring.

Another best practice is integrating NetFlow with security monitoring systems. When combined with other security tools, NetFlow enhances threat detection and response capabilities.

Regular analysis of flow data is also important. Continuous monitoring helps identify trends and detect issues before they escalate into serious problems.

Proper storage management should not be overlooked. Since flow data can grow rapidly, efficient storage and archiving strategies are necessary to maintain system performance.

Tools Used with NetFlow

NetFlow is often used alongside specialized tools that help visualize and analyze data.

Some tools provide dashboards that display real-time network activity, making it easier to understand traffic patterns. These visualizations help identify congestion points and performance bottlenecks.

Other tools focus on data storage and aggregation, allowing organizations to maintain long-term records of network behavior. These systems make it easier to perform historical analysis and generate reports.

Log analysis platforms are also commonly used with NetFlow data. They help correlate network activity with other system events, providing a more complete view of IT environments.

Conclusion

NetFlow is a powerful way to gain visibility into network traffic and understand how data moves across systems. By summarizing traffic into flows, it allows organizations to efficiently monitor performance, troubleshoot issues, and enhance security.

Its ability to provide detailed insights without overwhelming storage systems makes it an essential tool in modern networking. When properly implemented and combined with analytical tools, NetFlow becomes a critical component of network management strategies.

As networks continue to grow in size and complexity, the importance of traffic analysis tools like NetFlow will only increase. It remains one of the most effective ways to maintain visibility, ensure security, and optimize performance in IT environments.