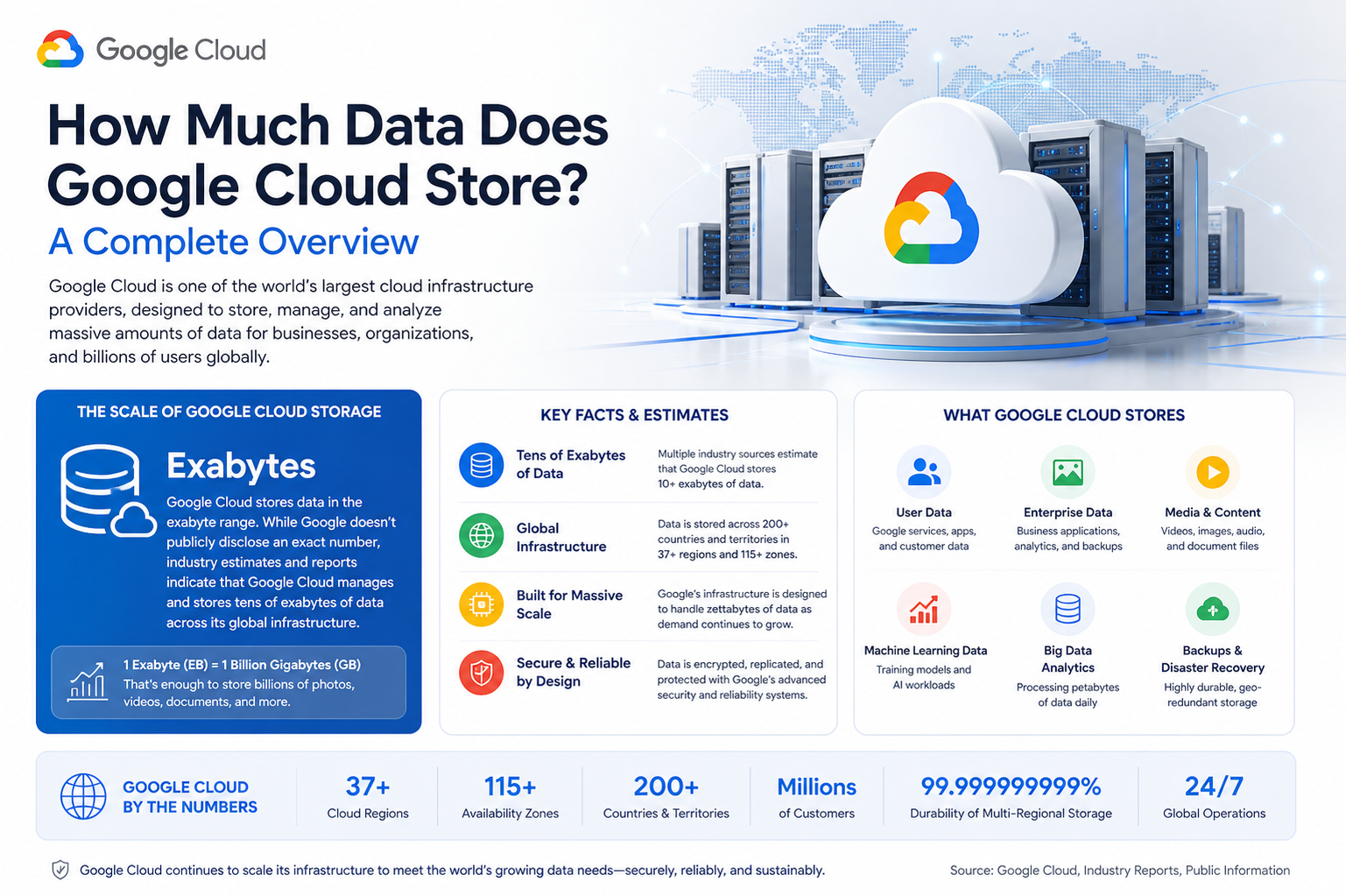

Google Cloud operates on a massive global infrastructure designed to store and manage data at an almost unimaginable scale. Instead of relying on a single fixed storage capacity, it functions as a continuously expanding system that grows alongside global digital demand. Every second, new data is generated by applications, businesses, devices, and users, and this data is seamlessly absorbed into the platform’s distributed network.

The system is built to handle exabytes and beyond; one exabyte is 10^18 bytes, or roughly a million terabytes. This includes everything from simple text files to complex machine learning datasets, high-resolution videos, and real-time analytics streams. The architecture is designed so that storage is not limited to a single location but is spread across multiple interconnected data centers around the world.

How Google Cloud Handles Massive Data Volumes

Instead of storing data in one centralized location, Google Cloud distributes it across a global network of highly secure data centers. This approach ensures that storage capacity is virtually unlimited from a user’s perspective. When data is uploaded, it is automatically split, replicated, and stored in multiple physical locations.

This distributed design allows the system to scale without interruption. As demand increases, additional storage resources are automatically added to the network. This means that businesses and developers never have to worry about running out of space, even when handling extremely large datasets or rapidly growing user bases.

The Role of Distributed Architecture in Storage Growth

The foundation of Google Cloud’s storage capability lies in its distributed architecture. Instead of relying on a single server or region, data is spread across multiple zones and regions. Each region contains several isolated zones that work independently but remain connected through high-speed networks.

This structure provides not only scalability but also resilience. If one zone experiences an issue, data replicated to another zone remains accessible, and data stored in a multi-region configuration can still be served from another region. This redundancy plays a key role in supporting continuous storage expansion while maintaining system reliability.

Types of Data Stored Across the Platform

Google Cloud is designed to handle a wide variety of data types, making it suitable for nearly every digital use case. Structured data, such as database records, is commonly stored for applications, financial systems, and enterprise operations. Unstructured data, including images, videos, audio files, and documents, also forms a large portion of stored content.

In addition, the volume of semi-structured data used in analytics and machine learning, such as JSON logs and event records, is rapidly increasing. Modern businesses rely heavily on data-driven decision-making, which requires storing and processing large datasets efficiently. Google Cloud provides infrastructure designed to support these workloads as they grow.

Real-Time Data Processing and Storage Integration

One of the most important aspects of Google Cloud’s storage system is its integration with real-time processing capabilities. Data is not only stored but also actively used for computing tasks such as analytics, artificial intelligence, and application performance optimization.

This integration means that storage and computing are closely connected. As data is stored, it can immediately be analyzed or processed without needing to be moved between separate systems. This reduces latency and improves efficiency, especially for large-scale operations that rely on fast decision-making.

Continuous Expansion Driven by Global Demand

The amount of data stored in Google Cloud continues to grow at an extremely fast rate due to increasing global digitization. Businesses across all industries are moving their operations to cloud-based environments, which significantly increases storage demand.

Every day, millions of new users and applications contribute to the growing data ecosystem. This includes mobile apps, streaming platforms, e-commerce systems, artificial intelligence models, and Internet of Things devices. As digital transformation accelerates worldwide, the need for scalable storage continues to rise.

Data Replication and Reliability Systems

To ensure reliability, Google Cloud automatically replicates data across multiple locations. This means that each piece of data is stored in more than one place, reducing the risk of loss due to hardware failure or regional issues.

This replication process happens in real time and is managed by advanced automation systems. It ensures that data remains available even during unexpected disruptions. At the same time, it supports the platform’s ability to scale, since new storage nodes can be added without affecting existing data integrity.

Security and Data Protection at Scale

As the volume of stored data increases, security becomes even more critical. Google Cloud uses multiple layers of protection to safeguard information, including encryption, identity management, and access controls.

Data is encrypted both during transmission and while stored, ensuring that unauthorized access is prevented. Additionally, strict access policies allow organizations to control who can view or modify their data. These security measures are applied consistently across all storage environments, regardless of scale.

Efficiency Through Automated Storage Management

One of the reasons Google Cloud can handle such large amounts of data is its use of automated management systems. These systems continuously monitor storage usage and optimize how data is distributed across infrastructure.

Automation helps balance workloads, reduce storage costs, and improve performance. It also ensures that resources are used efficiently, allowing the platform to scale without requiring manual intervention. This is especially important for global systems that operate 24/7.

Impact of Artificial Intelligence on Data Growth

Artificial intelligence plays a major role in increasing the amount of data stored in Google Cloud. Machine learning models require large datasets for training, testing, and optimization. These datasets often include billions of data points, contributing significantly to overall storage usage.

As AI adoption continues to grow across industries such as healthcare, finance, and technology, the demand for storage is expected to increase even further. Google Cloud provides the infrastructure needed to support these advanced workloads at scale.

Support for Enterprise-Level Data Needs

Large organizations rely heavily on cloud storage for mission-critical operations. Google Cloud supports enterprise-level workloads that involve massive datasets, high-speed processing, and global accessibility.

Businesses use the platform to store customer data, transaction records, application logs, and analytical insights. The ability to scale storage dynamically ensures that enterprises can expand their operations without worrying about infrastructure limitations.

Future Growth of Cloud Storage Capacity

Google Cloud storage is expected to keep growing alongside global digital expansion. As more industries adopt cloud-based systems, the total amount of stored data will continue to rise rapidly.

Emerging technologies such as artificial intelligence, machine learning, and edge computing will further increase storage requirements. At the same time, advancements in infrastructure will allow even greater scalability and efficiency.

Advanced Storage Architecture and Engineering Principles

The foundation of Google Cloud’s ability to store such massive volumes of data lies in its highly advanced engineering principles. The system is not built as a traditional storage model but as a layered, distributed, and self-optimizing infrastructure. Each layer of this architecture plays a specific role in ensuring scalability, speed, and resilience.

At the core, data is broken into smaller chunks and distributed across multiple physical machines. This process allows the system to handle extremely large datasets without relying on any single storage unit. By dividing data into manageable pieces, the platform ensures that storage and retrieval remain efficient even as volume increases exponentially.

The architecture is also designed with fault tolerance in mind. Every piece of data is replicated across different physical locations, ensuring that even if one server or entire facility experiences failure, the information remains accessible. This redundancy is a key reason why the system can scale without compromising reliability.
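
The chunking and replication ideas above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not a description of Google's internal implementation: the chunk size, the replication factor, the machine names, and the hash-based placement rule are all hypothetical.

```python
import hashlib

CHUNK_SIZE = 4   # bytes; deliberately tiny so the example is easy to follow
REPLICAS = 3     # hypothetical replication factor
MACHINES = ["m0", "m1", "m2", "m3", "m4"]  # hypothetical machine names


def split_into_chunks(data: bytes, size: int = CHUNK_SIZE) -> list[bytes]:
    """Split data into fixed-size chunks; the last chunk may be shorter."""
    return [data[i:i + size] for i in range(0, len(data), size)]


def place_chunk(chunk: bytes, machines: list[str], replicas: int = REPLICAS) -> list[str]:
    """Choose `replicas` distinct machines, starting at a hash-derived index."""
    digest = hashlib.sha256(chunk).digest()
    start = int.from_bytes(digest[:8], "big") % len(machines)
    return [machines[(start + k) % len(machines)] for k in range(replicas)]


def read_chunk(placement: list[str], failed: set[str]) -> str:
    """Return the first healthy machine that holds a copy of the chunk."""
    for machine in placement:
        if machine not in failed:
            return machine
    raise RuntimeError("all replicas unavailable")


chunks = split_into_chunks(b"hello distributed world")
placements = [place_chunk(c, MACHINES) for c in chunks]
```

Because every chunk lives on three distinct machines in this sketch, `read_chunk` still finds a copy when any single machine is listed in `failed`.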

Role of Automation in Scaling Storage

Automation plays a central role in managing the enormous storage ecosystem. Google Cloud uses intelligent systems that continuously monitor storage demand, system health, and performance metrics. These systems automatically allocate new resources whenever needed, ensuring that storage capacity expands seamlessly.

This automation eliminates the need for manual infrastructure management. Instead of engineers physically configuring storage expansion, the system dynamically adjusts itself based on real-time usage patterns. This approach not only improves efficiency but also ensures that storage availability keeps pace with global demand.

The automated systems also optimize data placement. Frequently accessed data is stored closer to computing resources, while less frequently used data is moved to more cost-efficient storage tiers. This dynamic optimization helps balance performance and cost at scale.

Data Lifecycle Management and Optimization

Every piece of data stored in Google Cloud follows a structured lifecycle. From the moment it is created, the system determines how it should be stored, accessed, and eventually archived or deleted. This lifecycle management ensures that storage resources are used efficiently.

Hot data, which is accessed frequently, is stored in high-performance systems for quick retrieval. Warm data, which is accessed less often, is moved to more cost-effective storage that trades a lower storage price for higher access costs or slower retrieval. Cold data, which is rarely accessed, is archived in long-term storage systems designed for durability and low cost rather than speed.

This tiered approach allows the platform to handle enormous amounts of data without wasting resources. It ensures that high-performance storage is reserved for critical workloads while still maintaining access to historical information when needed.
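
A tiering rule of this kind can be expressed as a small function that maps the time since last access to a tier. The sketch below is illustrative only: the `hot`, `warm`, and `cold` labels follow the text above, and the 30-day and 365-day thresholds are assumptions chosen for the example rather than platform defaults.

```python
from datetime import datetime, timedelta

# Hypothetical thresholds; real policies are configured per workload.
HOT_MAX_IDLE = timedelta(days=30)
WARM_MAX_IDLE = timedelta(days=365)


def choose_tier(last_access: datetime, now: datetime) -> str:
    """Return the tier for an object based on how long it has been idle."""
    idle = now - last_access
    if idle <= HOT_MAX_IDLE:
        return "hot"
    if idle <= WARM_MAX_IDLE:
        return "warm"
    return "cold"


now = datetime(2024, 6, 1)
tiers = {
    "report.csv": choose_tier(datetime(2024, 5, 25), now),
    "q1-logs.tar": choose_tier(datetime(2024, 1, 10), now),
    "2019-backup.img": choose_tier(datetime(2019, 3, 1), now),
}
```

Running the rule periodically over object metadata is one simple way to let data drift from faster tiers to cheaper ones as it ages.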

Global Network and Edge Integration

Google Cloud’s storage system is deeply integrated with a global network of edge locations. These edge systems bring data closer to users, reducing latency and improving access speed. When users request data, it is often served from a nearby edge location rather than a distant central server.

This integration significantly enhances performance for applications that require real-time responsiveness. It also reduces the load on central storage systems, allowing them to focus on long-term data management and large-scale processing.

The edge network works in harmony with central storage systems, ensuring that data flows efficiently across different layers of the infrastructure. This interconnected design is essential for supporting billions of users worldwide.

Scalability Through Containerized Infrastructure

Another important factor contributing to storage scalability is the use of containerized infrastructure. Instead of relying on static servers, workloads are run inside containers that can be easily moved, replicated, or scaled.

This flexibility allows storage-related services to expand rapidly without physical constraints. When demand increases, additional containers are deployed automatically, increasing processing and storage capacity in real time.

Containerization also improves efficiency by ensuring that computing resources are used optimally. This reduces waste and allows the system to support larger volumes of data without requiring proportional increases in physical infrastructure.

Data Consistency and Synchronization at Scale

Managing consistency across massive distributed systems is one of the most complex challenges in cloud storage. Google Cloud addresses this through advanced synchronization mechanisms that ensure data remains consistent across all locations.

When data is updated in one region, changes are propagated across all replicas in a controlled and efficient manner. The goal is for users to see the most recent version of their data regardless of where they are located, although how quickly an update becomes visible everywhere depends on the consistency level chosen for the workload.

The system uses sophisticated algorithms to balance consistency and performance. In some cases, slight delays in synchronization are acceptable to maintain system speed, while in critical applications, immediate consistency is enforced.
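
One widely used way to reason about this trade-off in replicated stores is quorum replication: with N replicas, a write acknowledged by W of them and a read that contacts R of them are guaranteed to overlap when R + W > N. The sketch below illustrates that general technique; it is not a claim about how Google Cloud implements synchronization, and the `QuorumStore` class is hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class Replica:
    version: int = 0
    value: str = ""


@dataclass
class QuorumStore:
    n: int = 3  # total replicas
    w: int = 2  # replicas that must acknowledge a write
    r: int = 2  # replicas contacted on a read
    replicas: list = field(default_factory=list, init=False, repr=False)

    def __post_init__(self) -> None:
        self.replicas = [Replica() for _ in range(self.n)]

    def write(self, value: str) -> None:
        """Update only the first w replicas; the others lag behind."""
        version = max(rep.version for rep in self.replicas) + 1
        for rep in self.replicas[:self.w]:
            rep.version, rep.value = version, value

    def read(self) -> str:
        """Contact the last r replicas (worst case) and return the newest value."""
        contacted = self.replicas[-self.r:]
        return max(contacted, key=lambda rep: rep.version).value


strong = QuorumStore(n=3, w=2, r=2)  # r + w > n: reads overlap with writes
strong.write("v1")

weak = QuorumStore(n=3, w=2, r=1)    # r + w == n: a read may miss the write
weak.write("v1")
```

Here `strong.read()` returns the new value because the read set overlaps the write set, while `weak.read()` can return stale data, which is the "slight delay" case described above.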

Economic Model Behind Large-Scale Storage

The economic structure of Google Cloud storage is based on efficiency and scalability. Instead of charging for fixed storage capacity, the system uses a flexible model where users pay based on usage. This aligns costs with actual consumption, making it suitable for both small and large organizations.

This model also encourages efficient use of storage resources. Because users are charged based on the amount of data stored and processed, they have a direct incentive to delete or archive data they no longer need. This contributes to overall system efficiency at a global scale.
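
As a rough illustration of usage-based billing, the sketch below averages daily stored volume over a month and multiplies it by a per-GB monthly rate. The function name and the price of 0.02 per GB-month are assumptions made for the example, not published pricing.

```python
def monthly_storage_cost(daily_gb: list[float], price_per_gb_month: float) -> float:
    """Bill the average amount stored over the month, not the peak."""
    if not daily_gb:
        return 0.0
    average_gb = sum(daily_gb) / len(daily_gb)
    return average_gb * price_per_gb_month


# 100 GB for the first half of the month, 300 GB for the second half.
usage = [100.0] * 15 + [300.0] * 15
cost = monthly_storage_cost(usage, price_per_gb_month=0.02)  # hypothetical rate
```

With this usage pattern the billed average is 200 GB, so deleting unneeded data partway through a month lowers the charge for that month.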

At the same time, large-scale operations benefit from economies of scale. As the infrastructure grows, the cost per unit of storage decreases, allowing the system to remain competitive while expanding capacity continuously.

Impact of Big Data on Storage Growth

The rise of big data has been one of the primary drivers of storage expansion. Modern applications generate vast amounts of structured and unstructured data that must be stored, analyzed, and processed in real time.

Industries such as healthcare, finance, retail, and transportation rely heavily on data analytics to make informed decisions. This requires storing massive datasets that can be accessed and processed efficiently.

As data generation continues to accelerate, storage systems must evolve to handle not just larger volumes but also more complex data types. This has pushed cloud platforms to develop increasingly sophisticated storage architectures.

Artificial Intelligence and Predictive Storage Management

Artificial intelligence is not only a consumer of storage but also a tool for managing it. Google Cloud uses machine learning algorithms to predict storage demand, optimize resource allocation, and improve system performance.

These predictive systems analyze usage patterns to anticipate future storage needs. This allows the platform to prepare resources in advance, preventing bottlenecks and ensuring smooth scalability.

AI also helps in detecting anomalies, identifying inefficiencies, and improving data distribution strategies. This results in a more intelligent and adaptive storage ecosystem.
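
The idea of anticipating demand from usage patterns can be illustrated with a very simple stand-in for a learned model: a least-squares line fitted to past usage and extended forward. This is a sketch under that assumption; production systems would use far richer models, and the function names here are hypothetical.

```python
def fit_line(ys: list[float]) -> tuple[float, float]:
    """Least-squares fit of y = intercept + slope * x for x = 0, 1, 2, ..."""
    n = len(ys)
    if n < 2:
        raise ValueError("need at least two observations")
    mean_x = (n - 1) / 2
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in enumerate(ys))
    var = sum((x - mean_x) ** 2 for x in range(n))
    slope = cov / var
    return slope, mean_y - slope * mean_x


def forecast(ys: list[float], steps_ahead: int) -> float:
    """Extrapolate the fitted line `steps_ahead` periods past the last point."""
    slope, intercept = fit_line(ys)
    return intercept + slope * (len(ys) - 1 + steps_ahead)


monthly_tb = [100.0, 110.0, 120.0, 130.0]  # made-up usage history in TB
expected_tb = forecast(monthly_tb, steps_ahead=2)
```

A capacity planner could compare `expected_tb` with provisioned capacity and add resources before the gap closes.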

Environmental Considerations of Large-Scale Storage

Managing such vast amounts of data also comes with environmental considerations. Large data centers consume significant amounts of energy, and optimizing efficiency is a key priority.

Google Cloud focuses on improving energy efficiency through advanced cooling systems, optimized hardware, and renewable energy integration. These efforts help reduce the environmental impact of large-scale storage infrastructure.

Efficient data management also plays a role in sustainability. By reducing unnecessary data duplication and optimizing storage tiers, the system minimizes resource consumption while maintaining performance.

Challenges in Managing Exponential Data Growth

Despite its advanced design, managing exponential data growth presents ongoing challenges. One of the primary challenges is maintaining performance while continuously expanding storage capacity.

As data volume increases, ensuring fast retrieval and processing becomes more complex. The system must balance storage distribution, replication, and access speed across billions of data points.

Another challenge is ensuring data security at scale. As the system grows, the number of potential vulnerabilities increases, requiring continuous improvements in encryption, authentication, and monitoring systems.

Evolution of Storage Technologies

Storage technology has evolved significantly over time, moving from physical servers to highly abstracted cloud systems. This evolution has enabled unprecedented scalability and flexibility.

Modern storage systems are no longer limited by hardware constraints in a traditional sense. Instead, they rely on distributed computing, virtualization, and automation to achieve near-infinite scalability.

Future developments are expected to further enhance storage efficiency through technologies such as quantum computing, advanced compression algorithms, and even more intelligent automation systems.

Long-Term Outlook for Cloud Storage Expansion

The long-term outlook for cloud storage suggests continuous and rapid expansion. As digital transformation accelerates across industries, the demand for scalable storage will only increase.

Emerging technologies such as autonomous systems, smart cities, and advanced AI models will generate even larger datasets. This will require storage systems that can grow dynamically without performance degradation.

Google Cloud’s architecture is designed precisely for this future, ensuring that it can continue to scale alongside global digital evolution.

Data Replication Strategies for Global Reliability

One of the most important reasons Google Cloud can handle such massive amounts of data is its advanced replication strategy. Instead of storing a single copy of data in one location, the system automatically creates multiple copies across different geographic regions. This ensures that data remains safe even in the event of hardware failure, natural disasters, or network disruptions.

Replication happens in a structured way: data is first stored locally and then distributed to other regions for redundancy. These copies are continuously synchronized to keep them consistent. The system is designed so that users do not experience delays or inconsistencies while accessing their information, even though multiple copies exist in the background.

This approach also improves accessibility. If one region experiences high traffic, requests are automatically redirected to another region where the same data is available. This ensures uninterrupted service and stable performance at a global scale.

High-Performance Storage Tiers and Data Prioritization

To manage the enormous volume of information efficiently, Google Cloud organizes data into different performance tiers. Each tier is designed to handle specific usage patterns based on how frequently data is accessed.

Frequently used data is stored in high-speed storage systems that allow instant retrieval. This is essential for applications that require real-time responses, such as financial transactions, live analytics, and interactive applications. Less frequently accessed data is moved to lower-cost storage tiers that prioritize capacity over speed.

This intelligent prioritization ensures that system resources are used efficiently. It also allows the platform to scale storage capacity without compromising performance or increasing unnecessary costs. Over time, data naturally moves between tiers based on usage patterns, ensuring optimal placement throughout its lifecycle.

Massive Object Storage Capabilities

Google Cloud supports object storage systems that can handle trillions of individual data objects. Each object can be as small as a simple text file or as large as a high-definition video or complex dataset.

These objects are stored in a highly scalable system that does not impose traditional file system limitations. Instead, each object is assigned a unique identifier, allowing it to be retrieved independently from any location in the network.

This object-based structure is one of the key reasons why the platform can scale to such massive levels. It eliminates hierarchical storage limitations and allows data to be distributed freely across global infrastructure.
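
A flat object namespace can be modelled as a single key-to-bytes mapping in which a slash is just another character in the key. The sketch below is a toy in-memory model written for illustration; the `FlatObjectStore` class and its methods are hypothetical and are not a client library API.

```python
class FlatObjectStore:
    """Toy object store: one flat mapping from key to bytes, no directories."""

    def __init__(self) -> None:
        self._objects: dict[str, bytes] = {}

    def put(self, key: str, data: bytes) -> None:
        self._objects[key] = data

    def get(self, key: str) -> bytes:
        return self._objects[key]

    def list_prefix(self, prefix: str) -> list[str]:
        """Simulate folder-style listing by filtering keys on a prefix."""
        return sorted(k for k in self._objects if k.startswith(prefix))


store = FlatObjectStore()
store.put("videos/2024/intro.mp4", b"\x00\x01")
store.put("videos/2024/outro.mp4", b"\x02\x03")
store.put("notes.txt", b"hello")
```

Since every object is addressed directly by its key, no directory tree has to be walked or kept in sync, which is part of why this layout distributes easily.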

Role of Metadata in Managing Large-Scale Storage

Metadata plays a crucial role in managing the enormous volume of stored data. Each data object contains metadata that describes its attributes, such as creation time, access frequency, storage class, and location.

This metadata allows the system to quickly locate, organize, and manage data without needing to scan entire storage systems. It significantly improves efficiency and enables rapid retrieval even when dealing with billions of objects.

Metadata also supports automation by providing the system with the information needed to make intelligent decisions about data placement, replication, and lifecycle management.
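
To show why metadata avoids full scans, the sketch below keeps a secondary index from storage class to object names, so a query touches only the index entry it needs. The field names mirror the attributes listed above; the `MetadataIndex` class itself is a hypothetical illustration.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class ObjectMetadata:
    name: str
    size_bytes: int
    storage_class: str
    location: str


class MetadataIndex:
    """Index metadata by name and by storage class for direct lookups."""

    def __init__(self) -> None:
        self._by_name: dict[str, ObjectMetadata] = {}
        self._by_class: defaultdict[str, set[str]] = defaultdict(set)

    def add(self, meta: ObjectMetadata) -> None:
        self._by_name[meta.name] = meta
        self._by_class[meta.storage_class].add(meta.name)

    def lookup(self, name: str) -> ObjectMetadata:
        return self._by_name[name]

    def find_by_class(self, storage_class: str) -> list[str]:
        return sorted(self._by_class.get(storage_class, set()))


index = MetadataIndex()
index.add(ObjectMetadata("a.log", 1_024, "cold", "region-a"))
index.add(ObjectMetadata("b.mp4", 5_000_000, "hot", "region-b"))
index.add(ObjectMetadata("c.log", 2_048, "cold", "region-a"))
```

A lifecycle job could call `find_by_class("cold")` to decide what to archive without reading any object data.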

Latency Reduction Through Intelligent Routing

To ensure fast access to stored data, Google Cloud uses intelligent routing systems that direct user requests to the nearest available data center. This reduces latency and improves performance, especially for global users accessing data from different regions.

Routing decisions are made dynamically based on network conditions, server load, and geographic proximity. This ensures that users always receive data from the fastest and most reliable source available at that moment.

This intelligent routing system is essential for maintaining performance consistency across a globally distributed infrastructure.
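
A routing decision that weighs proximity, load, and health can be sketched as a scoring function over candidate data centers. The weights, the `DataCenter` fields, and the scoring formula below are assumptions chosen for illustration, not the platform's actual routing logic.

```python
from dataclasses import dataclass


@dataclass
class DataCenter:
    name: str
    latency_ms: float     # measured round-trip time from the user
    load: float           # 0.0 (idle) to 1.0 (saturated)
    healthy: bool = True


def pick_data_center(candidates: list[DataCenter],
                     load_penalty_ms: float = 100.0) -> DataCenter:
    """Choose the healthy candidate with the lowest latency-plus-load score."""
    healthy = [dc for dc in candidates if dc.healthy]
    if not healthy:
        raise RuntimeError("no healthy data center available")
    return min(healthy, key=lambda dc: dc.latency_ms + load_penalty_ms * dc.load)


centers = [
    DataCenter("near", latency_ms=20, load=0.95),
    DataCenter("far", latency_ms=80, load=0.10),
    DataCenter("down", latency_ms=5, load=0.0, healthy=False),
]
chosen = pick_data_center(centers)
```

In this example the nearest healthy site is heavily loaded, so the request goes to the more distant but lightly loaded one.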

Storage Optimization Through Compression Techniques

As data volume increases, efficient storage becomes critical. Google Cloud uses advanced compression techniques to reduce the physical space required to store large datasets.

Compression algorithms are applied depending on the type of data being stored. For example, text-based data can be compressed significantly without loss of information, while media files use specialized compression methods to reduce size while maintaining quality.

This optimization allows the platform to store more data within the same physical infrastructure, effectively increasing overall capacity without requiring proportional hardware expansion.
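
The difference between data that compresses well and data that does not is easy to demonstrate with Python's standard `zlib` module. This is only an illustration of lossless compression in general; it does not describe which algorithms the platform applies, and the sample log line is made up.

```python
import os
import zlib

# Repetitive text, such as log lines, contains a lot of redundancy.
text = b"timestamp=2024-01-01 level=INFO msg=request served\n" * 200
# Random bytes stand in for data with no redundancy left to remove.
random_bytes = os.urandom(len(text))

compressed_text = zlib.compress(text, level=9)
compressed_random = zlib.compress(random_bytes, level=9)

text_ratio = len(compressed_text) / len(text)
random_ratio = len(compressed_random) / len(random_bytes)

# Lossless compression must round-trip exactly.
restored = zlib.decompress(compressed_text)
```

The repetitive text shrinks to a small fraction of its original size, while the random bytes barely change, which is why compression strategy depends on the type of data being stored.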

Integration with Data Analytics Systems

One of the unique strengths of Google Cloud storage is its tight integration with analytics systems. Stored data is not isolated but directly connected to powerful analytical tools that can process large datasets in real time.

This integration allows organizations to extract insights directly from stored data without needing to transfer it between systems. It reduces processing time and enables faster decision-making.

As data continues to grow, this integration becomes even more important, since businesses rely heavily on real-time insights derived from massive datasets.

Scalable Database Systems Supporting Storage Growth

In addition to object storage, Google Cloud supports highly scalable database systems designed to handle structured data at massive scale. These databases can automatically adjust their capacity based on workload demands.

They are designed to support both transactional operations and analytical queries simultaneously. This dual capability allows businesses to run complex operations without needing separate systems for storage and processing.

The scalability of these databases ensures that even as data volumes increase, performance remains stable and responsive.

Disaster Recovery and Data Continuity Systems

To ensure uninterrupted access to data, Google Cloud includes advanced disaster recovery systems. These systems are designed to quickly restore data and services in the event of unexpected failures.

Data is continuously backed up and replicated across multiple regions, so recovery can begin quickly when needed. This level of protection is critical for businesses that rely on continuous access to their data.

Disaster recovery systems are fully automated, reducing downtime and ensuring that data remains available even in extreme situations.

Role of Machine Learning in Storage Optimization

Machine learning is deeply integrated into storage management systems. It is used to analyze usage patterns, predict demand, and optimize resource allocation.

These systems learn from historical data to improve future performance. For example, they can predict which data will be accessed frequently and move it to faster storage tiers in advance.

This predictive capability improves efficiency and ensures that the system continues to scale smoothly as data volumes increase.

Data Governance and Compliance at Scale

As storage systems grow, maintaining data governance becomes increasingly important. Google Cloud implements strict policies to ensure that data is managed in compliance with global regulations.

These governance systems control how data is stored, accessed, and processed. They also ensure that organizations can meet regulatory requirements related to privacy, security, and data retention.

At scale, these systems are essential for maintaining trust and ensuring responsible data management.

Hardware Innovation Supporting Storage Expansion

Behind the software layer, continuous hardware innovation supports storage growth. Advanced servers, high-capacity drives, and optimized networking equipment are used to build the physical foundation of the system.

These hardware components are designed for efficiency, durability, and scalability. They are constantly updated and improved to support increasing storage demands.

By combining advanced hardware with intelligent software systems, the platform achieves both scale and performance simultaneously.

Long-Term Data Preservation Strategies

Not all data is actively used, but it still needs to be preserved for long periods. Google Cloud supports long-term storage solutions designed for archival data that must remain accessible for years or even decades.

These systems prioritize durability and cost efficiency over speed, ensuring that historical data is preserved without consuming high-performance resources.

This long-term approach is essential for industries that rely on historical records and compliance-based data retention.

Security Architecture for Large-Scale Data Storage

As data storage scales into the exabyte range and beyond, security becomes one of the most critical components of the entire system. Google Cloud uses a layered security architecture designed to protect data at every stage, including storage, transmission, and processing. This layered model ensures that even as the volume of stored data grows, protection mechanisms remain strong and consistent.

At the foundation, encryption is applied automatically to all stored data. This means that information is converted into ciphertext, which is unreadable without the corresponding key, before it is saved to storage systems. Even if unauthorized access were to occur at the physical level, the data would remain protected. Encryption is also applied during data transfer between systems, ensuring that information remains secure while moving across networks.

Access control systems add another layer of protection. Only authorized users and applications are allowed to interact with specific datasets. These permissions are managed through identity-based systems that verify user roles and access levels. This ensures that sensitive data is only available to those with proper authorization.

Identity and Access Management at Scale

Managing billions of data objects requires a highly advanced identity and access management system. Google Cloud uses structured policies that define who can access what data and under what conditions. These policies are applied consistently across all regions and services.

Access permissions are highly granular, allowing organizations to define controls at the level of individual datasets or even specific operations. This ensures that users only interact with the data they are permitted to use, reducing the risk of unauthorized access or data leakage.

At scale, this system becomes essential because millions of users and applications interact with stored data simultaneously. Automated enforcement ensures that policies remain consistent without requiring manual oversight.
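
Granular, role-based access checks of the kind described above can be sketched as a lookup from a principal and resource to a role, and from the role to a set of permitted operations. The role names, permission strings, and resources below are hypothetical and chosen only for illustration.

```python
# Hypothetical roles and the operations each one permits.
ROLE_PERMISSIONS: dict[str, set[str]] = {
    "viewer": {"objects.get", "objects.list"},
    "editor": {"objects.get", "objects.list", "objects.create", "objects.delete"},
}

# Bindings grant a role to a principal on one specific resource.
bindings: dict[tuple[str, str], str] = {
    ("alice@example.com", "dataset/sales"): "editor",
    ("bob@example.com", "dataset/sales"): "viewer",
}


def is_allowed(principal: str, resource: str, permission: str) -> bool:
    """Deny by default; allow only if a bound role includes the permission."""
    role = bindings.get((principal, resource))
    if role is None:
        return False
    return permission in ROLE_PERMISSIONS.get(role, set())
```

Because the check is a pure function of the bindings, the same policy can be enforced automatically on every request without manual review.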

Monitoring and Threat Detection Systems

Continuous monitoring is a core part of maintaining security in large-scale storage environments. Google Cloud uses real-time monitoring systems that analyze activity across its entire infrastructure. These systems detect unusual behavior patterns that could indicate potential security threats.

Machine learning models are used to identify anomalies in data access patterns, network traffic, and system behavior. When suspicious activity is detected, automated responses can be triggered to mitigate risks immediately.

This proactive approach allows the system to respond to potential threats before they escalate, ensuring that large volumes of data remain secure even in complex environments.
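
As a simple stand-in for the learned models mentioned above, the sketch below flags an access count as anomalous when it lies more than a few standard deviations from the historical mean. A z-score test is a basic statistical technique rather than machine learning, and the threshold of 3.0 and the sample history are assumptions.

```python
from statistics import mean, stdev


def is_anomalous(history: list[float], observed: float, threshold: float = 3.0) -> bool:
    """Flag `observed` if it is more than `threshold` standard deviations from the mean."""
    mu = mean(history)
    sigma = stdev(history)  # sample standard deviation; needs at least two points
    if sigma == 0:
        return observed != mu
    return abs(observed - mu) / sigma > threshold


# Made-up hourly read counts for one account.
reads_per_hour = [100, 102, 98, 101, 99]
spike_flagged = is_anomalous(reads_per_hour, 500)
normal_flagged = is_anomalous(reads_per_hour, 101)
```

A monitoring pipeline could run such a check per account and per hour, then trigger an automated response when a spike is flagged.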

Encryption Key Management Systems

Encryption alone is not sufficient without secure key management. Google Cloud uses dedicated key management systems to generate, store, and rotate encryption keys securely. These keys are essential for decrypting data when authorized access is required.

Key management systems are isolated from general storage systems to reduce risk exposure. They also include strict access controls and auditing capabilities to track how and when keys are used.

Regular key rotation ensures that even if a key is compromised, its usefulness is limited. This adds another layer of protection for large-scale data storage.

Compliance with Global Standards

As data storage expands globally, compliance with international regulations becomes essential. Google Cloud aligns its systems with multiple global standards related to data privacy, security, and operational transparency.

These compliance frameworks ensure that organizations using the platform can meet legal and regulatory requirements across different regions. This is especially important for industries such as healthcare, finance, and government services, where data protection standards are strict.

Compliance is built into the infrastructure itself, meaning that security and regulatory requirements are not optional but integrated into the system’s design.

Scalability of Security Systems

One of the unique challenges of large-scale cloud storage is ensuring that security systems scale at the same rate as data growth. Google Cloud addresses this by automating most security processes and integrating them directly into the infrastructure.

Instead of manually configuring security for each new dataset, policies are automatically applied based on predefined rules. This ensures consistency and reduces the risk of human error.

As storage capacity increases, security systems expand automatically alongside it, ensuring that protection remains strong regardless of scale.

Data Isolation and Multi-Tenancy Protection

Cloud environments often host data from multiple organizations simultaneously. To ensure privacy, Google Cloud uses strong data isolation techniques. Each customer’s data is logically separated from others, even when stored on shared physical infrastructure.

This multi-tenancy model ensures that no organization can access another’s data, even if they share underlying hardware. Isolation is enforced at both the software and infrastructure levels, providing strong separation between different data environments.

This structure allows efficient use of resources while maintaining strict privacy boundaries.

Performance Optimization in Large Storage Systems

As data volume increases, maintaining high performance becomes increasingly challenging. Google Cloud uses advanced optimization techniques to ensure that storage systems remain fast and responsive.

Data caching plays an important role in improving performance. Frequently accessed data is temporarily stored in high-speed memory systems, allowing faster retrieval. This reduces the need to repeatedly access slower storage layers.

Load balancing systems distribute traffic evenly across multiple servers, preventing bottlenecks and ensuring smooth performance even during peak usage periods.
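Round-robin is the simplest strategy for spreading requests evenly. The following is a minimal sketch under the assumption that all servers have equal capacity; the server names and the `assign` helper are hypothetical, and real load balancers typically also weigh health checks and current load.

```python
from collections import Counter
from itertools import cycle


def assign(requests, servers):
    """Map each request to a server, rotating through the servers in order."""
    picker = cycle(servers)
    return {request: next(picker) for request in requests}


servers = ["server-1", "server-2", "server-3"]
assignment = assign(range(9), servers)
print(Counter(assignment.values()))
```

With nine requests and three servers, each server receives exactly three requests, so no single machine becomes a bottleneck.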

Impact of Edge Computing on Storage Efficiency

Edge computing has significantly improved how data is accessed and processed in large-scale storage systems. By bringing computation closer to the user, edge systems reduce the distance data must travel.

This reduces latency and improves response times, especially for applications that require real-time processing. Edge systems also reduce the load on central storage infrastructure, allowing it to focus on long-term data management.
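To make the distance argument concrete, the sketch below routes a user to the geographically closest edge site using the haversine great-circle formula. The site names and coordinates are illustrative assumptions, not a list of real Google Cloud edge locations, and physical distance is only a rough proxy for network latency.

```python
from math import asin, cos, radians, sin, sqrt

EARTH_RADIUS_KM = 6371.0

# Hypothetical edge sites with approximate (latitude, longitude) in degrees.
EDGE_SITES = {
    "edge-frankfurt": (50.11, 8.68),
    "edge-tokyo": (35.68, 139.69),
    "edge-sao-paulo": (-23.55, -46.63),
}


def haversine_km(a, b):
    """Great-circle distance in kilometres between two (lat, lon) points."""
    lat1, lon1 = map(radians, a)
    lat2, lon2 = map(radians, b)
    h = (sin((lat2 - lat1) / 2) ** 2
         + cos(lat1) * cos(lat2) * sin((lon2 - lon1) / 2) ** 2)
    return 2 * EARTH_RADIUS_KM * asin(sqrt(h))


def nearest_edge(user_location, sites=EDGE_SITES):
    """Return the name of the site closest to the user."""
    return min(sites, key=lambda name: haversine_km(user_location, sites[name]))


paris = (48.86, 2.35)
print(nearest_edge(paris), round(haversine_km(paris, EDGE_SITES["edge-frankfurt"])))
```

In practice, routing decisions are usually based on measured latency and site load rather than distance alone, but the principle is the same: serve the request from the closest suitable location.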

The integration of edge computing with cloud storage creates a more efficient and responsive global system.

Data Migration and Scalability Flexibility

One of the strengths of Google Cloud storage is its ability to support seamless data migration. Organizations can move large datasets into or within the platform without significant downtime or disruption.

Migration tools are designed to handle large-scale transfers efficiently, ensuring that data remains accessible throughout the process. This flexibility allows businesses to scale their storage needs without operational interruptions.

As data grows, migration systems ensure that storage remains optimized and well-distributed across infrastructure.

Sustainability in Large-Scale Storage Infrastructure

Sustainability is an increasingly important consideration in managing large data storage systems. Google Cloud focuses on reducing the environmental impact of its infrastructure through energy-efficient technologies and renewable energy sources.

Data centers are designed to optimize cooling and power usage, reducing overall energy consumption. Efficient hardware and software optimization also contribute to lower environmental impact.

Sustainability efforts ensure that large-scale storage growth does not come at the cost of excessive resource consumption.

Future of Distributed Storage Systems

Data storage is expected to become even more distributed and intelligent. Systems will likely become more autonomous, with advanced AI managing nearly all aspects of storage, optimization, and security.

Storage systems may also become more decentralized, allowing data to be stored and processed closer to users across global networks. This would further improve performance and scalability.

As data generation continues to increase, future systems will need to handle not only larger volumes but also more complex and diverse data types.

Long-Term Evolution of Cloud Storage Ecosystems

Cloud storage systems are continuously evolving to meet global demand. Over time, they are expected to become more efficient, more intelligent, and more deeply integrated with emerging technologies.

Advancements in artificial intelligence, quantum computing, and networking will likely redefine how data is stored and processed at scale. These innovations could further enhance scalability and performance.

The evolution of cloud storage reflects the growing importance of data in modern digital ecosystems.

Conclusion

Google Cloud stores an enormous and continuously expanding amount of data through a highly advanced, globally distributed infrastructure. Instead of relying on a fixed storage limit, it operates as a scalable ecosystem that grows automatically as digital demand increases. This makes it capable of supporting everything from small applications to massive enterprise systems, with capacity that is virtually unlimited from a user’s perspective.

The strength of the platform lies in its layered architecture, where data is distributed across multiple regions, replicated for safety, and managed through intelligent automation systems. This design keeps storage reliable, fast, and accessible even as data volumes reach extremely high levels, so performance is maintained while the system absorbs rapid growth.

Another key factor is the integration of automation and machine learning, which allows the system to optimize storage usage, predict demand, and manage resources efficiently. Combined with strong security systems, encryption, and global compliance standards, Google Cloud ensures that large-scale data storage remains both safe and dependable.

As global digital transformation continues to accelerate, the amount of data stored in cloud environments will keep increasing rapidly. Google Cloud is designed specifically for this future, with the ability to scale dynamically and adapt to new technologies, workloads, and user demands. Its architecture ensures that it can continue handling massive data growth while maintaining efficiency, performance, and reliability over time.